The Sandy Bridge Review: Intel Core i7-2600K, i5-2500K and Core i3-2100 Tested

by Anand Lal Shimpi on January 3, 2011 12:01 AM EST

Gaming Performance

There's simply no better gaming CPU on the market today than Sandy Bridge. The Core i5 2500K and Core i7 2600K top the charts regardless of game. If you're building a new gaming box, you'll want an SNB in it.

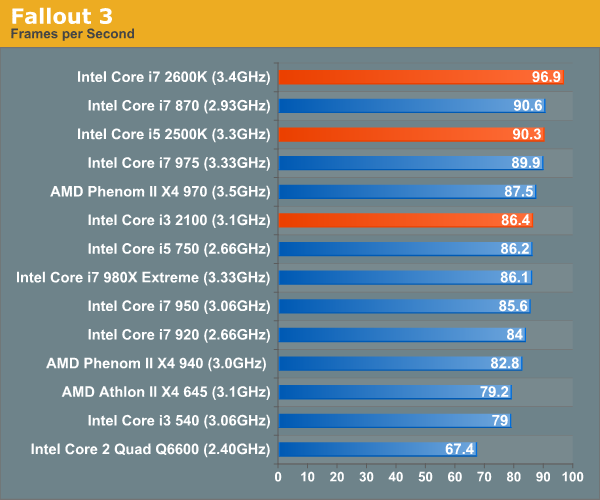

Our Fallout 3 test is a quick FRAPS runthrough near the beginning of the game. We're running with a GeForce GTX 280 at 1680 x 1050 and medium quality defaults. There's no AA/AF enabled.

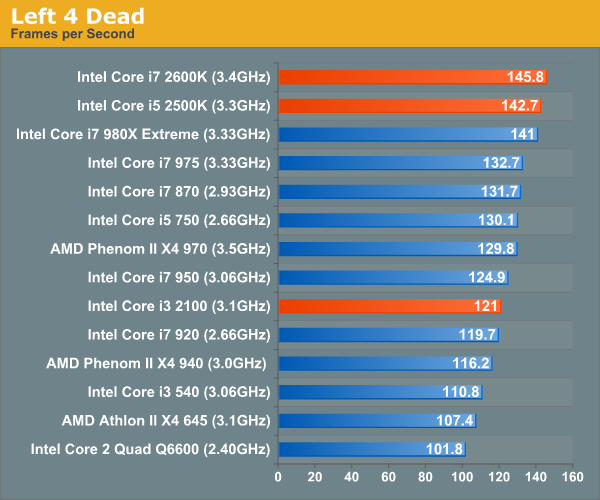

In testing Left 4 Dead we use a custom recorded timedemo. We run on a GeForce GTX 280 at 1680 x 1050 with all quality options set to high. No AA/AF enabled.

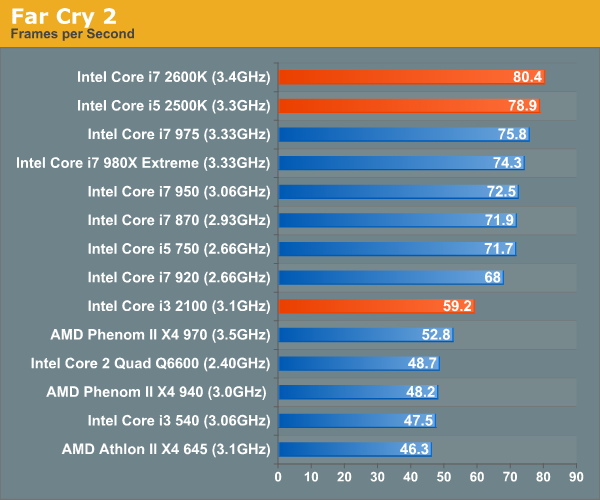

Far Cry 2 ships with several built in benchmarks. For this test we use the Playback (Action) demo at 1680 x 1050 in DX9 mode on a GTX 280. The game is set to medium defaults with performance options set to high.

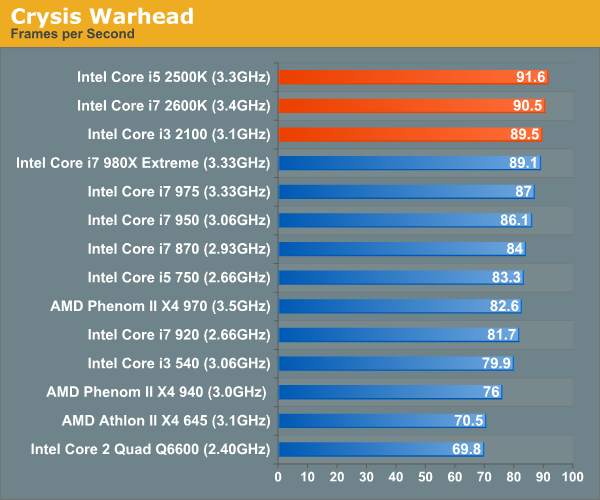

Crysis Warhead also ships with a number of built in benchmarks. Running on a GTX 280 at 1680 x 1050, we run the ambush timedemo with mainstream quality settings. Physics is set to enthusiast, however, to further stress the CPU.

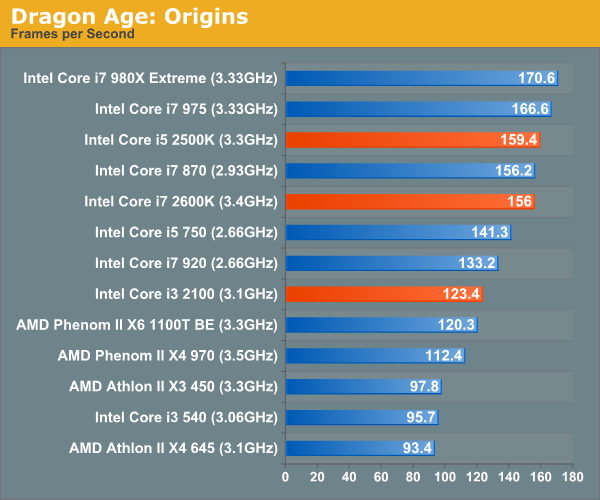

Our Dragon Age: Origins benchmark begins with a shift to the Radeon HD 5870. From this point on these games are run under our Bench refresh testbed under Windows 7 x64. Our benchmark here is the same thing we ran in our integrated graphics tests - a quick FRAPS walkthrough inside a castle. The game is run at 1680 x 1050 at high quality and texture options.

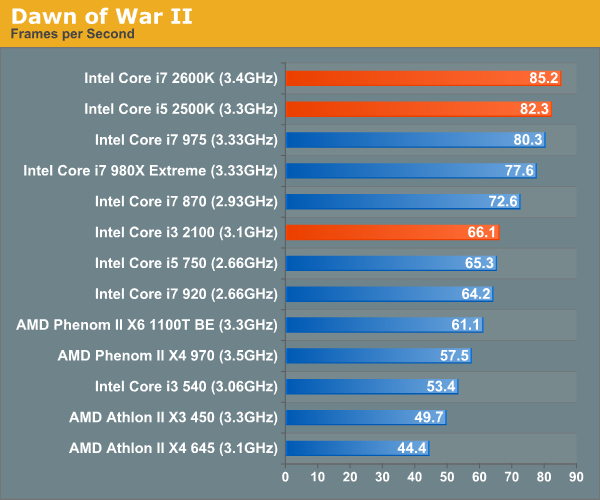

We're running Dawn of War II's internal benchmark at high quality defaults. Our GPU of choice is a Radeon HD 5870 running at 1680 x 1050.

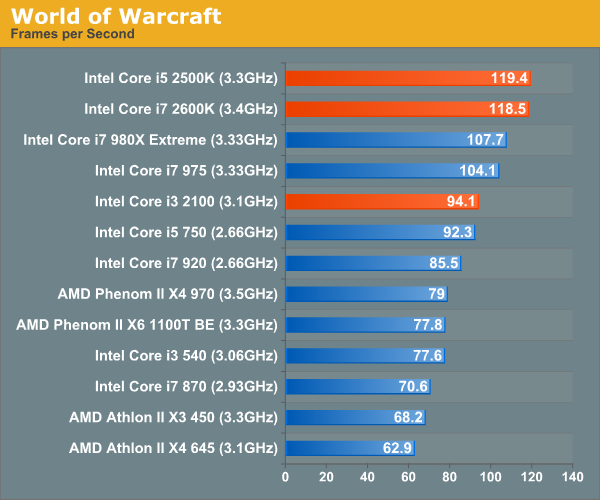

Our World of Warcraft benchmark is a manual FRAPS runthrough of a lightly populated server with no other player controlled characters around. The frame rates here are higher than you'd see in a real world scenario, but the relative comparison between CPUs is accurate.

We run on a Radeon HD 5870 at 1680 x 1050. We're using WoW's high quality defaults but with weather intensity turned down all the way.

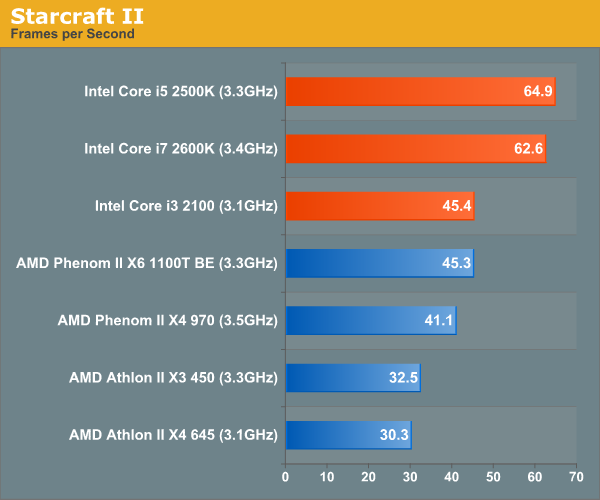

For Starcraft II we're using our heavy CPU test. This is a playback of a 3v3 match where all players gather in the middle of the map for one large, unit-heavy battle. While GPU plays a role here, we're mostly CPU bound. The Radeon HD 5870 is running at 1024 x 768 at medium quality settings to make this an even more pure CPU benchmark.

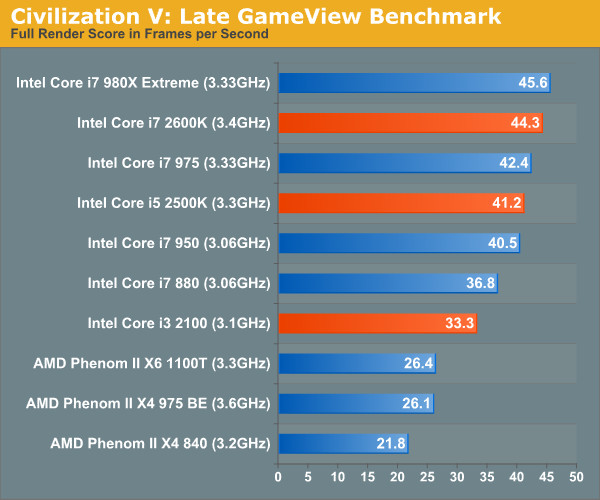

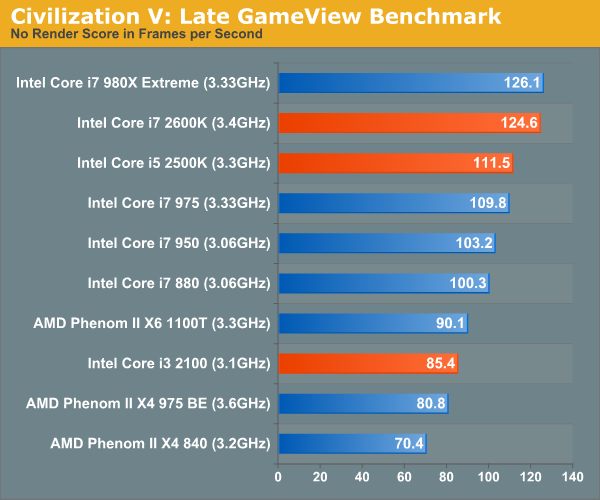

This is Civ V's built in Late GameView benchmark, the newest addition to our gaming test suite. The benchmark outputs three scores: a full render score, a no-shadow render score and a no-render score. We present the first and the last, acting as a GPU and CPU benchmark respectively.

We're running at 1680 x 1050 with all quality settings set to high. For this test we're using a brand new testbed with 8GB of memory and a GeForce GTX 580.

283 Comments

GeorgeH - Monday, January 3, 2011 - link

With the unlocked multipliers, the only substantive difference between the 2500K and the 2600K is hyperthreading. Looking at the benchmarks here, it appears that at equivalent clockspeeds the 2600K might actually perform worse on average than the 2500K, especially if gaming is a high priority.

A short article running both the 2500K and the 2600K at equal speeds (say "stock" @3.4GHz and overclocked @4.4GHz) might be very interesting, especially as a possible point of comparison for AMD's SMT approach with Bulldozer.

Right now it looks like if you're not careful you could end up paying ~$100 more for a 2600K instead of a 2500K and end up with worse performance.

Gothmoth - Monday, January 3, 2011 - link

And which benchmarks are you speaking about?

As Anand wrote, HT has no negative influence on performance.

GeorgeH - Monday, January 3, 2011 - link

The 2500K is faster in Crysis, Dragon Age, World of Warcraft and Starcraft II, despite being clocked slower than a 2600K. If it weren't for that clockspeed deficiency, it looks like it also might be faster in Left 4 Dead, Far Cry 2, and Dawn of War II. Just about the only games that look like a "win" for HT are Civ5 and Fallout 3.

The 2500K also wins the x264 HD 3.03 1st Pass benchmark, and comes pretty close to the 2600K in a few others, again despite a clockspeed deficiency.

Intel's new "no overclocking unless you get a K" policy looks like it might be a double-edged sword. Ignoring the IGP stuff, the only difference between a 2500K and a 2600K is HT; if you're spending extra for a K you're going to be overclocking, making the 2500K's base clockspeed deficiency irrelevant. That means HT's deficiencies won't be able to hide behind lower clockspeeds and locked multipliers (as with the i5-7xx and i7-8xx.)

In the past HT was a no-brainer; it might have hurt performance in some cases but it also came with higher clocks that compensated for HT's shortcomings. Now that Intel has cut enthusiasts down to two choices, HT isn't as clear cut, especially if those enthusiasts are gamers - and most of them are.

Shorel - Monday, January 3, 2011 - link

I don't ever watch soap operas (why anybody can enjoy such crap is beyond me) but I game a lot. All my free time is spent gaming.

High frame rate reminds me of good video cards (or games that are not cutting edge) and the so-called film 24p reminds me of the Michael Bay movies where stuff happens fast but you can't see anything, like in Transformers.

Please don't assume that your readers know or enjoy soap operas. Standard TV is for old people, and movies look amazing at 120Hz when almost all you do is gaming.

mmcc575 - Monday, January 3, 2011 - link

Just want to say thanks for such a great opening article on desktop SNB. The VS2008 benchmark was also a welcome addition!

SNB launch and CES together must mean a very busy time for you, but it would be great to get some clarification/more in depth articles on a couple of areas.

1. To clarify, if the LGA-2011 CPUs won't have an on-chip GPU, does this mean they will forego arguably the best feature in Quick Sync?

2. Would be great to have some more info on the Overclocking of both the CPU and GPU, such as the process, how far you got on stock voltage, the effect on Quick Sync and some OC'd CPU benchmarks.

3. A look at the PQ of the on-chip GPU when decoding video compared to discrete low-end rivals from nVidia and AMD, as it is likely that the main market for this will be those wanting to decode video as opposed to play games. If you're feeling generous, maybe a run through the HQV benchmark? :P

Thanks for reading, and congrats again for having the best launch-day content on the web.

ajp_anton - Monday, January 3, 2011 - link

In the Quantum of Solace comparison, x86 and Radeon screens are the same.

I dug up a ~15Mbit 1080p clip with some action and transcoded it to 4Mbit 720p using x264. So entirely software-based. My i7 920 does 140fps, which isn't too far away from Quick Sync. I'd love to see some quality comparisons between x264 on fastest settings and QS.

ajp_anton - Monday, January 3, 2011 - link

Also, in the Dark Knight comparison, it looks like the Radeon used the wrong levels (so not the encoder's fault). You should recheck the settings used both in the encoder and when you took the screenshot.

testmeplz - Monday, January 3, 2011 - link

Thanks for the great review! I believe the colors in the legend of the graphs on the Graphics overclocking page are mixed up.

Thanks,

Chris

politbureau - Monday, January 3, 2011 - link

Very concise. Cheers.

One thing I miss is clock-for-clock benchmarks to highlight the effect of architectural changes. Though perhaps not within the scope of this review, it would nonetheless be interesting to see how SNB fares against Bloomfield and Lynnfield at similar clock speeds.

Cheerio

René André Poeltl - Monday, January 3, 2011 - link

Good performance for a bargain - that was AMD's terrain.

Now Sandy Bridge at ~$200 targets AMD's clientele. A Core i5-2500K for $216 - that's a bargain. (A GPU worth about $40 is even included.) And the overclocking ability!

If I understood it correctly, the Intel Core i7 2600K at 4.4GHz and 111W under load is quite efficient. It draws 86W at 3.4GHz, and the ~30% higher clock at 4.4GHz should mean ~30% more performance for ~30% more power ... that would mean power consumption scales ~1:1 with performance.
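The arithmetic behind that estimate can be checked with a short sketch. It uses only the clock and load-power figures quoted in the comment above, and it assumes, as the comment does, that performance scales linearly with clock speed; the helper name `percent_increase` is purely illustrative.

```python
def percent_increase(base: float, new: float) -> float:
    """Return the increase from base to new as a percentage of base."""
    return (new - base) / base * 100.0


# Figures as quoted in the comment (Core i7-2600K under load).
stock_clock_ghz, stock_power_w = 3.4, 86.0
oc_clock_ghz, oc_power_w = 4.4, 111.0

clock_gain = percent_increase(stock_clock_ghz, oc_clock_ghz)
power_gain = percent_increase(stock_power_w, oc_power_w)

# Assumption: performance rises in step with clock speed, so the
# clock gain stands in for the performance gain.
ratio = power_gain / clock_gain

print(f"clock: +{clock_gain:.1f}%  power: +{power_gain:.1f}%  ratio: {ratio:.2f}")
# prints: clock: +29.4%  power: +29.1%  ratio: 0.99
```

Under that assumption the two increases are nearly equal, which is where the roughly 1:1 figure comes from. Real workloads that do not scale perfectly with clock speed would show a smaller performance gain for the same extra power.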

Many people need more performance per core, but not more cores. At 111 W under load this would be the product they wanted, e.g. people who make music with PCs: not playing MP3s, but mixing and producing music.

But for more cores, the X6 Thuban is the better choice on a budget. For building a server on a budget, for example, Intel has no product to rival it. The same goes for developers, who may also want as many cores as they can get to test their apps' multithreading performance.

AMD also scores with its more conservative approach to upgrades, e.g. motherboards. People don't like to buy a new motherboard every time they upgrade the CPU.