10G Ethernet: More Than a Big Pipe

by Johan De Gelas on November 24, 2010 2:34 PM EST. Posted in: IT Computing, Networking, 10G Ethernet

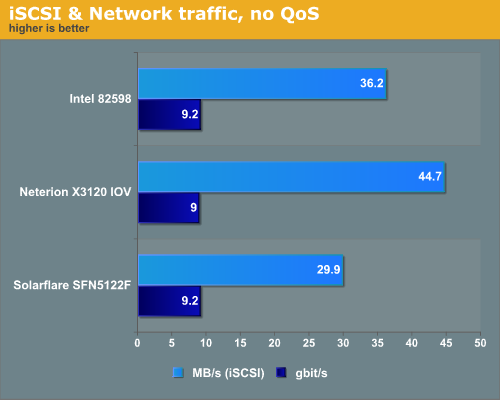

Consolidation and Choking

If we consolidate storage, network, and management traffic onto a single or dual 10GbE cable, a simpler, cheaper, and easier-to-manage datacenter is within reach. So how well do the new NICs cope with these I/O demands? We decided to mix a VM with an iSCSI initiator that sends lots of storage traffic with three VMs that demand lots of “normal” network traffic. Our first test simulates the “I don’t have time to configure this properly” scenario: we simply hooked up one VM with an iSCSI initiator, while three other VMs ran IxChariot endpoints, each creating as much traffic as it liked.

It is easy to see that the four virtual function NICs allow the Neterion X3120 to spread the load more evenly. The iSCSI VM runs 50% faster on the Neterion chip, which is significant. Reading disk blocks 50% faster can make a real difference to the end user, as in many applications the user experience is highly dependent on the slow disks.

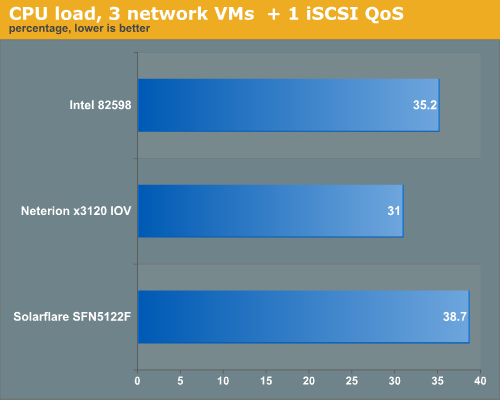
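To make "spreading the load more evenly" concrete, here is a minimal sketch of max-min fair sharing on a single link. It is an illustrative model only: the article does not describe how the X3120 actually divides bandwidth between its virtual functions, and the function name, VM names, and demand figures below are hypothetical, not measurements from our tests.

```python
def max_min_fair_share(capacity_gbps, demands_gbps):
    """Split link capacity across flows using max-min fairness.

    No flow receives more than it asks for, and whatever capacity a
    small flow leaves unused is shared equally among the remaining flows.
    """
    allocation = {}
    remaining = float(capacity_gbps)
    # Serve the smallest demands first so their leftovers can be passed on.
    pending = sorted(demands_gbps.items(), key=lambda item: item[1])
    for index, (name, demand) in enumerate(pending):
        flows_left = len(pending) - index
        equal_share = remaining / flows_left
        granted = min(demand, equal_share)
        allocation[name] = granted
        remaining -= granted
    return allocation


# Hypothetical demands (Gbit/s): one storage VM and three greedy network VMs
# competing for a single 10 Gbit/s port.
example_demands = {
    "iscsi_vm": 4.0,
    "net_vm_1": 10.0,
    "net_vm_2": 10.0,
    "net_vm_3": 10.0,
}

if __name__ == "__main__":
    for vm, gbps in max_min_fair_share(10.0, example_demands).items():
        print(f"{vm}: {gbps:.2f} Gbit/s")
```

Under this model the storage VM is guaranteed an equal slice of the port no matter how aggressively the other three VMs transmit, which is the kind of behavior an evenly spread load implies.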

Let us see how much CPU load was necessary to keep this kind of brutal network traffic under control. The two CPUs on the virtual node were Xeon X5670 2.93GHz.

The Neterion chip does a better job at a slightly lower CPU load.

38 Comments

fr500 - Wednesday, November 24, 2010

I guess there is LACP or PAgP and some proprietary solution. A quick Google search told me it's called cross-module trunking.

mlambert - Wednesday, November 24, 2010

FCoE, iSCSI (*not that you would, but you could), FC, and IP all across the same link. Cisco offers VCP LACP with CNA as well. 2 links per server, 2 links per storage controller; that's not many cables.

mlambert - Wednesday, November 24, 2010

I meant VPC, and Cisco is the only one that offers it today. I'm sure Brocade will in the near future.

Zok - Friday, November 26, 2010

Brocade's been doing this for a while with the Brocade 8000 (similar to the Nexus 5000), but their new VDX series takes it a step further for FCoE.

Havor - Wednesday, November 24, 2010

While these network adapters are really nice for servers, I don't need a managed NIC; I just really want affordable 10Gbit over UTP or STP. Even if it's only 30~40 m / 100 ft, because just like with 100Mbit networks in the old days, my HDs are more than a little outperforming my network.

Wondering when 10Gbit will become common on mobos.

Krobar - Thursday, November 25, 2010

Hi Johan,

Wanted to say nice article first of all, you pretty much make the IT/Pro section what it is.

In the descriptions of the cards and the conclusion, you didn't mention Solarflare's "Legacy" Xen netfront support. This only works for paravirt Linux VMs and requires a couple of extra options at kernel compile time, but it runs like a train and requires no special hardware support from the motherboard at all. None of the other brands support this.

marraco - Thursday, November 25, 2010

I once made a summary of the total cost of the network in the building where I work. The total cost of the network cables was far larger than the cost of the equipment (at least with my country's prices). Also, solving any cable-related problem was complete hell. There were hundreds of cables, all entangled above the false ceiling.

I would happily replace all that with 2 or 3 cables with cheap switches at the end. Selling the cables would pay for the new equipment and even turn a profit.

Each computer has its own cable to the central switch. A crazy design.

mino - Thursday, November 25, 2010

If you go 10G for cable consolidation, you'd better forget about cheap switches. The real savings are in the manpower, not the cables themselves.

myxiplx - Thursday, November 25, 2010

If you're using a Supermicro Twin2, why don't you use the option for the on board Mellanox ConnectX-2? Supermicro have informed me that with a firmware update these will act as 10G Ethernet cards, and Mellanox's 10G Ethernet range has full support for SR-IOV:

Main product page:

http://www.mellanox.com/content/pages.php?pg=produ...

Native support in XenServer 5:

http://www.mellanox.com/content/pages.php?pg=produ...

AeroWB - Thursday, November 25, 2010

Nice article,

It is great to see more tests around virtual environments. What surprises me a little is that at the start of the article you say that ESXi and Hyper-V do not support SR-IOV yet. So I was kind of expecting a test with Citrix XenServer to show the advantages of that. Unfortunately it's not there. I hope you can do that in the near future.

I work with both VMware ESX and Citrix XenServer; we have a live setup of both. We started with ESX and later added a XenServer system, but as XenServer is maturing and gaining more and more features, we will probably replace the ESX setup with XenServer (as it is much, much cheaper) when maintenance runs out in about one year. So I'm really interested in tests on that platform.