Retesting AMD Ryzen Threadripper’s Game Mode: Halving Cores for More Performance

by Ian Cutress on August 17, 2017 12:01 PM EST

Rise of the Tomb Raider (1080p, 4K)

One of the newest games in the gaming benchmark suite is Rise of the Tomb Raider (RoTR), developed by Crystal Dynamics, and the sequel to the popular Tomb Raider which was loved for its automated benchmark mode. But don’t let that fool you: the benchmark mode in RoTR is very much different this time around.

Visually, the previous Tomb Raider pushed realism to the limits with features such as TressFX, and the new RoTR goes one stage further when it comes to graphics fidelity. This leads to an interesting set of requirements in hardware: some sections of the game are typically GPU limited, whereas others with a lot of long-range physics can be CPU limited, depending on how the driver can translate the DirectX 12 workload.

Where the old game had one benchmark scene, the new game has three different scenes with different requirements: Spine of the Mountain (1-Valley), Prophet’s Tomb (2-Prophet) and Geothermal Valley (3-Mountain) - and we test all three (and yes, I need to relabel them - I got them wrong when I set up the tests). These are three scenes designed to be taken from the game, but it has been noted that scenes like 2-Prophet shown in the benchmark can be the most CPU limited elements of that entire level, and the scene shown is only a small portion of that level. Because of this, we report the results for each scene on each graphics card separately.

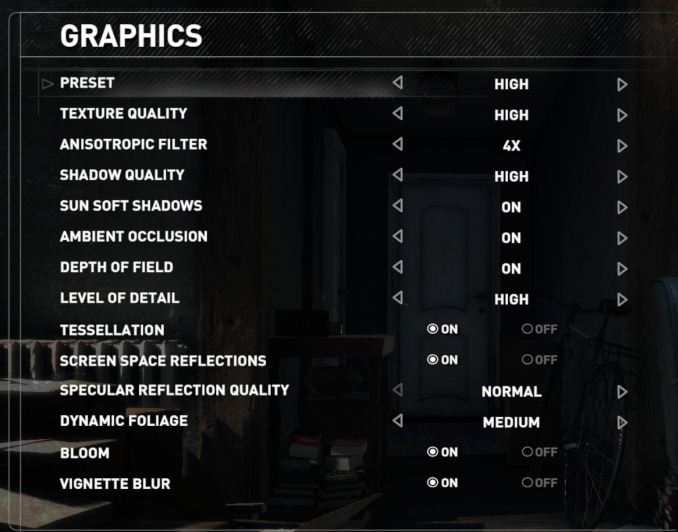

Graphics options for RoTR are similar to other games of this type, offering some presets or allowing the user to configure texture quality, anisotropic filter levels, shadow quality, soft shadows, occlusion, depth of field, tessellation, reflections, foliage, bloom, and features like PureHair, which builds on TressFX from the previous game.

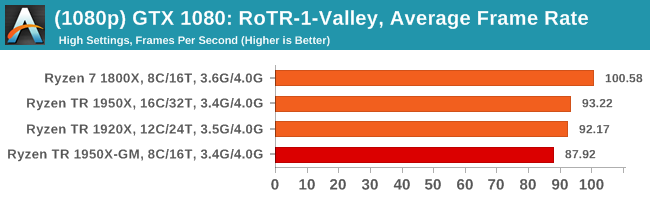

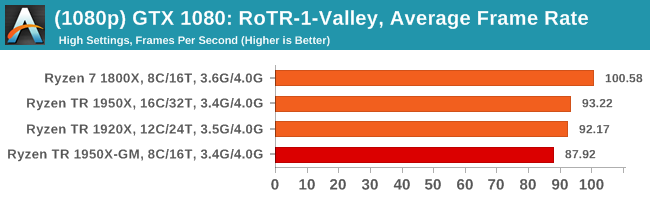

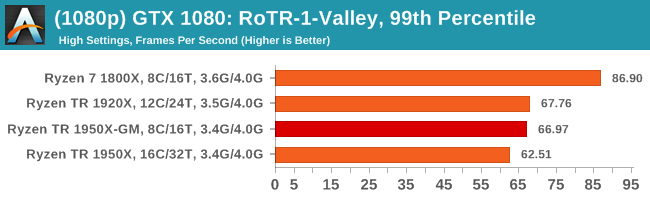

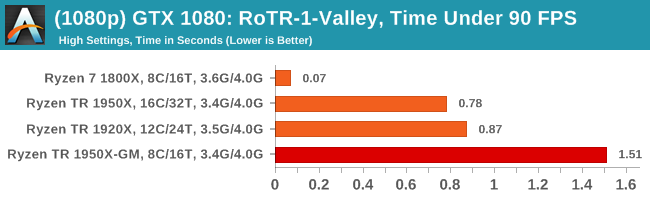

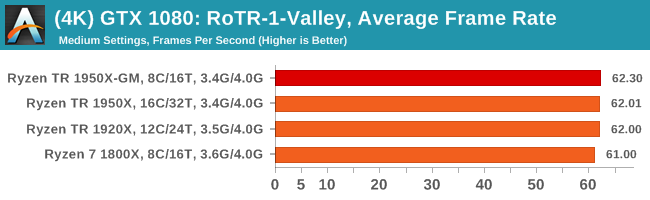

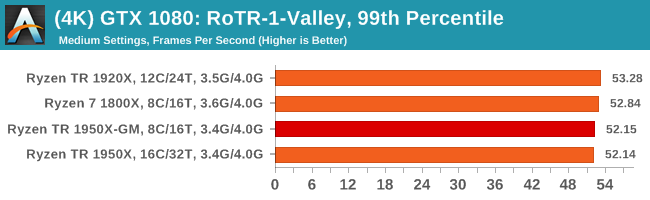

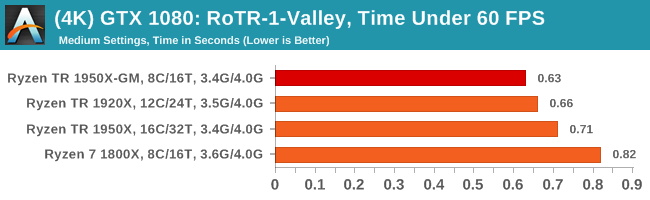

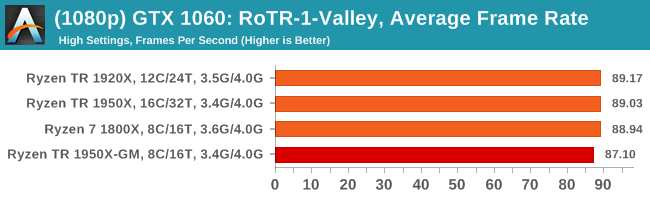

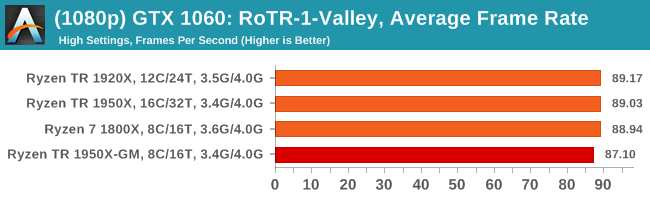

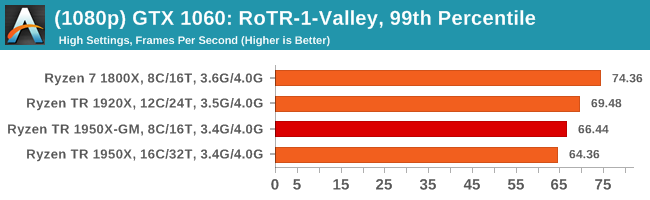

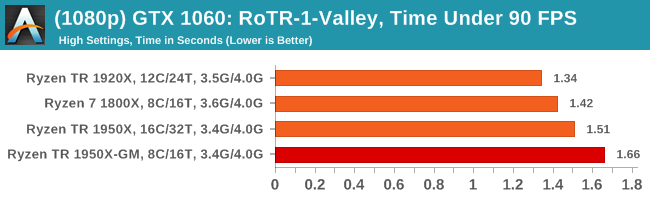

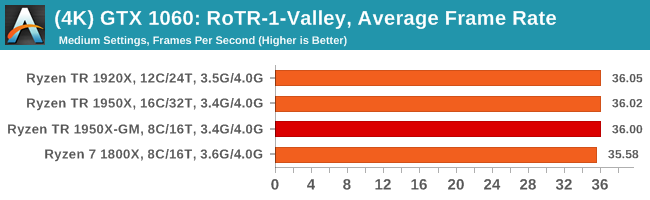

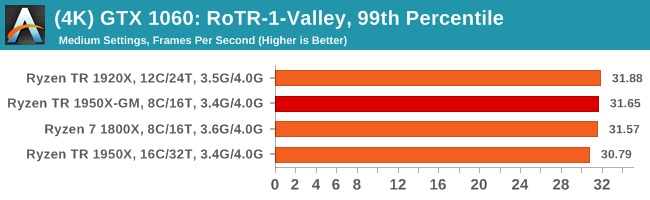

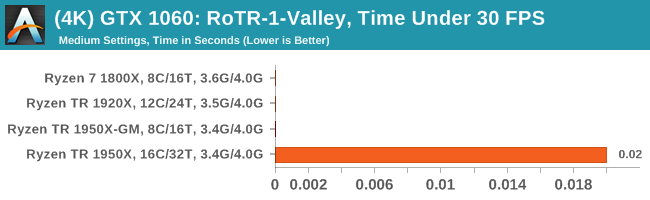

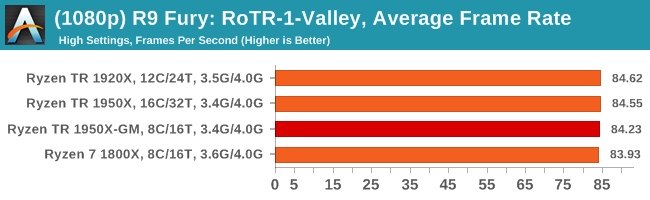

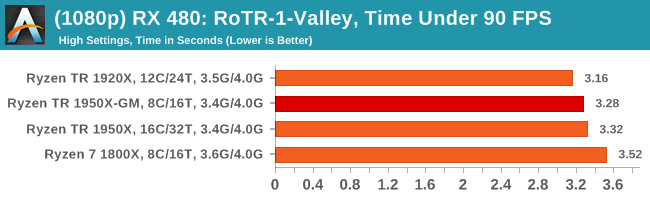

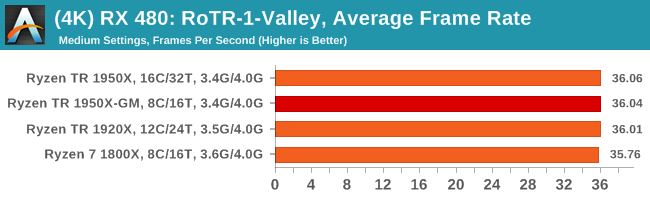

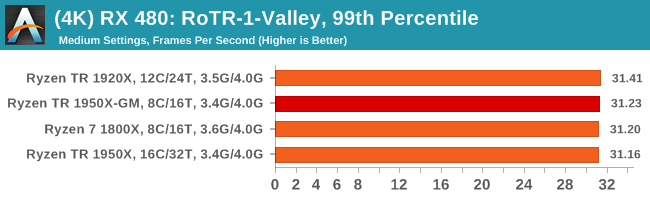

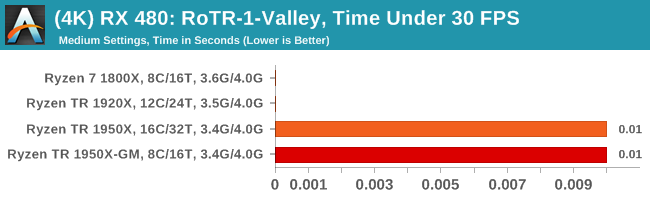

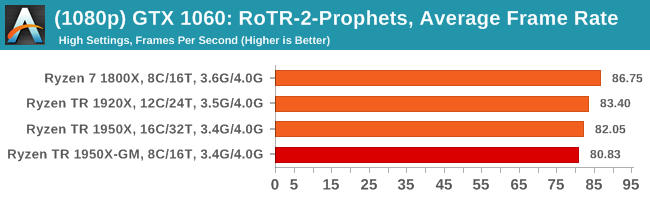

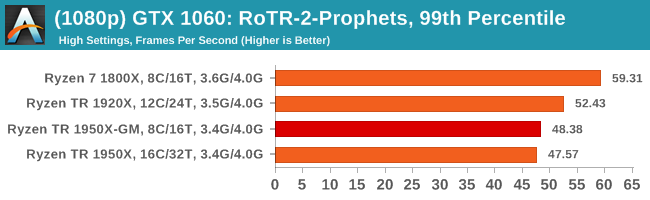

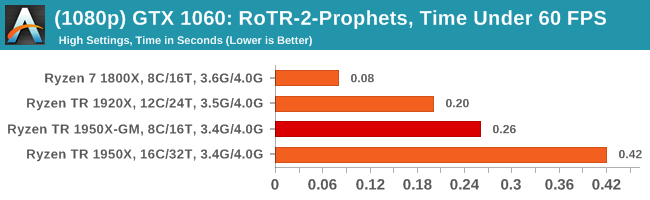

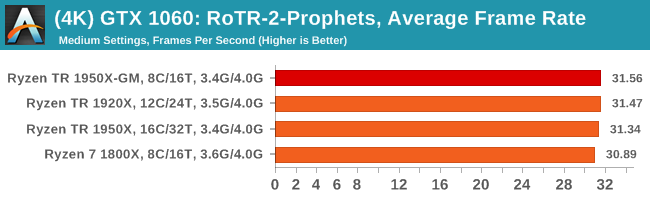

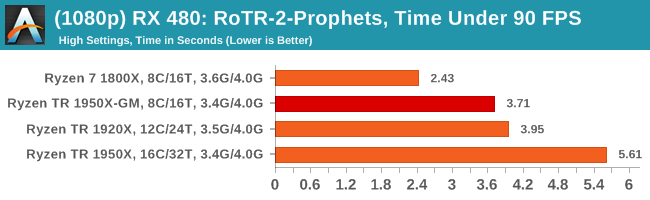

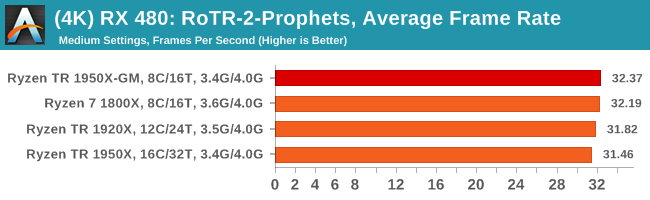

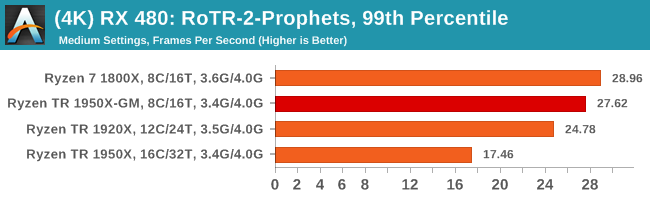

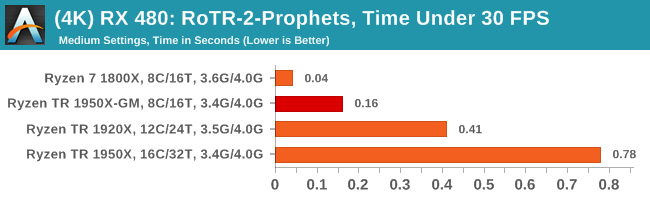

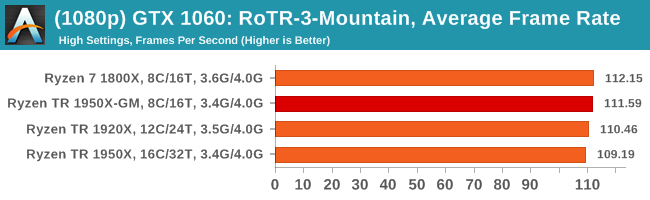

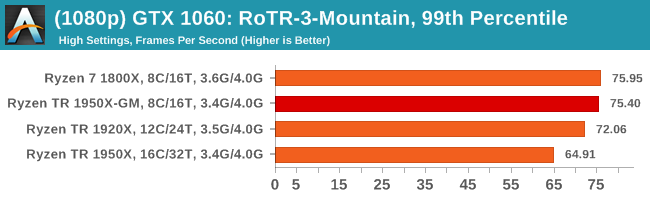

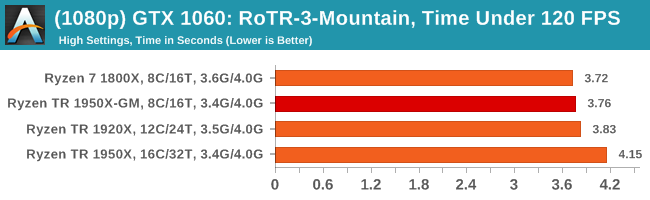

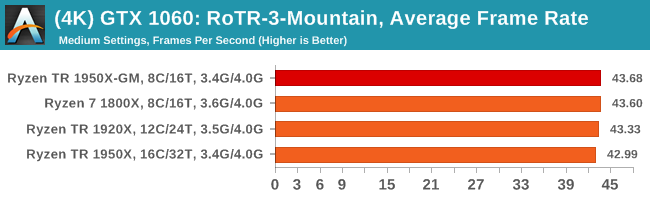

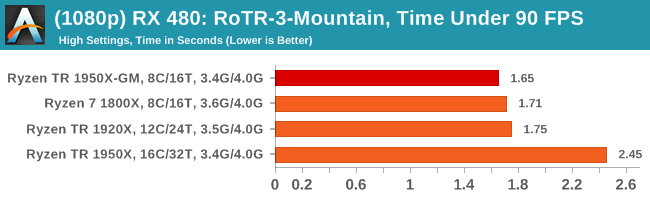

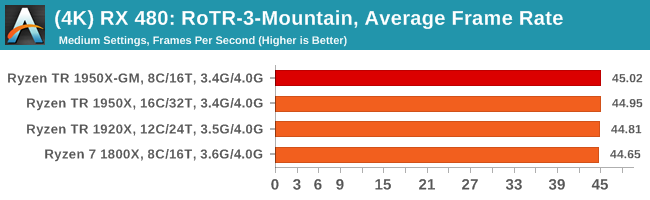

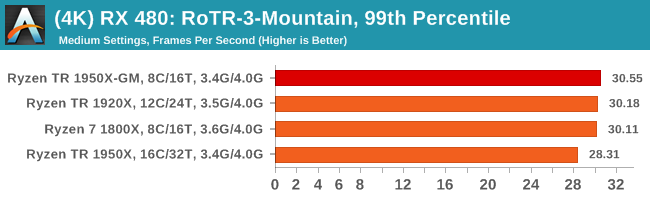

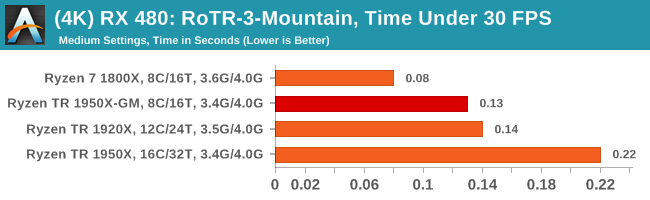

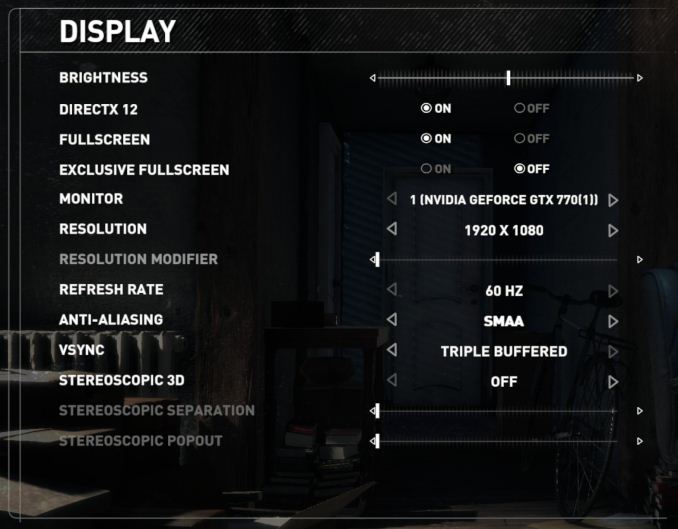

Again, we test at 1920x1080 and 4K using our native 4K displays. At 1080p we run the High preset, while at 4K we use the Medium preset which still takes a sizable hit in frame rate.

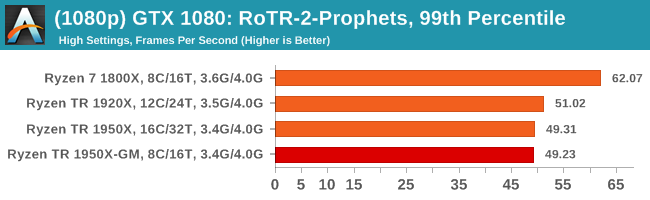

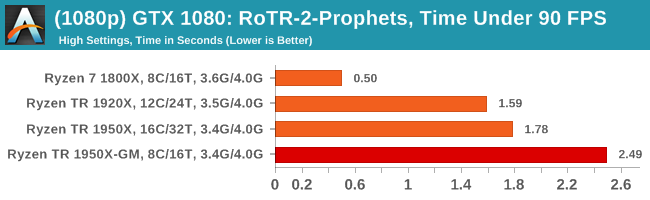

It is worth noting that RoTR is a little different to our other benchmarks in that it keeps its graphics settings in the registry rather than a standard ini file, and unlike the previous TR game the benchmark cannot be called from the command line. Nonetheless, we scripted around these issues to run the benchmark four times automatically and parse the results. From the frame time data, we report average frame rates, 99th percentiles, and our "time under" analysis.
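As a rough illustration of how those three metrics can be derived from a list of frame times, here is a minimal Python sketch. It is not the script used for this review: the function name `summarize_frame_times`, the nearest-rank percentile method, the 60 FPS threshold, and the definition of "time under" as the share of run time spent in frames slower than the threshold are all assumptions made for the example.

```python
def summarize_frame_times(frame_times_ms, fps_threshold=60.0):
    """Summarize one benchmark run given per-frame render times in milliseconds.

    Hypothetical helper for illustration; the metric definitions are assumptions.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    n = len(frame_times_ms)
    total_ms = sum(frame_times_ms)

    # Average frame rate: frames rendered divided by total elapsed time.
    avg_fps = 1000.0 * n / total_ms

    # 99th percentile frame time via the nearest-rank method:
    # rank = ceil(0.99 * n), computed with integers to avoid float rounding.
    ordered = sorted(frame_times_ms)
    rank = (99 * n + 99) // 100
    p99_frame_ms = ordered[rank - 1]

    # "Time under": share of the run spent in frames slower than the threshold
    # (assumed definition).
    limit_ms = 1000.0 / fps_threshold
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)

    return {
        "avg_fps": avg_fps,
        "p99_frame_ms": p99_frame_ms,
        "p99_fps": 1000.0 / p99_frame_ms,
        "time_under_fraction": slow_ms / total_ms,
    }


if __name__ == "__main__":
    # Synthetic run: 98 fast frames at 10 ms plus two 50 ms stutters.
    run = [10.0] * 98 + [50.0] * 2
    for name, value in summarize_frame_times(run).items():
        print(f"{name}: {value:.3f}")
```

Note how two slow frames barely move the average but dominate the 99th percentile, which is why both figures are worth reporting side by side.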

All of our benchmark results can also be found in our benchmark engine, Bench.

#1 Spine of the Mountain

MSI GTX 1080 Gaming 8G Performance

1080p

4K

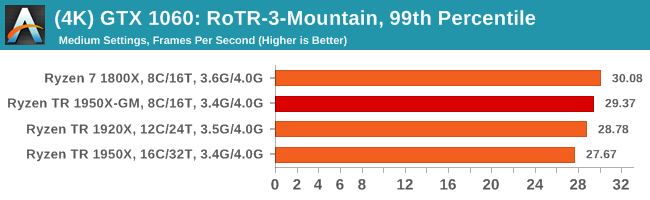

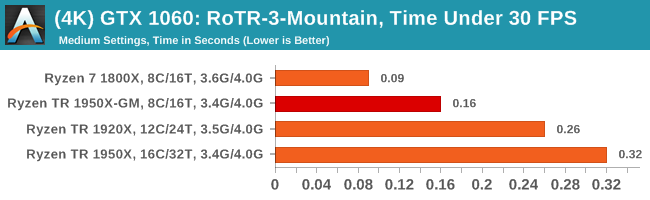

ASUS GTX 1060 Strix 6G Performance

1080p

4K

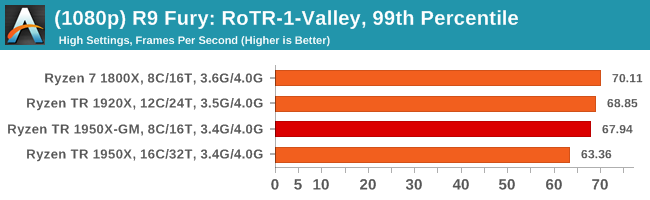

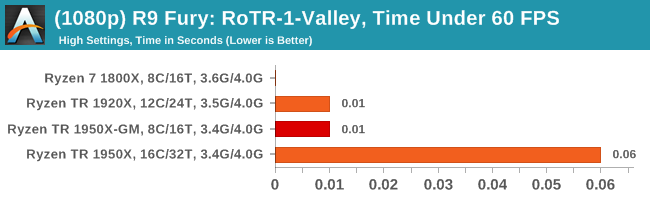

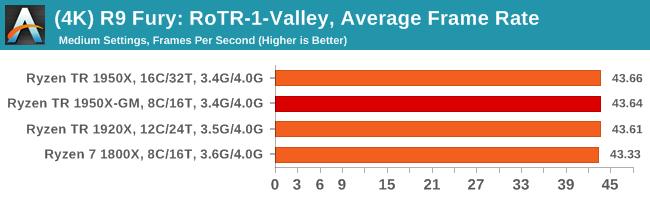

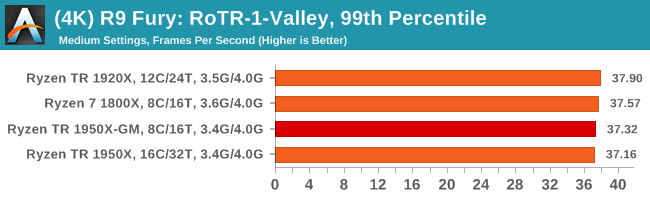

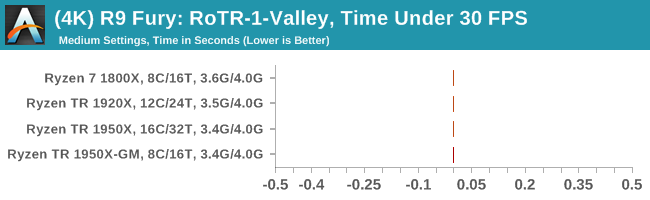

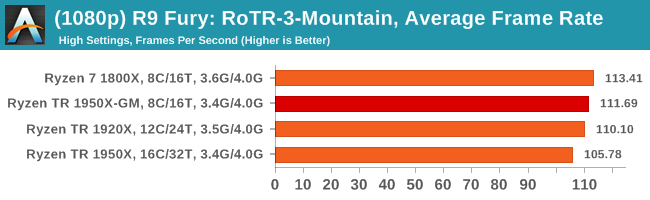

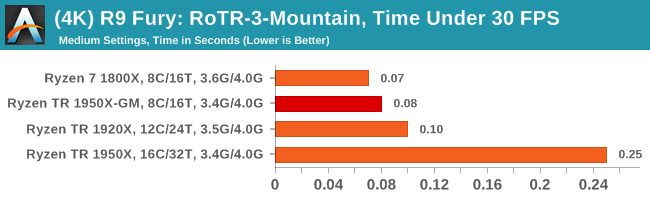

Sapphire Nitro R9 Fury 4G Performance

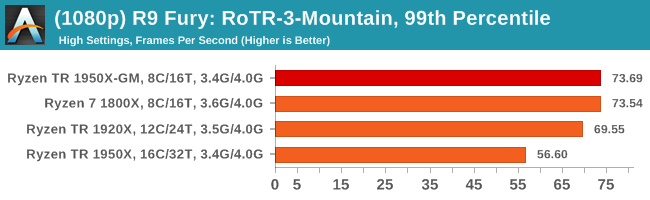

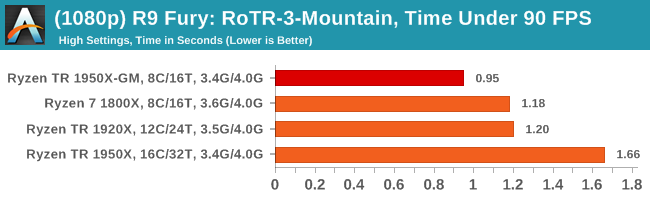

1080p

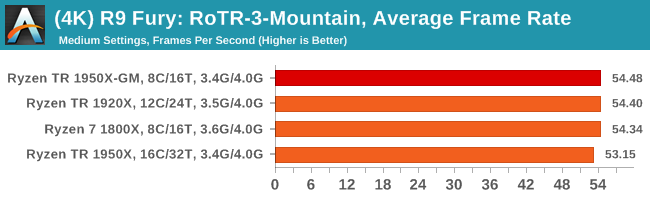

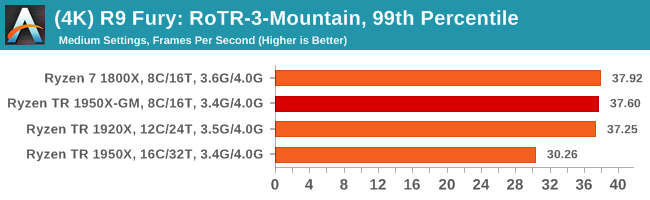

4K

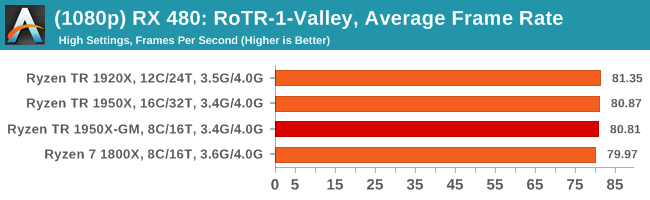

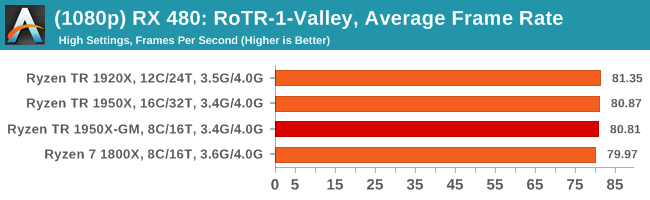

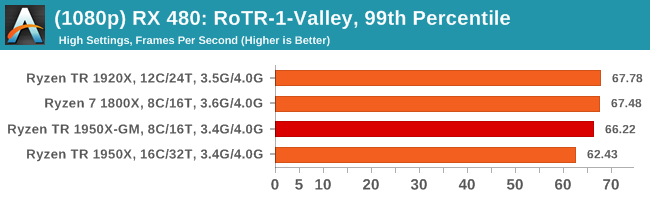

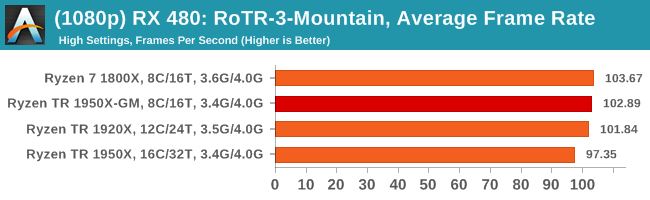

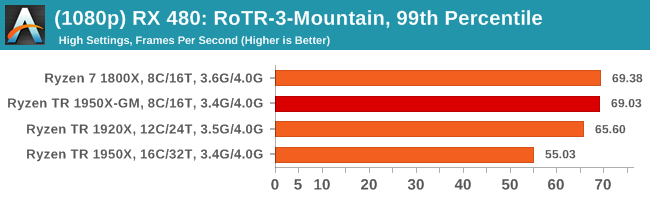

Sapphire Nitro RX 480 8G Performance

1080p

4K

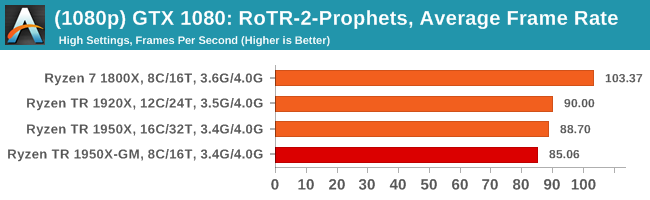

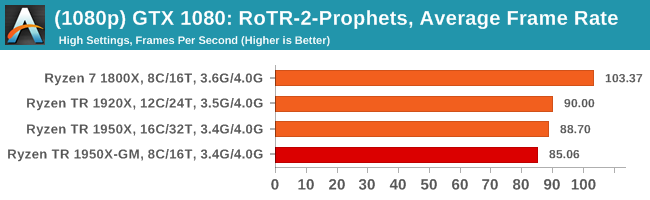

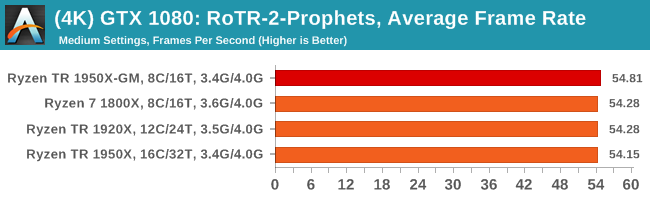

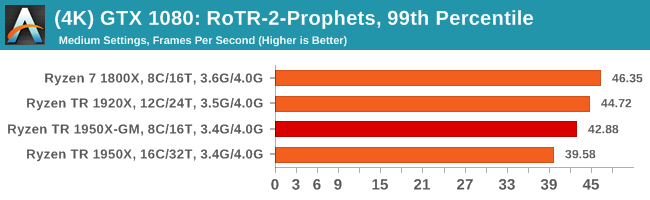

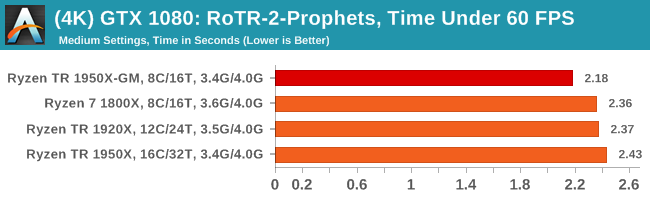

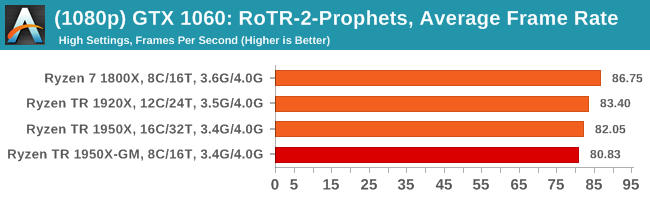

#2 Prophet’s Tomb

MSI GTX 1080 Gaming 8G Performance

1080p

4K

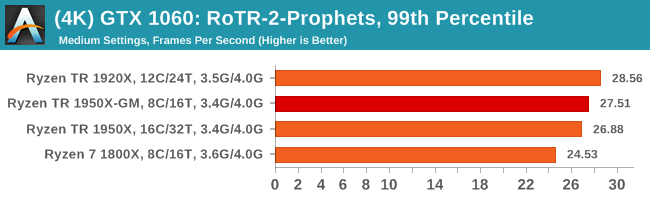

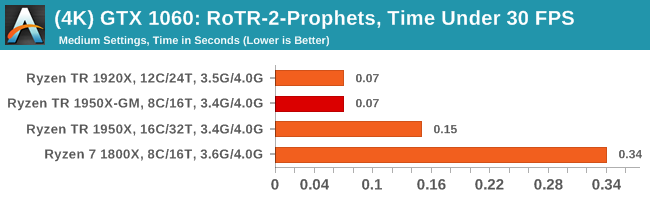

ASUS GTX 1060 Strix 6G Performance

1080p

4K

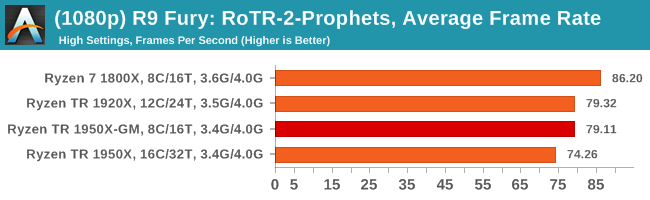

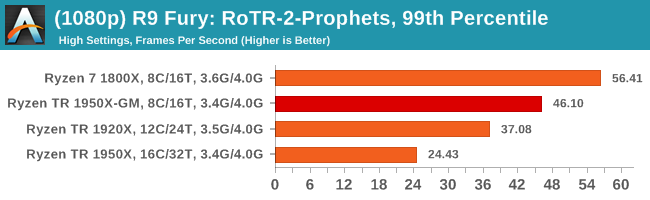

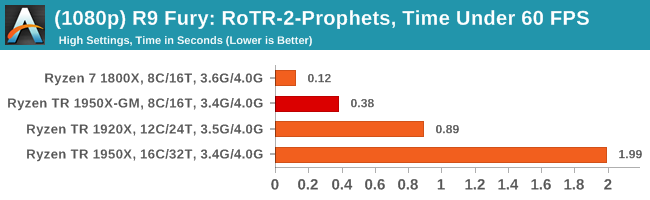

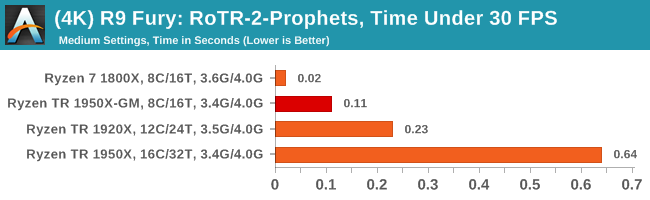

Sapphire Nitro R9 Fury 4G Performance

1080p

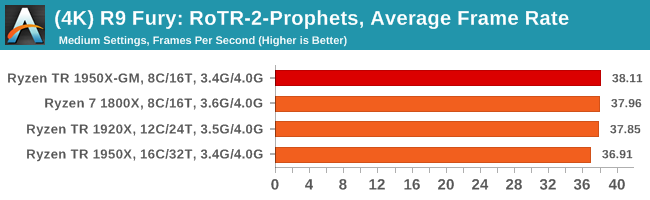

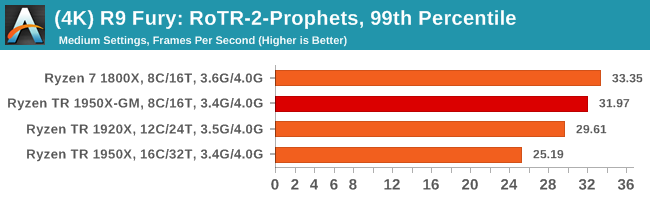

4K

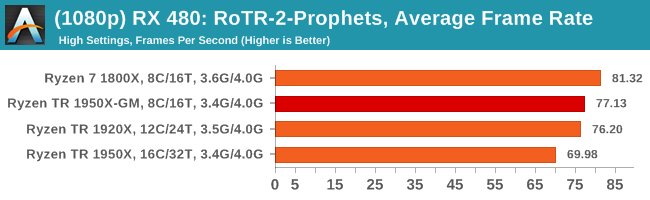

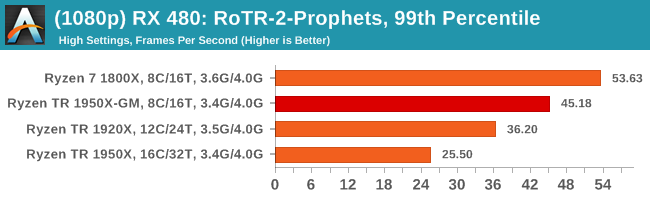

Sapphire Nitro RX 480 8G Performance

1080p

4K

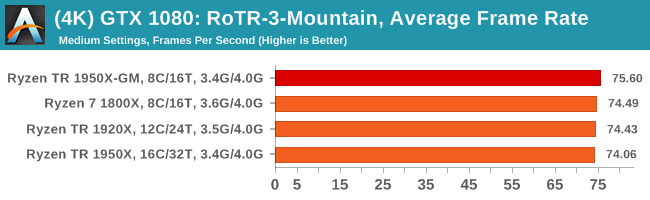

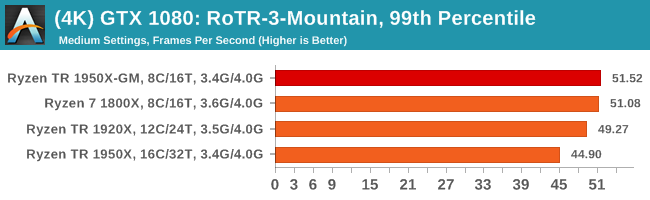

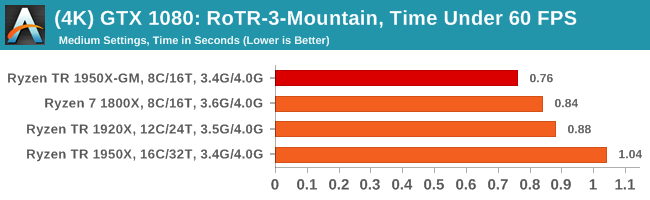

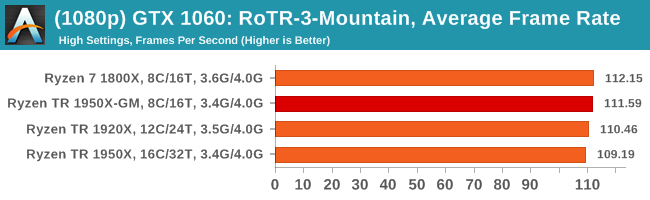

#3 Geothermal Valley

MSI GTX 1080 Gaming 8G Performance

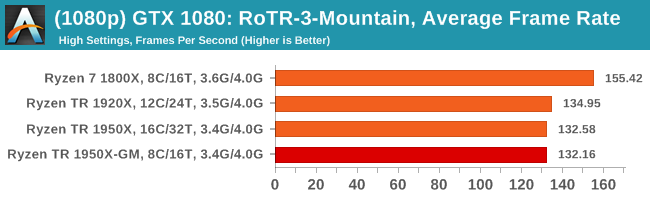

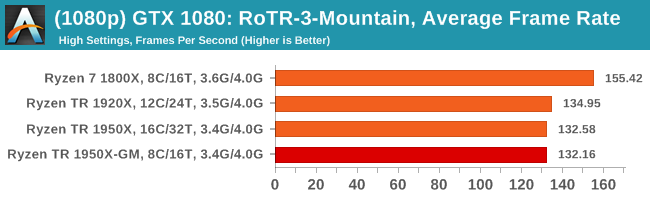

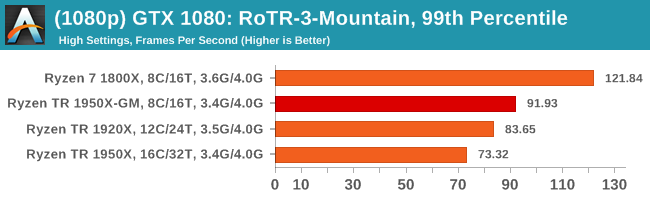

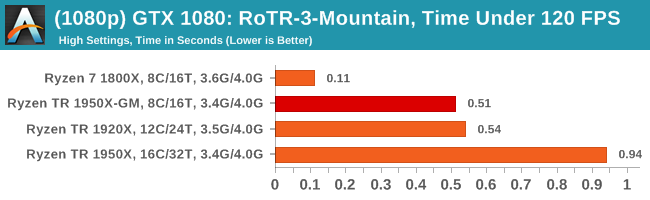

1080p

4K

ASUS GTX 1060 Strix 6G Performance

1080p

4K

Sapphire Nitro R9 Fury 4G Performance

1080p

4K

Sapphire Nitro RX 480 8G Performance

1080p

4K

104 Comments


silverblue - Friday, August 18, 2017

I'd like to see what happens when you manually set a 2+2+2+2 core configuration, instead of enabling Game Mode. From what I've read, Game Mode destroys memory bandwidth but yields better latency, however it's not answering whether Zen cores can really benefit from the extra bandwidth that a quad-channel memory interface affords.

Alternatively, just clock the 1950 and 1920 identically, and see if the 1920's per-core performance is any higher.

KAlmquist - Friday, August 18, 2017

“One of the interesting data points in our test is the Compile. Because <B>this test requires a lot of cross-core communication</B> and DRAM, we get an interesting metric where the 1950X still comes out on top due to the core counts, but because the 1920X has fewer cores per CCX, it actually falls behind the 1950X in Game Mode and the 1800X despite having more cores.”

Generally speaking, compilers are single threaded, so the parallelism in a software build comes from compiling multiple source files in parallel, meaning the cross-core communication is minimal. I have no idea what MSVC is doing here, can you explain? In any case, while I appreciate you including a software development benchmark, the one you've chosen would seem to provide no useful information to anyone who doesn't use MSVC.

peevee - Friday, August 18, 2017

I use MSVC and it scales pretty well if you are using it right. They are doing something wrong.

KAlmquist - Saturday, August 19, 2017

Thanks. It makes sense that MSVC would scale about as well as any other build environment.

Ars Technica also benchmarked a Chromium build, which I think uses MSVC, but uses the Google tools GN and Ninja to manage the build. They get:

Ryzen 1800X (8 cores) - 9.8 build/day

Threadripper 1920X (12 cores) - 16.7 build/day

Threadripper 1950X (16 cores) - 18.6 build/day

Very good speedup with the 1920X over the 1800X, but not so much going from the 1920X to the 1950X. Perhaps the benchmark is dependent on memory bandwidth and L3 cache.
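To put numbers on that observation, the short Python sketch below compares each step's throughput gain with its increase in core count, using only the three figures quoted above. The "efficiency" ratio (speedup divided by core ratio) is an illustrative measure chosen for this example, not something from the cited benchmark, and it ignores differences in clock speed and memory configuration between the chips.

```python
# (name, cores, Chromium builds per day) as quoted in the comment above.
RESULTS = [
    ("Ryzen 1800X", 8, 9.8),
    ("Threadripper 1920X", 12, 16.7),
    ("Threadripper 1950X", 16, 18.6),
]


def scaling(prev, curr):
    """Return (speedup, efficiency) when moving from prev to curr.

    speedup    = ratio of builds/day
    efficiency = speedup divided by the ratio of core counts
                 (1.0 would mean throughput grew exactly in line with cores).
    """
    _, prev_cores, prev_rate = prev
    _, curr_cores, curr_rate = curr
    speedup = curr_rate / prev_rate
    core_ratio = curr_cores / prev_cores
    return speedup, speedup / core_ratio


if __name__ == "__main__":
    for prev, curr in zip(RESULTS, RESULTS[1:]):
        speedup, efficiency = scaling(prev, curr)
        print(f"{prev[0]} -> {curr[0]}: "
              f"speedup {speedup:.2f}x, efficiency {efficiency:.2f}")
```

By this measure the step from 8 to 12 cores gains more than the extra cores alone would suggest, while the step from 12 to 16 cores gains less, which is consistent with something other than core count limiting the larger chip.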

Timur Born - Friday, August 18, 2017

Thanks for the tests!

I would have liked to see a combination of both being tested: Game Mode to switch off the second die and SMT disabled. That way 4 full physical cores with low latency memory access would have run the games.

Hopefully modern titles don't benefit from this, but some more "legacy" ones might like this setup even more.

Timur Born - Friday, August 18, 2017

Sorry, I meant 8 cores, aka 8/8 cores mode.

mat9v - Friday, August 18, 2017

I wish someone had an inclination to test Creator Mode but with games pinned to one module. It is essentially NUMA mode but with all cores active.

Or just enable SMT that is disabled in Gaming Mode - we actually then get a Ryzen 1800X CPU that overclocks well but with possibly higher performance due to all system tasks running on a different module (if we configure the system that way) and unencumbered access to more PCIe lanes.

peevee - Friday, August 18, 2017

Yes, that would be interesting.

c:\>start /REALTIME /NODE 0 /AFFINITY 5555 you_game_here.exe

mat9v - Friday, August 18, 2017

I think I would start it on node 1 if anything, since system tasks would by default be running on node 0.

Mask 5555? Wouldn't it be AAAA - for 8 cores (8 threads) and FFFF for 8 cores (16 threads)?

peevee - Friday, August 18, 2017

The mask 5555 assumes that SMT is enabled. Otherwise it should be FF.

When SMT is enabled, 5555 and AAAA will allocate threads to the same cores, just different logical CPUs.

Where system threads will be run is system dependent, nothing prevents Windows from running them on NODE 1. /NODE 0 lets the command run whether or not you actually have multiple NUMA nodes.

With /REALTIME Windows will have a hard time allocating anything on those logical CPUs, but can use the same cores with other logical CPUs, so yes, technically it will affect results. But unless you load it with something, the difference should not be significant - things like cache and memory bus contention are more important anyway and don't care on which cores you run.
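The mask arithmetic in this exchange can be checked with a few lines of Python. The sketch below decodes a hexadecimal affinity mask into logical CPU indices and then maps those onto physical cores. The helper names are hypothetical, and the mapping assumes that with SMT enabled the two threads of core k are enumerated as logical CPUs 2k and 2k+1; that numbering is an assumption and can differ between systems.

```python
def logical_cpus(mask_hex):
    """Return the logical CPU indices whose bits are set in a hex affinity mask."""
    mask = int(mask_hex, 16)
    return [bit for bit in range(mask.bit_length()) if (mask >> bit) & 1]


def physical_cores(mask_hex, threads_per_core=2):
    """Map the selected logical CPUs onto physical core indices.

    Assumes SMT siblings are numbered adjacently (logical CPUs 2k and 2k+1
    belong to core k when threads_per_core is 2).
    """
    return sorted({cpu // threads_per_core for cpu in logical_cpus(mask_hex)})


if __name__ == "__main__":
    for mask in ("5555", "AAAA", "FFFF"):
        print(mask, "logical:", logical_cpus(mask),
              "cores:", physical_cores(mask))
    # With SMT disabled there is one logical CPU per core, so FF covers 8 cores.
    print("FF", "cores (SMT off):", physical_cores("FF", threads_per_core=1))
```

Under that numbering assumption, 5555 selects the even logical CPUs and AAAA the odd ones, and both land on the same eight cores with one thread each, while FFFF selects both threads on all eight cores.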