Retesting AMD Ryzen Threadripper’s Game Mode: Halving Cores for More Performance

by Ian Cutress on August 17, 2017 12:01 PM EST

Ashes of the Singularity Escalation

Seen as the holy child of DirectX12, Ashes of the Singularity (AoTS, or just Ashes) has been the first title to actively explore as many of DirectX12's features as it possibly can. Stardock, the developer behind the Nitrous engine which powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

As a real-time strategy title, Ashes is all about responsiveness during both wide open shots and concentrated battles. With DirectX12 at the helm, the ability to issue more draw calls per second allows the engine to work with substantial unit depth and effects that other RTS titles had to rely on combined draw calls to achieve, making some combined unit structures ultimately very rigid.

Stardock clearly understands the importance of an in-game benchmark, ensuring that such a tool was available and capable from day one. With all the additional DX12 features in use, being able to characterize how they affected the title was important for the developer. The in-game benchmark runs a four-minute fixed-seed battle environment with a variety of shots, and outputs a vast amount of data to analyze.

For our benchmark, we run a fixed v2.11 version of the game due to some peculiarities of the splash screen added after the merger with the standalone Escalation expansion, and have an automated tool to call the benchmark on the command line. (Prior to v2.11, the benchmark also supported 8K/16K testing; however, v2.11 has odd behavior which breaks this.)

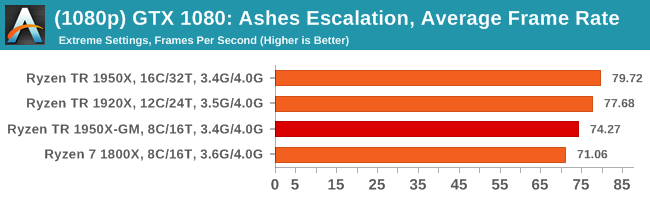

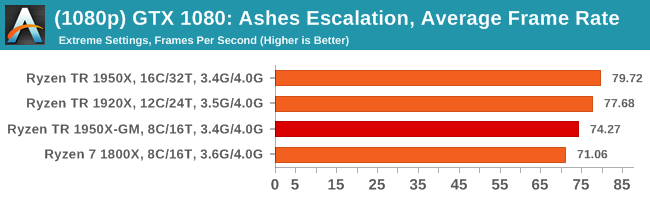

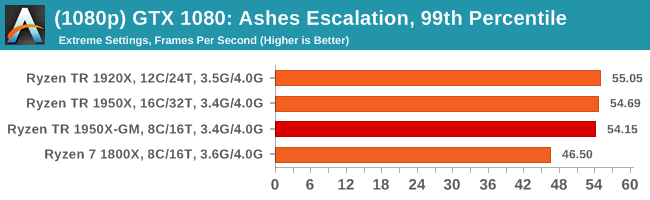

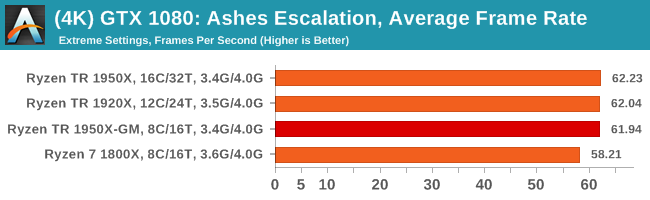

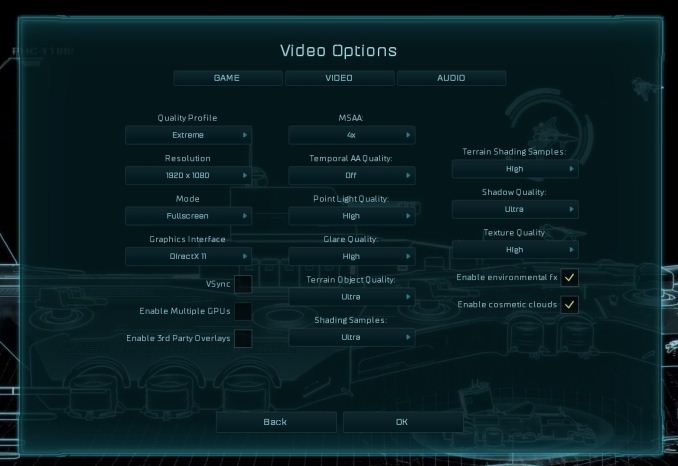

At both 1920x1080 and 4K resolutions, we run the same settings. Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain. There are several presets, from Very Low to Extreme: we run our benchmarks at Extreme settings, and take the frame-time output for our average, percentile, and time under analysis.
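As a rough illustration of how those three metrics can be derived from a list of frame times, here is a minimal Python sketch. It is not our actual tooling: the function name `summarize_frame_times`, the nearest-rank percentile method, the 99th percentile default, and the 60 FPS threshold are all assumptions chosen for the example, and the input is assumed to be one render time per frame in milliseconds.

```python
import math


def summarize_frame_times(frame_times_ms, percentile=99.0, fps_threshold=60.0):
    """Summarize per-frame render times (milliseconds).

    Hypothetical helper for illustration only; the percentile method and
    the default thresholds are assumptions, not the review's exact method.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS: frames rendered divided by total elapsed seconds.
    total_ms = sum(frame_times_ms)
    average_fps = len(frame_times_ms) / (total_ms / 1000.0)

    # Nearest-rank percentile of frame time, reported as a frame rate.
    # A high frame-time percentile corresponds to the slowest frames.
    ordered = sorted(frame_times_ms)
    rank = max(1, math.ceil(percentile / 100.0 * len(ordered)))
    percentile_ms = ordered[rank - 1]
    percentile_fps = 1000.0 / percentile_ms

    # Time under: total seconds spent on frames slower than the threshold.
    limit_ms = 1000.0 / fps_threshold
    time_under_s = sum(t for t in ordered if t > limit_ms) / 1000.0

    return {
        "average_fps": average_fps,
        "percentile_fps": percentile_fps,
        "time_under_s": time_under_s,
    }


if __name__ == "__main__":
    # Nine fast frames and one slow frame, in milliseconds.
    sample = [10.0] * 9 + [40.0]
    print(summarize_frame_times(sample))
```

The average hides the single slow frame in the sample, while the percentile and time-under figures expose it, which is why all three are worth reporting together.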

All of our benchmark results can also be found in our benchmark engine, Bench.

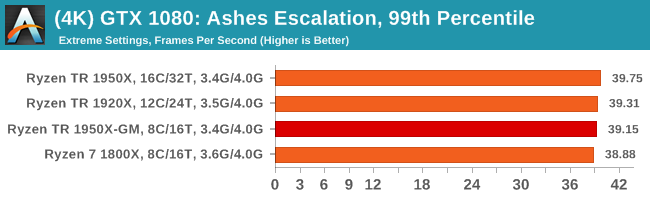

MSI GTX 1080 Gaming 8G Performance

1080p

4K

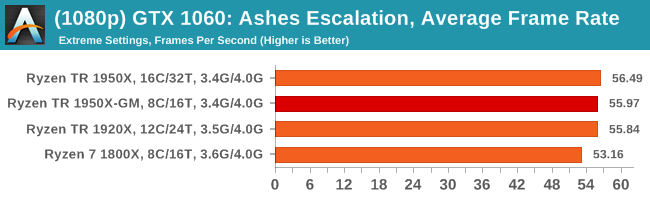

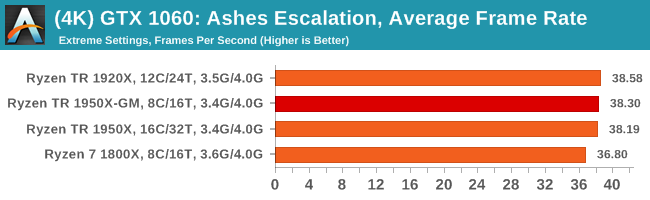

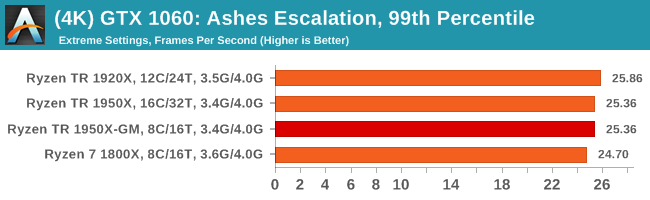

ASUS GTX 1060 Strix 6G Performance

1080p

4K

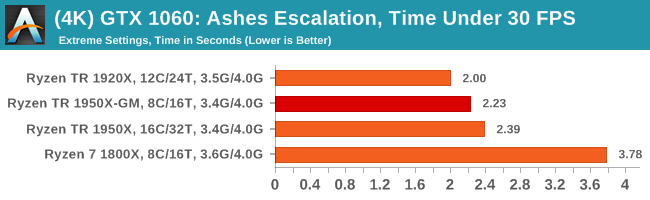

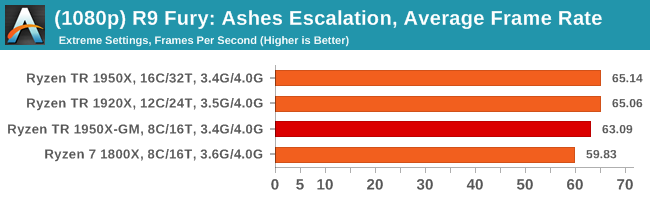

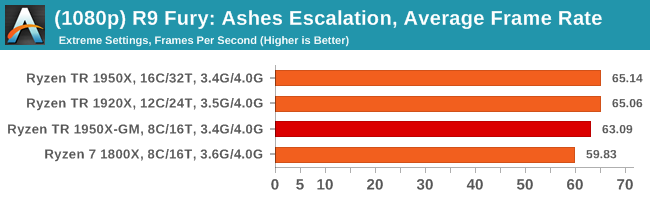

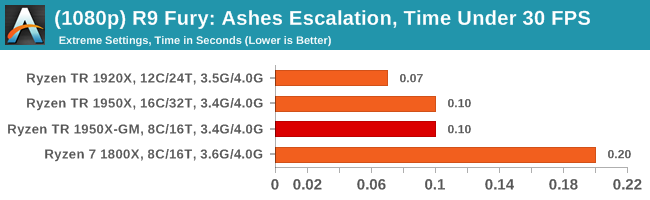

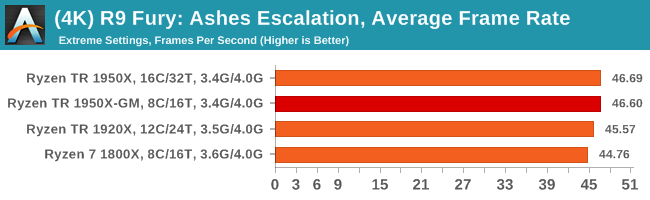

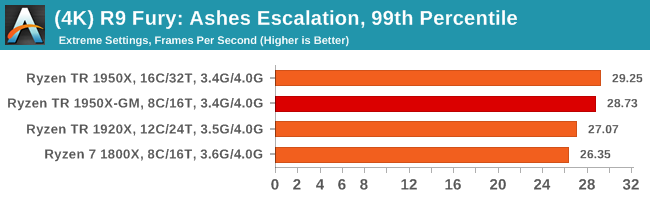

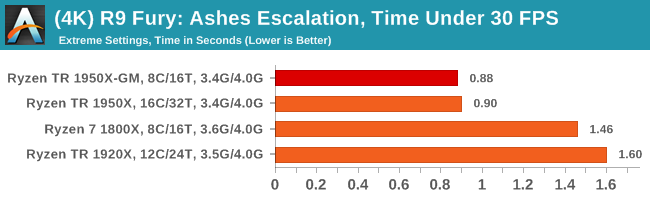

Sapphire Nitro R9 Fury 4G Performance

1080p

4K

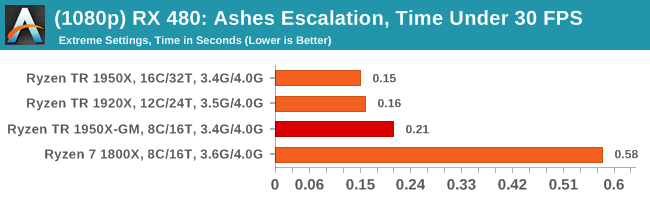

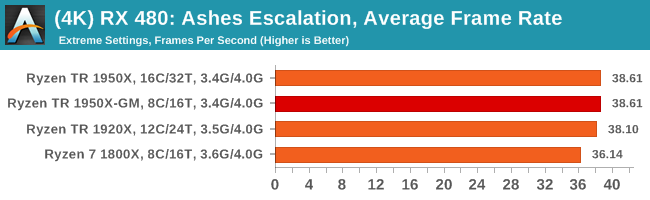

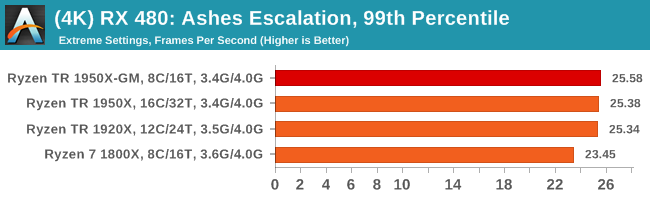

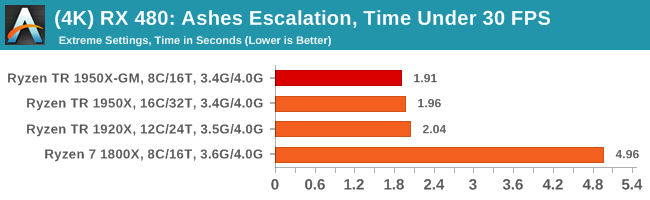

Sapphire Nitro RX 480 8G Performance

1080p

4K

104 Comments


peevee - Friday, August 18, 2017 - link

Compilation scales even on multi-CPU machines. With much higher communication latencies.

In general, compilers running in parallel on MSVC (with MSBuild) run in different processes, they don't write into each other's address spaces and so do not need to communicate at all.

Quit making excuses. You are doing something wrong. I am doing development for multi-CPU machines and ON multi-CPU machines for a very long time. YOU are doing something wrong.

peevee - Friday, August 18, 2017 - link

BTW, when you enable NUMA on TR, does Windows 10 recognize it as one CPU group or 2?

gzunk - Saturday, August 19, 2017 - link

It recognizes it as two NUMA nodes.

Alexey291 - Saturday, September 2, 2017 - link

They aren't going to do anything.

All their 'scientific benchmarking' is running the same macro again and again on different hardware setups.

What you are suggesting requires actual work and thought.

Arbie - Thursday, August 17, 2017 - link

As noted by edzieba, the correct phrase (and I'm sure it has a very British heritage) is "The proof of the pudding is in the eating".

Another phrase needing repair: "multithreaded tests were almost halved to the 1950X". Was this meant to be something like "multithreaded tests were almost half of those in Creator mode" (?).

Technically, of course, your articles are really well-done; thanks for all of them.

fanofanand - Thursday, August 17, 2017 - link

Thank you for listening to the readers and re-testing this, Ian!

ddriver - Thursday, August 17, 2017 - link

To sum it up - "game mode" is moronic. It is moronic for amd to push it, and to push TR as a gaming platform, which is clearly neither its peak, nor even its strong point. It is even more moronic for people to spend more than double the money just to have half of the CPU disabled, and still get worse performance than a ryzen chip.

TR is great for prosumers, and represents a tremendous value and performance at a whole new level of affordability. It will do for games if you are a prosumer who occasionally games, but if you are a gamer it makes zero sense. Having AMD push it as a gaming platform only gives "people" the excuse to whine how bad it is at it.

Also, I cannot shake the feeling there should be a better way to limit scheduling to half the chip for games without having to disable the rest, so it is still usable to the rest of the system.

Gothmoth - Thursday, August 17, 2017 - link

first coders should do their job.. that is the main problem today. lazy and uncompetent coders.

eriohl - Thursday, August 17, 2017 - link

Of course you could limit thread scheduling on software level. But it seems to me that there is a perfectly reasonable explanation why Microsoft and the game developers haven't been spending much time optimizing for running games on systems with NUMA.

HomeworldFound - Thursday, August 17, 2017 - link

You can't call a coder that doesn't anticipate a 16 core 32 thread CPU lazy. The word is incompetent btw. I'd like to see you make a game worth millions of dollars and account for this processor, heck any processor with more than six cores.