Retesting AMD Ryzen Threadripper’s Game Mode: Halving Cores for More Performance

by Ian Cutress on August 17, 2017 12:01 PM EST

Ashes of the Singularity Escalation

Seen as the holy child of DirectX12, Ashes of the Singularity (AoTS, or just Ashes) has been the first title to actively explore as many of DirectX12's features as it possibly can. Stardock, the developer behind the Nitrous engine which powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

As a real-time strategy title, Ashes is all about responsiveness during both wide open shots and concentrated battles. With DirectX12 at the helm, the ability to implement more draw calls per second allows the engine to work with substantial unit depth and effects that other RTS titles had to rely on combined draw calls to achieve, making some combined unit structures ultimately very rigid.

Stardock clearly understands the importance of an in-game benchmark, ensuring that such a tool was available and capable from day one. With all the additional DX12 features in use, being able to characterize how they affected the title was important for the developer. The in-game benchmark performs a four-minute, fixed-seed battle environment with a variety of shots, and outputs a vast amount of data to analyze.

For our benchmark, we run a fixed v2.11 version of the game due to some peculiarities of the splash screen added after the merger with the standalone Escalation expansion, and have an automated tool to call the benchmark on the command line. (Prior to v2.11, the benchmark also supported 8K/16K testing, however v2.11 has odd behavior which nukes this.)

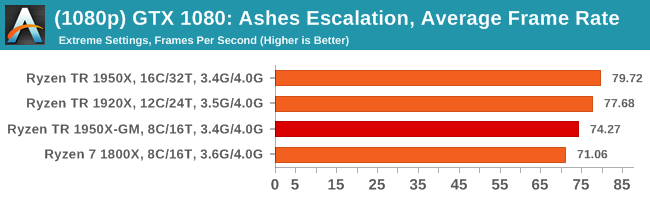

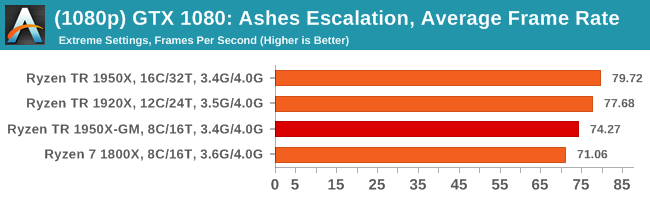

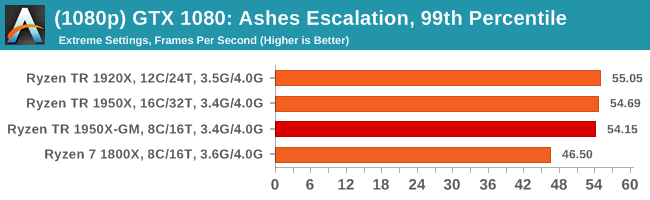

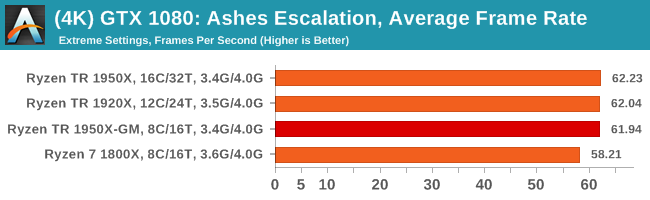

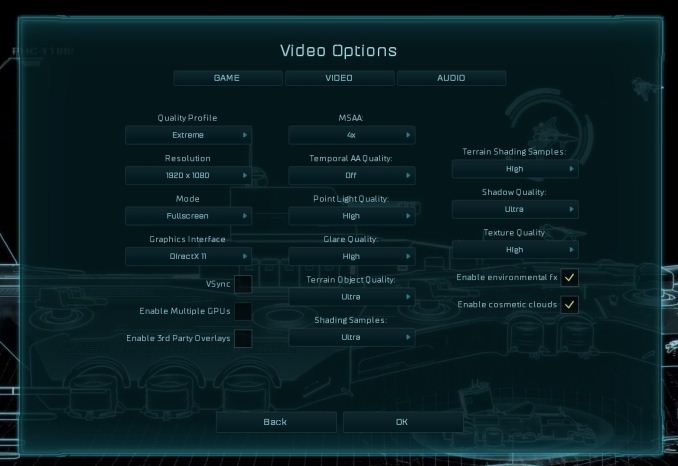

At both 1920x1080 and 4K resolutions, we run the same settings. Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain. There are several presets, from Very Low to Extreme: we run our benchmarks at Extreme settings, and take the frame-time output for our average, percentile, and time under analysis.
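As a rough illustration of how average, percentile, and time under figures can be derived from a frame-time log, here is a minimal Python sketch. The function name, the 60 FPS threshold, the 99th percentile default, and the nearest-rank percentile method are all assumptions made for illustration; this is not the tooling used to produce the results in this article.

```python
def summarize_frame_times(frame_times_ms, threshold_fps=60.0, percentile=99):
    """Summarize per-frame render times (in milliseconds) from one benchmark run.

    Hypothetical helper: `threshold_fps` and `percentile` (an integer from 1 to 100)
    are illustrative defaults, not values taken from the article.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    frame_count = len(frame_times_ms)
    total_ms = sum(frame_times_ms)

    # Average FPS: frames rendered divided by elapsed time,
    # rather than the mean of each frame's instantaneous FPS.
    average_fps = 1000.0 * frame_count / total_ms

    # Nearest-rank percentile of frame time. The rank is ceil(p * n / 100),
    # computed with integer math to avoid floating-point rounding surprises.
    ordered = sorted(frame_times_ms)
    rank = max(1, (percentile * frame_count + 99) // 100)
    percentile_ms = ordered[rank - 1]

    # Time under: total time spent on frames slower than the threshold rate.
    limit_ms = 1000.0 / threshold_fps
    time_under_ms = sum(t for t in frame_times_ms if t > limit_ms)

    return {
        "average_fps": average_fps,
        "percentile_fps": 1000.0 / percentile_ms,
        "time_under_ms": time_under_ms,
        "time_under_share": time_under_ms / total_ms,
    }
```

Calling `summarize_frame_times(times)` on a list of positive frame times returns all four figures in one dictionary. Expressing the percentile as a frame rate (1000 divided by the percentile frame time) keeps it directly comparable with the average.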

All of our benchmark results can also be found in our benchmark engine, Bench.

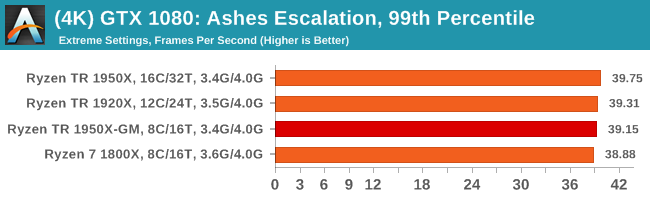

MSI GTX 1080 Gaming 8G Performance

1080p

4K

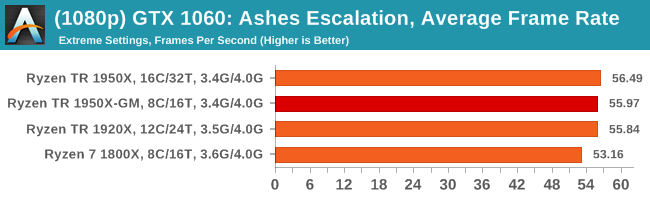

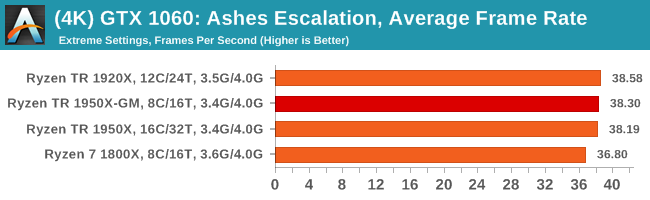

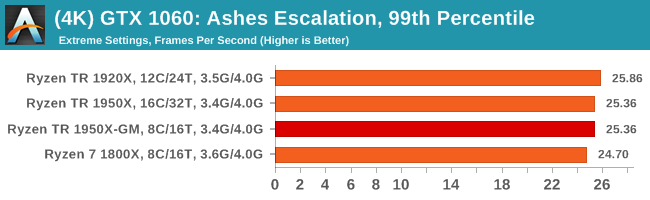

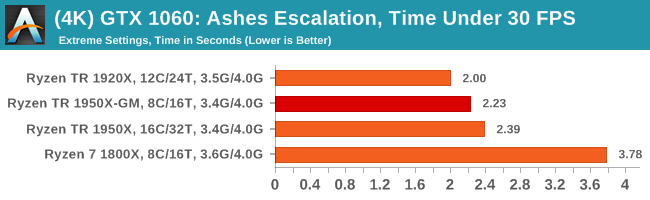

ASUS GTX 1060 Strix 6G Performance

1080p

4K

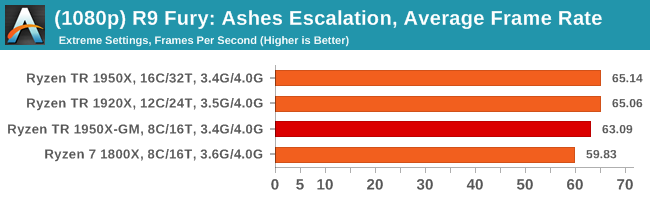

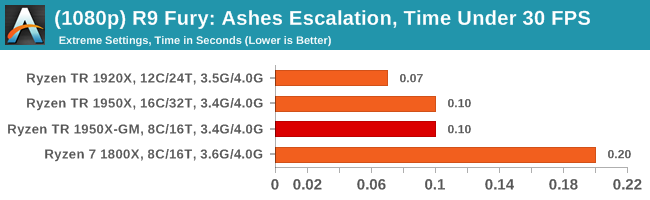

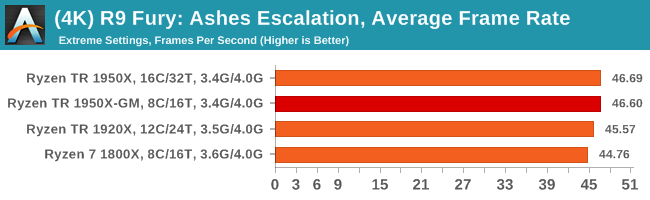

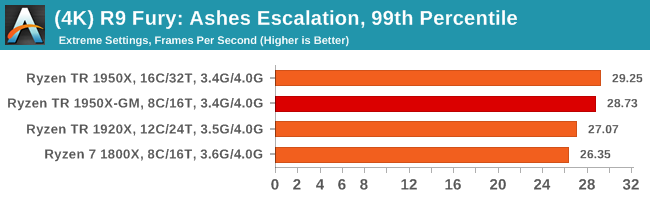

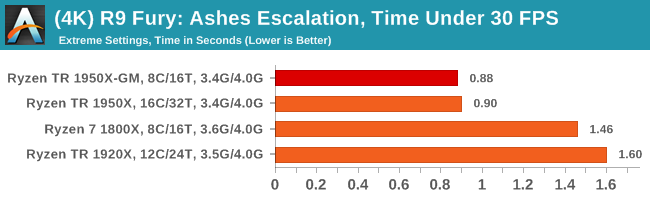

Sapphire Nitro R9 Fury 4G Performance

1080p

4K

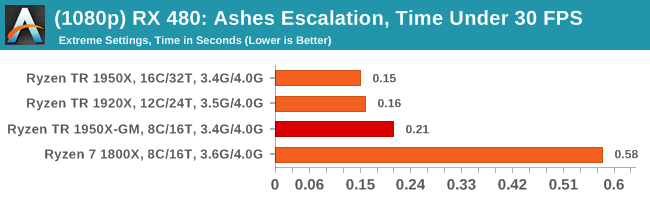

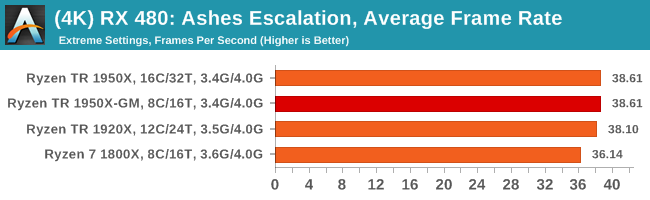

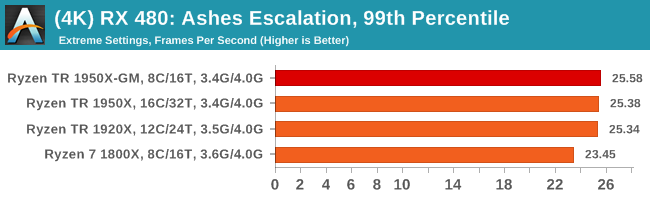

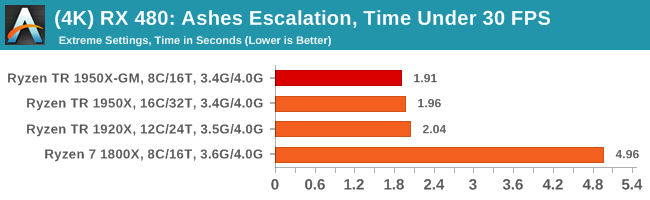

Sapphire Nitro RX 480 8G Performance

1080p

4K

104 Comments

peevee - Friday, August 18, 2017 - link

Of course. Work CPUs must be tested at work. Kiddies are fine with i3s.

Ian Cutress - Sunday, August 20, 2017 - link

https://myhacker.net hacking news hacking tutorials hacking ebooks

IGTrading - Thursday, August 17, 2017 - link

It would be nice and very useful to post some power consumption results at the platform level, if we're doing "extra" additional testing.

It is very important since we're paying for the motherboard just as much as we pay for a Ryzen 5 or even Ryzen 7 processor.

And it will correctly compare the TCO of the X399 platform with the TCO of X299.

jordanclock - Thursday, August 17, 2017 - link

So it looks like AMD should have gone with just disabling SMT for Game Mode. There are way more benefits and it is easier to understand the implications. I haven't seen similar comparisons for Intel in a while, perhaps that can be exploration for Skylake-X as well?

HStewart - Thursday, August 17, 2017 - link

I would think disable SMT would be better, but the reason maybe in designed of link between the two 8 Core dies on chip.

GruenSein - Thursday, August 17, 2017 - link

I'd really love to see a frame time probability distribution (Frame time on x-axis, rate of occurrence on y-axis). Especially in cases with very unlikely frames below a 60Hz rate, the difference between TR and TR-GM/1800X seem most apparent. Without the distribution, we will never know if we are seeing the same distribution but slightly shifted towards lower frame rates as the slopes of the distribution might be steep. However, those frames with frame times above a 60Hz rate might be real stutters down to a 30Hz rate but they might just as well be frames at a 59,7Hz rate. I realize why this threshold was selected but every threshold is quite arbitrary.

MrSpadge - Thursday, August 17, 2017 - link

Does AMD comment on the update? What's their reason for choosing 8C/16T over 16C/16T?

> One could postulate that Windows could do something similar with the equivalent of hyperthreads.

They're actually already doing that. Loading 50% of all threads on an SMT machine will result in ~50% average load on every logical core, i.e. all physical cores are only working on 1 thread at a time.

I know mathematically other schedulings are possible, leading to the same result - but by now I think it's common knowledge that the default Win scheduler works like that. Hence most lightly threaded software is indifferent to SMT. Except games.

NetMage - Sunday, August 20, 2017 - link

Then why did SMT mode show differences from Creator mode in the original review?

Dribble - Thursday, August 17, 2017 - link

No one is ever going to run game mode - why buy a really expensive chip and then disable half of it, especially as you have to reboot to do it? It's only use is to make threadripper look slightly better in reviews. Imo it would be more honest as a reviewer to just run it in creator mode all the time.

jordanclock - Thursday, August 17, 2017 - link

The point is compatibility, as mentioned in the article multiple times. AMD is offering this as an option for applications (mainly games) that do not run correctly, if at all, on >16 core CPUs.