Sizing Up Servers: Intel's Skylake-SP Xeon versus AMD's EPYC 7000 - The Server CPU Battle of the Decade?

by Johan De Gelas & Ian Cutress on July 11, 2017 12:15 PM EST

Posted in: CPUs, AMD, Intel, Xeon, Enterprise, Skylake, Zen, Naples, Skylake-SP, EPYC

Multi-core SPEC CPU2006

For the record, we do not believe that the SPEC CPU "Rate" metric has much value for estimating server CPU performance. Most applications do not run lots of completely separate processes in parallel; there is at least some interaction between the threads. But since the benchmark below caused so much discussion, we wanted to satisfy the curiosity of our readers.

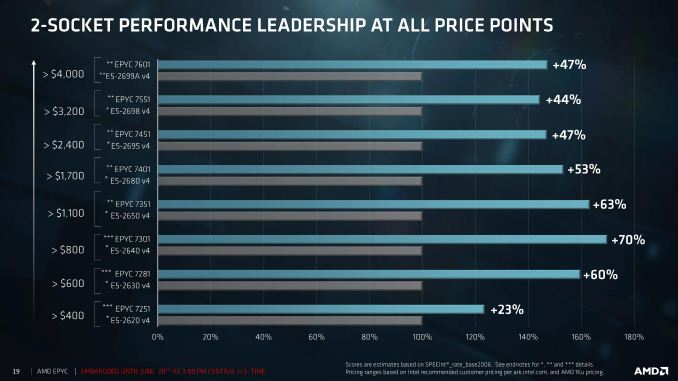

Does the EPYC 7601 really have 47% more raw integer power? Let us find out. Please note, though, that you are looking at officially invalid base SPEC rate runs, as we still have to figure out how to tell the SPEC software that our "invalid" flag "-Ofast" is not invalid at all. We did run the required 3 iterations, though.

| Subtest | Application type | Xeon E5-2699 v4 @ 2.8 GHz | Xeon 8176 @ 2.8 GHz | EPYC 7601 @ 2.7 GHz | EPYC vs Broadwell-EP | EPYC vs Skylake-SP |
|---|---|---|---|---|---|---|
| 400.perlbench | Spam filter | 1470 | 1980 | 2020 | +37% | +2% |
| 401.bzip2 | Compression | 860 | 1120 | 1280 | +49% | +14% |
| 403.gcc | Compiling | 960 | 1300 | 1400 | +46% | +8% |
| 429.mcf | Vehicle scheduling | 752 | 927 | 837 | +11% | -10% |
| 445.gobmk | Game AI | 1220 | 1500 | 1780 | +46% | +19% |
| 456.hmmer | Protein seq. analyses | 1220 | 1580 | 1700 | +39% | +8% |
| 458.sjeng | Chess | 1290 | 1570 | 1820 | +41% | +16% |
| 462.libquantum | Quantum sim | 545 | 870 | 1060 | +94% | +22% |
| 464.h264ref | Video encoding | 1790 | 2670 | 2680 | +50% | -0% |
| 471.omnetpp | Network sim | 625 | 756 | 705 (*) | +13% | -7% |
| 473.astar | Pathfinding | 749 | 976 | 1080 | +44% | +11% |
| 483.xalancbmk | XML processing | 1120 | 1310 | 1240 | +11% | -5% |

(*) We had to run 471.omnetpp with 64 threads on EPYC: when running at 128 threads, it gave errors. Once solved, we expect performance to be 10-20% higher.

Ok, first a disclaimer. The SPECint rate test is likely unrealistic. If you start up 88 to 128 instances, you create a massive bandwidth bottleneck and a consistent CPU load of 100%, neither of which is very realistic for most integer applications. You have no synchronization going on, so this is really the ideal case for a processor such as the AMD EPYC 7601. The rate test estimates more or less the peak integer crunching power available, ignoring many subtle scaling problems that most integer applications have.
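For reference, the 88 to 128 range lines up with one copy per hardware thread on the dual-socket systems in the table. The sketch below works that out. The core counts (22, 28 and 32 per socket) and two-way SMT come from the public specifications of these CPUs rather than from the text above, and the helper name is made up for illustration.

```python
# Hardware threads per system = sockets * cores per socket * SMT threads per core.
# Core counts are assumptions taken from public spec sheets, not from this page.
SYSTEMS = {
    "Xeon E5-2699 v4": {"sockets": 2, "cores": 22, "smt": 2},
    "Xeon 8176": {"sockets": 2, "cores": 28, "smt": 2},
    "EPYC 7601": {"sockets": 2, "cores": 32, "smt": 2},
}


def rate_copies(spec):
    """Hypothetical helper: one SPEC rate copy per hardware thread."""
    return spec["sockets"] * spec["cores"] * spec["smt"]


copies = {name: rate_copies(spec) for name, spec in SYSTEMS.items()}
for name, n in copies.items():
    print(f"{name}: {n} copies")
```

Because the copies never talk to each other, the result scales with thread count in a way that synchronized server workloads generally will not.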

Nevertheless, AMD's claim was not far-fetched. On average, and using a "neutral" compiler with reasonable compiler settings, the EPYC 7601 has about 40% more "raw" integer processing power than the Xeon E5-2699 v4 (42% if you take into account that our omnetpp score will be higher once we fix the 128-instance issue), and is even about 6% faster than the Xeon 8176. Don't expect those numbers to be reached in most real integer applications, though. Still, it shows how much progress AMD has made.
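The article does not say how the averages were computed, so the following is only a sketch of one plausible reading: the arithmetic mean of the per-subtest gains, using the scores from the table. The geometric mean, which SPEC itself uses for composite scores, is included for comparison. The function names and the `omnetpp_scale` parameter are illustrative, and the 1.15 factor is simply the midpoint of the 10-20% uplift the authors expect for 471.omnetpp.

```python
from math import exp, log

# (Xeon E5-2699 v4, Xeon 8176, EPYC 7601) scores copied from the table above.
SCORES = {
    "400.perlbench": (1470, 1980, 2020),
    "401.bzip2": (860, 1120, 1280),
    "403.gcc": (960, 1300, 1400),
    "429.mcf": (752, 927, 837),
    "445.gobmk": (1220, 1500, 1780),
    "456.hmmer": (1220, 1580, 1700),
    "458.sjeng": (1290, 1570, 1820),
    "462.libquantum": (545, 870, 1060),
    "464.h264ref": (1790, 2670, 2680),
    "471.omnetpp": (625, 756, 705),
    "473.astar": (749, 976, 1080),
    "483.xalancbmk": (1120, 1310, 1240),
}
BROADWELL, SKYLAKE, EPYC = 0, 1, 2


def epyc_ratios(baseline, omnetpp_scale=1.0):
    """EPYC score divided by the baseline score, per subtest.

    omnetpp_scale is an assumed uplift applied to EPYC's 471.omnetpp score.
    """
    ratios = []
    for name, row in SCORES.items():
        scale = omnetpp_scale if name == "471.omnetpp" else 1.0
        ratios.append(row[EPYC] * scale / row[baseline])
    return ratios


def mean_gain(baseline, omnetpp_scale=1.0):
    """Arithmetic mean of the per-subtest gains, in percent."""
    ratios = epyc_ratios(baseline, omnetpp_scale)
    return (sum(ratios) / len(ratios) - 1.0) * 100.0


def geomean_gain(baseline):
    """Geometric mean of the per-subtest ratios, expressed as a percent gain."""
    ratios = epyc_ratios(baseline)
    return (exp(sum(log(r) for r in ratios) / len(ratios)) - 1.0) * 100.0


vs_broadwell = mean_gain(BROADWELL)
vs_skylake = mean_gain(SKYLAKE)
vs_broadwell_fixed = mean_gain(BROADWELL, omnetpp_scale=1.15)
geo_vs_broadwell = geomean_gain(BROADWELL)

print(f"EPYC vs Broadwell-EP, arithmetic mean: {vs_broadwell:+.1f}%")
print(f"EPYC vs Skylake-SP, arithmetic mean:   {vs_skylake:+.1f}%")
print(f"EPYC vs Broadwell-EP, omnetpp +15%:    {vs_broadwell_fixed:+.1f}%")
print(f"EPYC vs Broadwell-EP, geometric mean:  {geo_vs_broadwell:+.1f}%")
```

With the table's rounded scores, the arithmetic means land close to the quoted 40% and 6%, while the geometric mean against Broadwell-EP comes out somewhat lower because it gives the 462.libquantum outlier less weight.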

219 Comments

ddriver - Wednesday, July 12, 2017

LOL, butthurt Intel fanboy claims that the only unbiased benchmark in the review is THE MOST biased benchmark in the review, the one that was done entirely for the purpose of helping Intel save face. As if many-core servers running 128 gigs of RAM are primarily used to run 16 megabyte databases in the real world. That's right!

Beany2013 - Tuesday, July 11, 2017

Sure, test against Ubuntu 17.04 if you only plan to have your server running till January, when it goes end of life. That's not a joke - non-LTS Ubuntu releases get nine months of patches and that's it. https://wiki.ubuntu.com/Releases

16.04 is supported till 2021, it's what will be used in production by people who actually *buy* and *use* servers and as such it's a perfectly representative benchmark for people like me who are looking at dropping six figures on this level of hardware soon and want to see how it performs on...goodness, realistic workloads.

rahvin - Wednesday, July 12, 2017

This is a silly argument. No one running these is going to be running bleeding-edge software, compiling special kernels or putting optimizing compiler flags on anything. Enterprise runs on stable, verified software and OSes. Your typical enterprise Linux install is something like RHEL 6 or 7 or its variants (some are still running RHEL 5 with a 2.6 kernel!). Both RHEL 6 and 7 have kernels that are 5+ years old, and if you go with 6 it's closer to 10 years old.

Enterprises don't run bleeding-edge software or compile with aggressive flags; these things create regressions and difficult-to-trace bugs that cost time and lots of money. Your average enterprise is going to care about one thing: performance/watt running something like a LAMP stack or database on a standard vanilla distribution like RHEL. Any large enterprise is going to take a review like this and use it as a data point when they buy a server, then put a standard image on it and test their own workloads' perf/watt.

Some of the enterprises that are more fault tolerant might run something as bleeding edge as an Ubuntu Server LTS release. This review is a fair review for the expected audience. Yes, every writer has a little bias, but I'd dare you to find it in this article. The fact that the fanbois on both sides are complaining indicates how fair the review is.

jjj - Tuesday, July 11, 2017

Do remember that the future is chiplets, even for Intel. The two are approaching that a bit differently, as AMD had more cost constraints, so they went with a 4-core CCX that can be reused in many different products.

Highly doubt that AMD ever goes back to a very large die and it's not like Intel could do a monolithic 48 cores on 10nm this year or even next year and that would be even harder in a competitive market. Sure if they had a Cortex A75 like core and a lot less cache, that's another matter but they are so far behind in perf/mm2 that it's hard to even imagine that they can ever be that efficient.

coder543 - Tuesday, July 11, 2017

Never heard the term "chiplet" before. I think AMD has adequately demonstrated the advantages (much higher yield -> lower cost, more than adequate performance), but I haven't heard Intel ever announce that they're planning to do this approach. After the embarrassment that they're experiencing now, maybe they will.

Ian Cutress - Tuesday, July 11, 2017

Look up Intel's EMIB. It's an obvious route to take in the future as process nodes get smaller.

Threska - Saturday, July 22, 2017

We may see their interposer technology (like that used with their GPUs) being used.

jeffsci - Tuesday, July 11, 2017

Benchmarking NAMD with pre-compiled binaries is pretty silly. If you can't figure out how to compile it for each and every processor of interest, you shouldn't be benchmarking it.

CajunArson - Tuesday, July 11, 2017

On top of all that, they couldn't even be bothered to download and install a (completely free) vanilla version that was released this year. Their version of NAMD 2.10 is from *2014*! http://www.ks.uiuc.edu/Development/Download/downlo...

tamalero - Tuesday, July 11, 2017

Do high-level servers update their versions constantly? I know that most of the critical stuff only gets patched for serious vulnerabilities and isn't constantly updated to newer versions just because they are available.