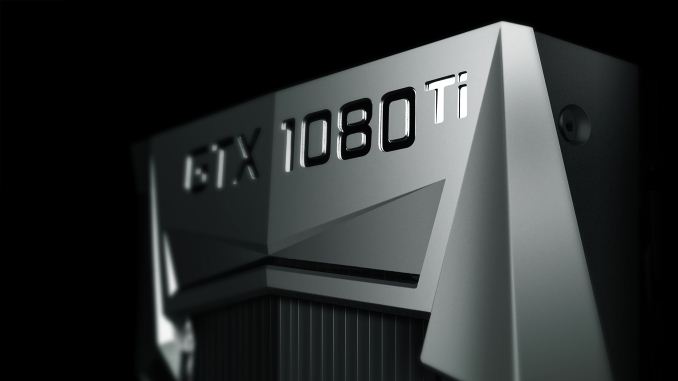

The NVIDIA GeForce GTX 1080 Ti Founder's Edition Review: Bigger Pascal for Better Performance

by Ryan Smith on March 9, 2017 9:00 AM EST

Final Words

Bringing this review to a close, the launch of the GeForce GTX 1080 Ti Founder’s Edition gives NVIDIA a chance to set their pace and tone for the rest of 2017. After a fantastic 2016 powered by Pascal, NVIDIA is looking to repeat that success this year. And that success starts with a very strong launch of what is NVIDIA’s new flagship GeForce card.

Because the GeForce GTX 1080 Ti Founder’s Edition isn’t NVIDIA’s first GP102-based product – even if it is their first GeForce product – I don’t think anything we’ve seen today is going to catch anyone by surprise. In fact, given that this is now the third time they’ve released a 250 Watt Ti refresh at the high end, none of this should be surprising. At this point NVIDIA has their GeForce launches down to an art, and that ability to execute so well on these kinds of launches is part of the reason that 2016 was such a banner year for the company.

GeForce GTX 1080 Ti: Average Performance Gains

| Card | 4K | 1440p |
|---|---|---|
| vs. GTX 1080 | +32% | +28% |
| vs. GTX 980 Ti | +74% | +68% |
| vs. GTX 780 Ti | +154% | +154% |

Taking a look at the numbers, as a mid-generation refresh of their high-end products, the GTX 1080 Ti delivers around 32% better performance than the GTX 1080 at 4K, and 28% better performance at 1440p. NVIDIA said they were going to get a 35% improvement over the GTX 1080 with the GTX 1080 Ti, and while our numbers don’t quite match that, they are close to the mark.

For GTX 980 Ti and GTX 780 Ti owners then, who are the most likely groups to be in the market for a $699 video card and looking to upgrade, the GTX 1080 Ti should prove a suitable card. Relative to the last-generation GTX 980 Ti, the GTX 1080 Ti offers 74% better performance at 4K and 68% better performance at 1440p. This is very similar to the kinds of gains we saw in the GTX 1080 over the GTX 980 last year, and in fact is a bit better than what the GTX 980 Ti did to its predecessors.

Speaking of which, it’s now been three-and-a-half years since the GTX 780 Ti launch, and GTX 1080 Ti’s performance shows it. At both 4K and 1440p, NVIDIA’s card offers just over 2.5 times the performance of their Kepler-based powerhouse. Internally, NVIDIA tends to plan for a two to four year upgrade cadence on their video cards, and 2017 is going to be the year they push remaining GTX 700 series owners to upgrade through a combination of product launches like the GTX 1080 Ti and better pricing. If you didn’t already upgrade to a Pascal card last year, then your benefit for waiting a year is 32% better performance for the same price.
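To make the relationship between the percentage gains and the "times the performance" figures explicit, here is a minimal Python sketch. It uses only the average gains quoted in this review; the names `gains_percent` and `gain_to_multiplier` are illustrative and not part of any benchmark tooling.

```python
# Average GTX 1080 Ti performance gains (in percent) as quoted in this review.
gains_percent = {
    "GTX 1080": {"4K": 32, "1440p": 28},
    "GTX 980 Ti": {"4K": 74, "1440p": 68},
    "GTX 780 Ti": {"4K": 154, "1440p": 154},
}


def gain_to_multiplier(gain_percent):
    """Convert a '+N%' gain into a 'times the performance' multiplier."""
    return 1 + gain_percent / 100


# Build the same table expressed as multipliers instead of percentages.
multipliers = {
    card: {res: gain_to_multiplier(gain) for res, gain in by_res.items()}
    for card, by_res in gains_percent.items()
}

for card, by_res in multipliers.items():
    cells = ", ".join(f"{res}: {m:.2f}x" for res, m in by_res.items())
    print(f"vs. {card} -> {cells}")
```

A +154% gain works out to a multiplier of about 2.54, which is where "just over 2.5 times the performance" comes from.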

Relative performance aside, in terms of absolute performance I feel like NVIDIA is finally reaching the point where they can offer no-compromises 4K gaming. While both NVIDIA and AMD pushed 4K hard on their 28nm generation of products, even parts like the GeForce GTX 980 Ti and Radeon Fury X weren’t quite fast enough for the task. 4K gaming in 2015 meant making compromises between image quality and framerates. GTX 1080 Ti on the other hand is the first card to crack 60fps at 4K in a few of our games, and it comes very close to doing so in a few others. While performance requirements for video games are always a moving target (and always moving up, at that), I think with the FinFET generation we’re finally at the point where 4K gaming is practical. And that’s in an “all the frames, all the quality” sense, not by using checkerboarding and other image scaling techniques being used by the game consoles to stretch into 4K.

Overall then, the GeForce GTX 1080 Ti is another well-executed launch by NVIDIA. The $699 card isn’t for the faint of wallet, but if you can afford to spend that much money on the hobby, then the GTX 1080 Ti is unrivaled in performance.

Finally, looking at the big picture, this launch further solidifies NVIDIA’s dominance of the high-end video card market. The GTX 1080 has gone unchallenged in the last 10 months, and with the GTX 1080 Ti NVIDIA is extending that performance lead even farther. As I mentioned towards the start of this article, the launch of the GTX 1080 Ti is both a chance for NVIDIA to take a victory lap for 2016 and to set the stage for the rest of the year. For now it puts them that much farther ahead of AMD and gives them a chance to start 2017 on a high note. But GTX 1080 Ti won’t go unanswered forever, and later on this year we’re going to get a chance to see where AMD’s Vega fits into the big picture. I for one am hoping for an exciting year.

161 Comments

eddman - Friday, March 10, 2017 - link

So we moved from "nvidia pays devs to deliberately not optimize for AMD" to "nvidia works with devs to optimize the games for their own hardware, which might spoil them and result in them not optimizing for AMD properly".

How is that bribery, illegal? If they did not prevent the devs from optimizing for AMD then nothing illegal happened. It was the devs' own doing.

ddriver - Friday, March 10, 2017 - link

Nope, there is an implicit, unspoken condition to receiving support from nvidia. To lazy slobs, that's welcome, and most devs are lazy slobs. Their line of reasoning is quite simple:

"Working to optimize for amd is hard, I am a lazy and possibly lousy developer, so if they don't do that for me like nvidia does, I won't do that either, besides that would anger nvidia, since they only assist me in order to make their hardware look better, if I do my job and optimize for amd and their hardware ends up beating nvidia's, I risk losing nvidia's support, since why would they put money into helping me if they don't get the upper hand in performance. Besides, most people use nvidia anyway, so why even bother. I'd rather be taken to watch strippers again than optimize my software."

Manipulation, bribery and extortion. nvidia uses its position to create a situation in which game developers have a lot to profit from NOT optimizing for amd, and a lot to lose if they do. Much like intel did with its exclusive discounts. OEMs weren't exactly forced to take those discounts in exchange for not selling amd, they did what they knew would please intel to get rewarded for it. Literally the same thing nvidia does. Game developers know nvidia will be pleased to see their hardware getting an unfair performance advantage, and they know amd doesn't have the money to pamper them, so they do what is necessary to please nvidia and ensure they keep getting support.

akdj - Monday, March 13, 2017 - link

Where to start?

Best not to start, as you are completely, 100% insane and I've spent two and a half 'reads' of your replies... trying to grasp WTH you're talking about and I'm lost

Totally, completely lost in your conspiracy theories about two major GPU silicon builders while being apparently and completely clueless about ANY of it!

Lol - Wow, I'm truly astounded that you were able to make up that much BS ...

cocochanel - Friday, March 10, 2017 - link

You forgot to mention one thing. Nvidia tweaking the drivers to force users into hardware updates. Say, there is a bunch of games coming up this Christmas. If you have a card that's 3-4 years old, they release a new driver which performs poorly on your card (on those games) and another driver which performs way better on the newest cards. Then, if you start crying, they say: It's an old card, pal, why don't you buy a new one!

With DX11 they could do that a lot. With DX12 and Vulkan it's a lot harder. Most if not all optimizations have to be done by the game programmers. Very little is left to the driver.

eddman - Friday, March 10, 2017 - link

That's how the ENTIRE industry is. Do you really expect developers to optimize for old architectures? Everyone does it, nvidia, AMD, intel, etc.

It is not deliberate. Companies are not going to spend time and money on old hardware with little market share. That's how it's been forever.

Before you say that's not the case with radeons, it's because their GCN architecture hasn't changed dramatically since its first iteration. As a result, any optimization done for the latest GCN, affects the older ones to some extent too.

cocochanel - Friday, March 10, 2017 - link

There is good news for the future. As DX12 and Vulkan become mainstream APIs, game developers will have to roll up their sleeves and sweat it hard. Architecturally, these APIs are totally different from the ground up and both trace their origin from Mantle. And Mantle was the biggest advance in graphics APIs in a generation. The good days for lazy game developers are coming to an end, since these new APIs put just about everything back into their hands whether they like it or not. Tweaking the driver won't make much of a difference. Read the APIs' documentation.

cmdrdredd - Monday, March 13, 2017 - link

Yes hopefully this will be the future where games are the responsibility of the developer. Just like on Consoles. I know people hate consoles sometimes but the closed platform shows which developers have their stuff together and which are lazy bums because Sony and Microsoft don't optimize anything for the games.

Nfarce - Friday, March 10, 2017 - link

Always amusing watching the tin foil hat Nvidia conspiracy nuts talk. Here's my example: working on Project Cars as an "early investor." Slightly Mad Studios gave both Nvidia and AMD each 12 copies of the beta release to work on, the same copy I bought. Nvidia was in constant communication with SMS developers and AMD was all but never heard from. After about six months, Nvidia had a demo of the racing game ready for a promotion of their hardware. Since AMD didn't take Project Cars seriously with SMS, Nvidia was able to get the game tweaked better for Nvidia. And SMS hat-tipped Nvidia with having billboards in the game showing Nvidia logos.

Of course all the AMD fanboys claimed unfair competition and the usual whining when their GPUs do not perform as well in some games as Nvidia (they amazingly stayed silent when DiRT Rally, another development I was involved with, ran better on AMD GPUs and had AMD billboards).

ddriver - Friday, March 10, 2017 - link

So was there anything preventing the actual developers from optimizing the game? They didn't have nvidia and amd hardware, so they sent betas to the companies to profile things and see how it runs?

How silly one must be to expect that nvidia - a company that rakes in billions every year, and amd - a company that is in the red most of the time and has lost billions, will have the same capacity to do game developers' jobs for them?

It is the game developer's job to optimize. Alas, as it seems, nvidia has bred a new breed of developers - those who do their job half-assedly and then wait on them to optimize, conveniently creating unfair advantage to their hardware.

ddriver - Friday, March 10, 2017 - link

Also talking about fanboys - I am not that. Yes, I am running dozens of amd gpus, and I don't see myself buying any nvidia product any time soon, but that's only because they offer superior value for what I need them for.

I don't give amd extra credit for offering a better value. I know this is not what they want. It is what they are being forced into.

I am in a way grateful to nvidia for sandbagging amd, because this way I can get much better value products. If things were square between the two, and all games were equally optimized, then both companies would offer products with approximately identical value.

Which I would hate, because I'd lose the 2-3x better value for the money I currently get with amd. I benefit and profit from nvidia being crooks, and I am happy that I can do that.

So nvidia, keep doing what you are doing. I am not really objecting, I am simply stating the facts. Of course, nvidia fanboys would have a problem understanding that, and a problem with anyone tarnishing the good name of that helpful awesome and paying for strippers company.