The AMD Zen and Ryzen 7 Review: A Deep Dive on 1800X, 1700X and 1700

by Ian Cutress on March 2, 2017 9:00 AM EST

Conclusion

The march towards Ryzen has been a long road for AMD. Anyone creating a new CPU microarchitecture deserves credit, as designing something so complex requires millions of hours of hard graft. Nonetheless, hard graft doesn’t always guarantee success, and AMD’s targets of low power, small area, and high performance while using x86 instructions set a spectacularly high bar, especially against a large blue incumbent that regularly outspends them on R&D many times over.

Through the initial disclosures on the Zen microarchitecture, one thing was clear when speaking to senior staff such as Dr. Lisa Su, Mark Papermaster, Jim Anderson, Mike Clark, Sam Naffziger and others: they had the quiet confidence an engineer gets when they know their product is not only good but competitive. The PR campaign leading up to launch has been managed (we assume) so that information trickles down the pipe and keeps people on the edge of their seats. Given the interest in Ryzen, it has worked.

Ryzen is AMD’s first foray into CPUs built on 14nm with FinFETs, and it also brings a new microarchitecture that pulls in optimization methods from previous products such as Excavator/Carrizo and the GPU line. In terms of a tick-tock strategy, such as Intel’s cadence over the last decade, this is both a tick and a tock in one. The Ryzen desktop processors are the first part of that strategy, with server parts coming in Q2 and notebook APUs in 2H17. One of the main concerns with AMD is typically its ability to execute: getting enough good parts out on time and with sufficient performance to merit their launch. As Dr. Su said in our interview, this is one big hurdle, but there are many to come.

| AMD Ryzen 7 SKUs | Cores/Threads | Base/Turbo (GHz) | L3 | TDP | Cost | Launch Date |
|---|---|---|---|---|---|---|
| Ryzen 7 1800X | 8/16 | 3.6/4.0 | 16 MB | 95 W | $499 | 3/2/2017 |
| Ryzen 7 1700X | 8/16 | 3.4/3.8 | 16 MB | 95 W | $399 | 3/2/2017 |
| Ryzen 7 1700 | 8/16 | 3.0/3.7 | 16 MB | 65 W | $329 | 3/2/2017 |

Today’s launch of three CPUs in the Ryzen 7 family will be followed by Ryzen 5 in Q2 and Ryzen 3 later in the year. Ryzen 7 uses a single eight-core die with simultaneous multi-threading (SMT) to provide sixteen threads, with clocks of up to 4.0 GHz on the top Ryzen 7 1800X for $499. Officially, AMD is positioning the 1800X as a direct competitor to Intel’s i7-6900K, an eight-core processor with Hyper-Threading that costs over $1000. In our benchmarks, it’s clear that the two go toe-to-toe.

Analyzing the Results

In the brief time between receiving a sample and publishing this review, we were able to run our new benchmark suite on twelve different Intel CPUs, as well as AMD’s former APU crown holder, the A10-7890K. Throughout the discussion about Ryzen, AMD advertised a 40%+ gain in raw performance per clock over its previous generations, which was raised to 52% when the CPUs were actually announced. Back-of-the-envelope calculations put Ryzen at, or just about at, the level of the high-end desktop Broadwell CPUs, which would make the comparison largely a matter of frequency. That said, Intel is already two generations ahead on its mainstream platform with parts such as the Kaby Lake-based i7-7700K, so it’s going to be an interesting analysis.
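The back-of-the-envelope reasoning above treats single-threaded performance as roughly IPC multiplied by clock speed. A minimal sketch of that first-order model follows. It ignores memory latency, turbo residency and software effects, and the IPC values are normalised placeholders (the old core is set to 1.0), not measured data; the function name is hypothetical.

```python
def relative_st_performance(ipc_a: float, freq_a_ghz: float,
                            ipc_b: float, freq_b_ghz: float) -> float:
    """Single-thread performance of chip A relative to chip B.

    First-order model only: performance ~ IPC x clock frequency.
    """
    return (ipc_a * freq_a_ghz) / (ipc_b * freq_b_ghz)


# Same core (equal IPC), different turbo clocks from the SKU table:
# Ryzen 7 1800X (4.0 GHz) vs Ryzen 7 1700 (3.7 GHz). Only frequency differs.
same_core = relative_st_performance(1.0, 4.0, 1.0, 3.7)

# Equal clocks with AMD's claimed 52% IPC uplift over its previous core
# (IPC normalised so the old core is 1.0; an illustrative assumption).
ipc_uplift = relative_st_performance(1.52, 4.0, 1.0, 4.0)

print(f"1800X vs 1700 at turbo: {same_core:.3f}x")
print(f"52% IPC uplift at equal clocks: {ipc_uplift:.2f}x")
```

When IPC is roughly equal, as the estimate above suggests for Ryzen versus high-end desktop Broadwell, the model collapses to the clock ratio, which is why the comparison becomes a case of frequency.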

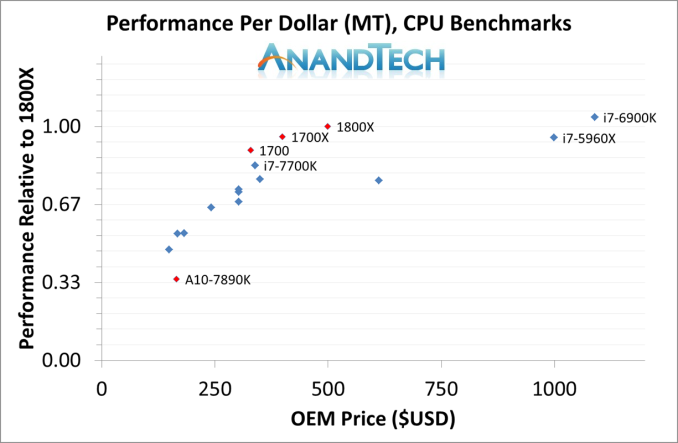

Multi-Threaded Tests

First up, AMD’s strength in our testing was clearly in the multithreaded benchmarks. Given that the microarchitectures of AMD’s and Intel’s high-performance x86 cores are somewhat similar, for the most part they land in a similar performance window. Intel’s design is slightly wider and has a more integrated cache hierarchy, meaning it wins out on ‘edge cases’ that might be the result of poorly written code. As one might expect, it takes a lot of R&D to cater for particular edge cases.

But as a workstation-oriented core design, the Zen microarchitecture pulls no punches. As long as the software doesn’t need heavy AVX code and manages its memory carefully (avoiding false sharing, for example), Ryzen’s performance in conventional multi-threaded CPU environments means AMD is back in the game. This bodes well for the Zen microarchitecture when it is applied to server environments, especially those that require virtualization.
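False sharing happens when threads on different cores write to separate variables that happen to sit on the same cache line, so the line bounces between cores even though no data is logically shared. The sketch below models memory layout only, not timing (Python's GIL would hide the effect in a benchmark). It assumes 64-byte cache lines and per-thread 8-byte counters packed into one array; both are illustrative choices, and the helper names are hypothetical.

```python
from collections import Counter

CACHE_LINE_BYTES = 64  # assumption: 64-byte cache lines (typical for x86, including Zen)


def counter_offsets(n_threads: int, counter_size: int, padded: bool) -> list[int]:
    """Byte offsets of per-thread counters laid out in one contiguous array.

    Unpadded counters are packed back to back; padded counters are each
    rounded up to a whole number of cache lines so no two share a line.
    """
    if padded:
        lines_per_counter = -(-counter_size // CACHE_LINE_BYTES)  # ceiling division
        stride = lines_per_counter * CACHE_LINE_BYTES
    else:
        stride = counter_size
    return [i * stride for i in range(n_threads)]


def shared_lines(offsets: list[int], counter_size: int) -> set[int]:
    """Cache lines touched by more than one counter: false-sharing candidates."""
    touches = Counter()
    for off in offsets:
        first = off // CACHE_LINE_BYTES
        last = (off + counter_size - 1) // CACHE_LINE_BYTES
        touches.update(range(first, last + 1))
    return {line for line, count in touches.items() if count > 1}


packed = counter_offsets(8, 8, padded=False)
aligned = counter_offsets(8, 8, padded=True)
print("packed layout shares lines:", shared_lines(packed, 8))
print("aligned layout shares lines:", shared_lines(aligned, 8))
```

Eight packed 8-byte counters all land on one line, so every write from any thread contends for it; padding trades 7x the memory for independent lines per thread.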

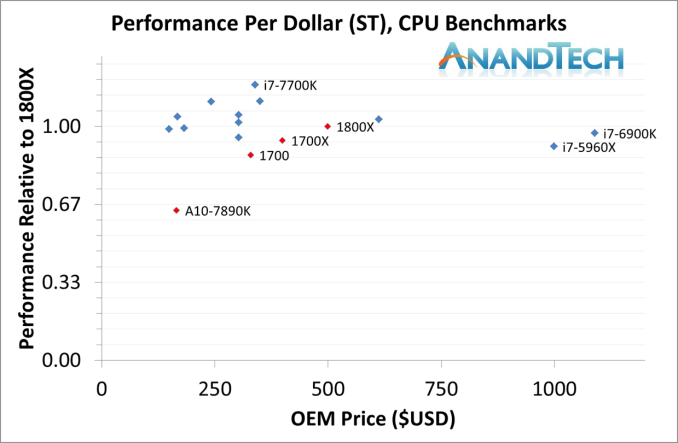

Single Threaded Tests

Since the launch of Bulldozer, AMD has always been a step behind on single-threaded performance. ST performance is the holy grail of x86 core design, but getting there often requires advanced features that can burn extra power. AMD’s message on this, reiterated in our interview with Dr. Su, is that AMD has the ability to innovate. This is why AMD promotes features such as SenseMI for its new advanced prefetch algorithms, why implementing a micro-op cache in the core was a big thing, and why having double the L2 cache of Intel’s comparable parts was important to the story. Nonetheless, AMD hammered a 40%+ IPC gain into its narrative, which then grew to 52% at launch. We’ll take a deeper look at this in a separate review, but our single-threaded results show that AMD is back in the fight.
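The details of AMD's SenseMI prefetch algorithms are not covered here, so the following is only a textbook stride prefetcher to illustrate the general idea: watch the address stream and, once the same stride repeats, request the next few lines ahead of demand. The function names and the `degree` parameter are hypothetical.

```python
def stride_prefetch(addresses: list[int], degree: int = 2) -> list[list[int]]:
    """For each access, the addresses a simple stride prefetcher would request.

    A prediction is issued only once the same non-zero stride is seen twice
    in a row; it then requests the next `degree` addresses along that stride.
    """
    predictions = []
    last_addr = None
    last_stride = None
    for addr in addresses:
        issued = []
        if last_addr is not None:
            stride = addr - last_addr
            if stride != 0 and stride == last_stride:
                issued = [addr + stride * k for k in range(1, degree + 1)]
            last_stride = stride
        last_addr = addr
        predictions.append(issued)
    return predictions


def coverage(addresses: list[int], predictions: list[list[int]]) -> float:
    """Fraction of accesses that an earlier prediction had already requested."""
    requested = set()
    hits = 0
    for addr, issued in zip(addresses, predictions):
        if addr in requested:
            hits += 1
        requested.update(issued)
    return hits / len(addresses)


stream = [0, 64, 128, 192, 256]  # sequential 64-byte lines
preds = stride_prefetch(stream)
print(preds)
print(f"coverage: {coverage(stream, preds):.0%}")
```

A regular stream is covered after a short warm-up, while an irregular one (for example pointer chasing) triggers no predictions at all; smarter prefetchers aim to catch more of the patterns this simple scheme misses.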

Most of the data points in this graph come from Intel Kaby Lake processors at various frequencies, but the important thing here is that the Ryzen parts are at least in the mix, sitting above the other eight-core parts on the far right of the graph. Also, the jump from the A10 up to the 1700 is a big generational leap, let alone the 1800X.

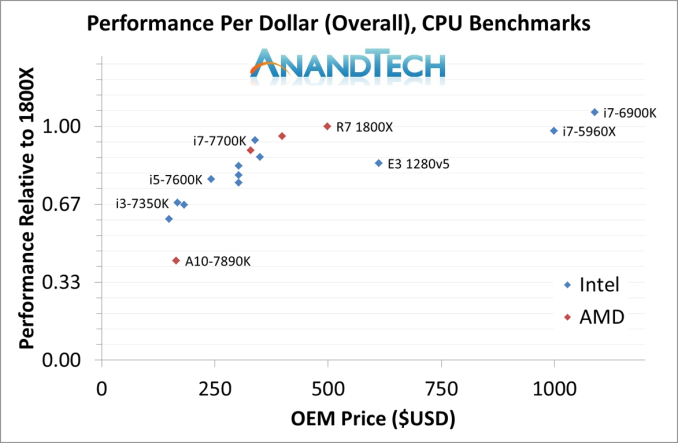

Overall Performance

Putting these two elements into the same graph gives the following:

By this measure of overall performance, it’s clear the Core i7-7700K still offers better price/performance. However, AMD would argue that the competition for the Ryzen 7 parts is on the right, with the i7-6900K and i7-5960X. In our CPU-based results, AMD wins on performance per dollar against those parts hands down.
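Performance per dollar is simply an aggregate score divided by price. The sketch below uses the Ryzen 7 launch prices from the SKU table and treats the i7-6900K as $1000 (the article says "over $1000", so this slightly flatters Intel). The benchmark scores are hypothetical placeholders, not AnandTech's results, and the class and function names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Chip:
    name: str
    score: float      # hypothetical aggregate benchmark score, higher is better
    price_usd: float  # launch price


def perf_per_dollar(chip: Chip) -> float:
    return chip.score / chip.price_usd


def rank_by_value(chips: list[Chip]) -> list[Chip]:
    """Sort chips from best to worst performance per dollar."""
    return sorted(chips, key=perf_per_dollar, reverse=True)


chips = [
    Chip("Core i7-6900K", score=100.0, price_usd=1000.0),  # price: assumed lower bound
    Chip("Ryzen 7 1800X", score=100.0, price_usd=499.0),   # score: assumed parity
    Chip("Ryzen 7 1700", score=90.0, price_usd=329.0),     # score: assumed
]

for chip in rank_by_value(chips):
    print(f"{chip.name}: {perf_per_dollar(chip):.3f} points per dollar")
```

With equal scores the comparison reduces to the price ratio, which is where the "half the cost" argument comes from.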

Some Caveats

Since testing for this review, and waiting a few days to even write the conclusion, there has been much discussion about ways in which AMD’s performance is perhaps being neutered. The list includes discussions around:

- Windows 10 RTC disliking 0.25x multipliers, causing timing issues,

- Software not reading L3 properly (thinking each core has 8MB of L3, rather than 2MB/core),

- Latency within a CCX being regular, but across CCX boundaries having limited bandwidth,

- Static partitioning of some core resources, meaning performance improves when SMT is disabled,

- Ryzen showing performance gains with faster memory, more so than expected,

- Gaming performance, particularly for 240 Hz gaming, being questioned,

- Microsoft’s scheduler not understanding the different CCX core-to-core latencies,

- Windows not scheduling threads within a CCX before moving onto the next CCX,

- Some motherboards having difficulty with DRAM compatibility,

- Performance-related EFI (firmware) updates arriving almost weekly since two weeks before launch.
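Several items in this list concern the CCX layout: a Ryzen 7 die has two core complexes (CCXs) of four cores, each CCX holding 8 MB of the 16 MB L3 (2 MB per core), and communication across the CCX boundary is slower than within one. A minimal sketch of a placement policy that fills one CCX before spilling into the next is below. It assumes physical cores 0-3 sit in CCX 0 and cores 4-7 in CCX 1 and schedules one thread per physical core; real OS numbering and SMT sibling handling vary, and this is not Microsoft's or AMD's scheduler.

```python
CORES_PER_CCX = 4  # Ryzen 7: two CCXs of four cores each
CCX_COUNT = 2
L3_PER_CCX_MB = 16 // CCX_COUNT  # 8 MB per CCX, i.e. 2 MB per core


def ccx_of_core(core: int) -> int:
    """Assumed numbering: cores 0-3 in CCX 0, cores 4-7 in CCX 1."""
    return core // CORES_PER_CCX


def place_threads(n_threads: int, fill_ccx_first: bool = True) -> list[int]:
    """Pick a physical core for each thread, one thread per core (no SMT)."""
    per_ccx = [list(range(c * CORES_PER_CCX, (c + 1) * CORES_PER_CCX))
               for c in range(CCX_COUNT)]
    if fill_ccx_first:
        order = [core for group in per_ccx for core in group]       # 0, 1, 2, 3, 4, ...
    else:
        order = [core for group in zip(*per_ccx) for core in group]  # 0, 4, 1, 5, ...
    if n_threads > len(order):
        raise ValueError("sketch handles at most one thread per physical core")
    return order[:n_threads]


def ccxs_used(cores: list[int]) -> set[int]:
    return {ccx_of_core(core) for core in cores}


packed = place_threads(4)
spread = place_threads(4, fill_ccx_first=False)
print(packed, ccxs_used(packed))
print(spread, ccxs_used(spread))
```

Packing four cooperating threads into one CCX keeps their shared data in a single 8 MB L3 and avoids cross-CCX traffic; the trade-off is that the other CCX's L3 goes unused, so a real scheduler has to weigh both.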

A number of these we are already taking steps to measure. Some of the fixes for these issues will come from Microsoft’s corner, and we are told that AMD and Microsoft are already working on scheduling routines to address them. Other elements will be AMD-focused: working with software companies to ensure that, after a decade of programming for the other main x86 microarchitecture, it’s a small step to also consider the Zen platform.

At this point, we’re unsure to what extent some of these are inherent design issues with Zen. The increase in single-thread performance when SMT is disabled (up to 6% in ST in our preliminary testing) is clearly related to the design of the core, which uses static rather than competitive partitioning for certain parts of the design. The CCX latency and detection issue certainly needs further investigation.
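The SMT observation comes down to how a shared core structure is divided between the two hardware threads of a core. A toy model of the two policies named above follows. The 64-entry structure size is hypothetical, the function name is illustrative, and which Zen structures use which policy is not detailed in this section.

```python
def usable_entries(total: int, smt_enabled: bool, active_threads: int, policy: str) -> int:
    """Entries of one shared core structure available to a single thread.

    static:      with SMT on, each hardware thread owns a fixed half, even
                 if its sibling is idle.
    competitive: one shared pool; a lone thread may use all of it, and two
                 busy threads are modelled (ideally) as splitting it evenly.
    """
    if active_threads not in (1, 2):
        raise ValueError("a core runs one or two hardware threads")
    if not smt_enabled:
        return total
    if policy == "static":
        return total // 2
    if policy == "competitive":
        return total // active_threads
    raise ValueError(f"unknown policy: {policy}")


TOTAL = 64  # hypothetical structure size
for policy in ("static", "competitive"):
    print(policy,
          "| SMT off:", usable_entries(TOTAL, False, 1, policy),
          "| SMT on, sibling idle:", usable_entries(TOTAL, True, 1, policy),
          "| SMT on, both busy:", usable_entries(TOTAL, True, 2, policy))
```

Under the static policy a lone thread only regains the full structure when SMT is switched off, which matches the direction (though not the size) of the ST gain measured above.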

According to Senior Fellow Mike Clark, one of the principal engineers on the Zen microarchitecture, AMD knows where the easy gains are for its next-generation product (codenamed Zen 2), and is already working through the list. The question then is: if Intel continues to deliver 5% clock-for-clock performance gains each year, can AMD deliver 5-15% and close the gap?
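The closing question is compound-growth arithmetic: if AMD improves per-clock performance by a larger fraction each year than Intel, the ratio between them grows by (1 + g_AMD) / (1 + g_Intel) per year. The sketch below assumes AMD starts at 90% of Intel's IPC; that starting point is illustrative, not a figure from this review, and the function name is hypothetical.

```python
from typing import Optional


def years_to_parity(start_ratio: float, amd_gain: float, intel_gain: float,
                    max_years: int = 20) -> Optional[int]:
    """Years until AMD's per-clock performance matches Intel's.

    start_ratio: AMD IPC / Intel IPC today (an assumed input).
    amd_gain, intel_gain: yearly IPC improvement as fractions (0.05 = 5%).
    Returns None if parity is not reached within max_years.
    """
    ratio = start_ratio
    if ratio >= 1.0:
        return 0
    for year in range(1, max_years + 1):
        ratio *= (1 + amd_gain) / (1 + intel_gain)
        if ratio >= 1.0:
            return year
    return None


for amd_gain in (0.05, 0.10, 0.15):
    print(f"AMD +{amd_gain:.0%}/yr vs Intel +5%/yr:",
          years_to_parity(0.9, amd_gain, 0.05))
```

At matching 5% gains the gap never closes; the faster AMD compounds relative to Intel, the fewer generations parity takes.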

The Silver Lining

It is relevant to point out that Intel is on its 7th generation of Core microarchitecture. Sure, it looks significantly different from when it was first designed, but software vendors have had seven generations to optimize for it. Breaking the incumbent’s stranglehold is difficult when every software vendor knows its design inside and out. While AMD’s design looks similar to Intel’s, there are nuances that programmers might not expect, so it might be a good couple of years before the programming guides make their way into production software.

But when we look at the CPU benchmarks in this review, which cover a wide range of algorithmic difficulty and dependency, AMD still does well. There’s no getting around it: AMD has a strong workstation core design on its hands. This bodes well for users who need compute at a lower cost, especially when an eight-core Ryzen 7 can be had at half the cost of the competition.

This makes the server story for AMD, under Naples, much more interesting.

AnandTech Recommended Award

For Performance/Price on a Workstation CPU

574 Comments


deltaFx2 - Wednesday, March 8, 2017

@Meteor2: No. Consumer GPUs have poor double-precision FP throughput, so you can't push those workloads to the GPU (unless you own those super-expensive Nvidia compute cards). Apparently, many rendering/video editing programs use GPUs for preview but do the final rendering on the CPU. Quality, apparently, and that might be related to DP FP. I'm not the expert, so if you know otherwise, I'd be happy to be corrected and educated. Also, you could make the same argument about AVX-256.

The quoted paragraph is probably the only balanced statement in that entire review. Compare the tone of that review with the AT review above.

On an unrelated note, there's the larger question of running games at low res on top-end GPUs and comparing frame rates that far exceed human perception. I know, they have to do something, so why not just do this. The rationale is: "In future, a faster GPU will create a bottleneck." If this is true, it should be easy to demonstrate, right? Just dig through a history of Intel desktop CPUs paired with increasingly powerful GPUs and see how it trends. Not one reviewer has proven that this is true; it's being taken as gospel. OTOH, plenty of folks seem happy with their Sandy Bridge + Nvidia 1080, so clearly the bottleneck isn't there 5 years after SB. Maybe, just maybe, it's because the differences are imperceptible?

Ryzen clearly has some bottlenecks but the whole gaming thing is a tempest in a tea-cup.
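The low-resolution testing argument above rests on a simple model: each frame needs work from both the CPU and the GPU, so the delivered frame rate is capped by whichever is slower. The sketch below uses made-up frame rates to show why reviewers drop the resolution (to take the GPU out of the equation) and what the "future GPU" rationale assumes; it says nothing about whether such a gap is perceptible, which is the commenter's point.

```python
def delivered_fps(cpu_fps: float, gpu_fps: float) -> float:
    """First-order model: the slower of the CPU and GPU limits sets the frame rate."""
    return min(cpu_fps, gpu_fps)


# Hypothetical CPU-limited frame rates for two processors.
cpu_a, cpu_b = 150.0, 120.0

scenarios = {
    "4K, today's GPU": 60.0,     # GPU-limited: both CPUs look identical
    "4K, future GPU": 130.0,     # faster GPU starts to expose CPU B's limit
    "720p, today's GPU": 300.0,  # low resolution: a pure CPU comparison
}

for label, gpu_fps in scenarios.items():
    a = delivered_fps(cpu_a, gpu_fps)
    b = delivered_fps(cpu_b, gpu_fps)
    print(f"{label}: CPU A {a:.0f} fps, CPU B {b:.0f} fps")
```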

theuglyman0war - Thursday, March 9, 2017

Zbrush: probably 90% of all 3D assets that are created from concept (NOT SCANNED) went through Zbrush at some point, which means no GPU acceleration at all.

Renderman, Maxwell, Vray and Arnold still all use CPU rendering, as do a mountain of other renderers. Arnold will be getting an option, but the two popular GPU renderers are Otoy Octane and Redshift...

They have their excellent, expensive place. But the majority of rendering out there is still suffered through in software, and that will always be a valid concern as long as these renderers come FREE, built into major DCC applications.

theuglyman0war - Thursday, March 9, 2017

Saw that same "GPU trumps CPU, so CPU rendering concerns aren't valid" comment and had a good laugh.

I'll remember to spread that around every time I see Renderman, Vray, Arnold or Maxwell rendering going on sans GPU.

Or the next time a Mercury engine update negates all non-Quadro GPU acceleration.

To be fair, a lot of creative pros and tech artists seem to disagree with me, but...

The only time between pulling verts in Maya and brushing a surface in Zbrush that I really feel I am suffering buckets of tears and desire a new CPU (still on an i7-980X) is when I am cussing out a progress bar that is teasing me with its slow progress. And that means CORES! Encoding... uncompressing... rendering! Otherwise I could probably not notice day to day on a ten-year-old CPU (excluding CPU-bound gaming, of course... talking about day-to-day vert pulling).

I was just as productive in 2007 as I am today.

MaidoMaido - Saturday, March 4, 2017

Been trying to find a review including practical benchmarks for common video editing / motion graphics applications like After Effects, Resolve, Fusion, Premiere and Element 3D. In a lot of these tasks the multithreading is not the best; as a result, a quad-core 6700K often outperforms the more expensive Xeons, the 5960X, etc.

deltaFx2 - Saturday, March 4, 2017

I would recommend this response to the GamersNexus hit piece: https://www.reddit.com/r/Amd/comments/5xgonu/analy... The i5-level performance is a lie.

Notmyusualid - Saturday, March 4, 2017

@deltaFx2 Sorry, not reading a 4k-word response. I'll wait for Anand to finish its Ryzen reviews before I draw any final conclusions.

Meteor2 - Tuesday, March 7, 2017

@deltaFx2 RE: the 4k-word Reddit 'rebuttal': what that person seems to be saying is that once you've converted your $500 Ryzen 1800X into an 8C/8T chip, _then_ it beats a $240 i5, while still falling short of the $330 i7. Out of the box, it has worse gaming performance than either Intel chip. That's not exactly a ringing endorsement.

The analysis in the AnandTech forums, which concludes that in a certain narrow, low-power band a heavily down-clocked 1800X happens to get excellent performance/W, isn't exactly thrilling either.

deltaFx2 - Wednesday, March 8, 2017

@Meteor2: The AnandTech forum thing: perf/watt matters for servers and laptops. Take a look at the IPC numbers too. His average is that Zen == Broadwell IPC, and ~10% behind Sky/Kaby Lake (except for AVX256 workloads). That's not too shabby at all for a $300 part.

You completely missed the point of the reddit rebuttal. The GN reviewer drops i5s from plenty of tests citing "methodological reasons", but then says R7 == i5 in gaming. The argument is that plenty of games use >4 threads, and that puts the i5 at a disadvantage.

tankNZ - Sunday, March 5, 2017

yes I agree, it's even better than okay for gaming: http://smsh.me/li3a.png

deltaFx2 - Monday, March 6, 2017

You may wish to see this though: https://forums.anandtech.com/threads/ryzen-strictl... Way, way more detailed than any tech media review site can hope to get. No, it's got nothing to do with gaming. Gaming isn't the story here. AMD's current situation in x86 market share had little to do with gaming efficiency and a lot to do with perf/watt.

I'll quote the author: "850 points in Cinebench 15 at 30W is quite telling. Or not telling, but absolutely massive. Zeppelin can reach absolutely monstrous and unseen levels of efficiency, as long as it operates within its ideal frequency range."