The AMD Zen and Ryzen 7 Review: A Deep Dive on 1800X, 1700X and 1700

by Ian Cutress on March 2, 2017 9:00 AM EST

AMD Stock Coolers: Wraith v2

When AMD launched the Wraith cooler last year, bundled with the premium FX CPUs and highest performing APUs, it was a refreshing take on the eternal concept that the stock cooler isn’t worth the effort of using if you want any sustained performance. The Wraith, and the 125W/95W silent versions of the Wraith, were built like third party coolers, with a copper base/core, heatpipes, and a good fan. In our roundup of stock coolers, it was clear the Wraith held the top spot, easily matching $30 coolers in the market, except now it was being given away with the CPUs/APUs that needed that amount of cooling.

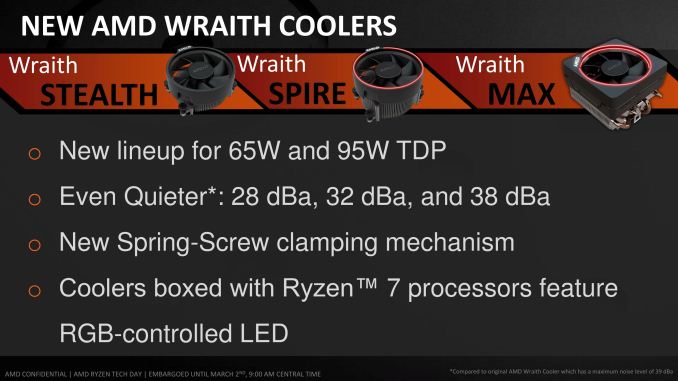

That was essentially a trial run for the Ryzen set of Wraith coolers. For the Ryzen 7 launch, AMD will have three models in play.

These are iterative designs on the original, with minor tweaks and aesthetic changes, but the concept is still the same – a 65W near silent design (Stealth), a 95W near silent design (Spire), and a 95W/125W premium model (Max). The 125W models come with an RGB light (which can be disabled), however AMD has stated that the premium model is currently destined for OEM and SI designs only. The other two will be bundled with the CPUs or potentially be available at retail. We have asked that we get the set in for review, to add to our Wraith numbers.

Memory Support

Every generation of CPUs comes with a ‘maximum supported memory frequency’. This is typically given as a single number that aligns with the industry standard JEDEC sub-timings. Technically most processors will run above that frequency, as the integrated memory controller supports a lot more, but the manufacturer only officially guarantees up to the maximum supported frequency on qualified memory kits.

The frequency, for consumer chips, is usually given as a single number no matter how many memory slots are populated. In reality when more memory modules are in play, it puts more strain on the memory controller so there is a higher potential for errors. This is why qualification is important – if the vendor has a guaranteed speed, any configuration for a qualified kit should work at that speed.

In the server market, a CPU manufacturer might list support a little differently – a supported frequency depending on how many memory modules are in play, and what type of modules. This arguably makes it very confusing when applied at a consumer level, but on a server level it is expected that OEMs can handle the varying degree of support.

For Ryzen, AMD is taking the latter approach. What we have is DDR4-2666 for the simplest configuration – one module per channel of single rank UDIMMs. This moves through to DDR4-1866 for the most strenuous configuration at two modules per channel with dual-rank UDIMMs. For our testing, we were running the memory at DDR4-2400, for lack of a fixed option, however we will have memory scaling numbers in due course. At present, ECC is supported.
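As a rough illustration of what the configuration-dependent rating means in practice, the sketch below encodes the two endpoints quoted above as a lookup and converts a DDR4 data rate into theoretical peak bandwidth. The names `SUPPORTED_SPEED`, `supported_speed` and `peak_bandwidth_gbs` are hypothetical, the intermediate configurations are deliberately left out because they are not stated here, and the bandwidth figure assumes a dual-channel platform with a 64-bit (8-byte) bus per channel.

```python
# Officially supported DDR4 data rates (MT/s), keyed by
# (modules per channel, ranks per module). Only the two endpoints
# quoted in the text are filled in; the intermediate configurations
# are intentionally omitted rather than guessed.
SUPPORTED_SPEED = {
    (1, 1): 2666,  # one single-rank UDIMM per channel
    (2, 2): 1866,  # two dual-rank UDIMMs per channel
}


def supported_speed(modules_per_channel, ranks_per_module):
    """Return the quoted data rate in MT/s, or None if not listed here."""
    return SUPPORTED_SPEED.get((modules_per_channel, ranks_per_module))


def peak_bandwidth_gbs(data_rate_mts, channels=2, bus_bytes=8):
    """Theoretical peak bandwidth in GB/s (decimal).

    Assumes a dual-channel controller and a 64-bit (8-byte) data bus
    per channel; real sustained bandwidth will be lower.
    """
    return data_rate_mts * bus_bytes * channels / 1000


if __name__ == "__main__":
    for config in [(1, 1), (2, 2)]:
        rate = supported_speed(*config)
        print(config, f"DDR4-{rate}", f"{peak_bandwidth_gbs(rate):.1f} GB/s")
    # The DDR4-2400 setting used for testing, for comparison
    print("tested", "DDR4-2400", f"{peak_bandwidth_gbs(2400):.1f} GB/s")
```

Under those assumptions, the gap between the simplest and most strenuous configurations works out to roughly 30% of theoretical peak bandwidth, which is why the populated-slot count matters for memory-sensitive workloads.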

574 Comments


Notmyusualid - Saturday, March 4, 2017

Can't disagree with you pal. They look like exceptional value for money. I, on the other hand, am already on the LGA2011-v3 platform, so I won't be changing, but the main point here is - AMD are back. And we welcome them too.

Alexvrb - Saturday, March 4, 2017

Yeah... if the pricing is as good as rumored for the Ryzen 5, I may pick up a quad-core model. Gives me an upgrade path too, maybe a Ryzen+ hexa or octa-core down the road. For budget builds that Ryzen 3 non-SMT quad-core is going to be hard to argue with though.

wut - Sunday, March 5, 2017

You're really optimistically assuming things.

Kaby Lake Core i5 7400 $170

Ryzen 5 1600X $259

...and single thread benchmark shows Core i5 to be firmly ahead, just as Core i7 is. The story doesn't seem to change much in the mid range.

Meteor2 - Tuesday, March 7, 2017

@wut spot-on. It also seems that Zen on GloFlo 14 nm doesn't clock higher than 4.0 GHz. Zen has lower IPC and lower actual clocks than Intel KBL.

Whichever way you cut it, however many cores in a chip are being considered, in terms of performance, Intel leads. Intel's pricing on >4 core parts is stupid and AMD gives them worthy price competition here. But at 4C and below, Intel still leads. AMD isn't price-competitive here either. No wonder Intel haven't responded to Zen. A small clock bump with Coffee Lake and a slow move to 10 nm starting with Cannon Lake for mobile CPUs (alongside or behind the introduction of 10 nm 'datacentre' chips) is all they need to do over the next year.

After all, if Intel used the same logic as TSMC and GloFlo in naming their process nodes, i.e. using the equivalent nanometre number as if finFETs weren't being used, Intel would say they're on a 10 nm process. They have a clear lead over GloFlo and thus anything AMD can do.

Cooe - Sunday, February 28, 2021

I'm here from the future to tell you that you were wrong about literally everything though. AMD is kicking Intel's ass up and down the block with no end in sight.

Cooe - Sunday, February 28, 2021

Hahahaha. I really fucking hope nobody actually took your "buying advice". The 6-core/12-thread Ryzen 5 1600 was about as fast at 1080p gaming as the 4c/4t i5-7400 ON RELEASE in 2017, and nowadays with modern games/engines it's like TWICE AS FAST.

deltaFx2 - Saturday, March 4, 2017

I think the reviewer you're quoting is Gamers Nexus. He doesn't come across as being a particularly erudite person on matters of computer architecture. He throws a bunch of tests at it, and then spews a few untutored opinions, which may or may not be true. Tom's hardware does a lot of the same thing, and more, and their opinions are far more nuanced. Although they too could have tried to use an AMD graphics card to see if the problems persist there as well, but perhaps time was the constraint.

There's the other question of whether running the most expensive GPU at 1080p is representative of real-world performance. Gaming, after all, is visual and largely subjective. Will you notice a drop of (say) 10 FPS at 150 FPS? How do you measure goodness of output? Let's contrive something.

All CPUs have bottlenecks, including Intel. The cases where AMD does better than Intel are where AMD doesn't have the bottlenecks Intel has, but nobody has noticed it before because there wasn't anything else to stack up against it. The question that needs to be answered in the following weeks and months is, are AMD's bottlenecks fixable with (say) a compiler tweak or library change? I'd expect much of it is, but lets see. There was a comment on some forum (can't remember) that said that back when Athlon64 (K8) came out, the gaming community was certain that it was terrible for gaming, and Netburst was the way to go. That opinion changed pretty quickly.

Notmyusualid - Saturday, March 4, 2017

Gamers Nexus seem 'OK' to me. I don't know the site like I do Anandtech, but since Anand missed out the games... I am forced to form my opinions elsewhere. And funny you mention Tom's, they seem to back it up to some degree too, and I know these two sites are cross-owned.

But still, when Anand get around to benching games with Ryzen, only then will I draw my final conclusions.

deltaFx2 - Sunday, March 5, 2017

@ Notmyusualid: I'm sure Gamers Nexus numbers are reasonable. I think they and Tom's (and other reviewers) see a valid bottleneck that I can only guess is software optimization related. The issue with GN was the bizarre and uninformed editorializing. Comments like, the workloads that AMD does well at are not important because they can be accelerated on GPU (not true, but if true, why on earth did GN use it in the first place?). There are other cases where he drops i5s from evaluation for "methodological reasons" but then says R7 == i5. Even based on the tests he ran, this is not true. Anyway, the reddit link goes over this in far more detail than I could (or would).

Meteor2 - Tuesday, March 7, 2017

@DeltaFX2 in what way was GamersNexus conclusion that tasks that can be pushed to GPUs should be incorrect? Are you saying Premiere and Blender can't be used on GPUs?

GN's conclusion was:

"If you’re doing something truly software accelerated and cannot push to the GPU, then AMD is better at the price versus its Intel competition. AMD has done well with its 1800X strictly in this regard. You’ll just have to determine if you ever use software rendering, considering the workhorse that a modern GPU is when OpenCL/CUDA are present. If you know specific instances where CPU acceleration is beneficial to your workflow or pipeline, consider the 1800X."

I think that's very fair and a very good summary of Ryzen.