The Intel SSD 600p (512GB) Review

by Billy Tallis on November 22, 2016 10:30 AM EST

AnandTech Storage Bench - Light

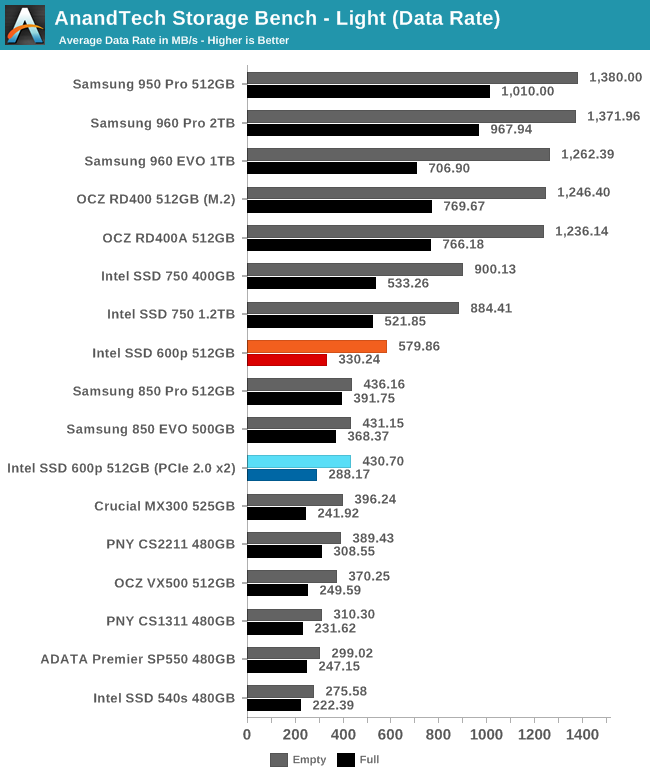

Our Light storage test has relatively more sequential accesses and lower queue depths than The Destroyer or the Heavy test, and it's by far the shortest test overall. It's based largely on applications that aren't highly dependent on storage performance, so this is a test more of application launch times and file load times. This test can be seen as the sum of all the little delays in daily usage, but with the idle times trimmed to 25ms it takes less than half an hour to run. Details of the Light test can be found here.

On the Light test we finally see the 600p pull ahead of SATA SSDs, albeit not when the drive is full. This test shows what the 600p can do before it gets overwhelmed by sustained writes, and it's also the first time where the PCIe 2.0 x2 connection is a significant bottleneck.

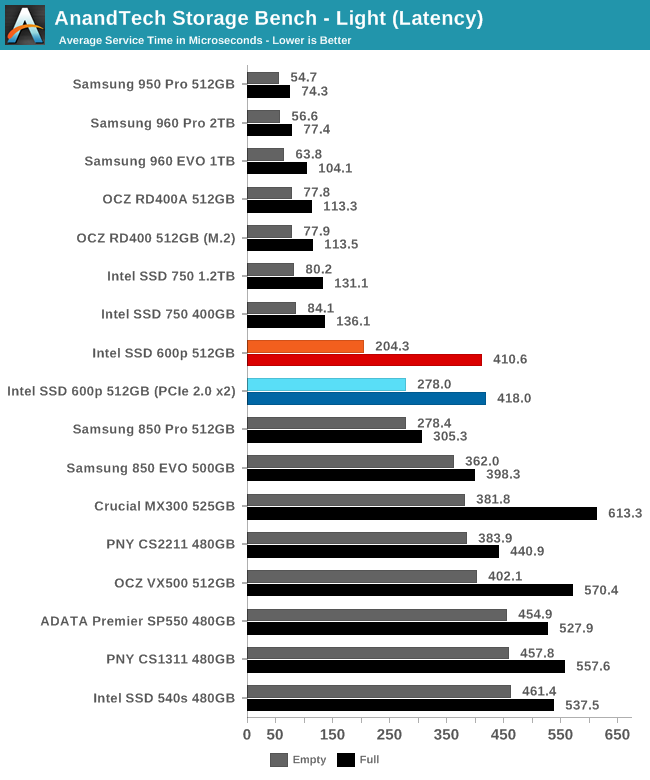

The average service times of the 600p rank about where they should: worse than the other NVMe SSDs, but better than the SATA drives can manage. When the 600p is full its latency is significantly worse and isn't quite as good as Samsung's SATA SSDs, but it is nothing to complain about.

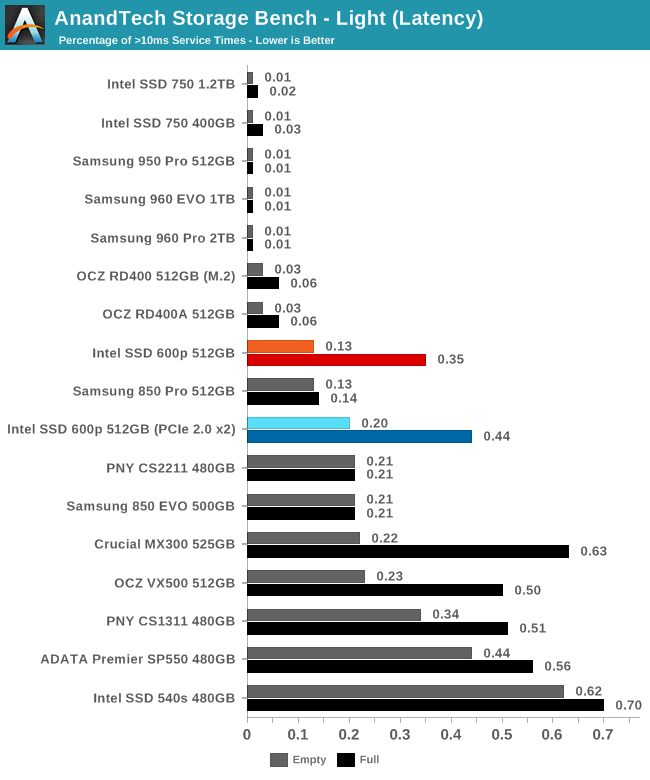

Aside from the usual caveat that it suffers acutely when full, the 600p meets expectations for the number of latency outliers.

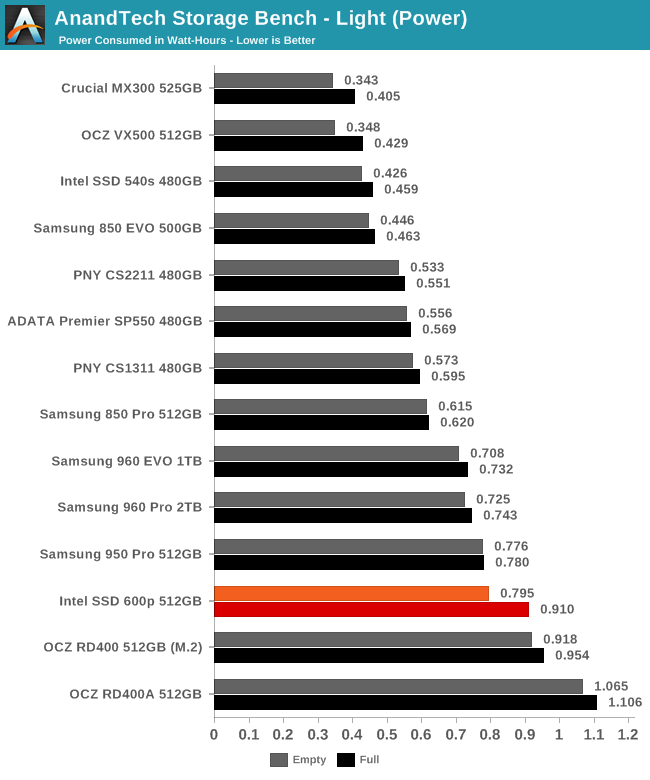

The 600p manages to pull ahead of the OCZ RD400 in power consumption and is close to Samsung's NVMe SSDs in efficiency, but the SATA drives are all significantly more efficient.

63 Comments

Samus - Wednesday, November 23, 2016 - link

Multicast helped but when you are saturating the backbone of the switch with 60Gbps of traffic it only slightly improves transfer. With light traffic we were getting 170-190MB/sec transfer rate but with a full image battery it was 120MB/sec. Granted with Unicast it never cracked 110MB/sec under any condition.

ddriver - Wednesday, November 23, 2016 - link

Multicast would be UDP, so it would have less overhead, which is why you are seeing better bandwidth utilization. Point is with multicast you could push the same bandwidth to all clients simultaneously, whereas without multicast you'd be limited by the medium and switching capacity on top of the TCP/IP overhead.

Assuming dual 10gbit gives you the full theoretical 2500 mb/s, if you have 100 mb/s to each client, that means you will be able to serve no more than 25 clients. Whereas with multicast you'd be able to push those 170-190 mb/s to any number of clients, tens, hundreds, thousands or even millions, and by daisy chaining simple gigabit routers you make sure you don't run out of switching capacity. Of course, assuming you want to send the same identical data to all of them.
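The unicast limit in the comment above is simple arithmetic and can be checked with a short sketch. The figures (two 10 Gbit links, 100 MB/s per client) come from the comment; the function names are illustrative, and the calculation assumes the full theoretical link rate with no protocol overhead.

```python
# Back-of-the-envelope check of the unicast client limit.
# Assumes the theoretical link rate with no protocol overhead.

def link_capacity_mb_per_s(links: int, gbit_per_link: float) -> float:
    """Aggregate throughput in MB/s (1 Gbit = 1000 Mbit, 8 bits per byte)."""
    return links * gbit_per_link * 1000 / 8


def max_unicast_clients(capacity_mb_per_s: float, per_client_mb_per_s: float) -> int:
    """With unicast, every client consumes its own share of the link."""
    return int(capacity_mb_per_s // per_client_mb_per_s)


capacity = link_capacity_mb_per_s(links=2, gbit_per_link=10)
print(capacity)                            # 2500.0
print(max_unicast_clients(capacity, 100))  # 25
```

With multicast the sender transmits each packet once regardless of the number of receivers, so this per-client division does not apply to the sender's uplink.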

BrokenCrayons - Wednesday, November 23, 2016 - link

"Also, he doesn't really have "his specific application", he just spat a bunch of nonsense he believed would be cool :D"

Technical sites are great places to speculate about what-ifs of computer technology with like minded people. It's absolutely okay to disagree with someone's opinion, but I don't think you're doing so in a way that projects your thoughts as calm, rational, or constructive. It seems as though idle speculation on a very insignificant matter is treated as a threat worthy of attack in your mind. I'm not sure why that's the case, but I don't think it's necessary. I try to tell my children to keep things in perspective and not to make a mountain out of a problem if it's not necessary. It's something that helps them get along in their lives now that they're more independent of their system of parental checks and balances. Maybe stopping for a few moments to consider whether or not the thing that's upsetting you and making you feel mad inside is a good idea. It could put some of these reader comments into a different, more lucid perspective.

ddriver - Tuesday, November 22, 2016 - link

Oh and obviously, he meant "image" as in pictures, not image as in os images LOL, that was made quite obvious by the "media" part.

tinman44 - Monday, November 28, 2016 - link

The 960 EVO is only a little bit more expensive for consistent, high performance compared to the 600p. Any hardware implementation where more than a few people are using the same drive should justify getting something worthwhile, like a 960 pro or real enterprise SSD, but the 960 EVO comes very close to the performance of those high-end parts for a lot less money.

ddriver: compare perf consistency of the 600p and the 960 EVO, you don't want the 600p.

vFunct - Wednesday, November 23, 2016 - link

> There is already a product that's unbeatable for media storage - an 8tb ultrastar he8. As ssd for media storage - that makes no sense, and a 100 of those only makes a 100 times less sense :D

You've never served an image gallery, have you?

You know it takes 5-10 ms to serve a single random long-tail image from an HDD. And a single image gallery on a page might need to serve dozens (or hundreds) of them, taking up to 1 second of drive time.

Do you want to tie up an entire hard drive for one second, when you have hundreds of people accessing your galleries per second?

Hard drives are terrible for image serving on the web, because of their access times.
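The drive-time estimate in this comment can be reproduced with a rough sketch. It assumes every image costs one uncached random access on a single drive, with no caching and no request reordering, which is the worst case being argued here; the function names are illustrative.

```python
# Rough model of HDD time consumed per image gallery.
# Assumes one uncached random access per image on a single drive.

def gallery_drive_time_s(images: int, access_ms: float) -> float:
    """Seconds of drive time needed to fetch every image in one gallery."""
    return images * access_ms / 1000


def galleries_per_second(images: int, access_ms: float) -> float:
    """Upper bound on galleries one drive can serve per second under this model."""
    return 1 / gallery_drive_time_s(images, access_ms)


print(gallery_drive_time_s(100, 10))  # 1.0
print(gallery_drive_time_s(50, 5))    # 0.25
print(galleries_per_second(50, 5))    # 4.0
```

Under these assumptions a 100-image gallery at 10 ms per access occupies the drive for a full second, which is where the "up to 1 second" figure comes from. Caching or spreading requests across drives would change the result.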

ddriver - Wednesday, November 23, 2016 - link

You probably don't know, but it won't really matter, because you will be bottlenecked by network bandwidth. hdd access times would be completely masked off. Also, there is caching, which is how the internet ran just fine before ssds became mainstream.

You will not be losing any service time waiting for the hdd, you will be only limited by your internet bandwidth. Which means that regardless of the number of images, the client will receive the entire data set only 5-10 msec slower compared to an ssd. And regardless of how many clients you may have connected, you will always be limited by your bandwidth.

Any sane server implementation won't read the entire gallery in a burst, which may be hundreds of megabytes, before it services another client. So no single client will ever block the hdd for a second. Practically every contemporary hdd has ncq, which means the device will deliver other requests while your network is busy delivering data. Servers buffer data, so say you have two clients requesting 2 different galleries at the same time, the server will read the first image for the first client and begin sending it, and then read the first image for the second client and begin sending it. The hdd will actually be idling quite a lot waiting, because your connection bandwidth will be vastly exceeded by the drive's performance. And regardless of how many clients you may have, that will not put any more strain on the hdd, as your network bandwidth will remain the same bottleneck. If people end up waiting too long, it won't be the hdd but the network connection.

But thanks for once again proving you don't have a clue, not that it wasn't obvious from your very first post ;)

vFunct - Friday, November 25, 2016 - link

> You probably don't know, but it won't really matter, because you will be bottlenecked by network bandwidth. hdd access times would be completely masked off. Also, there is caching, which is how the internet ran just fine before ssds became mainstream.

ddriver, just stop. You literally have no idea what you're talking about.

Image galleries aren't hundreds of megabytes. Who the hell would actually send out that much data at once? No image gallery sends out full high-res images at once. Instead, they might be 50 mid-size thumbnails of 20kb each that you scroll through on your mobile device, and send high-res images later when you zoom in. This is like literally every single e-commerce shopping site in the world.

Maybe you could take an internship at a startup to gain some experience in the field? But right now, I recommend you never, ever speak in public ever again, because you don't know anything at all about web serving.

close - Wednesday, November 23, 2016 - link

@ddriver, I really didn't expect you to laugh at other people's ideas for new hardware given your "thoroughly documented" 5.25" hard drive brain-fart.

ddriver - Wednesday, November 23, 2016 - link

Nobody cares what clueless troll wannabes like you expect, you are entirely irrelevant.