The Intel Skull Canyon NUC6i7KYK mini-PC Review

by Ganesh T S on May 23, 2016 8:00 AM EST

HTPC Credentials

The higher TDP of the processor in Skull Canyon, combined with the new chassis design, makes the unit a bit noisier than the traditional NUCs. It would be tempting to say that the extra EUs in the Iris Pro Graphics 580, combined with the eDRAM, would make GPU-intensive renderers such as madVR operate more effectively. That may be partly true (though madVR now has a DXVA2 option for certain scaling operations), but the GPU still lacks full HEVC 10b decoding, as well as stable drivers for HEVC decoding on Windows 10. In any case, it is still worthwhile to evaluate the basic HTPC capabilities of the Skull Canyon NUC6i7KYK.

Refresh Rate Accuracy

Starting with Haswell, Intel, AMD and NVIDIA have been on par with respect to display refresh rate accuracy. The most important refresh rate for videophiles is obviously 23.976 Hz (the 23 Hz setting). As expected, the Intel NUC6i7KYK (Skull Canyon) has no trouble with refreshing the display appropriately in this setting.
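To put refresh rate accuracy in perspective, the 23 Hz setting targets 24000/1001 Hz, and any deviation from it eventually forces the renderer to drop or repeat a frame. The following is a minimal sketch of that arithmetic, assuming the video runs at exactly 24000/1001 fps. The function name and the sample reading are hypothetical and are not taken from our measurements.

```python
from fractions import Fraction

# Nominal NTSC film rate: 24000/1001 Hz, commonly written as 23.976 Hz.
NOMINAL_23_976 = float(Fraction(24000, 1001))


def seconds_between_frame_glitches(display_hz, video_fps=NOMINAL_23_976):
    """Seconds until display and video clocks drift apart by one full frame.

    Returns float('inf') when the two rates match exactly.
    """
    drift = abs(display_hz - video_fps)  # frames of drift accumulated per second
    if drift == 0:
        return float("inf")
    return 1.0 / drift


# Hypothetical reading: a display measured at 23.977 Hz.
interval = seconds_between_frame_glitches(23.977)
print(f"one dropped or repeated frame roughly every {interval / 60:.1f} minutes")
```

The closer the measured rate is to the nominal value, the longer the interval between dropped or repeated frames.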

The gallery below presents some of the other refresh rates that we tested out. The first statistic in madVR's OSD indicates the display refresh rate.

Network Streaming Efficiency

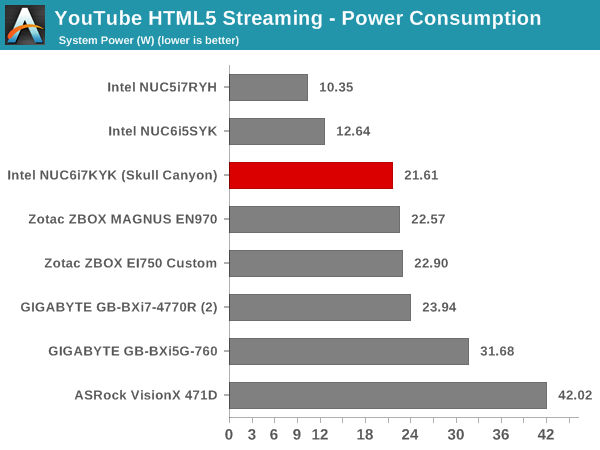

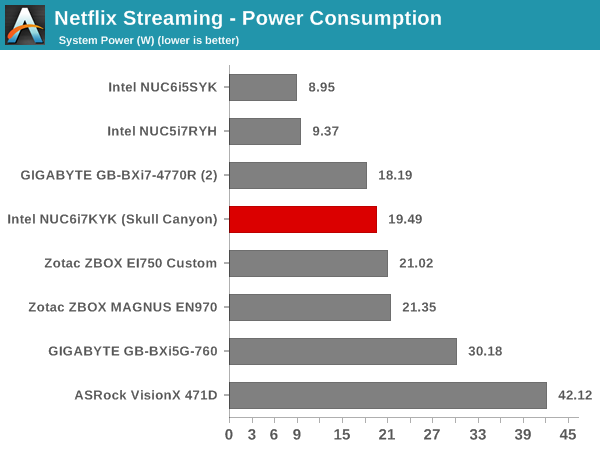

Evaluation of OTT playback efficiency was done by playing back our standard YouTube test stream and five minutes from our standard Netflix test title. Using HTML5, the YouTube stream plays back a 1080p H.264 encoding. Since YouTube now defaults to HTML5 for video playback, we have stopped evaluating Adobe Flash acceleration. Note that only NVIDIA exposes GPU and VPU loads separately. Both Intel and AMD bundle the decoder load along with the GPU load. The following two graphs show the power consumption at the wall for playback of the HTML5 stream in Mozilla Firefox (v 46.0.1).

GPU load was around 13.71% for the YouTube HTML5 stream and 0.02% for the steady state 6 Mbps Netflix streaming case. The power consumption of the GPU block was reported to be 0.71W for the YouTube HTML5 stream and 0.13W for Netflix.

Netflix streaming evaluation was done using the Windows 10 Netflix app. Manual stream selection is available (Ctrl-Alt-Shift-S) and debug information / statistics can also be viewed (Ctrl-Alt-Shift-D). Statistics collected for the YouTube streaming experiment were also collected here.

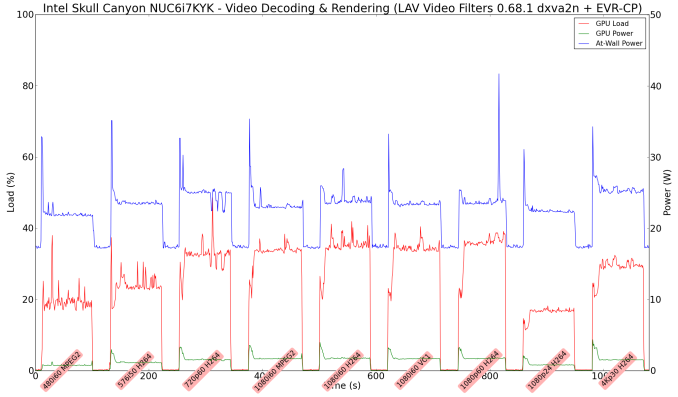

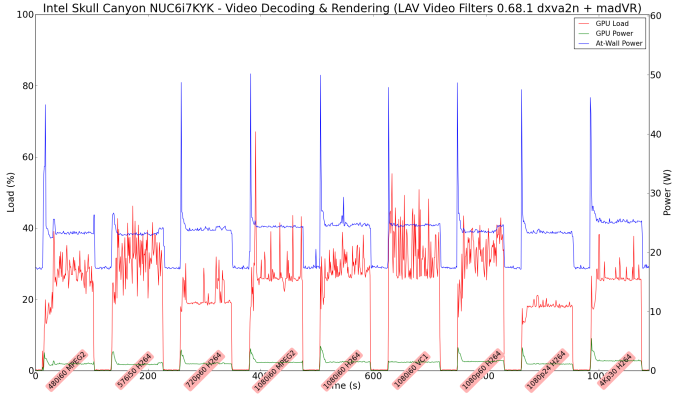

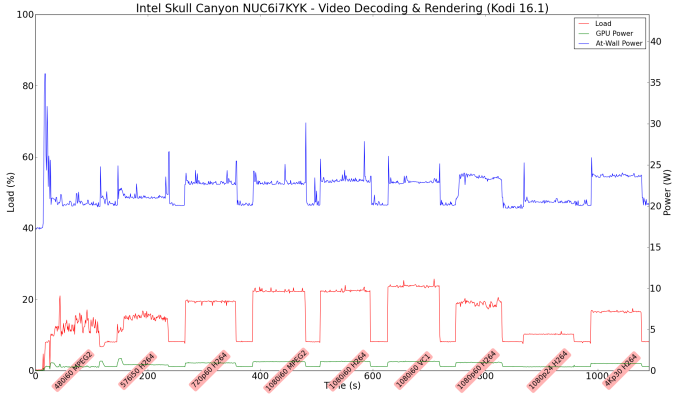

Decoding and Rendering Benchmarks

In order to evaluate local file playback, we concentrate on EVR-CP, madVR and Kodi. We already know that EVR works quite well even with the Intel IGP for our test streams. Under madVR, we used the DXVA2 scaling logic (as Intel's fixed-function scaling logic triggered via DXVA2 APIs is known to be quite effective). We used MPC-HC 1.7.10 x86 with LAV Filters 0.68.1 set as preferred in the options. In the second part, we used madVR 0.90.19.

In our earlier reviews, we focused on presenting the GPU loading and power consumption at the wall in a table (with problematic streams in bold). Starting with the Broadwell NUC review, we decided to represent the GPU load and power consumption in a graph with dual Y-axes. Nine different test streams of 90 seconds each were played back with a gap of 30 seconds between each of them. The characteristics of each stream are annotated at the bottom of the graph. Note that the GPU usage is graphed in red and needs to be considered against the left axis, while the at-wall power consumption is graphed in green and needs to be considered against the right axis.
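As a rough illustration of the test schedule described above, the sketch below derives the playback window for each stream and averages logged samples per window. It assumes the first stream starts at time zero, that gaps occur only between streams, and that samples are logged as (timestamp, value) pairs. The function names and the log format are hypothetical and do not describe our actual tooling.

```python
STREAM_COUNT = 9    # number of test streams
PLAY_SECONDS = 90   # playback time per stream
GAP_SECONDS = 30    # idle gap between consecutive streams


def playback_windows(count=STREAM_COUNT, play=PLAY_SECONDS, gap=GAP_SECONDS):
    """Return (start, end) offsets in seconds for each stream."""
    windows = []
    start = 0
    for _ in range(count):
        windows.append((start, start + play))
        start += play + gap
    return windows


def mean_per_stream(samples, windows):
    """Average the (timestamp, value) samples that fall inside each window.

    A window with no samples yields None.
    """
    means = []
    for start, end in windows:
        values = [value for t, value in samples if start <= t < end]
        means.append(sum(values) / len(values) if values else None)
    return means


windows = playback_windows()
total_seconds = windows[-1][1]  # end of the last stream
print(f"{len(windows)} streams, {total_seconds} seconds in total")
```

Under these assumptions, the same windows can be applied to both the GPU load log and the at-wall power log, so that the two series line up stream by stream.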

Frame drops are evident whenever the GPU load consistently stays above the 85-90% mark. We did not hit that case with any of our test streams. Note that we have not moved to 4K officially for our HTPC evaluation. We did verify that HEVC 8b decoding works well (even 4Kp60 had no issues), but HEVC 10b hybrid decoding was a bit of a mess - some clips worked OK with heavy CPU usage, while other clips tended to result in a black screen (those clips had no playback issues on a GTX 1080).
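One way to apply the 85-90% rule of thumb to a logged trace is to look for a run of consecutive samples above the threshold. The sketch below does that. The function name, the default run length, and the sample values are assumptions made for illustration; "consistently" is interpreted here as an unbroken run of samples.

```python
def has_sustained_high_load(loads, threshold=85.0, min_run=10):
    """True if `loads` has at least `min_run` consecutive samples above `threshold`."""
    run = 0
    for load in loads:
        # Extend the current run, or reset it when the load falls back.
        run = run + 1 if load > threshold else 0
        if run >= min_run:
            return True
    return False


# Hypothetical traces: a brief spike should not count, a sustained plateau should.
brief_spike = [40.0] * 20 + [92.0] * 3 + [40.0] * 20
plateau = [40.0] * 20 + [92.0] * 15
print(has_sustained_high_load(brief_spike), has_sustained_high_load(plateau))
```

Requiring a run, and not just a single sample, keeps momentary spikes from being flagged as a risk of dropped frames.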

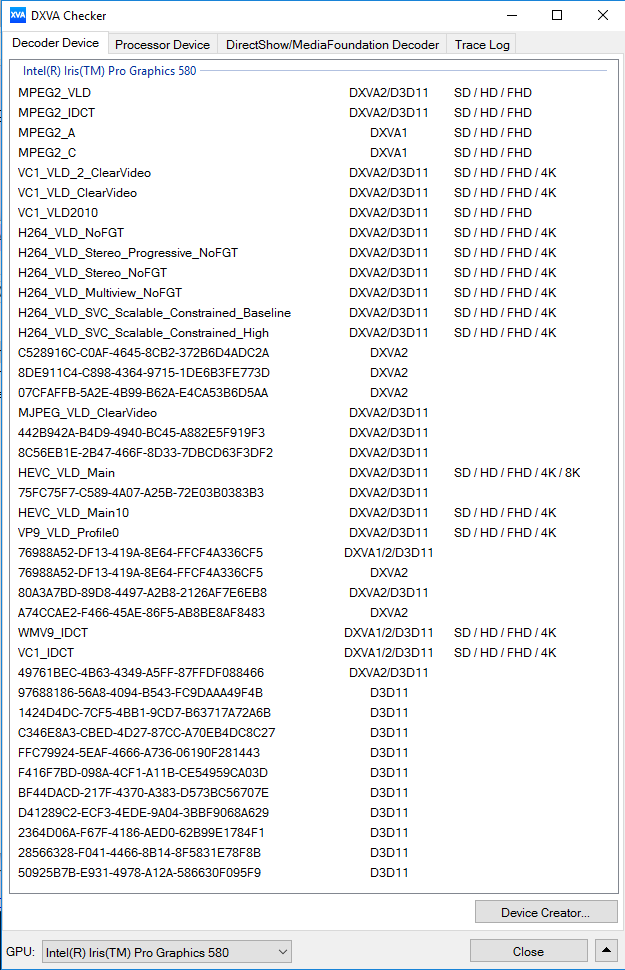

Moving on to the codec support, the Intel Iris Pro Graphics 580 is a known quantity with respect to the scope of supported hardware accelerated codecs. DXVA Checker serves as a confirmation for the features available in driver version 15.40.23.4444.

It must be remembered that the HEVC_VLD_Main10 DXVA profile noted above utilizes hybrid decoding with both CPU and GPU resources getting taxed.

On a generic note, while playing back 4K videos on a 1080p display, I noted that madVR with DXVA2 scaling was more power-efficient compared to using the EVR-CP renderer that MPC-HC uses by default.

133 Comments

fanofanand - Monday, May 23, 2016 - link

So in other words, another 20 mm gives the customer a 50% haircut on price. Yup, more evidence this is a product in search of high margins and little else.

jwcalla - Monday, May 23, 2016 - link

You have to understand that Intel thinks its customers turn on their faucets and liquid gold comes pouring out.

Shadowmaster625 - Monday, May 23, 2016 - link

So it's not even as fast as an ancient R9 270M despite being two nodes ahead.... and the power advantage is only 30%. Intel has a problem it seems.

ganeshts - Monday, May 23, 2016 - link

Did you consider the power budget?

extide - Monday, May 23, 2016 - link

Are you kidding? That is actually a pretty damn good achievement, I mean that uses a Cape Verde ASIC, and has about 1 TFLOP of compute power (640 shaders @ ~750 MHz). The Iris Pro 580 has 576 FP32 cores (72 EU * 8) and runs about 1 GHz, so has a bit over 1.1 TFLOP compute -- so really pretty similar in terms of compute horsepower. It seems like it is performing right where it should based on its hardware config.

fanofanand - Monday, May 23, 2016 - link

Well, looks like I will be waiting yet another generation. Still can't game on 1080p. I like the storage and Wi-Fi numbers, and I can picture this doing fairly well in some office environments, but are there that many people who are building home PCs (user still has to add RAM etc.) and who will pay that kind of a premium for a device that is somewhat limited in its use case? I can't speak for anyone else of course, but there is no way my employer would fork out $1k for this, not when they can spend $400 on a cheap business laptop that takes up a similar amount of space in the office but also offers them the ability to extract additional work from me over the weekend. I love the idea, I just don't think the hardware is there yet.

Taracta - Monday, May 23, 2016 - link

How is Alpine Ridge going to get USB 3.1 if it is not off the PCH?

ganeshts - Monday, May 23, 2016 - link

Alpine Ridge has a USB 3.1 host controller integrated along with the Thunderbolt controller. Please look into our Thunderbolt 3 hands-on coverage here: http://www.anandtech.com/show/10248/thunderbolt-3-... : Note the presence of the xHCI controller in the Alpine Ridge block diagram towards the middle of the linked page.

tipoo - Monday, May 23, 2016 - link

This makes me wish Intel offered GT4e with a cheaper dual, then it could compete with boxes like the Alienware Alpha on price/value.

tipoo - Monday, May 23, 2016 - link

Does this support free sync?