Google’s Tensor Processing Unit: What We Know

by Joshua Ho on May 20, 2016 6:00 AM EST- Posted in

- Google IO

- Trade Shows

- ASICs

- DSPs

- Machine Learning

- Google IO 2016

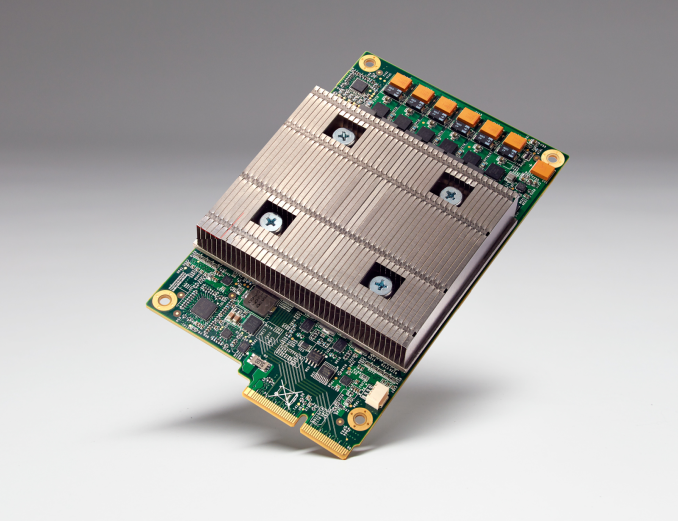

If you’ve followed Google’s announcements at I/O 2016, one stand-out from the keynote was the mention of a Tensor Processing Unit, or TPU (not to be confused with thermoplastic urethane). I was hoping to learn more about this TPU, however Google is currently holding any architectural details close to their chest.

More will come later this year, but for now what we know is that this is an actual processor with an ISA of some kind. What exactly that ISA entails isn't something Google is disclosing at this time - and I'm curious as to whether it's even Turing complete - though in their blog post on the TPU, Google did mention that it uses "reduced computational precision." It’s a fair bet that unlike GPUs there is no ISA-level support for 64 bit data types, and given the workload it’s likely that we’re looking at 16 bit floats or fixed point values, or possibly even 8 bits.

Reaching even further, it’s possible that instructions are statically scheduled in the TPU, although this was based on a rather general comment about how static scheduling is more power efficient than dynamic scheduling, which is not really a revelation in any shape or form. I wouldn’t be entirely surprised if the TPU actually looks an awful lot like a VLIW DSP with support for massive levels of SIMD and some twist to make it easier to program for, especially given recent research papers and industry discussions regarding the power efficiency and potential for DSPs in machine learning applications. Of course, this is also just idle speculation, so it’s entirely possible that I’m completely off the mark here, but it’ll definitely be interesting to see exactly what architecture Google has decided is most suited towards machine learning applications.

Source: Google

39 Comments

View All Comments

ddriver - Saturday, May 21, 2016 - link

you really have no idea, do youWolfpackN64 - Friday, May 20, 2016 - link

It's it's really VLIW like, it's basically a smaller and more numerous cousin to the Elbrus 2K chips?JoshHo - Friday, May 20, 2016 - link

It could be but unlike the Elbrus VLIW CPUs it wouldn't even be attempting to run general purpose code, so any concessions made to improve performance like doing something better to handle unpredictable branches, non-deterministic memory latency, virtual memory, syscalls, or anything else that a CPU would expect to handle.When you can get a normal CPU to handle those tasks, you can afford to focus on the essentials for maximizing performance. I doubt the TPU has a TLB or much in the way of advanced prefetch mechanisms or similar things seen in CPUs or GPUs to handle unpredictable code.

Heavensrevenge - Friday, May 20, 2016 - link

It's most likely the "Lanai" in-house cpu-ish thing they patched LLVM for a few months ago https://www.phoronix.com/scan.php?page=news_item&a...name99 - Friday, May 20, 2016 - link

Lanai is almost certainly something very different; a processor specialized for running network switching, routing, and suchlike.Very different architecture specialized for a very different task --- but just as relevant to my larger point above about the twilight of Intel.

kojenku - Friday, May 20, 2016 - link

First, this chip is an ASIC. It performs a set of static functions therefore not modifiable. Second, since it is an ASIC, its precision is limited (it does not need to adjust the angle of a rocket's nozzle) and Google has mentioned this in the party. Third, since its precision is limited, it does not require lots of transistors in the chip therefore not very power hungry. This actually has been extensively studied by the academic researchers for a long time, and lots of research papers and experiments are available around the internet (Stochastic Computing, Imprecise computations, and etc.). Fourth, since it is imprecise and power efficient, the regular PC chip designs will gradually moving to the similar directions. What this means is that we will have PC CPUs making imprecise numeric presentations (1.0 vs. 0.99999999999999999999999999999912345).extide - Monday, May 23, 2016 - link

Well, you know all chips are ASIC's .. I mean technically even an FPGA is an ASIC, but that IS modifiable. GPU's are ASIC's, CPU's are ASIC's. Being an ASIC has NOTHING to do with it's precision. They went with 8-bit as a design decision because it was all that they needed. 8-bit does mean the SIMD units will be smaller, and thus they can pack more of them in for the same transistor budget.No, regular CPU's will not start to have less precision, regular CPU's (like x86, or ARM) have to conform to the ISA that they are designed for and that ISA will specify those things and you have to follow that spec exactly or it will not be able to run code compiled for that ISA.

ASIC - Application Specific Integrated Circuit

SIMD - Single Instruction Multiple Data

ISA - Instruction Set Architecture

FPGA - Field Programmable Gate Array

Jaybus - Saturday, May 21, 2016 - link

The naming of the device could be a clue. A tensor is a geometric object describing a relationship between vectors, scalars, or other tensors. For example, a linear map represented by a NxM matrix that transforms N M-dimensional vectors into a single N-dimensional vector is an example of a 2nd-order tensor. A vector is itself a 1st-order tensor. A neuron in an artificial neural network could be a dynamic tensor that transforms M synaptic inputs into N synaptic outputs. My guess is that the TPU allows for massively parallel processing of neurons in an artificial neural network. The simple mapping of M scalar inputs to N scalar outputs does not require a complex and power-consuming ISA, but rather benefits from having very many extremely simple compute units, far simpler than ARM cores and even simpler than general purpose DSPs; simply a (dynamic) linear map implemented in hardware.easp - Saturday, May 21, 2016 - link

Congratulations, you are so far the only commenter who coudln't be bothered to google for "Google Tensor" to reason out that this is likely targeted at running neural nets.Googling supports your reasoning. "Tensor Flow" is Google's open-source machine learning library. It was developed for running neural nets, but, they assert, it specializes in "numerical computation using data flow graphs" and is applicable to domains beyond neural networks and machine learning.