Google’s Tensor Processing Unit: What We Know

by Joshua Ho on May 20, 2016 6:00 AM EST

Posted in: Google IO, Trade Shows, ASICs, DSPs, Machine Learning, Google IO 2016

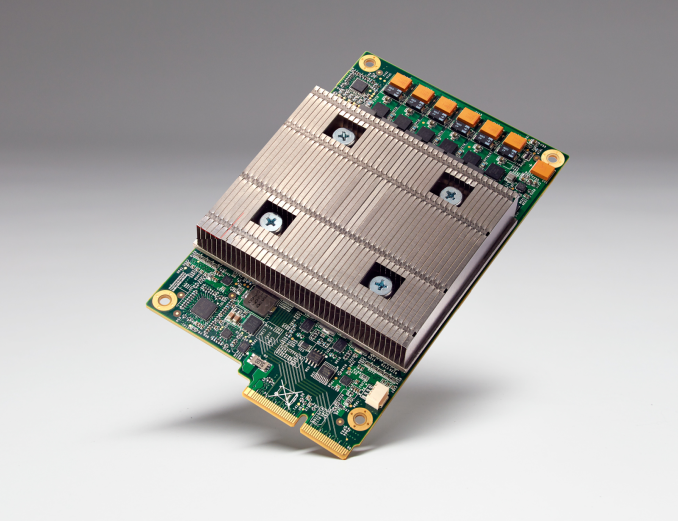

If you followed Google’s announcements at I/O 2016, one stand-out from the keynote was the mention of a Tensor Processing Unit, or TPU (not to be confused with thermoplastic urethane). I was hoping to learn more about this TPU, but Google is currently holding any architectural details close to its chest.

More will come later this year, but for now what we know is that this is an actual processor with an ISA of some kind. Google isn't disclosing what exactly that ISA entails at this time, and I'm curious as to whether it's even Turing complete, though in its blog post on the TPU Google did mention that it uses "reduced computational precision." It’s a fair bet that unlike GPUs there is no ISA-level support for 64-bit data types, and given the workload it’s likely that we’re looking at 16-bit floats or fixed-point values, or possibly even 8-bit values.
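To make "reduced computational precision" concrete, here is a minimal sketch of one common approach: symmetric linear quantization of floating-point values to signed 8-bit integers. Nothing here is confirmed to be what the TPU does. The function names, the shared-scale scheme, and the sample values are all assumptions chosen for illustration.

```python
# Illustrative only: a generic 8-bit quantization scheme, NOT Google's
# disclosed TPU design. All names and sample values are hypothetical.

def quantize_int8(values):
    """Map floats to signed 8-bit codes using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    # Guard against an all-zero input, where any scale would work.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the symmetric range [-127, 127].
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the 8-bit codes."""
    return [q * scale for q in quantized]


def dot_int8(a_q, a_scale, b_q, b_scale):
    """Dot product accumulated in integers, rescaled once at the end."""
    acc = sum(x * y for x, y in zip(a_q, b_q))
    return acc * a_scale * b_scale


# Hypothetical weights and inputs for a single neuron.
weights = [0.82, -0.41, 0.05, -1.27]
inputs = [0.5, 1.0, -0.25, 0.75]

w_q, w_scale = quantize_int8(weights)
x_q, x_scale = quantize_int8(inputs)

exact = sum(w * x for w, x in zip(weights, inputs))
approx = dot_int8(w_q, w_scale, x_q, x_scale)

print("8-bit weights:", w_q)
print("8-bit inputs: ", x_q)
print("float result: ", exact)
print("8-bit result: ", approx)
```

The two results differ only slightly. That small error is the trade-off that makes narrow data types attractive for neural network workloads, where the multiply-accumulate work can then be done on small integers.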

Reaching even further, it’s possible that instructions are statically scheduled in the TPU, although this is based on a rather general comment about static scheduling being more power efficient than dynamic scheduling, which is hardly a revelation. I wouldn’t be entirely surprised if the TPU looks an awful lot like a VLIW DSP with support for massive levels of SIMD and some twist to make it easier to program, especially given recent research papers and industry discussions regarding the power efficiency and potential of DSPs in machine learning applications. Of course, this is just idle speculation, so it’s entirely possible that I’m completely off the mark here, but it’ll definitely be interesting to see exactly what architecture Google has decided is best suited to machine learning applications.

Source: Google

39 Comments

easp - Saturday, May 21, 2016 - link

How does this hurt Intel? By not helping Intel. By running stuff that was previously run on Intel CPUs. By running more and more of that stuff. By being another growing semiconductor market that Intel doesn't have any competitive advantage in. Meanwhile, to stay competitive in their core markets, Intel needs to invest in new fabs, and those new fabs turn out 2x as many transistors as the old fabs, and Intel really doesn't have a market for all those transistors any more.

Michael Bay - Sunday, May 22, 2016 - link

You of all people should know well that name99 is a completely crazy pro-Apple and contra-Intel cultist.

stephenbrooks - Friday, May 20, 2016 - link

I read your comment about the "slow decline of Intel" immediately after this other article: http://anandtech.com/show/10324/price-check-q2-may...

Well, you did say it was slow. Guess I've just got to keep waiting... for that slow decline...

jjj - Friday, May 20, 2016 - link

Apparently Google confirmed that it's 8-bit and it might be 8-bit integer.

ShieTar - Friday, May 20, 2016 - link

Which makes sense for something focusing on image recognition and similar tasks. The CogniMem CM1K also uses 8-bit: http://www.cognimem.com/products/chips-and-modules...

stephenbrooks - Friday, May 20, 2016 - link

8-bit = string data? Makes sense for Google the web company. Maybe it has acceleration for processing UTF-8 strings?

xdrol - Saturday, May 21, 2016 - link

I'd rather go with images. A 16M color image is only 3x 8-bit channels.

ShieTar - Sunday, May 22, 2016 - link

Though it is very likely that you can use very similar processing for both images and texts. The information is perceived differently by the human brain, but for tasks like pattern recognition it should make no difference if you search for a face in an image or for a phrase in a text.

easp - Saturday, May 21, 2016 - link

Look up TensorFlow. Neural nets. Not UTF-8 strings. Jeeze.

Jon Tseng - Friday, May 20, 2016 - link

I'd assume, given the form factor and the apparent passive cooling, that it's not a massive chip/performance monster along the lines of an NVIDIA GPU. Although I would concede that an ASIC is more power efficient, which does help things.