The SanDisk X400 1TB SSD Review

by Billy Tallis on May 6, 2016 9:00 AM EST

Mixed Random Read/Write Performance

The mixed random I/O benchmark starts with a pure read test and gradually increases the proportion of writes, finishing with pure writes. The queue depth is 3 for the entire test and each subtest lasts for 3 minutes, for a total test duration of 18 minutes. As with the pure random write test, this test is restricted to a 16GB span of the drive, which is empty save for the 16GB test file.
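The timing described above implies six subtests (18 minutes at 3 minutes each). As a rough sketch, the schedule could be laid out as follows. The evenly spaced 20-point steps in the read/write mix are an assumption for illustration; the text only states that the test runs from pure reads to pure writes.

```python
SUBTEST_MINUTES = 3   # stated in the text
TOTAL_MINUTES = 18    # stated in the text

n_subtests = TOTAL_MINUTES // SUBTEST_MINUTES  # 18 / 3 = 6 subtests

# Assumption (not stated in the review): the write share rises in equal
# steps from 0% to 100% across the subtests.
schedule = [
    (100 - i * 100 // (n_subtests - 1), i * 100 // (n_subtests - 1))
    for i in range(n_subtests)
]

for reads, writes in schedule:
    print(f"{reads:3d}% reads / {writes:3d}% writes for {SUBTEST_MINUTES} min")
```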

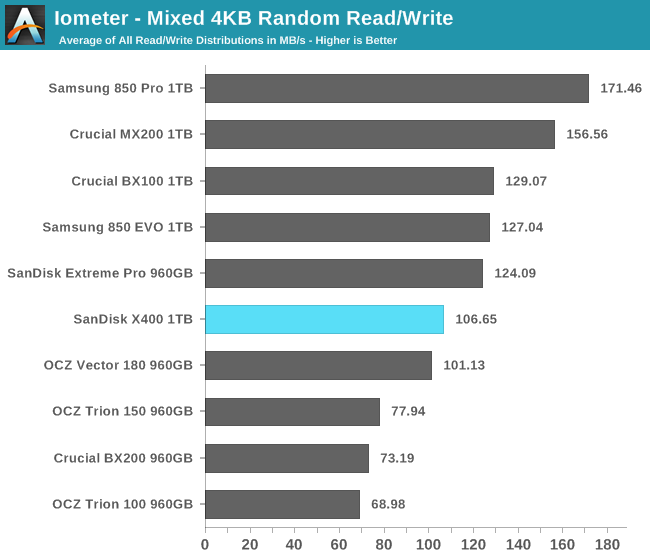

The mixed random I/O performance of the X400 is in the middle of the pack, slightly ahead of the MLC-based OCZ Vector 180 and significantly faster than the other three planar TLC drives.

The X400 is once again the most power efficient planar TLC drive by a wide margin, and also ahead of a few of the MLC drives.

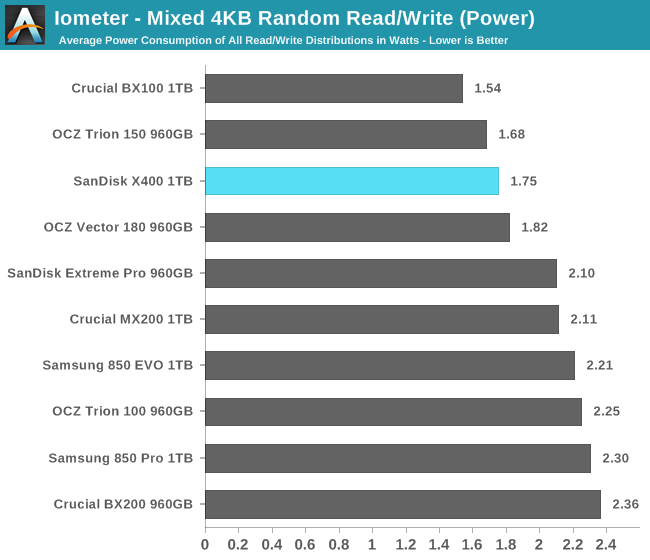

As the proportion of writes increases, the X400's power consumption grows quite slowly. Performance does suffer in the middle of the test, and the spike at the end, when the test shifts to pure writes, is smaller than for the MLC drives and the 850 EVO.

Mixed Sequential Read/Write Performance

The mixed sequential access test covers the entire span of the drive and uses a queue depth of one. It starts with a pure read test and gradually increases the proportion of writes, finishing with pure writes. Each subtest lasts for 3 minutes, for a total test duration of 18 minutes. The drive is filled before the test starts.

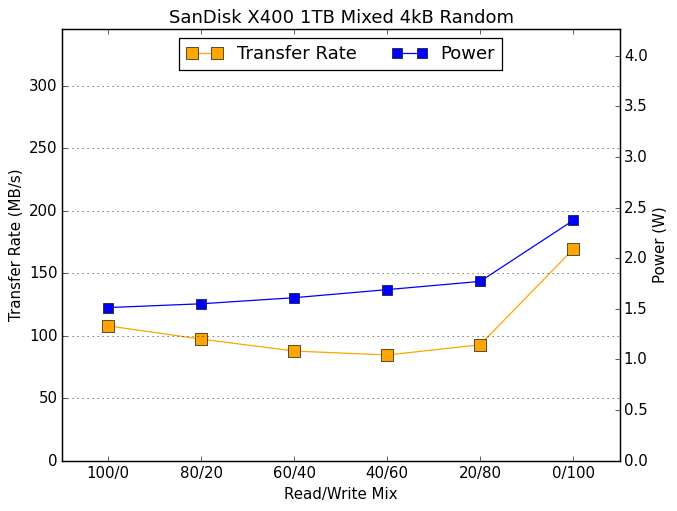

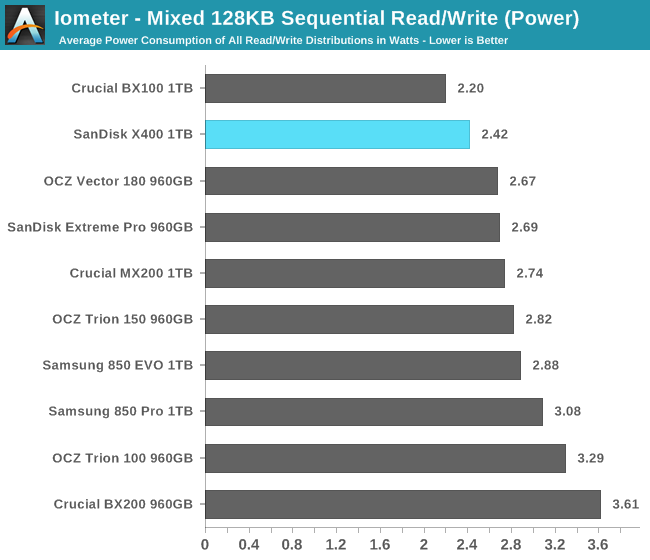

The mixed sequential I/O performance of the SanDisk X400 is not able to match any of the MLC drives, but is still comfortably ahead of the other planar TLC drives.

Average power consumption on the mixed sequential test is lower than that of every drive except the Crucial BX100. In efficiency, the SanDisk X400 manages to tie the Vector 180, but otherwise it stands above only the other planar TLC drives.

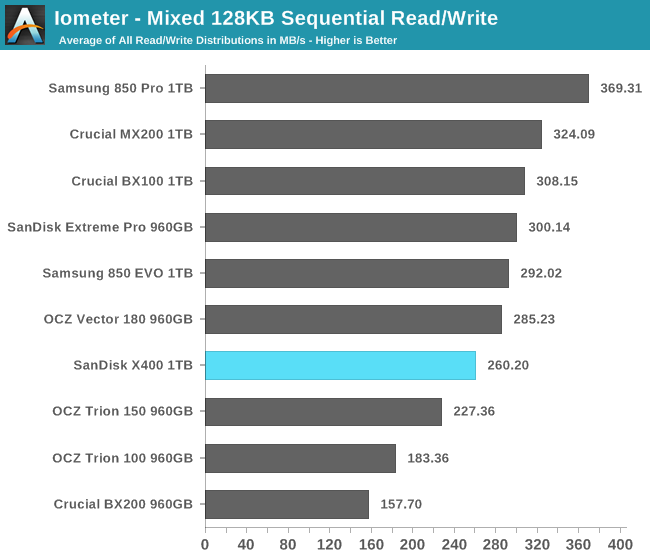

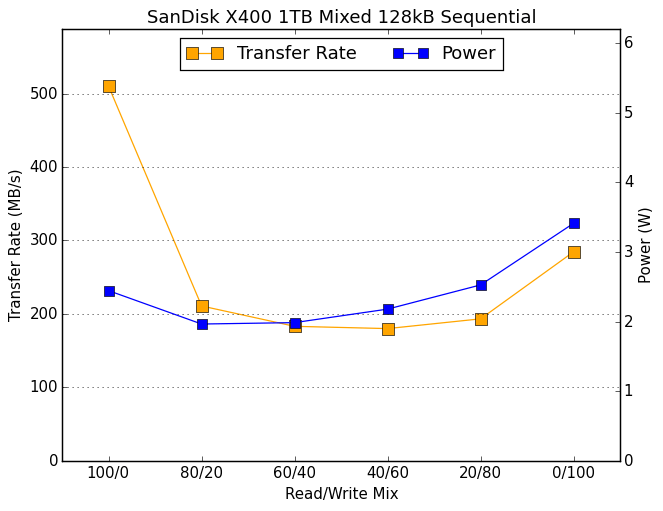

The performance and power curves for the SanDisk X400 are very similar to those of the SanDisk Extreme Pro except in the pure-write phase at the end of the test, where the X400's performance cannot come close to any of the MLC drives. The X400 beats the OCZ Trion 150 overall because its high read speed outweighs the write speed advantage of the Trion 150.

41 Comments

Billy Tallis - Friday, May 6, 2016 - link

I changed TiB to TB. In reality the sizes are only nominal. The exact capacity of the X400 is 1,024,209,543,168 bytes while 1TiB would be 1,099,511,627,776 bytes and 1000GB drives like the 850 EVO are 1,000,204,886,016 bytes.

HollyDOL - Friday, May 6, 2016 - link

yay, that's some black magic with spare areas / crc possibly... X vs Xi prefixes are treacherous... while with kilo it does only 2,4%, with Tera it's already 9,95%... more than enough to hide OS and majority of installed software :-)
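The figures in the two comments above can be checked with a few lines of arithmetic. This is only a sketch: the byte counts are the ones quoted in the thread, and the helper name `binary_excess_percent` is made up for illustration.

```python
# Byte counts as quoted in the comments above.
X400_BYTES = 1_024_209_543_168        # SanDisk X400 1TB
EVO_1000GB_BYTES = 1_000_204_886_016  # a "1000GB" drive such as the 850 EVO

TB = 10**12   # decimal terabyte
TIB = 2**40   # binary tebibyte (1,099,511,627,776 bytes)


def binary_excess_percent(power: int) -> float:
    """Percent by which 1024**power exceeds 1000**power (hypothetical helper)."""
    return (1024**power / 1000**power - 1) * 100


print(f"kilo vs kibi: {binary_excess_percent(1):.2f}%")   # power 1
print(f"tera vs tebi: {binary_excess_percent(4):.2f}%")   # power 4
print(f"X400: {X400_BYTES / TB:.3f} TB, {X400_BYTES / TIB:.3f} TiB")
print(f"1000GB drive: {EVO_1000GB_BYTES / TB:.3f} TB, {EVO_1000GB_BYTES / TIB:.3f} TiB")
```

The gap grows with each prefix step because the 2.4% factor compounds: 1.024 raised to the fourth power is about 1.0995.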

bug77 - Tuesday, May 10, 2016 - link

Then you should put that into the article, unless you're intentionally trying to be misleading ;)

SaolDan - Friday, May 6, 2016 - link

Neat!!

hechacker1 - Friday, May 6, 2016 - link

I'm tempted to buy two 512GB and RAID 0 them. Does anybody know if that would improve performance consistency compared to a single 1TB drive? I don't really care about raw bandwidth, but 4k IOPS for VMs. I'm having trouble finding benchmarks showing what RAID 0 does to latency outliers.

CaedenV - Friday, May 6, 2016 - link

As someone who has been running 2 SSDs in RAID0 for the last few years I would recommend against it. That is not to say that I have had any real issues with it, just that it is not really worth doing.

1) Once you have a RAID your boot time takes much longer as you have to POST, RAID, and then POST again, then boot. This undoes any speed benefit for fast start times that SSDs bring you.

2) It adds points of failure. Having 2 drives means that things are (more or less) twice as likely to fail. SSDs are not as scary as they used to be, but it is still added risk for no real world benefit.

3) Very little real-world benefit. While in benchmarks you will see a bit of improvement, real world workloads are very bursty. And the big deal with RAID with mechanical drives is the ability to queue up read requests in rapid succession to dramatically reduce seek time (or at least hide seek time). With SSDs there is practically no seek time to begin with, so that advantage is not needed. For read/write performance you will also see a minor increase, but typically the bottleneck will be at the CPU, GPU, or now even the bus itself.
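The "(more or less) twice as likely to fail" estimate in point 2 can be sketched numerically. This assumes drives fail independently with the same probability, and the 2% figure is a made-up placeholder, not a measured failure rate for any drive.

```python
def raid0_failure_probability(p_drive: float, n_drives: int) -> float:
    """Chance that at least one of n independent drives fails.

    In RAID 0 a single drive failure loses the whole array, so this is
    also the chance of losing the array.
    """
    return 1 - (1 - p_drive) ** n_drives


# Hypothetical per-drive failure probability over some period (assumption).
p = 0.02

single = raid0_failure_probability(p, 1)
striped = raid0_failure_probability(p, 2)

print(f"single drive:     {single:.4f}")
print(f"two-drive RAID 0: {striped:.4f}")
print(f"ratio:            {striped / single:.2f}x")
```

For small failure probabilities the ratio sits just under 2, which matches the "more or less" hedge in the comment.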

Sure, if you are a huge power-user that is editing multiple concurrent 4K video streams then maybe you will need that extra little bit of performance... but for most people you would never notice the difference.

The reason I did it 4 years ago was simply a cost and space issue. I started with a 240GB SSD that cost ~$250 which was a good deal. Then when the price dropped to $200 I picked up another and put it in RAID 0 because I needed the extra space and could not afford a larger drive. Now with the price of a single 1TB drive so low, and with RAID having just as many potential issues as potential up-sides, I would just stick with a single drive and be done with it.

Impulses - Friday, May 6, 2016 - link

I did it with 2x 128GB 840s at one point and again last year for the same reasons, cost and space... 1TB EVO x2 (using a 256GB SM951 as OS drive). If I were to add more SSD space now I'd probably just end up with a 2TB EVO.

Probably won't need to RAID up drives to form a single large-enough volume again in the future, this X400 is already $225 on Amazon (basically hours after the article went up with the $244 price).

I don't even need the dual 1TB in RAID in an absolute sense, but it's more convenient to have a single large volume shared by games/recent photos than to balance two volumes.

I don't think the downsides are a big deal, but I wouldn't do it for performance reasons either, and I back up often.

phoenix_rizzen - Friday, May 6, 2016 - link

Get 4 of them and stick them into a RAID10. :)

Lolimaster - Friday, May 6, 2016 - link

There's no point in doing RAID 0 with SSDs. You won't decrease latency/access times or improve random 4k reads (they will be worse most of the time).

Sequential gains are meaningless (if it matters to you then you should stick to a PCIe/M.2 NVMe drive).

Pinn - Friday, May 6, 2016 - link

I have the Samsung NVME M.2 512gb in the only m.2 slot and am aching to get more storage. Should I just fill one of my PCIe slots (x99)?