AMD Radeon HD 7870 GHz Edition & Radeon HD 7850 Review: Rounding Out Southern Islands

by Ryan Smith on March 5, 2012 12:01 AM EST

Overclocking: Power, Temp, & Noise

As with the rest of Southern Islands, AMD is making sure to promote the overclockability of their cards. And why not? So far we’ve seen every 7700 and 7900 card overclock by at least 12% on stock voltage, indicating there’s a surprising amount of headroom in these cards. The fact that performance has been scaling so well with overclocking only makes overclocking even more enticing. Who doesn’t want free performance?

So how do Pitcairn and the 7800 series stack up against the 7700 and 7900 series when it comes to overclocking? Quite well, actually; they easily live up to the standards set by AMD’s previous Southern Islands cards.

Radeon HD 7800 Series Overclocking

| | AMD Radeon HD 7870 | AMD Radeon HD 7850 |
|---|---|---|
| Shipping Core Clock | 1000MHz | 860MHz |
| Shipping Memory Clock | 4.8GHz | 4.8GHz |
| Shipping Voltage | 1.219V | 1.213V |
| Overclock Core Clock | 1150MHz | 1050MHz |
| Overclock Memory Clock | 5.4GHz | 5.4GHz |
| Overclock Voltage | 1.219V | 1.213V |

Overall we were able to push our 7870 from 1000MHz to 1150MHz, representing a sizable 15% core overclock. This is now the third SI card we’ve pushed to 1125MHz or 1150MHz, the other two being the 7970 and the 7770, so AMD’s overclocking headroom has been extremely consistent for their upper tier cards.

As for memory overclocking, we hit 5.4GHz on both cards before general performance started to plateau, representing a 12.5% memory overclock. Considering that both cards use the same RAM on the same PCB, and the performance limitation is the memory bus itself, this is consistent with what we would have expected. With that said, we are a bit surprised that we got so far over 5GHz on 2Gb GDDR5 memory chips only rated for 5GHz in the first place; it indicates that Hynix’s GDDR5 production is very mature.
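The percentages quoted above follow directly from the shipping and overclocked clocks in the table. As a minimal sketch of that arithmetic (the function name and the dictionary layout are illustrative assumptions, not anything from AMD's or our test tooling):

```python
def overclock_percent(stock: float, overclocked: float) -> float:
    """Return the percentage increase from the stock clock to the overclocked clock."""
    return (overclocked - stock) / stock * 100.0


# Clocks taken from the overclocking table (core in MHz, memory in GHz).
# The dictionary keys are illustrative labels only.
clocks = {
    "7870 core": (1000, 1150),
    "7850 core": (860, 1050),
    "memory (both cards)": (4.8, 5.4),
}

for name, (stock, oc) in clocks.items():
    print(f"{name}: {overclock_percent(stock, oc):.1f}%")
```

Run as written, this gives 15.0% for the 7870 core, 22.1% for the 7850 core, and 12.5% for the memory on both cards. The 7850's larger relative gain comes from its lower 860MHz starting point rather than from a higher final clock.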

With that said, because of the unique and non-retail nature of the 7850 AMD supplied us, the 7850 overclocking results should be considered low-confidence. The retail 7850 cards will be using simpler and no doubt cheaper coolers, PCBs, and VRMs; all of these can reduce the amount of overclocking headroom a card has. It’s by no means impossible that a 7850 could hit 1050MHz/5.4GHz, but it’s far more likely on a 7870 PCB than it is on a 7850 PCB.

Anyhow we’ll take a look at gaming performance in a moment, but in the meantime let’s take a look at what our overclocks do to power, temperature, and noise.

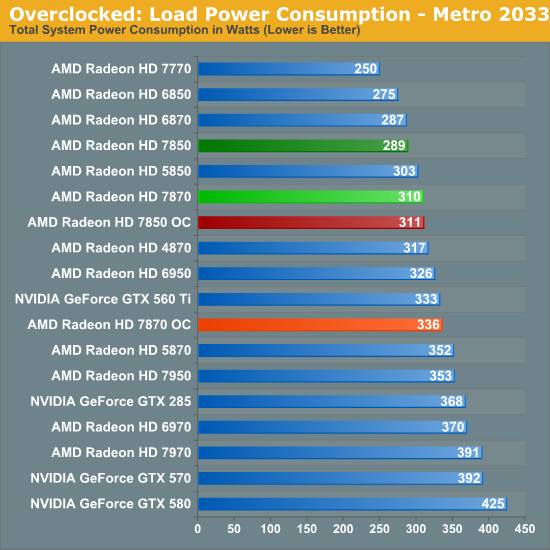

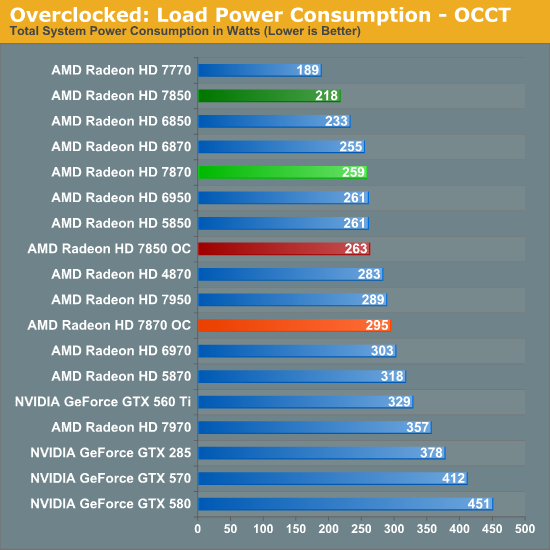

Even without a voltage increase, overclocking does cause power consumption to go up, but not by a great deal. Under Metro the total difference is roughly 21W for the 7850 and 25W for the 7870, at least some of which can be traced back to the increased load on the CPU. On OCCT, by contrast, there’s a difference of nearly 40W on both cards, thanks to the increased PowerTune limits we’re using to avoid any kind of throttling when overclocked. All things considered, with our overclocks power consumption for the 7850 approaches that of the 7870 and the 7870 approaches that of the GTX 560 Ti, which as we’ll see is a fairly small power consumption increase for the performance increase we’re getting.

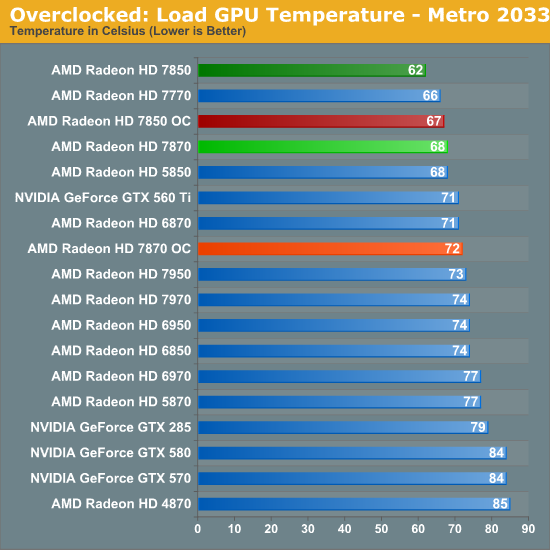

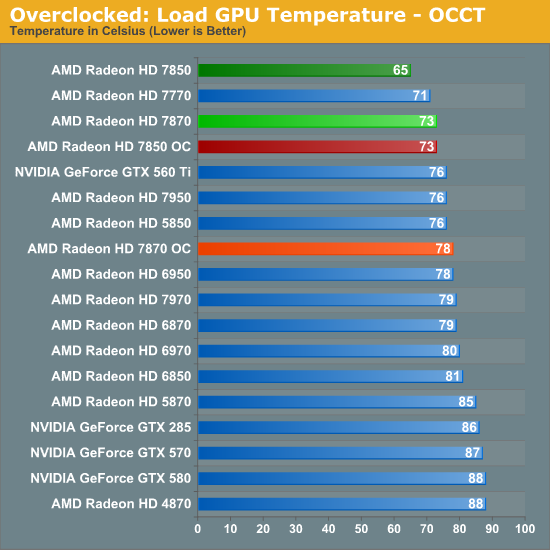

Of course, when power consumption goes up so does temperature. For both cards under Metro and for the 7870 under OCCT this amounts to a 5C increase, while the 7850 rises 8C under OCCT. However, as with our regular temperature readings, we would not suggest putting too much consideration into the 7850 numbers since it’s using a non-retail design.

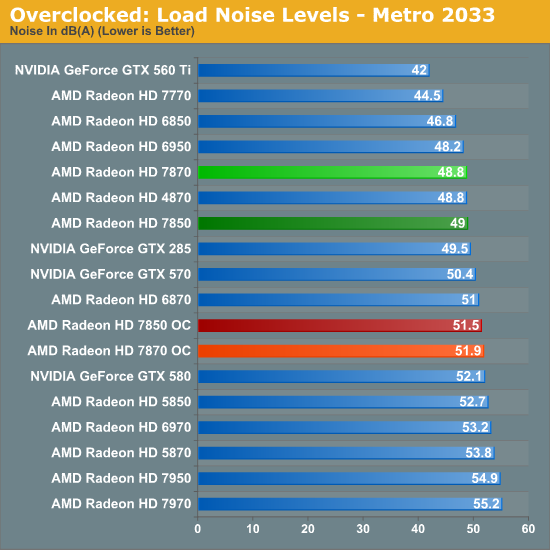

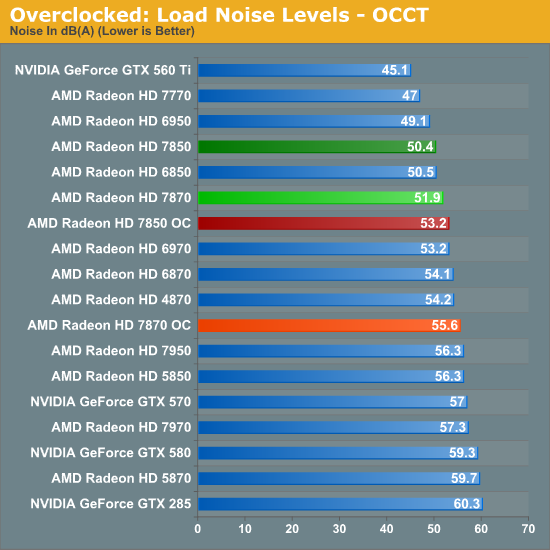

AMD’s conservative fan profiles mean that what are already somewhat loud cards get a bit louder, but in spite of what the earlier power draw differences would imply the increase in noise is rather limited. Paying particular attention to Metro 2033 here, the 7870 is just shy of 3dB louder at 51.9dB, while the 7850 increases by 2.7dB to 51.5dB. OCCT does end up being worse at 2.8dB and 3.7dB louder respectively, but keep in mind this is our pathological case with a much higher PowerTune limit.
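For a sense of scale, a decibel difference can be converted into a ratio of sound power with the standard relation ratio = 10^(dB/10). The sketch below applies that to the increases quoted above; the function name is an illustrative assumption, and the result describes sound power only, not perceived loudness, which grows more slowly.

```python
def db_delta_to_power_ratio(delta_db: float) -> float:
    """Convert a decibel difference into the corresponding sound power ratio."""
    return 10 ** (delta_db / 10)


# Noise increases quoted in the text, in dB (labels are illustrative).
increases = {
    "7850, Metro 2033": 2.7,
    "7870, OCCT": 2.8,
    "7850, OCCT": 3.7,
}

for name, delta in increases.items():
    print(f"{name}: +{delta}dB is about {db_delta_to_power_ratio(delta):.2f}x the sound power")
```

A 3dB increase works out to roughly double the sound power, so every increase listed here lands in the neighborhood of a doubling.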

173 Comments


mak360 - Monday, March 5, 2012 - link

Enjoy, now go and buy

ImSpartacus - Monday, March 5, 2012 - link

Yeah, I'm trying to figure out if a 7850 could go in an Alienware X51. It looks like it uses a 6 pin power connector and puts out 150W of heat.

While we would lose Optimus, would it work?

taltamir - Monday, March 5, 2012 - link

optimus is laptops only. You do not have optimus with your desktop.

ImSpartacus - Monday, March 5, 2012 - link

The X51 has desktop Optimus.

"The icing on the graphics cake is that the X51 is the first instance of desktop Optimus we've seen. That's right: you can actually plug your monitor into the IGP's HDMI port and the tower will power down the GPU when it's not in use. This implementation functions just like the notebook version does, and it's a welcome addition."

http://www.anandtech.com/show/5543/alienware-x51-t...

In reality, if I owned an X51, I would wait so I could shove the biggest 150W Kepler GPU in there for some real gaming.

But I'm sure the X51 will be updated for Kepler and Ivy Bridge, so now wouldn't be the best time to get an X51.

Waiting games are lame...

scook9 - Monday, March 5, 2012 - link

Wrong. Read a review.....

The bigger issue will be the orientation of the PCIe Power Connector I expect. I have a tightly spaced HTPC that currently uses a GTX 570 HD from EVGA because it was the best card I could fit in the Antec Fusion enclosure. If the PCIe power plugs were facing out the side of the card and not the back I would have not been able to use it. I expect the same consideration will apply to the even smaller X51

kungfujedis - Monday, March 5, 2012 - link

he does. x51 is a desktop with optimus.

http://www.theverge.com/2012/2/3/2768359/alienware...

Samus - Monday, March 5, 2012 - link

EA really screwed AMD over with Battlefield 3. There's basically no reason to consider a Radeon card if you plan on heavily playing BF3, especially since most other games like Skyrim, Star Wars, Rage, etc, all run excellent on any $200+ card, with anything $300+ being simply overkill.

The obvious best card for Battlefield 3 is a Geforce GTX 560 TI 448 Cores for $250-$280, basically identical in performance to the GTX570 in BF3. Even those on a budget would be better served with a low-end GTX560 series card unless you run resolutions above 1920x1200.

If I were AMD, I'd concentrate on increasing Battlefield 3 performance with driver tweaks, because it's obvious their architecture is superior to nVidia's, but these 'exclusive' titles are tainted.

kn00tcn - Monday, March 5, 2012 - link

screwed how? only the 7850 is slightly lagging behind, & historically BC2 was consistently a little faster on nv

also BF3 has a large consistent boost since feb14 drivers (there was another boost sometime in december, benchmark3d should have the info for both)

chizow - Tuesday, March 6, 2012 - link

@ Samus

BF3 isn't an Nvidia "exclusive", they made sure to remain vendor agnostic and participate in both IHV's vendor programs. No pointing the finger and crying foul on this game, it just runs better on Nvidia hardware but I do agree it should be running better than it does on this new gen of AMD hardware.

http://www.amd4u.com/freebattlefield3/

http://sites.amd.com/us/game/games/Pages/battlefie...

CeriseCogburn - Monday, March 26, 2012 - link

In the reviews here SHOGUN 2 total war is said to be the very hardest on hardware, and Nvidia wins that - all the way to the top.

--

So on the most difficult game, Nvidia wins.

Certainly other factors are at play on these amd favored games like C1 and M2033 and other amd optimized games.

--

Once again, on the MOST DIFFICULT to render Nvidia has won.