Samsung Galaxy S 2 (International) Review - The Best, Redefined

by Brian Klug & Anand Lal Shimpi on September 11, 2011 11:06 AM EST

Understanding Rendering Techniques

It's been years since I've had to describe the differences in rendering techniques, but given the hardware we're talking about today, it's about time for a quick refresher. Despite the complexities involved in CPU and GPU design, both processors work in a manner that's pretty easy to understand. The GPU fundamentally has one function: to determine the color of each pixel displayed on the screen for a given frame. The input the GPU receives, however, is very different from a list of pixel coordinates and colors.

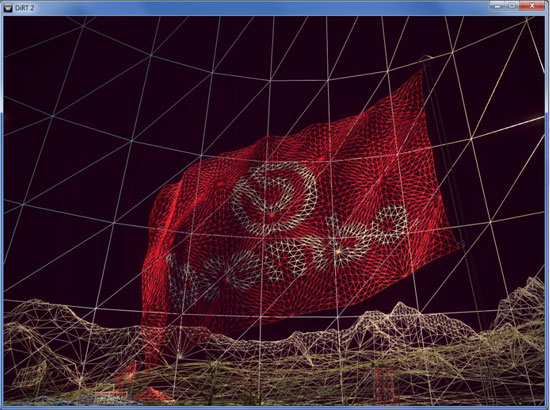

A 3D application or game will first provide the GPU with a list of vertex coordinates. Each set includes the coordinates for three vertices in space; together these describe the size, shape and position of a triangle. A single frame is composed of hundreds to millions of these triangles. Literally everything you see on screen is composed of triangles.

Having more triangles (polygons) can produce more realistic scenes but it requires a lot more processing on the front end. The trend in 3D gaming has generally been towards higher polygon counts over time.

The GPU's first duty is to take this list of vertices and convert them into triangles on a screen. Doing so results in a picture similar to what we've got above. We're dealing with programmable GPUs now, so it's possible to run code against these vertices to describe their interactions or effects on them. An explosion in an earlier frame may have caused the vertices describing a character's elbow to move. The explosion will also impact lighting on our character. There's going to be a set of code that describes how the aforementioned explosion impacts vertices and another snippet of code that describes what vertices it impacts. These code segments run and modify details of the vertices at this stage.

With the geometry defined, the GPU's next job is rasterization: figuring out which pixels each triangle covers. From this point on the GPU stops dealing in vertices and starts working in pixel coordinates.
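To make the rasterization step concrete, here is a minimal Python sketch. It assumes a common textbook approach (testing each pixel center against the triangle's three edges), not the method of any particular GPU; the function names `edge` and `rasterize` and the 4x4 screen are made up for illustration.

```python
def edge(a, b, p):
    """Signed area test: positive when p lies to the left of the edge a->b."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def rasterize(tri, width, height):
    """Return the (x, y) pixels whose centers fall inside the triangle."""
    v0, v1, v2 = tri
    covered = []
    for y in range(height):
        for x in range(width):
            p = (x + 0.5, y + 0.5)  # sample at the pixel center
            w0 = edge(v1, v2, p)
            w1 = edge(v2, v0, p)
            w2 = edge(v0, v1, p)
            # Inside when all three edge tests agree in sign (either winding).
            all_pos = w0 >= 0 and w1 >= 0 and w2 >= 0
            all_neg = w0 <= 0 and w1 <= 0 and w2 <= 0
            if all_pos or all_neg:
                covered.append((x, y))
    return covered


# Hypothetical triangle on a 4x4 pixel screen.
pixels = rasterize(((0, 0), (4, 0), (0, 4)), width=4, height=4)
print(len(pixels), "pixels covered")
```

Real hardware only walks the pixels near each triangle and handles shared edges more carefully, but the output is the same kind of thing: a list of pixel coordinates ready to be colored.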

Once rasterized, it's time to give these pixels some color. The color of each pixel is determined by the texture that covers that pixel and/or the pixel shader program that runs on that pixel. Similar to vertex shader programs, pixel shader programs describe effects on pixels (e.g. flicker bright orange at interval x to look like fire).

Textures are exactly what they sound like: wallpaper for your polygons. Knowing pixel coordinates the GPU can go out to texture memory, fetch the texture that maps to those pixels and use it to determine the color of each pixel that it covers.

There's a lot of blending and other math that happens at this stage to deal with corner cases where you don't have perfect mapping of textures on polygons, as well as dealing with what happens when you've got translucency in your textures. After you get through all of the math however the GPU has exactly what it wanted in the first place: a color value for every pixel on the screen.

Those color values are written out to a frame buffer in memory and the frame buffer is displayed on the screen. This process continues (hopefully) dozens of times per second in order to deliver a smooth visual experience.

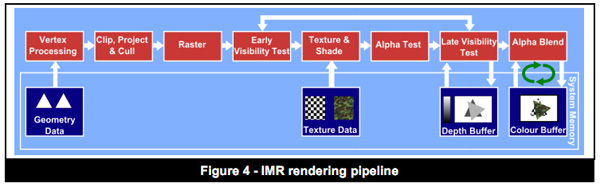

The pipeline I've just described is known as an immediate mode renderer. With a few exceptions, immediate mode renderers were the common architectures implemented in PC GPUs over the past 10+ years. These days pure immediate mode renderers are tough to find though.

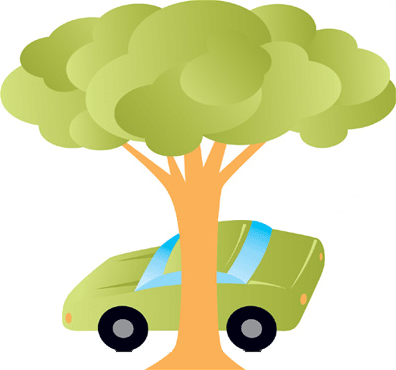

IMRs render the full car and the tree, even though part of the car is occluded

Immediate mode renderers (IMRs) brute force the problem of determining what to draw on the screen. They take polygons as they receive them from the CPU, then manipulate and shade them. The biggest problem here is that although data for every polygon is sent to the GPU, some of those polygons will never be displayed on the screen. A character with thousands of polygons may be mostly hiding behind a pillar, but a traditional immediate mode renderer will still put in all of the work necessary to plot its geometry and shade its pixels, even though they'll never be seen. This is called overdraw. Overdraw unfortunately wastes time, memory bandwidth and power - hardly desirable when you're trying to deliver high performance and long battery life. In the old days of IMRs it wasn't uncommon to hear of 4x overdraw in a given scene (i.e. drawing 4x as many pixels as are actually visible to the user). Overdraw becomes even more of a problem as scene complexity increases.
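A rough way to put a number on overdraw is to divide the pixels shaded by the pixels that end up visible. The sketch below assumes a made-up 8x8 scene built from axis-aligned rectangles standing in for polygons; `rect_pixels` and the scene layout are hypothetical.

```python
def rect_pixels(x0, y0, x1, y1):
    """Pixels covered by an axis-aligned rectangle (a stand-in for a polygon)."""
    return {(x, y) for y in range(y0, y1) for x in range(x0, x1)}


# Hypothetical 8x8 scene: a wall, a character, and a pillar in front of both.
scene = [
    ("wall", rect_pixels(0, 0, 8, 8)),
    ("character", rect_pixels(4, 2, 8, 6)),
    ("pillar", rect_pixels(2, 0, 6, 8)),
]

# A brute-force IMR shades every pixel of every surface it is handed.
shaded = sum(len(pixels) for _, pixels in scene)

# Only one color per screen pixel survives in the frame buffer.
visible = len(set().union(*(pixels for _, pixels in scene)))

overdraw = shaded / visible
print(f"shaded={shaded} visible={visible} overdraw={overdraw:.2f}x")
```

Even this tiny scene shades 112 pixels to fill a 64 pixel screen, or 1.75x overdraw. Stack more layers on top of each other and the ratio climbs quickly.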

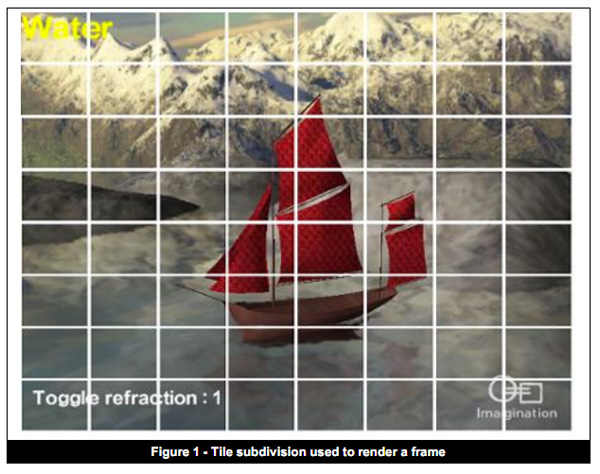

Tile Based Deferred Rendering

On the opposite end of the spectrum we have tile based deferred rendering (TBDR). Immediate mode renderers work in a very straightforward manner. They take vertices, create polygons, transform and light those polygons and finally texture/shade/blend the pixels on them. Tile based deferred renderers take a slightly different approach.

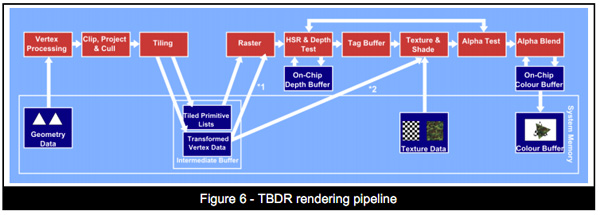

TBDRs subdivide the scene into smaller tiles on the order of a few hundred pixels. Vertex processing and shading continue as normal, but before rasterization the scene is carved up into tiles. This is where the deferred label comes in. Rasterization is deferred until after tiling and texturing/shading is deferred even longer, until after overdraw is eliminated/minimized via hidden surface removal (HSR).
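The tiling step can be sketched as sorting triangles into per-tile lists. The example below assumes 16x16 pixel tiles and a conservative bounding-box test. Both are illustrative choices rather than details of any shipping GPU, and `bin_triangles` is a hypothetical name.

```python
TILE = 16  # assumed tile edge in pixels (16x16 = 256 pixels per tile)


def bin_triangles(triangles, width, height):
    """Map each tile (tx, ty) to the indices of triangles that may touch it."""
    tiles_x = (width + TILE - 1) // TILE
    tiles_y = (height + TILE - 1) // TILE
    bins = {(tx, ty): [] for ty in range(tiles_y) for tx in range(tiles_x)}
    for index, tri in enumerate(triangles):
        xs = [v[0] for v in tri]
        ys = [v[1] for v in tri]
        # Conservative test: every tile overlapped by the bounding box.
        tx0 = max(int(min(xs)) // TILE, 0)
        tx1 = min(int(max(xs)) // TILE, tiles_x - 1)
        ty0 = max(int(min(ys)) // TILE, 0)
        ty1 = min(int(max(ys)) // TILE, tiles_y - 1)
        for ty in range(ty0, ty1 + 1):
            for tx in range(tx0, tx1 + 1):
                bins[(tx, ty)].append(index)
    return bins


# Hypothetical 64x32 screen (4x2 tiles) with one small and one large triangle.
triangles = [
    ((2, 2), (10, 3), (5, 12)),
    ((10, 10), (40, 12), (20, 30)),
]
bins = bin_triangles(triangles, width=64, height=32)
for tile in sorted(bins):
    print(tile, bins[tile])
```

Each tile now carries only the geometry that might land in it, which is what lets the later per-tile work stay small enough to handle on chip.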

Hidden surface removal is performed long before we ever get to the texturing/shading stage. If the frontmost surface being rendered is opaque, there's absolutely zero overdraw in a TBDR architecture. Everything behind the frontmost opaque surface is discarded by performing a per-pixel depth test once the scene has been tiled. In the event of multiple overlapping translucent surfaces, overdraw is still minimized. Only the frontmost opaque surface and the translucent surfaces in front of it are rendered. HSR is performed one tile at a time; only the geometry needed for a single tile is depth tested to keep the problem manageable.

With all hidden surfaces removed then, and only then, is all texture data fetched and all pixel shader code executed. Rendering (or more precisely texturing and shading) is deferred until after a per-pixel visibility test is passed. No additional work is expended and no memory bandwidth wasted. Only what is visible in the final scene is rasterized, textured and shaded on each tile.
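Here is a minimal sketch of that deferral for a single tile, assuming opaque, axis-aligned rectangles with one depth value each (smaller means closer). The names `resolve_visibility` and `shade_tile` are hypothetical, and real hardware does this with dedicated on-chip logic rather than dictionaries.

```python
def resolve_visibility(surfaces, tile_w, tile_h):
    """Per-pixel depth test: keep only the nearest opaque surface per pixel."""
    nearest = {}
    for name, depth, (x0, y0, x1, y1) in surfaces:
        for y in range(max(y0, 0), min(y1, tile_h)):
            for x in range(max(x0, 0), min(x1, tile_w)):
                if (x, y) not in nearest or depth < nearest[(x, y)][0]:
                    nearest[(x, y)] = (depth, name)
    return nearest


def shade_tile(surfaces, tile_w, tile_h):
    """Shade each pixel once, only after visibility is fully resolved."""
    visible = resolve_visibility(surfaces, tile_w, tile_h)
    framebuffer = {}
    shaded = 0
    for pixel, (_, name) in visible.items():
        framebuffer[pixel] = name  # stand-in for texture fetch + pixel shader
        shaded += 1
    return framebuffer, shaded


# Hypothetical 8x8 tile; smaller depth means closer to the viewer.
surfaces = [
    ("wall", 10, (0, 0, 8, 8)),
    ("character", 5, (4, 2, 8, 6)),
    ("pillar", 1, (2, 0, 6, 8)),
]
framebuffer, shaded = shade_tile(surfaces, 8, 8)
_, shaded_reversed = shade_tile(list(reversed(surfaces)), 8, 8)
print(shaded, shaded_reversed)
```

Both submission orders shade exactly 64 pixels, one per screen pixel in the tile, which is the zero overdraw case for opaque geometry.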

The application doesn't need to worry about the order polygons are sent for rendering when dealing with a TBDR; the hidden surface removal process takes care of everything.

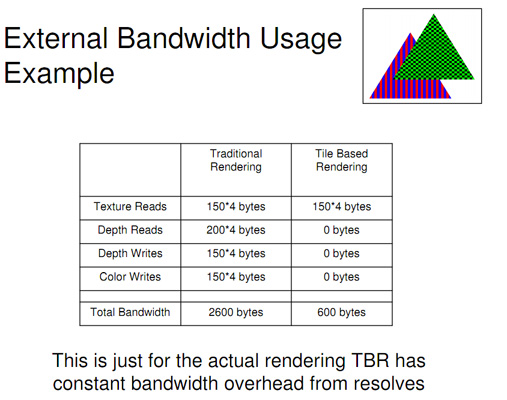

In memory bandwidth constrained environments TBDRs do incredibly well. Furthermore, the efficiencies of a TBDR really shine when running applications and games that are more shader heavy rather than geometry heavy. As a result of the extensive hidden surface removal process, TBDRs tend not to do as well in scenes with lots of complex geometry.

What's In Between Immediate Mode and Deferred Rendering?

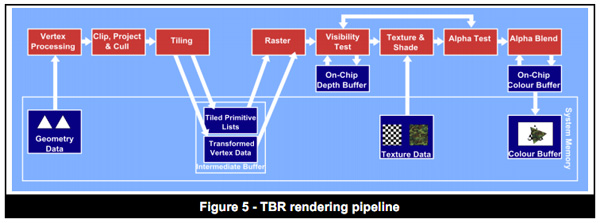

These days, particularly in the mobile space, many architectures refer to themselves as "tile based". Unfortunately the term can have a wide variety of meanings. The tile based deferred rendering architecture I described above really only applies to GPUs designed by Imagination Technologies. Everything else falls into the category of tile based immediate mode renderers, or immediate mode renderers with early-z.

These GPUs look like IMRs but they implement one or both of the following: 1) scene tiling, 2) early z rejection.

Scene tiling is very similar to what I described in the section on TBDRs. Each frame is divided up into tiles and work is done on a per-tile basis at some point in the rendering pipeline. The goal of dividing the scene into tiles is to simplify the problem of rendering and better match the workload to the hardware (e.g. since no GPU is a million execution units wide, you make the workload more manageable for your hardware). Caches also behave a lot better when working on small tiles.

The big feature that this category of GPUs implements is early-z rejection. Instead of waiting until after the texturing/shading stage to determine pixel visibility, these architectures implement a coarse test for visibility earlier in the pipeline.

Each vertex has a depth value and using those values you can design logic to find out what polygons (or parts of polygons) are occluded from view. GPU makers like ATI and NVIDIA introduced these early visibility tests years ago (early-z or hierarchical-z are some names you may have heard). The downside here is that early-z techniques only work if the application submits vertices in a front-to-back order, which does require extra work on the application side. IMRs process polygons in the order they're received, and you can't reject anything if you're not sure if anything will be in front of it. Even if an application packages up vertex data in the best way possible, there are still situations where overdraw will occur.
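The order dependence is easy to see in a toy model. The sketch below assumes a per-pixel depth check before shading. Real early-z hardware tests at a coarser granularity, so treat this as an idealized best case; `count_shaded` and the scene are made up.

```python
def rect_pixels(x0, y0, x1, y1):
    """Pixels covered by an axis-aligned rectangle (a stand-in for a polygon)."""
    return {(x, y) for y in range(y0, y1) for x in range(x0, x1)}


def count_shaded(surfaces, order):
    """Shade a pixel only if it passes the depth test at submission time."""
    depth_buffer = {}
    shaded = 0
    for name in order:
        depth, pixels = surfaces[name]
        for p in pixels:
            if depth < depth_buffer.get(p, float("inf")):
                depth_buffer[p] = depth  # nearest surface seen so far
                shaded += 1              # pixel shader runs for this pixel
    return shaded


# Hypothetical scene: name -> (depth, covered pixels); smaller depth is closer.
surfaces = {
    "wall": (10, rect_pixels(0, 0, 8, 8)),
    "character": (5, rect_pixels(4, 2, 8, 6)),
    "pillar": (1, rect_pixels(2, 0, 6, 8)),
}

front_to_back = count_shaded(surfaces, ["pillar", "character", "wall"])
back_to_front = count_shaded(surfaces, ["wall", "character", "pillar"])
print(front_to_back, back_to_front)
```

Submitted front to back, the test rejects everything hidden and only 64 pixels are shaded. Submitted back to front, nothing can be rejected and all 112 are shaded, which is why the sorting burden lands on the application.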

The good news is you get some of the benefits of a TBDR without running into trouble should geometry complexities increase. The bad news is that a non-TBDR architecture will still likely have higher amounts of overdraw and be less memory bandwidth efficient than a TBDR.

Most modern PC GPUs fall into this category. Both NVIDIA's Fermi and AMD's Cayman GPUs do some amount of tiling although they have their roots in immediate mode rendering.

The Mobile Landscape

Understanding the difference between IMRs, IMRs with early-z, TBRs and TBDRs, where do the current ultra mobile GPUs fall? Imagination Technologies' PowerVR SGX 5xx is technically the only tile based deferred renderer that allows for order independent hidden surface removal.

Qualcomm's Adreno 2xx and ARM's Mali-400 both appear to be tile based immediate mode renderers that implement early-z. This is particularly confusing because ARM lists the Mali-400 as featuring "advanced tile-based deferred rendering and local buffering of intermediate pixel states". The secret is in ARM's optimization documentation that states: "One specific optimization to do for Mali GPUs is to sort objects or triangles into front-to-back order in your application. This reduces overdraw." The front-to-back sort requirement is necessary for most early-z technologies to work properly. These GPUs fundamentally tile the scene but don't perform full order independent hidden surface removal. Some aspects of the traditional rendering pipeline are deferred but not to the same extent as Imagination's design.

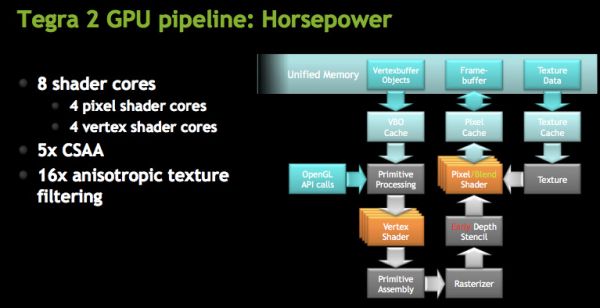

NVIDIA's GeForce ULP in the Tegra 2 is an IMR with early-z. NVIDIA has long argued that its design is the best for future games with increasing geometry complexities as a result of its IMR design.

Today there's no real benefit to not building a TBDR in the ultra mobile space. Geometry complexities aren't very high and memory bandwidth does come at a premium. Moving forward however, the trend is likely going to mimic what we saw in the PC space: towards more polygon heavy games. There is one hiccup though: Apple.

In the evolution of the PC graphics industry the installed base of tile based deferred renderers was extremely small. Imagination's technology surfaced in two discrete GPUs: STMicro's Kyro and Kyro II, but neither was enough to stop NVIDIA's momentum at the time. Since immediate mode renderers were the norm, games simply developed around their limitations. AMD and NVIDIA both eventually implemented elements of tiling and early-z rejection, but TBDRs never took off in PCs.

In the ultra mobile space Apple exclusively uses Imagination Technologies GPUs, which I mentioned above are tile based deferred renderers. Apple also happens to be a major player, if not the biggest, in the smartphone/tablet gaming space today. Any game developer looking to put out a successful title is going to make sure it runs well on iOS hardware. Game developers will likely rely on increasing visual quality through pixel shader effects rather than ultra high polygon counts. As long as Imagination Technologies is a significant player in this space, game developers will optimize for TBDRs.

132 Comments


VivekGowri - Sunday, September 11, 2011 - link

I literally cannot wait to read this article, and I similarly cannot wait for SGS2 to launch in the US.

ImSpartacus - Sunday, September 11, 2011 - link

You guys don't get early access to drafts?

niva - Monday, September 12, 2011 - link

I own an original Galaxy S, until it's been proven that Samsung updates to the latest Android within a month after major releases I will not buy anything but a Nexus phone in the future (assuming I even go with Android). By the time that decision has to be made I'm optimistic there will be unlocked WP7 Nokias available.

Havor - Monday, September 12, 2011 - link

Seriously , whats the problem, I was running 2.2 and 2.3 when they came out, could have them sooner, I just dont like to run roms with beta builds.

So you never heard of Rooting and Custom Roms?

It's the nature of companies to have long and COSTLY internal testing routines, done mainly by people with 9 to 5 jobs, as delivering buggy roms is bad for their name, but then so is not updating, though that's a lot less hurtful, as most people don't care or know any better.

Next to that, if your phone is customized with extra crapware by your provider it can take months before you get an update, even though Samsung delivered one a long time ago.

The rooting scene is totally different, it's done by nerds with passion for what they do, and yes the early/daily builds have bugs but they also get reported and fixed months quicker by the scene.

And imho the final updates are just as stable as the factory builds.

Don't like how your Android is working?

Stop bitching and fix it yourself, it's not that hard, as it is an open source platform, just make sure you can root your phone before you buy it.

The following website explains it all.

http://androidforums.com/galaxy-s-all-things-root/...

http://androidforums.com/galaxy-s-all-things-root/

vision33r - Monday, September 12, 2011 - link

If it's your personal phone, you can do whatever you want. However like some of us here with jobs that let us pick phones. One requirement is the phone has to be stock and no rooting allowed.

Samsung is about the worst of the 3 makers in terms of software updates.

niva - Monday, September 12, 2011 - link

Seriously calm down, I've heard plenty about rooting and custom roms but phone hackery is not something I'm interested in right now. I don't have the time or energy for it. I shouldn't have to manually go through rooting and updating my phone, especially when security issues are involved.

I like the way 2.2 is working on the SGS. I bought this phone from a friend who upgraded and it's not something I would've paid the retail price for. I've not run into anything so far that's made me actually bother with the rooting and manual upgrade process. I've not read into rooting the phone or updating it, but I'm sure if I get into it this will take me a long time (hours/days) which I shouldn't need to sacrifice to run the latest version of the OS.

From the political standpoint the blame is both on Samsung and T-Mobile apparently in terms of getting the new revisions out.

From my personal standpoint I despise all companies who do not use the default Android distro, running skins and secondary apps, on the phones they ship out. While some of the things they do are nice, it slows down their ability to keep up with android revisions.

On the other hand, my wife's Nexus (original one) updates faster than internet posts saying Android 2.3.x has been rolled out. It's friggin awesome. She had one problem with battery draining really fast after a recent upgrade but I managed to fix that after a couple of hours of forum searching and trying different things.

So it's simple, if I will buy another Android in the future, it will be a Nexus phone, where I know from personal experience that everything works in terms of having the latest and greatest. Notice the Nexus S is made by Samsung, it's for the most part identical to the phone I have, yet gets the updates immediately and doesn't have the known security problems I'm exposed to.

ssj4Gogeta - Monday, September 12, 2011 - link

Well, the international version got 2.3.3 around ~3 months ago here (and earlier for other countries).

poohbear - Tuesday, September 13, 2011 - link

vision33r u dont know what you're talking about. People bitch and complain about software updates, but how are the quality of those updates? when its updated too soon there are bugs and ppl complain, updated later ppl complain about the wait times. I remember last year Motorola said they're not updating their XT720 to android 2.2., they're leaving it at 2.1. S korea Motorola was the only branch that decided to do it, but guess what? 2.2 was too much for the hardware in the XT720 to handle, and it ran slooooow! XT720 users all over complained about it, but the reality is the phone couldnt handle it. 90% of smartphone users want something stable that works, they dont care about having the latest and greatest Android build. So if Samsung errs on the side of quality and takes more time to release stable quality software, then all the power to them!

anishannayya - Friday, September 23, 2011 - link

Actually, if updates are your hard-on, then you'd likely be looking at Motorola in the future (due to the Google acquisition).

The entire reason why the Nexus lines of phones are quick to get updates is because they are co-developed with Google. As a result, these phones are the ones the Google developers are using to test the OS. When it is ready to go, it is bug free on the device, so Samsung/HTC can roll it out immediately.

At the end of the day, any locked phone is plagued by carrier bloatware, which is the biggest slowdown in software release. Just buy an unlocked phone, like this one, in the future.

ph00ny - Sunday, September 11, 2011 - link

It's awesome to see this article finally

I'm glad François Simond aka supercurio contributed to the article

Btw that slot on the left is for the hand strap which is very popular in asia for accessory attachments