OCZ Vertex 3 MAX IOPS & Patriot Wildfire SSDs Reviewed

by Anand Lal Shimpi on June 23, 2011 4:35 AM EST

A Note on Real World Performance

The majority of our SSD test suite is focused on I/O bound tests. These are benchmarks that intentionally shift the bottleneck to the SSD and away from the CPU/GPU/memory subsystem in order to give us the best idea of which drives are the fastest. Unfortunately, as many of you correctly point out, these numbers don't always give you a good idea of how tangible the performance improvement is in the real world.

Some of them do. Our 128KB sequential read/write tests as well as the ATTO and AS-SSD results give you a good indication of large file copy performance. Our small file random read/write tests tell a portion of the story for things like web browser cache accesses, but those are difficult to directly relate to experiences in the real world.

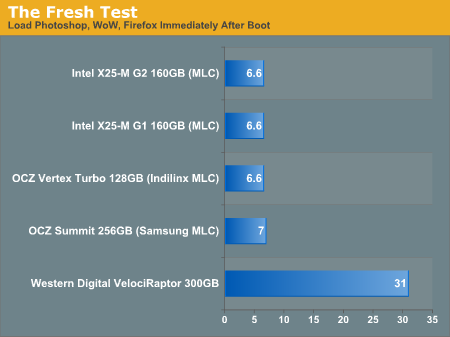

So why not exclusively use real world performance tests? It turns out that although the move from a hard drive to a decent SSD is a tremendous improvement, the differences between individual SSDs are harder to quantify in a single real world metric. Take application launch time for example. I stopped including that data in our reviews because the graphs ended up looking like this:

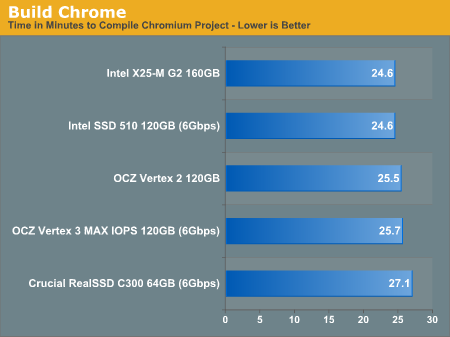

All of the SSDs performed the same. It's not just application launch times though. Here is data from our Chrome Build test timing how long it takes to compile the Chromium project:

Even going back two generations of SSDs, at the same capacity nearly all of these drives perform within a couple of percent of one another. Note that the Vertex 3 is even a 6Gbps drive and doesn't even outperform its predecessor.

So do all SSDs perform the same then? The answer there is a little more complicated. As I mentioned at the start of this review, I do long term evaluation of all drives I recommend in my own personal system. If a drive is particularly well recommended I'll actually hand out drives for use in the systems of other AnandTech editors. For example, back when I wanted to measure actual write amplification on SandForce drives I sent three Vertex 2s to three different AnandTech editors. I had them use the drives normally for two to three months and then looked at the resulting wear on the NAND.
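For readers unfamiliar with the term, write amplification is conventionally defined as the ratio of data physically written to the NAND to data the host asked the drive to write. The sketch below only illustrates that ratio; the function name and the figures are hypothetical and are not measurements from the Vertex 2 experiment described above.

```python
def write_amplification(nand_written: float, host_written: float) -> float:
    """Return NAND writes divided by host writes (both in the same unit)."""
    if host_written <= 0:
        raise ValueError("host_written must be positive")
    return nand_written / host_written


# Hypothetical figures, for illustration only (not measured data).
host_gib = 1000.0  # data the operating system wrote to the drive
nand_gib = 600.0   # data the controller actually wrote to flash

wa = write_amplification(nand_gib, host_gib)
print(f"write amplification: {wa:.2f}")
```

A ratio below 1.0, as in this made-up example, is only possible on a controller that reduces the data before writing it, for instance through compression. On other designs the ratio is 1.0 or higher.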

In doing these real world use tests I get a good feel for when a drive is actually faster or slower than another. My experiences typically track with the benchmark results but it's always important to feel it first hand. What I've noticed is that although single tasks perform very similarly on all SSDs, it's during periods of heavy I/O activity that you can feel the difference between drives. Unfortunately these periods of heavy I/O activity aren't easily measured, at least in a repeatable fashion. Getting file copies, compiles, web browsing, application launches, IM log updates and searches to all start at the same time while properly measuring overall performance is near impossible without some sort of automated tool. Unfortunately most system-wide benchmarks are more geared towards CPU or GPU performance and as a result try to minimize the impact of I/O.

The best we can offer is our Storage Bench suite. In those tests we are actually playing back the I/O requests captured of me using a PC over a long period of time. While all other bottlenecks are excluded from the performance measurement, the source of the workload is real world in nature.
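To make the idea of trace playback concrete, here is a minimal sketch that replays a list of recorded I/O requests against a scratch file and times the result. It is not the Storage Bench tool: the trace format, the `replay` function and the sample requests are all assumptions made for illustration.

```python
import os
import tempfile
import time

# Hypothetical trace: (operation, byte offset, size in bytes).
TRACE = [
    ("write", 0, 4096),
    ("write", 131072, 131072),
    ("read", 0, 4096),
    ("read", 131072, 131072),
]


def replay(trace, path):
    """Replay the trace against the file at path.

    Returns (elapsed seconds, total bytes transferred).
    """
    flags = os.O_RDWR | os.O_CREAT | getattr(os, "O_BINARY", 0)
    fd = os.open(path, flags)
    moved = 0
    try:
        start = time.perf_counter()
        for op, offset, size in trace:
            os.lseek(fd, offset, os.SEEK_SET)
            if op == "write":
                moved += os.write(fd, b"\0" * size)
            elif op == "read":
                moved += len(os.read(fd, size))
            else:
                raise ValueError(f"unknown operation: {op}")
        os.fsync(fd)  # flush pending writes before stopping the clock
        elapsed = time.perf_counter() - start
    finally:
        os.close(fd)
    return elapsed, moved


with tempfile.TemporaryDirectory() as tmp:
    elapsed, moved = replay(TRACE, os.path.join(tmp, "scratch.bin"))
    print(f"{moved} bytes in {elapsed:.6f} s")
```

Note that this sketch goes through the operating system's file cache, so the reads in particular will rarely touch the drive. A real playback tool would need to bypass that cache and preserve details such as queue depth, which this example ignores.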

What you have to keep in mind is that a performance advantage in our Storage Bench suite isn't going to translate linearly into the same overall performance impact on your system. Remember these are I/O bound tests, so a 20% increase in your Heavy 2011 score is going to mean that the drive you're looking at will be 20% faster in that particular type of heavy I/O bound workload. Most desktop PCs aren't under that sort of load constantly, so that 20% advantage may only be seen 20% of the time. The rest of the time your drive may be no quicker than a model from last year.
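The arithmetic behind that caveat can be sketched with an Amdahl's law style estimate. The function below is an illustration, not part of our test suite. It assumes that a 20% higher score means I/O bound work finishes 1.2x faster, and that `io_fraction` is the share of total time the system spends I/O bound. Both are simplifying assumptions.

```python
def overall_speedup(io_fraction: float, io_speedup: float) -> float:
    """Estimate whole-system speedup when only the I/O bound share gets faster."""
    if not 0.0 <= io_fraction <= 1.0:
        raise ValueError("io_fraction must be between 0 and 1")
    if io_speedup <= 0:
        raise ValueError("io_speedup must be positive")
    # Time outside I/O is unchanged; time inside I/O shrinks by io_speedup.
    new_time = (1.0 - io_fraction) + io_fraction / io_speedup
    return 1.0 / new_time


# A drive that is 20% faster in I/O bound work, on a system that is
# I/O bound 20% of the time (both figures taken from the example above).
gain = overall_speedup(io_fraction=0.20, io_speedup=1.20)
print(f"{(gain - 1.0) * 100:.1f}% faster overall")  # prints "3.4% faster overall"
```

Under these assumptions the 20% benchmark advantage shrinks to roughly 3% overall, which is in line with the small gaps in the application launch and Chrome Build results.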

The point of our benchmarks isn't to tell you that only the newest SSDs are fast, but rather to show you the best performing drive at a given price point. The best values in SSDs are going to be last year's models without a doubt. I'd say that the 6Gbps drives are interesting mostly for the folks that do a lot of large file copies, but for most general use you're fine with an older drive. Almost any SSD is better than a hard drive (almost) and as long as you choose a good one you won't regret the jump.

I like the SF-2281 series because, despite things like the BSOD issues, SandForce has put a lot more development and validation time into this controller than its predecessor. Even Intel's SSD 320 is supposed to be more reliable than the X25-M G2 that came before it. Improvements do happen from one generation to the next but they're evolutionary - they just aren't going to be as dramatic as the jump from a hard drive to an SSD.

So use these numbers for what they tell you (which drive is the fastest) but keep in mind that a 20% advantage in an I/O bound scenario isn't going to mean that your system is 20% faster in all cases.

112 Comments


Paazel - Thursday, June 23, 2011 - link

Do you allow your computer to sleep? I had a Vertex 2 die on me, and forum speculation led me to believe that allowing my computer to sleep may have been the culprit.

Anand Lal Shimpi - Thursday, June 23, 2011 - link

My personal machine that it's deployed in is a notebook that is allowed to sleep (and does so) regularly.

I also don't do any of the odd stability optimizations on my testbeds either. Sleep is always enabled and definitely allowed to happen (I don't always catch my testbeds after they've finished a long test so they'll go off to sleep).

While I do believe that earlier issues may have been sleep related, I'm not sure about this one in particular.

Take care,

Anand

Ryan Smith - Thursday, June 23, 2011 - link

Just to throw in my own $0.02, although I put my Vertex 2 in a desktop, my results are the same as what Anand has seen. My desktop hybrid sleeps regularly, and I have not encountered any issues.

JasonInofuentes - Friday, June 24, 2011 - link

+1 On an Agility 2 90GB, MicroCenter Sandforce 64GB drive and Agility 2 40GB in a desktop, netbook and HTPC setting, all allowed to sleep. Indeed I blame many of my PC related issues to my inability to sleep.

sam. - Saturday, June 25, 2011 - link

I have a 120GB Vertex with the Indilinx controller and had mine die on me after about a year and a half of average use in my laptop. (Mind you the RMA process was good, and they replaced it with a new identical SSD). I had nearly 2700 power on times (putting my laptop to sleep multiple times a day) and 3.7 terabytes written onto the SSD before it started corrupting registry files and BSODing.

To be honest, a year and a half as a lifespan seems really bad for what was a high end product, though from what I hear the Sandforce controller is better in terms of reliability. I am still willing to let my laptop sleep, though I am doing my best to write less to the SSD.

kahwaji_n - Thursday, June 23, 2011 - link

I don't think so. Maybe if your computer hibernates a lot then it may be the reason for that, because when a computer sleeps the RAM still holds the data and little data has to be written to the disk drive, contrary to hibernation, where the RAM is powered down and all of its data is written to the disk drive. If you have Windows 7 and SSDs in a RAID setup (where no TRIM command can be passed to the controller) and your computer hibernates periodically, run the performance index in Windows 7 and see how severely the performance has degraded.

iwod - Thursday, June 23, 2011 - link

I think the first few graphs / charts pretty much sum up what I have been saying. With double the Seq Read and Random Read numbers, you only get less than 10% performance difference. The bottleneck for the majority of our workload has shifted back from SSD storage to CPU processing speed.

Which means the best time to get an SSD is now, if you can afford it and the storage space is enough for a main OS drive.

L. - Thursday, June 23, 2011 - link

Err .. it's going to be dirt cheap pretty soon .. I wouldn't spend "GFX bucks" on a storage device tbh. (Seriously, for that price I prefer my 2TB WD Green raid10 ... makes so much more sense even though it does not serve the same purpose...)

khan321 - Thursday, June 23, 2011 - link

Why no mention of the increased lifespan of 32nm NAND? This is a massive benefit to me over 25nm.

B3an - Thursday, June 23, 2011 - link

Because Anand has pointed this out before. There's absolutely nothing to worry about regarding the lifespan of 25nm with a good controller, as it would last many many decades. The NAND flash will lose its charge before this happens anyway.