The Intel SSD 320 Review: 25nm G3 is Finally Here

by Anand Lal Shimpi on March 28, 2011 11:08 AM EST

Posted in: IT Computing, Storage, SSDs, Intel, Intel SSD 320

AnandTech Storage Bench 2011: Much Heavier

I didn't expect to have to debut this so soon, but I've been working on updated benchmarks for 2011. Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

I'll be sharing the full details of the benchmark in some upcoming SSD articles but here are some details:

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
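To make the reporting concrete, here is a minimal Python sketch of how an average data rate could be split into reads, writes and combined. It is an illustration only, not the actual Storage Bench tooling: the `IoRecord` type, its fields, and the choice to divide bytes moved by the time spent servicing those requests are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class IoRecord:
    """One request from a hypothetical trace (field names are assumptions)."""
    kind: str          # "read" or "write"
    size_bytes: int    # payload size of the request
    service_s: float   # seconds the drive spent servicing this request


def average_mb_per_s(records: Iterable[IoRecord], kind: Optional[str] = None) -> float:
    """Bytes moved divided by service time, optionally for one kind of request."""
    selected = [r for r in records if kind is None or r.kind == kind]
    total_bytes = sum(r.size_bytes for r in selected)
    busy_s = sum(r.service_s for r in selected)
    if busy_s == 0:
        return 0.0  # nothing was serviced, so there is no rate to report
    return (total_bytes / 1_000_000) / busy_s


# Made-up trace: two reads and one write.
trace = [
    IoRecord("read", 50_000_000, 0.25),
    IoRecord("write", 20_000_000, 0.25),
    IoRecord("read", 100_000_000, 0.5),
]

read_rate = average_mb_per_s(trace, "read")
write_rate = average_mb_per_s(trace, "write")
combined_rate = average_mb_per_s(trace)
```

In this made-up trace the combined figure sits between the read and write figures, which is why a write-heavy workload can mask a drive with strong read performance unless the two are broken out.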

There's also a new light workload for 2011. This is a far more reasonable, typical everyday use case benchmark. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running last year.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests. All of the older tests are still run on our X58 platform.

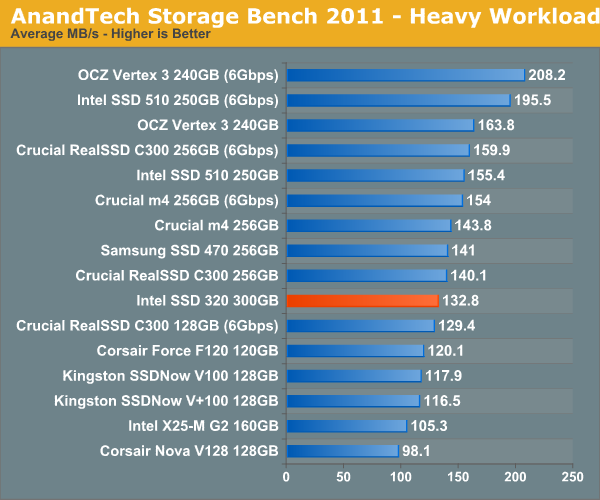

AnandTech Storage Bench 2011 - Heavy Workload

We'll start out by looking at average data rate throughout our new heavy workload test:

Overall performance is decidedly last generation. The 320 is within striking distance of the 510 but is slower overall in our heavy workload test.

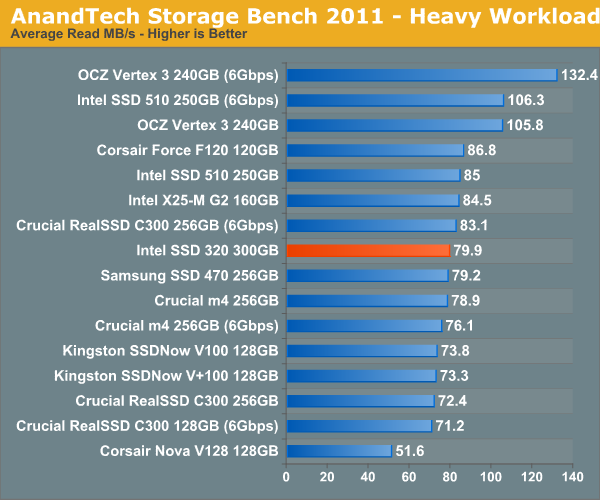

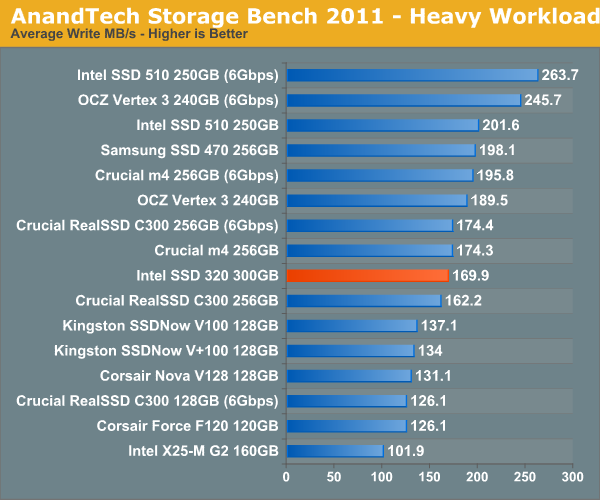

The breakdown of reads vs. writes tells us more of what's going on:

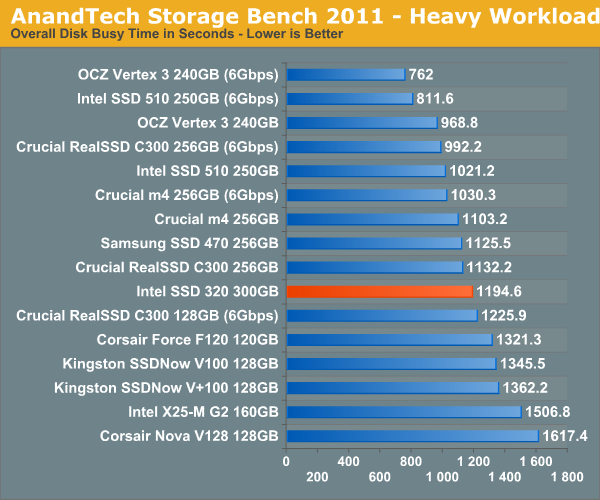

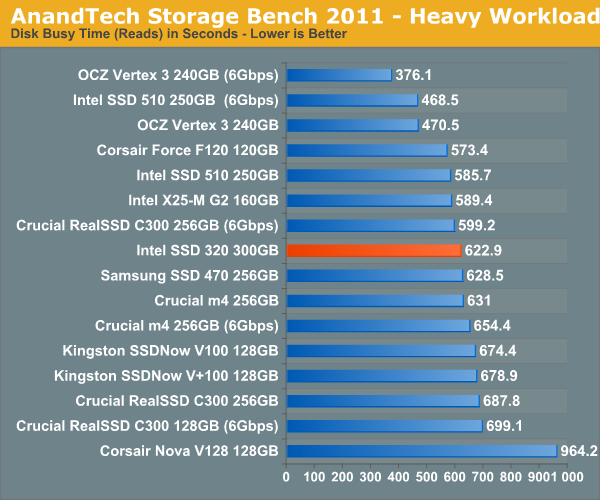

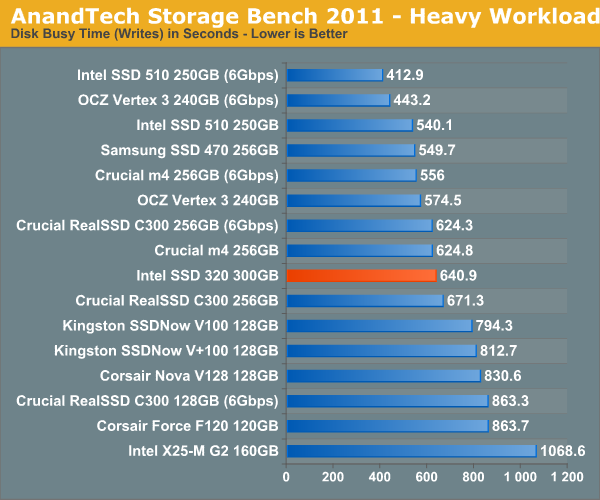

The next three charts represent the same data in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes any and all idle periods; it is just how long the SSD was busy doing something:
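One way to picture a busy-time figure that excludes idle periods is to take the union of the intervals during which at least one request was outstanding. The Python sketch below does that. It is a hypothetical illustration under that assumption, not a description of how the charts were actually produced; `disk_busy_seconds` and the sample intervals are invented for the example.

```python
from typing import Iterable, Tuple


def disk_busy_seconds(intervals: Iterable[Tuple[float, float]]) -> float:
    """Total time covered by (start_s, end_s) request intervals.

    Overlapping requests are counted once and gaps between requests
    (idle time) are not counted at all.
    """
    busy = 0.0
    current_start = None
    current_end = None
    for start, end in sorted(intervals):
        if current_end is None or start > current_end:
            # A gap: close out the previous busy stretch and start a new one.
            if current_end is not None:
                busy += current_end - current_start
            current_start, current_end = start, end
        else:
            # Overlaps the current busy stretch, so extend it if needed.
            current_end = max(current_end, end)
    if current_end is not None:
        busy += current_end - current_start
    return busy


# Two overlapping requests, a long idle gap, then one more request.
requests = [(0.0, 2.0), (1.0, 3.0), (10.0, 11.0)]
busy = disk_busy_seconds(requests)
wall_clock = max(end for _, end in requests) - min(start for start, _ in requests)
```

Here the wall-clock span is 11 seconds but the drive is only busy for 4 of them, which is the distinction the busy-time charts are meant to capture.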

194 Comments


hackztor - Monday, March 28, 2011 - link

very sad from Intel. Was waiting for these and disappointed. Last gen performance... Price is not very exciting either.

Wolfpup - Monday, March 28, 2011 - link

Looks good to me. Intel reliability, FINALLY larger drive sizes + automatic full disk encryption is awesome.

Only bad thing is I can't actually find them on Amazon or Newegg yet, and I'd like one for a new system...

vol7ron - Monday, March 28, 2011 - link

You must be blind.

This is to the X25-M G2 what Vista was to XP. It's a, "don't buy unless you have to" situation.

OCZ/SandForce have to be laughing - Intel is in shambles. First, SSD delays, then Mobo chipset recalls, then Chandra Anand quits, and now this crap.

Sure, their SSD is still reliable and it's not a bad product, but the pricing isn't even that great. Maybe they don't need to implement the SF-2k series algorithm, but some sort of compression engine would be nice. Claiming powerout reliability is like saying, "we just don't know how to make these capacitors hold a charge." Honestly, I don't care if you have to add a backup NiCad battery. Sure Intel has had reliability down, but they've had over a year to work on speed - this is akin to the Western Digital SSD release; of course it's much more attractive, but it's the reliability vs speed situation.

Who knows, maybe Intel's laughing at us just as hard as OCZ is laughing at them.

Griswold - Tuesday, March 29, 2011 - link

Actually, I'm laughing at you and your nonsense theories. Thanks for that.

vol7ron - Tuesday, March 29, 2011 - link

:) a little lite accusations for a comical release

Thermogenic - Tuesday, March 29, 2011 - link

Intel is in shambles? LOL.

vol7ron - Tuesday, March 29, 2011 - link

Okay, maybe a little bit of exaggeration, but this wasn't a strong release and they've had their large share of problems lately - don't you think?

x86 will have a tough time dealing with RISC. I think RISC is just a better technology, since it doesn't have to deal with legacy instructions. That translates to performances and power efficiencies.

While Intel does still have some room to shrink the die, there isn't much room with current technologies. Also, the ARM chips will continue to decrease in die shrinks as well. That being said, there was some evidence that there could be a cheap alternative to Silicon on the horizon, which would allow for smaller theoretical components. (http://www.dailytech.com/Researchers+Claim+Molybde...

The way I see it, this is similar to when Intel moved the mem controller off the chipset and onto the die. They could have done it sooner, but they are good at extracting $$ from their customers. They reached the high clock rate track and had to re-think their position.

While Intel may not be in shambles per se, in its current state, Intel has dished out a lot of debt, they haven't done too well with their NVidia relationship, and they're struggling in the mobile space. And I think AMD is still the better choice on the server front, if I recall correctly. This is all going on while their CEO is on the Obama's Job Council, which I still say that was probably not the best decision.

So, for a company that's been ahead for the last few years, they still have some short-term capabilities, but it's the long term that's important. They need to be successful in breaking into and building a new market segment (Mobile/HTPCs/SSDs).

Wiggy McShades - Tuesday, April 5, 2011 - link

Right now intel has to at the very least TRY to hold on to x86 because they only have one competitor in this area, a competitor that is severely limited compared to intel, which means huge profits. Intel could easily license the arm architecture and produce an soc that'd blow everything out of the water. The reason I'm saying this is their manufacturing capabilities are the best in the world which means a LOT for producing microprocessors and currently their cpu's are just a RISC design that translates x86 instructions, so they'd really not even have to hire new engineers. Although then that means a loss in confidence in x86 and could lead to a transition to the arm architecture which they don't control. They probably know x86 isn't going to make it forever, but holding on to it for now is extremely profitable. We'll see how crafty they can get in shoehorning x86 into the mobile arena and that should truly decide how much longer x86 will be around. Intel can easily stand on their manufacturing capabilities and the insanely talented engineers they employ to compete in any up and coming markets, but it's just in their best interest to try and keep x86 dominance for now.

FunBunny2 - Saturday, January 14, 2012 - link

Just re-reading, and Intel hasn't built a X86 chip with the instruction set in hardware in more than a decade. Their chips are RISC with a X86 suit.

piroroadkill - Friday, July 5, 2013 - link

Haha, it's fun reading these old comments, this is one so ill-conceived it makes me laugh.

I have an Intel 320 120GB and it still works perfectly. Same can't be said for the OCZ Vertex 2 it replaced, and of course, we all know what happened to OCZ and partly Sandforce's reputation..