- Hybrid SLI

- nForce 780a

- nForce 750a

- nForce 730a

- GeForce 8200 Motherboard GPU

- New PureVideo HD features

Over the coming days we’ll touch on all of this, but for now we’ll stick with the most exciting piece of news: Hybrid SLI.

We’ll start things off with a shocker then: NVIDIA is going to be putting integrated GPUs on all of their motherboards. Yes, you’re reading that right: soon every NVIDIA motherboard will ship with an integrated GPU, from the highest-end enthusiast board to the lowest-end budget board. Why? Hybrid SLI.

It’s no secret that enthusiast-class GPUs have poor power characteristics; even when idling they eat a lot of power. It’s just not possible right now to make a G80 or G92 based video card power down enough that it isn’t drawing a non-trivial amount of power. NVIDIA sees this as a problem, particularly with the most powerful setups (two 8800GTXs, for example), and wants to avoid it in the future. Their solution is the first part of Hybrid SLI, and the reason for adding integrated GPUs to every board: what NVIDIA is calling HybridPower.

If you’ve read our article on AMD’s Hybrid Crossfire technology then you already have a good idea of where this is leading. NVIDIA wants to achieve better power reductions by switching to an integrated GPU when doing non-GPU intensive tasks and shutting off the discrete GPU entirely. The integrated GPU eats a fraction of the power of even an idle discrete GPU, with the savings increasing as we move up the list of discrete GPUs towards the most powerful ones.

To do this, NVIDIA needs to get integrated GPUs into more than just their low-end boards, which is why they are going to begin putting integrated GPUs in all of their boards. NVIDIA seems rather excited about what this is going to do for power consumption. To illustrate the point they gave us some power consumption numbers from their own testing. We can’t confirm them at this point, and they certainly represent the worst case for idle power (and so the best case for savings), but we know from our own testing that NVIDIA’s numbers can’t be too far off.

Currently NVIDIA is throwing around savings of up to 400W. This assumes a pair of high-end video cards in SLI that eat 300W each at load and 200W each at idle. NVIDIA doesn’t currently have any cards with this kind of power consumption, but they might with future cards. Even if this isn’t the case, we saw a live demo where NVIDIA showed this technology saving around 120W on a rig they brought, so 100W looks like a practical target right now. The returns will diminish as we work our way towards less powerful cards, but NVIDIA is arguing that the savings are still worth it. The electricity to idle an enthusiast GPU is not trivial, so even cutting 60W is on par with getting rid of an incandescent lightbulb.
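To put those numbers in perspective, here is a quick back-of-the-envelope sketch of the arithmetic. The function names, the optional `igpu_watts` term, and the 8 idle hours per day are our own assumptions for illustration; NVIDIA has not given us a power figure for the integrated GPU, so the default of zero simply reproduces the "up to" number.

```python
# Rough idle-power savings estimate for HybridPower.
# igpu_watts and idle_hours_per_day are illustrative assumptions, not NVIDIA figures.

def idle_savings_watts(cards: int, idle_watts_per_card: float, igpu_watts: float = 0.0) -> float:
    """Power saved at idle when every discrete card is shut off and the integrated GPU takes over."""
    return cards * idle_watts_per_card - igpu_watts


def yearly_kwh(watts_saved: float, idle_hours_per_day: float) -> float:
    """Convert a wattage saving into kilowatt-hours per year."""
    return watts_saved * idle_hours_per_day * 365 / 1000


# NVIDIA's hypothetical SLI pair: two cards idling at 200W each.
best_case = idle_savings_watts(cards=2, idle_watts_per_card=200)

# The roughly 120W saving we saw in the live demo.
demo_rig = 120.0

print(best_case)                 # 400.0
print(yearly_kwh(demo_rig, 8))   # 350.4
```

Plugging in a non-zero `igpu_watts` shows how quickly the savings shrink as the discrete card's idle draw gets closer to that of the integrated GPU.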

NVIDIA is looking at this as a value-added feature of their nForce line and not something they want to be charging people for. What we are being told is that the new motherboards supporting this technology will not cost anything more than the motherboards they are replacing, which relieves an earlier concern we had about basically being forced to buy another GPU.

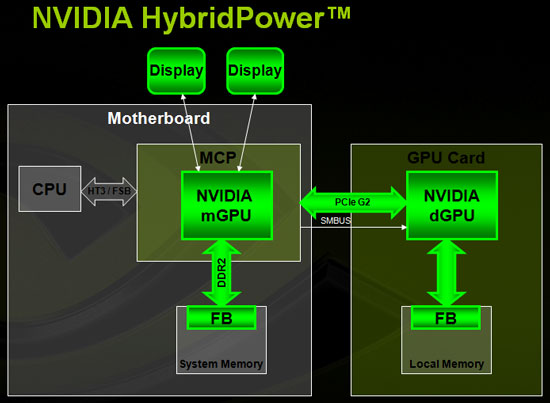

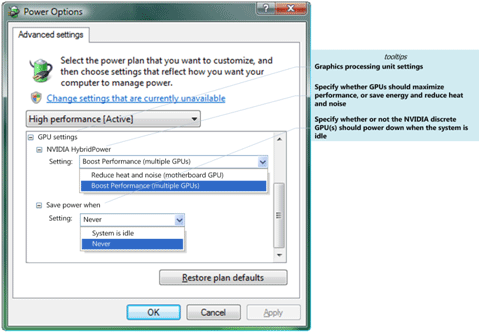

We also have some technical details about how NVIDIA will be accomplishing this. On a technical level we find it impressive, but we’re also waiting on more information. On a supported platform (capable GPU + capable motherboard, Windows only for now) an NVIDIA application will be active that controls the status of the HybridPower technology. When the computer is under a light load, the discrete GPU will be turned off entirely via the SMBus, and all rendering will be handled by the integrated GPU. If the system needs more GPU power, the discrete GPU will be woken up over the SMBus and will take over rendering.
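As a rough illustration of the behavior described above, the switching decision can be modeled as a tiny state machine. This is only our sketch of the concept, not NVIDIA's software: the class name, the `wake_threshold` load fraction, and the idea of a single load number are all hypothetical, and the SMBus commands are represented by nothing more than a boolean.

```python
from enum import Enum


class ActiveGpu(Enum):
    INTEGRATED = "integrated"
    DISCRETE = "discrete"


class HybridPowerController:
    """Toy model of the HybridPower switching logic (hypothetical, for illustration)."""

    def __init__(self, wake_threshold: float = 0.5):
        # wake_threshold is a made-up load fraction between 0.0 and 1.0.
        self.wake_threshold = wake_threshold
        self.active = ActiveGpu.INTEGRATED
        self.discrete_powered = False

    def update(self, gpu_load: float) -> ActiveGpu:
        """Pick the GPU that should render at the given load."""
        if gpu_load >= self.wake_threshold:
            # Stand-in for waking the discrete GPU over the SMBus.
            self.discrete_powered = True
            self.active = ActiveGpu.DISCRETE
        else:
            # Stand-in for powering the discrete GPU off over the SMBus.
            self.discrete_powered = False
            self.active = ActiveGpu.INTEGRATED
        return self.active


controller = HybridPowerController()
for load in (0.1, 0.9, 0.2):
    print(load, controller.update(load).value)
```

A real implementation would need to decide what "load" means and avoid flapping between GPUs on brief spikes; we have no details on how NVIDIA plans to handle either.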

Because of the switching among GPUs, the motherboard will now be the location of the various monitor connections, with NVIDIA showing us boards that have a DVI and a VGA connector attached. Operation when using the integrated GPU is pretty straightforward, while when the discrete GPU is active things get a bit more interesting. When in this mode, the front buffer (completed images ready to be displayed) will be transferred from the discrete GPU to the front buffer for the integrated GPU (in this case a region of allocated system memory) and finally displayed by the electronics housed in the integrated GPU. By having the integrated GPU be the source of all output, this allows seamless switching between the two GPUs.

All of this technology is Vista only right now, with NVIDIA hinting that it will rely on some new features in Vista SP1, due this quarter. We also got an idea of the amount of system memory the integrated GPU needs to pull this off: NVIDIA is throwing around a 256MB minimum frame buffer for that chip.

NVIDIA demonstrated this at their event with some alpha software, which will be finished and smoothed out as it gets closer to shipping. For now the transition between GPUs isn’t smooth (the current process has some flickering involved), but NVIDIA has assured us this will be resolved by the time it ships and we have no reason not to believe them. The other issue we saw in the demonstration is that the software is currently all manual; the user decides which mode to run in. NVIDIA wasn’t as straightforward about when switching would become automatic. It’s sounding like the first shipping implementations will still be manual: automatic switching is already possible at the hardware level, but the software won’t catch up until later this year. This could be a stumbling block for NVIDIA, but we’re going to have to wait and see.

The one piece of information we don’t have that we’d like is the performance impact of running a high-load situation on a discrete GPU with HybridPower versus without. Transferring the frame buffer takes resources (though nothing that PCIe can’t handle), but we’re more worried about what this means for system memory bandwidth. Since the copied buffer lands in system memory before being displayed, copying large buffers into memory and then reading them back out for display could take up a decent chunk of system memory bandwidth (a problem that would be worse with higher-end cards and the higher resolutions commonly used with them). Further, we’re getting the impression that this is going to add another frame of delay between rendering and displaying (basically this could operate in a manner similar to triple buffering), which would be a problem for users if the input lag reaches the point where it affects their enjoyment of a game. NVIDIA says performance won’t be a problem, but it’s something we really need to see for ourselves. It becomes a lot harder to recommend and sell HybridPower if it’s going to cause any significant performance issues, and we’ll be following this up with solid data once we have it.
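To get a feel for the scale of the concern, here is a rough estimate of the memory traffic and added latency. Everything in it is our own assumption rather than an NVIDIA figure: a 32-bit (4 bytes per pixel) frame buffer, one write into system memory per rendered frame plus one read per display refresh, a 2560x1600 panel at 60Hz, and dual-channel DDR2-800 with its theoretical peak of 12.8GB/s as the point of comparison.

```python
# Assumed theoretical peak for dual-channel DDR2-800 (2 x 6.4 GB/s).
DDR2_800_DUAL_CHANNEL_GBPS = 12.8


def frame_bytes(width: int, height: int, bytes_per_pixel: int = 4) -> int:
    """Size of one frame buffer, assuming 4 bytes per pixel by default."""
    return width * height * bytes_per_pixel


def copy_traffic_gb_per_s(width: int, height: int, fps: float, refresh_hz: float,
                          bytes_per_pixel: int = 4) -> float:
    """System memory traffic: one write per rendered frame plus one read per display refresh."""
    size = frame_bytes(width, height, bytes_per_pixel)
    return size * (fps + refresh_hz) / 1e9


def extra_frame_delay_ms(fps: float) -> float:
    """Latency added by holding one additional frame before display."""
    return 1000.0 / fps


traffic = copy_traffic_gb_per_s(2560, 1600, fps=60, refresh_hz=60)
share = traffic / DDR2_800_DUAL_CHANNEL_GBPS * 100

print(round(traffic, 2))                   # 1.97 (GB/s)
print(round(share))                        # 15 (% of theoretical peak)
print(round(extra_frame_delay_ms(60), 1))  # 16.7 (ms)
```

Under these assumptions the copy costs roughly 2GB/s, a noticeable but not crippling share of peak bandwidth, and one extra frame at 60fps adds about 17ms. Real-world figures will depend on how NVIDIA actually implements the transfer, which is exactly what we want to test.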

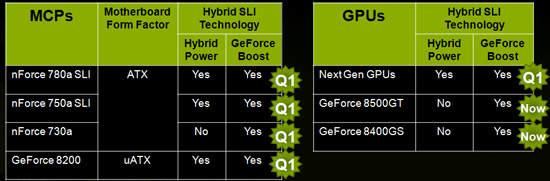

Moving on, the other piece of Hybrid SLI that we heard about today is what NVIDIA is calling GeForce Boost. Here the integrated GPU will be put into SLI rendering with a discrete GPU, which can greatly improve performance. Unlike HybridPower this is a low-end feature rather than a high-end one; NVIDIA is looking to use it in situations where low-end discrete GPUs like the 8400GS and 8500GT are installed. Here the performance of the integrated GPU is similar to that of the discrete GPU, so they’re fairly well matched. Since the rendering mode will be AFR, this matching is critical: paired with a high-end GPU, the integrated GPU would have the opposite effect and slow rendering down (and any gain would be tiny anyway, as the speed gap is just too great).
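Why matching matters under AFR can be shown with a deliberately simplified model. We assume strict alternation (each GPU renders every other frame) with no synchronization overhead, so the pair can never run faster than twice the slower GPU. The frame rates below are invented for illustration and are not measurements of any NVIDIA part.

```python
def afr_fps(gpu_a_fps: float, gpu_b_fps: float) -> float:
    """Frame rate under strict alternate frame rendering.

    Each GPU renders every other frame, so the pair is limited to
    twice the rate of the slower GPU (simplified, no overhead).
    """
    return 2 * min(gpu_a_fps, gpu_b_fps)


def boost_gain(discrete_fps: float, integrated_fps: float) -> float:
    """Speed-up relative to the discrete GPU running alone (values below 1.0 are a slowdown)."""
    return afr_fps(discrete_fps, integrated_fps) / discrete_fps


# Well-matched low-end pair: a clear gain.
print(round(boost_gain(30, 25), 2))    # 1.67

# High-end discrete GPU paired with a slow integrated GPU: a slowdown.
print(round(boost_gain(100, 15), 2))   # 0.3
```

In this model a hypothetical 30fps card paired with a 25fps integrated GPU gains about two thirds, while a 100fps card paired with a 15fps integrated GPU drops to 30% of its standalone speed.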

NVIDIA is showcasing this as a way to use the integrated GPU to boost the performance of the discrete GPU, but since this also requires new platforms, we’d say it’s more important for the upgrade market for now: if you need more performance on a cheap computer, drop in a still-cheap low-end GPU and watch performance improve with SLI. We don’t have an idea of the exact performance impact, but we already know how SLI scales today on higher-end products, so the results should be similar. It’s worth noting that while GeForce Boost can in principle be used in conjunction with HybridPower, that won’t be the case for now; since low-end discrete GPUs use so little power in the first place, it doesn’t make much sense to turn them off. This will be far more important when it comes to notebooks later on, where both the power savings of HybridPower and the performance gains of GeForce Boost can be realized. In fact, once everything settles down, notebooks could be the biggest beneficiary of the complete Hybrid SLI package out of any segment.

NVIDIA’s roadmap for Hybrid SLI calls for the technology to be introduced in Q1 with the 780a, 750a, 730a, and GeForce 8200 motherboard chipsets, followed by Intel in Q2 and notebooks later in the year along with other stragglers. On the GPU side the current 8400GS and 8500GT support GeForce Boost but not HybridPower. Newer GPUs coming this quarter will support the full Hybrid SLI feature set, and it’s sounding like the G92 GPUs from the 8800GT/GTS may also have HybridPower support once the software is ready.

As we get closer to the launch of all the requisite parts of Hybrid SLI we’ll have more details. Our initial impressions are that HybridPower could do a lot to cut idle power consumption, while GeForce Boost is something we’ll be waiting to test. Frankly the biggest news of the day may just be that NVIDIA is doing integrated GPUs on all of its motherboard chipsets; everything else seems kind of quaint in comparison.

39 Comments



ATWindsor - Sunday, February 3, 2008 - link

It's about time, I'm tired of VGA not being integrated in more high-end boards, despite them having extremely many features. Not every high/midend rig is a gamer-rig. So inbuilt vga is a welcome change, even if you're not going to use hybrid SLI.

nubie - Monday, January 14, 2008 - link

Hi, silly peoples. Most (I mean All), of the cheapest motherboards include integrated graphics already. To insinuate that this "will make them more expensive" is STUUUPID.

Have you priced the cheapest motherboards? They are all integrated. I think this will lower nVidia's cost by standardizing the entire line-up, including the drivers and Bios, thus allowing for reduced operating, design, production, and engineering costs.

I agree that the display switching and connector issues need work, and I am happy nVidia is working on them. Since the display is to be routed through the motherboard in this iteration, I foresee any problems being worked out, if possible.

Let's not forget that the move is toward DDR3, when/if that is possible, we may see socketed GPU's, hopefully with their own RAM. Just think, 4x Ram slots for DDR3, use more expensive low-latency for the video, and cheaper high-latency for the system.

I am righteously upset at the current practice of a board hosting at least half of the heat production in current form factor, clearly a move to dual socket systems with heat-pipe cooling would be better. Although integrated CPU/GPUs would be interesting as well, and wouldn't preclude a secondary gpu socket, if this technology is perfected.

Knowname - Friday, January 11, 2008 - link

I agree with the op, seems to me the disadvantages for this, in desktop use, FAR outweigh the advantages. Seems like this'll be yet another driver option we'll have to tick saying 'no! we don't want to use this feature!' Heck, just the increased reliance (is that a word??) on system ram for all things 2d, ON A ENTHUSIAST SYSTEM??, is bad enough. Frankly I hope they do this, give me another excuse to go AMD.

In fact, even in Notebooks it just seems like too much too soon or not even needed at all. I mean, what notebook costs less than 3 grand and DOESN'T use an integrated gpu anyway??? WHAT'S THE POINT?!! Even if, the savings isn't that big. We'll have to buy those big, inefficient PSUs anyway planning for a worst possible scenario, you can't always have the cake ;p. It just all sounds pretty dumb. I mean, even AMD's implementation sounds pretty dumb... but at least they don't make a big deal about it... or is that the fault of anandtech... woopsie ^.^

Sharpie - Thursday, January 10, 2008 - link

but i don't plan on switching to vista unless forced.

roadrun777 - Thursday, January 10, 2008 - link

Hopefully, Nvidia will release some open source code that allows other OS's to take advantage of their features. Otherwise they are just being ignorant of the fact that 2008 will be the year that the penguin goes through puberty and becomes a real desktop alternative.

It's just too bad no one has created a Linux gaming developer platform kit to allow for easy porting of games. *Hint*

I think that would be a major reason why desktop linux would succeed.

ark2008 - Tuesday, January 8, 2008 - link

What will happen when future cpus will come with onboard graphics?

roadrun777 - Thursday, January 10, 2008 - link

[quote]What will happen when future cpus will come with onboard graphics?[/quote]

Exactly. Seems to me they are just laying the groundwork now, so there is less work later. They may actually switch to drop in socketed GPU replacements (like CPU's are now) first, before they mold them into the CPU.

Doctorweir - Monday, January 7, 2008 - link

I am also leaning towards the totally stupid idea - comments, because

1) Why heating up the motherboard more and adding features that cost money and no one needs

2) And why make concurrent use of the memory bus...? Especially during gaming the memory bus should be exclusive to game-data...also forking gigs of framebuffer data concurrently should not help the fps...

3) They should rather invest to power down the primary GPU. Why can a CPU consume so little power in idle and a GPU cannot? Just invest more on throtteling mechanisms and idle states with reduced MHz and low power consumption should also be achievable for high-end card (e.g. reduce frequencies and voltage, shutdown shader units, shutdown 3/4 of the ram, etc. etc...)

4) And if they are not able to do this, why not implementing a "mini-GPU" on the graphics board? Than all switching can be done on the board and you are platform independent...ummm...whoops...my bad. Than I do not need to buy an nVidia mobo...no, that's not an option then... :-P
