WinHEC 2005 - Keynote and Day 1

by Derek Wilson & Jarred Walton on April 26, 2005 4:00 AM EST, posted in Trade Shows

Introduction

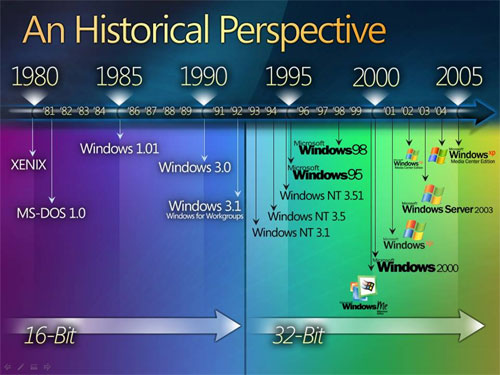

Landmark events don't occur very often in life, and as advancements in technology come and go, the landmark events come less frequently. Consider the area of transportation. For centuries, we relied on muscle power, whether human or animal, while in the last 125 years we have gone from horse-drawn carriages to steam-powered and gas-powered engines, on to flight and eventually to space travel. The last major landmark is now more than 30 years in the past, and it's difficult to say what will happen next.

We can see similar progress in the realm of computing and, narrowing things down a bit, in the history of Microsoft. There have been a few major transitions over the past 25 or so years. The move from 8-bit to 16-bit computing was a massive step forward, with address spaces growing from 16 bits on 8-bit CPUs to 20 bits on the 8086 and later 24 bits on the 286, along with a nearly complete rewrite of the entire operating system. The transition from 16-bit to 32-bit came quite a bit later, and it happened in stages. First we got the hardware with the 386, and over the next five years we began to see software that utilized the added instructions and power that 32-bit computing provided. The benefits were all there, but it required quite a bit of effort to realize them, and it wasn't until the introduction of Windows NT and/or Windows 95 that we really saw the shift away from the old 16-bit model and into the new 32-bit world.
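To put the address widths in this history into concrete numbers, the short sketch below converts an address width in bits into the amount of memory it can reach (2 raised to the width). It assumes byte addressing and binary units (1 KB = 1024 bytes); `address_space_bytes` and `human` are illustrative helper names, not anything shown at WinHEC.

```python
def address_space_bytes(bits):
    """Distinct byte addresses reachable with an address `bits` wide."""
    return 2 ** bits


def human(n):
    """Format a byte count using binary units (1 KB = 1024 bytes)."""
    units = ["B", "KB", "MB", "GB", "TB", "PB", "EB"]
    i = 0
    # Divide by 1024 while the value is still at least one of the next unit.
    # Integer division is exact here because the inputs are powers of two.
    while n >= 1024 and i < len(units) - 1:
        n //= 1024
        i += 1
    return f"{n} {units[i]}"


if __name__ == "__main__":
    for width in (16, 20, 24, 32, 64):
        print(f"{width}-bit addresses: {human(address_space_bytes(width))}")
```

Run as a script, it prints 64 KB, 1 MB, 16 MB, 4 GB and 16 EB for the five widths; the 4 GB ceiling of 32-bit addressing is the limit desktops are now starting to reach.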

You can see the historical perspective in the following slide. Keep in mind that while there are many more releases in the modern era (post-1993), many of them are incremental upgrades. 95 to 98 to Me were not major events, and many people skipped the last version. Similarly, NT 4.0 to 2000 to XP, while all useful upgrades, didn't exactly shake the foundations of computing.

The next transition is now upon us. Of course, we're talking about the official launch of Windows XP Professional x64 Edition. It has taken a long time for us to really begin to reach the limits of 32-bit computing (at least on the desktop), and while the transition may not be as bumpy as the change from 16 to 32 bits, there will still be some differences and an accompanying transitional period. This year's WinHEC (Windows Hardware Engineering Conference) focused on the advancements that the new 64-bit OS will bring, as well as taking a look at other technologies that are due in the next couple of years.

Bill Gates characterized the early 16-bit days of Windows as an exploration of what was useful. For many people, the initial question was often "why bother with a GUI?" After the first decade of Windows, the question was no longer present, as most people had come to accept the GUI as a common-sense solution. The second decade of Windows was characterized by increases in productivity and the change to 32-bit platforms. Gates suggested that the third decade of Windows and the shift to 64 bits will bring the greatest increases in productivity as well as the most competition that we have yet seen.

36 Comments


JarredWalton - Saturday, April 30, 2005

KHysiek: part of the bonus of the hybrid HDDs is that apparently Longhorn will be a lot more intelligent about memory management. (I'm keeping my fingers crossed.) XP is certainly better than NT4 or the 9x world, but it's still not perfect. Part of the problem is that RAM isn't always returned to the OS when it's deallocated.

Case in point: working on one of these WinHEC articles, I opened about 40 images in Photoshop. With 1 GB of RAM, things got a little sluggish after about half the images were open, but it still worked. (I wasn't dealing with layers or anything like that.) After I finished editing/cleaning each image, I saved it and closed it.

Once I was done, total memory used had dropped from around 2 GB max down to 600 MB. Oddly, Photoshop was showing only 60 MB of RAM in use. I exited PS and suddenly 400 MB of RAM freed up. Who was to blame: Adobe or MS? I don't know for sure. Either way, I hope MS can help eliminate such occurrences.
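One way a process can keep holding memory it no longer uses, without any leak, is shown in the toy model below. It is a hypothetical sketch, not how Windows or Photoshop actually manage memory: it assumes a heap that carves blocks sequentially from one region and only hands memory back to the OS from the top of that region, so a single small block still in use above freed memory keeps all of it committed. The class name, block sizes and the "bookkeeping" block are all illustrative assumptions.

```python
class TopTrimHeap:
    """Hypothetical toy heap, not the Windows or Photoshop allocator.

    Blocks are carved out one after another at the end of a single region.
    Freed blocks are only returned to the OS when they sit at the top
    (end) of the region; freed holes in the middle are not reused either.
    """

    def __init__(self):
        self.blocks = []  # each entry is [size_mb, in_use]

    def alloc(self, size_mb):
        self.blocks.append([size_mb, True])
        return len(self.blocks) - 1  # handle = position in the region

    def free(self, handle):
        self.blocks[handle][1] = False
        # Trim trailing free blocks; anything below an in-use block stays committed.
        while self.blocks and not self.blocks[-1][1]:
            self.blocks.pop()

    def committed_mb(self):
        """Memory the process still holds from the OS."""
        return sum(size for size, _ in self.blocks)

    def in_use_mb(self):
        """Memory the application actually believes it is using."""
        return sum(size for size, used in self.blocks if used)


if __name__ == "__main__":
    heap = TopTrimHeap()
    images = [heap.alloc(25) for _ in range(40)]  # hypothetical: 40 images of 25 MB
    bookkeeping = heap.alloc(1)  # hypothetical small block allocated afterwards
    for handle in images:
        heap.free(handle)
    print(heap.in_use_mb(), heap.committed_mb())  # app uses 1 MB, process holds 1001 MB
    heap.free(bookkeeping)
    print(heap.committed_mb())  # everything trimmed: 0 MB
```

In this model the application's own counter drops to almost nothing while the process footprint stays high, and the memory only comes back once the last block above it is released, much like the drop seen when Photoshop was closed. Real allocators are more sophisticated, so this only illustrates one possible cause.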

KHysiek - Friday, April 29, 2005

PS. In this case, making hybrid hard drives with just 128 MB of cache is laughable. Windows' massive memory swapping will ruin the cache's effectiveness quickly.

KHysiek - Friday, April 29, 2005

Windows memory management is one of the worst moments in the history of software development. It made all Windows software bad in terms of managing memory and overall OS performance. You actually need at least twice the memory needed by applications for them to work flawlessly. I see that saga continues.

CSMR - Thursday, April 28, 2005

A good article!

Regarding the "historical" question:

Strictly speaking, if you say "an historical" you should not pronounce the h, but many people use "an historical" and pronounce the h regardless.

Pete - Thursday, April 28, 2005

That's about how I reasoned it, Jarred. The fisherman waits with (a?)bated breath, while the fish dangles from a baited hook. Poor bastard(s).

'Course, when I went fishing as a kid, I usually ended up bothering the tree frogs more than the fish. :)

patrick0 - Wednesday, April 27, 2005

If Microsoft manages graphics memory, it will surely be a lot easier to read this memory. This can make it easier to use the GPU as a co-processor for non-graphics tasks. So far I could manage image processing, but that doesn't sound like a non-graphics task, does it? Anyway, it is a CPU task. Neither ATI nor nVidia has made it easy so far to use the GPU as a co-processor. So I think MS managing this memory will be an improvement.

JarredWalton - Wednesday, April 27, 2005

Haven't you ever been fishing, Pete? There you sit, with a baited hook, waiting for a fish to bite. It's a very tense, anxious time. That's what baited breath means.... Or something. LOL. I never really thought about what "bated breath" actually meant. Suspended breath? Yeah, sure... whatever. :)

Pete - Wednesday, April 27, 2005

Good read so far, Derek and Jarred. Just wanted to note one mistake at the bottom of page three: bated, not baited, breath. Unless, of course, they ordered their pie with anchovies....

Calin - Wednesday, April 27, 2005

"that Itanium is not dead and it's not going anywhere"

I would say "that Itanium is not dead but it's not going anywhere"

JarredWalton - Tuesday, April 26, 2005

26 - Either way, we're still talking about a factor of 2. 1+ billion vs. 2+ billion DIMMs isn't really important in the grand scheme of things. :)

23 - As far as the "long long" vs. "long", I'm not entirely sure what you're talking about. AMD initially made their default integer size, even in 64-bit mode, a 32-bit integer (I think). Very few applications really need 64-bit integers, plus fetching a 64-bit int from RAM requires twice as much bandwidth as a 32-bit int. That's part one of my thoughts.

Part 2 is that MS may have done this for code compatibility. While in 99% of cases, an application really wouldn't care if the 32-bit integers suddenly became 64-bit integers, that's still a potential problem. Making the user explicitly request 64-bit integers gets around this problem. Things are backwards compatible.

Anyway, none of that is official, and I'm only partly sure I understand what you're asking in the first place. If you've got some links for us to check out, we can look into it further.
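The "long" versus "long long" question maps onto the C data model a 64-bit platform uses. 64-bit Windows uses LLP64, where `int` and `long` stay 32 bits and only `long long` and pointers are 64 bits; most 64-bit Unix-like systems use LP64, where `long` grows to 64 bits. Under LLP64, code that declared a `long` keeps the same size when recompiled for 64-bit Windows, and code that wants 64-bit integers has to ask for `long long` explicitly, which lines up with the compatibility guess above. The sketch below uses Python's standard `ctypes` module to report which model the interpreter's own platform follows; `c_data_model` is an illustrative helper name, and it only checks the sizes of `long` and a pointer.

```python
import ctypes


def c_data_model():
    """Classify the C data model of the platform this Python was built for.

    Only the sizes of `long` and of a pointer are checked, which is enough
    to tell the common models apart.
    """
    long_bits = ctypes.sizeof(ctypes.c_long) * 8
    ptr_bits = ctypes.sizeof(ctypes.c_void_p) * 8
    if ptr_bits == 64 and long_bits == 32:
        return "LLP64"  # 64-bit Windows: long stays 32-bit; long long is 64-bit
    if ptr_bits == 64 and long_bits == 64:
        return "LP64"   # most 64-bit Unix-like systems: long grows to 64-bit
    if ptr_bits == 32 and long_bits == 32:
        return "ILP32"  # typical 32-bit platforms
    return "other"


if __name__ == "__main__":
    print("data model:", c_data_model())
    for name, ctype in [("int", ctypes.c_int), ("long", ctypes.c_long),
                        ("long long", ctypes.c_longlong), ("pointer", ctypes.c_void_p)]:
        print(f"{name}: {ctypes.sizeof(ctype) * 8} bits")
```

On a 64-bit Windows build of Python this should report LLP64 with a 32-bit `long`, while a 64-bit Linux build reports LP64.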