Fable Legends Early Preview: DirectX 12 Benchmark Analysis

by Ryan Smith, Ian Cutress & Daniel Williams on September 24, 2015 9:00 AM EST

Final Words

Non-final benchmarks are a tough element to define. On one hand, they do not show the full range of both performance and graphical enhancements and could be subject to critical rendering paths that cause performance issues. On the other hand, they are near-final representations and aspirations of the game developers, with the game engine almost at the point of being comfortable. To say that a preview benchmark is somewhere from 50% to 90% representative of the final product is not much of a bold statement to make in these circumstances, but between those two numbers can be a world of difference.

Fable Legends, developed by Lionhead Studios and published by Microsoft, uses EPIC’s Unreal Engine 4. All the elements of that previous sentence have gravitas in the gaming industry: Fable is a well-known franchise, Lionhead is a successful game developer, Microsoft is Microsoft, and EPIC’s Unreal engines have powered triple-A gaming titles for the best part of two decades. With the right ingredients, therein lies the potential for that melt-in-the-mouth cake as long as the oven is set just right.

Convoluted cake metaphors aside, this article set out to test the new Fable Legends benchmark in DirectX 12. As it stands, the software build we received indicated that the benchmark and game are still in 'early access preview' mode, so improvements may happen down the line. Users are interested in how DX12 games will both perform and scale on different hardware and different settings, and we aimed to fill in some of those blanks today. We used several AMD and NVIDIA GPUs, mainly focusing on NVIDIA’s GTX 980 Ti and AMD’s Fury X, with Core i7-X (six cores with HyperThreading), Core i5 (quad core, no HT) and Core i3 (two cores, HT) system configurations. These two GPUs were also tested at 3840x2160 (4K) with Ultra settings, 1920x1080 with Ultra settings and 1280x720 with low settings.

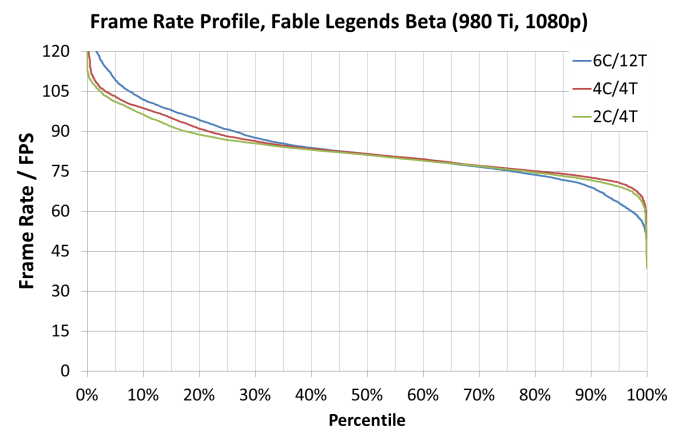

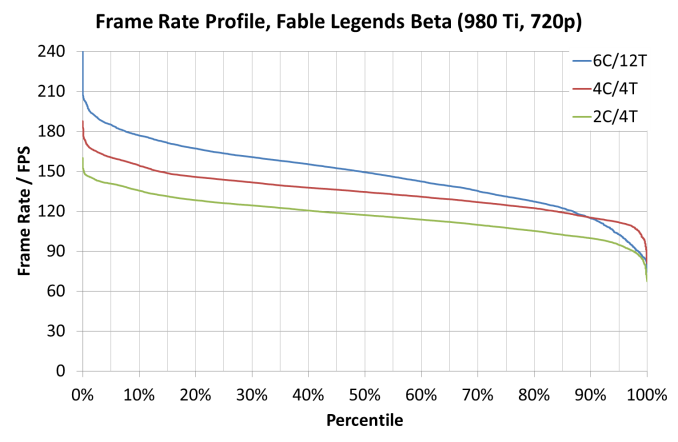

On pure average frame rate numbers, we saw NVIDIA’s GTX 980 Ti lead by just under 10% in all configurations except for the 1280x720 settings, which gave the Fury X a substantial (10%+ on i5 and i3) lead. Looking at CPU scaling, we found that scaling only ever really occurred at the 1280x720 settings anyway, with both AMD and NVIDIA showing a 20-25% gain moving from a Core i3 to a Core i7. Some of the older cards showed a smaller 7% improvement over the same test.
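The percentages quoted above are relative differences in average frame rate. As a minimal sketch of that arithmetic, the snippet below computes a percentage lead or gain; the function name `percent_lead` and the frame rates are hypothetical placeholders, not measurements from this article.

```python
def percent_lead(fps: float, baseline_fps: float) -> float:
    """Return how far `fps` is ahead of `baseline_fps`, as a percentage of the baseline."""
    if baseline_fps <= 0:
        raise ValueError("baseline frame rate must be positive")
    return (fps / baseline_fps - 1.0) * 100.0


# Hypothetical average frame rates for one GPU at 1280x720 (illustrative only).
core_i3_fps = 100.0
core_i7_fps = 122.0

gain = percent_lead(core_i7_fps, core_i3_fps)
print(f"Core i3 -> Core i7 gain: {gain:.1f}%")
```

A negative result simply means the first configuration is behind the baseline, so the same helper can be used for either GPU-versus-GPU or CPU-versus-CPU comparisons.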

Looking through the frame rate profile data, specifically at the minimum benchmark percentile numbers, we saw an interesting pattern: on the Core i7 (six cores, HT) platform, the frame rates on complex frames were beaten by the Core i5 and even the Core i3 setups, despite the fact that the Core i7 performed better on the frames that were easier to compute. In our graphs, this gave a tilted axis akin to a seesaw.

When comparing the separate compute profile time data provided by the benchmark, we saw that the Core i7 was taking longer for a few of the lighting techniques, perhaps because of cache or scheduling issues at either the CPU end or the GPU end that were alleviated with fewer cores in the mix. This may come down to the memory controller not being bombarded with higher-priority requests that cause a shuffle in the data request queue.
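To make the percentile comparison concrete, here is a rough sketch of how a frame rate profile can be derived from per-frame render times. It assumes a simple nearest-rank percentile, which may not be the method the benchmark itself uses, and the two frame-time lists are invented purely to reproduce the seesaw shape; `percentile` and `fps_profile` are hypothetical helper names.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("need at least one sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def fps_profile(frame_times_ms, pcts=(50, 90, 99)):
    """Convert frame-time percentiles (ms) to frame rates; higher percentiles are the slower, more complex frames."""
    return {p: 1000.0 / percentile(frame_times_ms, p) for p in pcts}


# Invented frame times in milliseconds (not data from the benchmark).
# Setup A is quicker on easy frames but slower on the hardest 10% of frames.
setup_a = [10.0] * 90 + [40.0] * 10
setup_b = [12.0] * 90 + [30.0] * 10

profile_a = fps_profile(setup_a)
profile_b = fps_profile(setup_b)

for p in (50, 90, 99):
    print(f"{p}th percentile: A = {profile_a[p]:.1f} fps, B = {profile_b[p]:.1f} fps")
```

With this made-up data, setup A is ahead at the 50th percentile and behind at the 99th, which is the kind of crossover that shows up as a tilted line when the two profiles are plotted against each other.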

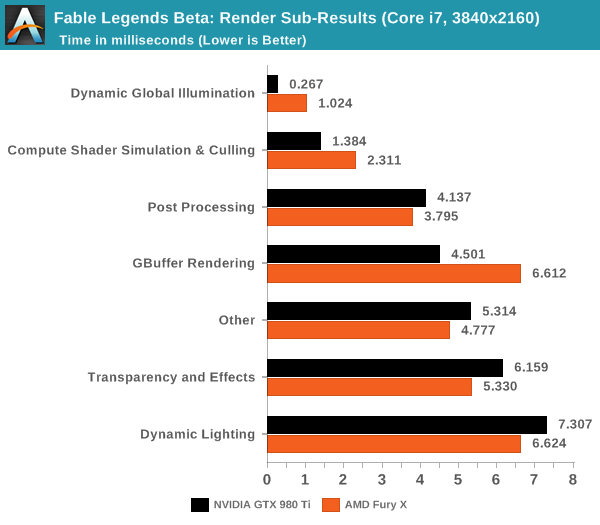

When we do a direct comparison of AMD’s Fury X and NVIDIA’s GTX 980 Ti in the render sub-category results for 4K using a Core i7, both AMD and NVIDIA have their strong points in this benchmark. NVIDIA favors illumination, compute shader work and GBuffer rendering, whereas AMD favors post processing, transparency and dynamic lighting.

DirectX 12 is coming in with new effects to make games look better and new features to allow developers to extract performance out of our hardware. Fable Legends uses EPIC’s Unreal Engine 4 with added effects and represents a multi-year effort to develop the engine around DX12's feature set and ultimately improve performance over DX11. With this benchmark we have begun to peek a little into what actual graphics performance in games might be like, and whether DX12 benefits users on low powered CPUs or high-end GPUs more. That being said, there is a good chance that the performance we’ve seen today will change by release due to driver updates and/or optimizing the game code. Nevertheless, at this point it does appear that a reasonably strong card such as the 290X or GTX 970 is needed to get a smooth 1080p experience (at Ultra settings) with this demo.

141 Comments


Ian Cutress - Thursday, September 24, 2015

Please screenshot any issue like this you find and email it to us. :)

Frenetic Pony - Thursday, September 24, 2015

So interesting to note, for compute shader performance we see nvidia clearly in the lead, winning both compute and dynamic gi which here is compute based, once we get to pixel operations we see a clear lead for Amd, ala post processing, direct lighting and transparency. When we switch back to geometry, the gbuffer, Nvidia again leads. Interesting to see where each needs to catch up.

NightAntilli - Thursday, September 24, 2015

Once again I wish AMD CPUs were included for the performance and scaling... Both the old FX CPUs and stuff like the Athlon 860k.

Oxford Guy - Friday, September 25, 2015

They claimed that readers aren't interested in seeing FX benchmarked. That doesn't explain why like 8 APUs were included in the Broadwell review or whatever and not one decently-clocked FX.

I also don't see why the right time to do Ashes wasn't shortly after ArsTechnica's article about it rather than giving it the GTX 960 "coming real soon" treatment.

NightAntilli - Sunday, September 27, 2015

Considering that DX12 is supposed to greatly reduce CPU overhead and be able to scale well across multiple cores, this is one of the most interesting benchmarks that can be shown. But yeah. It seems like there's a political reason behind it.

Iridium130m - Thursday, September 24, 2015

Shut hyperthreading off and run the tests again...be curious if the scores for the 6 core chip come up any...we may be in a situation where hyperthreading provides little benefit in this use case if all the logical cores are doing the exact same processing and bottlenecking on the physical resources underneath.

Osjur - Friday, September 25, 2015

Dat 7970 vs 960 makes me have wtf moment.

gamerk2 - Friday, September 25, 2015

Boy, I'm looking at those 4k Core i3, i5, and i7 numbers, and can't help but notice they're basically identical. Looks like the reduced overhead of DX12 is really going to benefit lower-tier CPUs, especially the Core i3 lineup.

ruthan - Sunday, September 27, 2015

Dont worry Intel would find the way, how to cripple i3, even more.

Mugur - Friday, September 25, 2015

This game will be DX12 only since it's Microsoft, that's why its Windows 10 and Xbox One exclusivity.

Where can I find some benchmarks with the new AMD driver and this Fable Legends?