Analyzing Intel-Micron 3D XPoint: The Next Generation Non-Volatile Memory

by Kristian Vättö, Ian Cutress & Ryan Smith on July 31, 2015 11:00 AM EST

Products

During the event, Intel and Micron made it clear that this week's announcement is solely about the underlying 3D XPoint technology. Products based on this new technology will follow sometime next year and the companies were quite tight-lipped when it came to details, but they did give away a few hints. First of all, the co-operation between Intel and Micron only exists at the memory technology level and both companies are developing their own 3D XPoint based products, similar to how the two have operated in the SSD/NAND business. Technically this means that the two will be competing against each other, although it's possible that each company will take a unique approach to utilizing 3D XPoint in an end product.

One takeaway from the presentation and Q&A was Intel's emphasis on NVMe. Intel has been a strong advocate of the technology ever since its inception, and as a matter of fact Intel was the first SSD vendor to ship NVMe SSDs in high volume with the introduction of the DC P3700 and its derivatives last year. While NVMe has mostly been associated with NAND so far, since NAND is the mainstream non-volatile memory, the core architecture was built to scale with future memory technologies with even lower latencies (after all, NVMe stands for Non-Volatile Memory Express). Given that software interfaces tend to stick around for at least a decade, it's obvious that NVMe had to be designed with more than just NAND in mind.

With NVMe it's certain that we will see 3D XPoint based PCIe SSDs. Whether these will be add-in cards or 2.5" drives remains to be seen, but I'm more inclined to say add-in cards (at least initially) because of the connector limitations. U.2 (formerly SFF-8639) supports only four PCIe 3.0 lanes, resulting in effective real world bandwidth of about 3.2GB/s. NAND is already capable of saturating that for read operations, so even though 3D XPoint would improve write and random IO performance, the full potential would ultimately go unused without a higher bandwidth interface. An add-in card doesn't share the limitations of U.2 and could support up to 16 lanes with over 10GB/s of bandwidth available, but the downside would be more limited serviceability since add-in cards can't be front-loaded like 2.5" drives can. As enterprises have used add-in cards in the past (Fusion-io never made anything but add-in cards), I don't see serviceability being a major hurdle for the companies that really need 3D XPoint for their workloads. On the other hand, I wouldn't be surprised to see Intel pushing for an 8-lane U.2-like standard, but it really needs industry-wide support to get air under the wings.
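To put rough numbers on the lane counts above, here is a minimal sketch of the arithmetic. It assumes the published PCIe per-lane signaling rates (8 GT/s for PCIe 3.0, 16 GT/s for PCIe 4.0) and 128b/130b encoding; the function names are my own, and the "protocol efficiency" is simply whatever ratio is implied by the 3.2GB/s real world figure quoted above, not a measured value.

```python
# Per-lane signaling rate (GT/s) and line encoding for each PCIe generation.
PCIE_GENERATIONS = {
    "3.0": {"gigatransfers_per_s": 8.0, "payload_bits": 128, "total_bits": 130},
    "4.0": {"gigatransfers_per_s": 16.0, "payload_bits": 128, "total_bits": 130},
}


def raw_bandwidth_gb_s(generation: str, lanes: int) -> float:
    """Raw one-direction link bandwidth in GB/s, after encoding overhead only."""
    gen = PCIE_GENERATIONS[generation]
    encoding_efficiency = gen["payload_bits"] / gen["total_bits"]
    gbit_per_s_per_lane = gen["gigatransfers_per_s"] * encoding_efficiency
    return gbit_per_s_per_lane / 8 * lanes  # 8 bits per byte


def implied_protocol_efficiency(effective_gb_s: float, generation: str, lanes: int) -> float:
    """Fraction of the raw link bandwidth that a quoted effective figure represents."""
    return effective_gb_s / raw_bandwidth_gb_s(generation, lanes)


if __name__ == "__main__":
    # Assumption: 3.2 GB/s is the effective PCIe 3.0 x4 figure quoted in the text.
    efficiency = implied_protocol_efficiency(3.2, "3.0", 4)
    for lanes in (4, 8, 16):
        raw = raw_bandwidth_gb_s("3.0", lanes)
        print(f"PCIe 3.0 x{lanes}: raw {raw:.2f} GB/s, "
              f"roughly {raw * efficiency:.1f} GB/s effective")
```

Under these assumptions a four-lane link tops out a little under 4GB/s raw, which is why a U.2 drive lands in the 3.2GB/s range, while a 16-lane add-in card has roughly four times that to work with.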

With Intel being the other party in the joint venture, it's guaranteed that 3D XPoint will get all the support and love it needs on the platform side. Intel can integrate more PCIe lanes and/or accelerate the development of PCIe 4.0 for its upcoming platforms to create the necessary bandwidth and push for 3D XPoint if needed, which is something that no other memory vendor could do.

AgigA's DDR4 NVDIMM: A Future 3D XPoint Form Factor?

While Intel will clearly be pursuing the storage aspect of 3D XPoint through NVMe, I suspect Micron might take a more memory-like approach since it's a memory company as much as it's a storage company. It was made clear that 3D XPoint can be used in memory and storage applications because the technology is bit-addressable and can work in a similar fashion to DRAM. Bringing 3D XPoint closer to the CPU and connecting it through a DDR4 interface would obviously yield the best performance and eliminate any bottlenecks that PCIe has. There are already NAND-based products that do this, such as SanDisk's ULLtraDIMM, and a couple of months ago JEDEC paved the way by releasing a standard for DDR4 NVDIMMs, a new standard set to fill the gap between DRAM and SSDs. While NVDIMMs will require driver work due to the lack of a standardized software interface like NVMe, I do believe 3D XPoint is the right technology for bringing NVDIMMs to the market and it would make sense for Micron to do so.

Applications

Section by Ryan Smith

The use cases for 3D XPoint are potentially significant in number and Intel/Micron believe that it will open the doors for all sorts of new applications. Overall the computing industry has had access to high speed non-volatile memory technologies before – magnetic core memory is the traditional poster child here – so there is some precedent here and some fundamental research into the field from the early days of computing. However with magnetic core memory having become outmoded before the majority of our readers were even born, the modern computing industry has developed around the current paradigm of fast DRAM and slow permanent storage. As a result while the potential applications are numerous, it’s still in many ways an uncharted area in computer science.

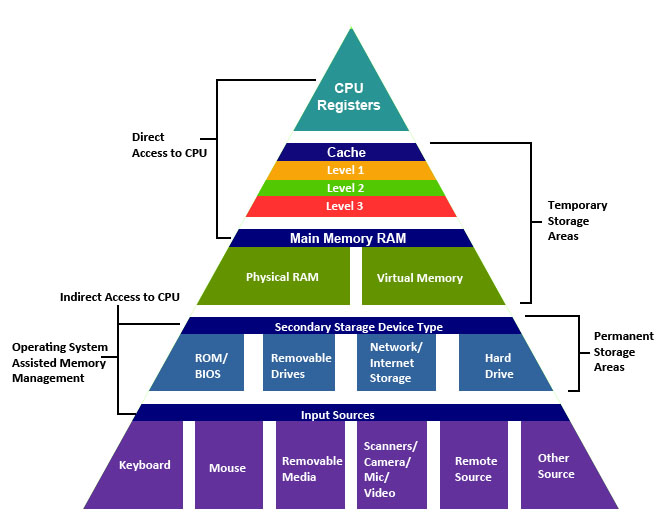

The most immediate application of 3D XPoint based products will be as a layer of storage between DRAM and SSDs. Over the history of computing the number of layers between storage and processors has continued to build – multiple layers of on-die caches, off-die caches, caching SSDs, etc – and 3D XPoint memory would further fit into that hierarchy as a storage medium that bridges the gap between DRAM and the current fastest non-volatile storage. By treating 3D XPoint memory as another layer of cache, 3D XPoint can be used to further speed up applications that are currently bound by either memory capacity or storage latency.

The Traditional Memory Hierarchy (Image Source: Tommy MacWilliam, Harvard)

Given the costs of 3D XPoint, the first such applications are expected to be on the enterprise side. Enterprise users make heavy use of storage at all layers in order to balance performance needs against the relatively small capacity of DRAM. Database servers in particular adapt well to caching, and it’s easy enough to imagine a next-generation database system using 3D XPoint to backstop DRAM. Since 3D XPoint is non-volatile, it can even be an exclusive cache – that is, its contents don’t need to be in lower layers as well – which eliminates a good deal of overhead. A database system in this context would only need to write contents to SSDs and other, lower layers of storage when data gets expelled from the 3D XPoint cache, an occurrence that may be particularly rare with a properly tuned database.
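The exclusive cache idea is easier to see in a toy model. The sketch below is purely illustrative and not how any real database or 3D XPoint product works: the class name `ExclusiveCacheTier` is hypothetical, a plain dictionary stands in for the SSD, and least-recently-used eviction is my own assumption. What it shows is that writes land only in the cache tier, and the lower tier is touched only when an entry is evicted or promoted.

```python
from collections import OrderedDict


class ExclusiveCacheTier:
    """Toy LRU tier that holds the only copy of an entry until it is evicted."""

    def __init__(self, capacity: int, backing_store: dict):
        self.capacity = capacity
        self.backing_store = backing_store  # stand-in for the SSD tier
        self.entries = OrderedDict()        # stand-in for the non-volatile cache

    def write(self, key, value):
        # The cache is non-volatile, so no write-through to the SSD is needed.
        # Drop any stale copy below so the two tiers stay exclusive.
        self.backing_store.pop(key, None)
        self.entries[key] = value
        self.entries.move_to_end(key)
        self._evict_if_needed()

    def read(self, key):
        if key in self.entries:
            self.entries.move_to_end(key)  # mark as most recently used
            return self.entries[key]
        # Miss: promote the entry, removing it from the lower tier.
        # Raises KeyError if the key exists in neither tier.
        value = self.backing_store.pop(key)
        self.entries[key] = value
        self._evict_if_needed()
        return value

    def _evict_if_needed(self):
        # The only place where data is written down to the lower tier.
        while len(self.entries) > self.capacity:
            old_key, old_value = self.entries.popitem(last=False)
            self.backing_store[old_key] = old_value


if __name__ == "__main__":
    ssd = {}
    cache = ExclusiveCacheTier(capacity=2, backing_store=ssd)
    cache.write("row:1", "alice")
    cache.write("row:2", "bob")
    print(ssd)  # still empty: nothing has been expelled yet
    cache.write("row:3", "carol")
    print(ssd)  # the least recently used row has now been written down
```

With a volatile DRAM cache the write path would also have to push every change to durable storage; here the SSD only sees traffic on eviction, which is the overhead saving described above.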

Many of these benefits of a cache layer are applicable to other types of storage-heavy servers as well, though I expect databases will be the king. Perhaps the more interesting aspect – and certainly more relatable to the public at large – will be what 3D XPoint-backed servers are used for. Intel and Micron are eager to point out the “big science” uses for the technology; projects and systems such as the Large Hadron Collider and Oak Ridge’s Titan supercomputer can generate a massive amount of data, and while processing all of that data is first and foremost a processor issue, feeding that data for processing is a big problem as well. Any kind of analysis that could benefit from individual processors having RAM-like access to an SSD-sized pool of data could benefit.

The catch is that there’s still a lot of research that’s needed into figuring out what the best uses may be. This kind of shift in access times and capacity doesn’t just make computers faster, but it can change the fundamentals of what algorithms are best. Just as how GPUs required scientists to figure out how to spread out their work in a massively parallel (and high latency) nature, putting 3D XPoint to its full use will require newer algorithms that are capable of effectively utilizing direct access to so much data at once.

Meanwhile I would be surprised if the financial industry didn’t jump on this early, as they are prone to jumping on major technologies in order to try to get an edge in a highly competitive and lucrative field. In this aspect it’s not so much that 3D XPoint would improve processing speed – such work is already offloaded to large RAM pools when possible – but rather it would enable traders and analysts to run simulations against much larger datasets much more effectively.

As for the consumer space, the same principles about an additional cache layer would apply, but I’m not so sure we’d see consumers pick it up in the same manner. Much of this has to do with what the eventual costs and capacities of 3D XPoint products would be, as consumers are much more price sensitive than professional users. In the consumer space we’ve seen sporadic use of NAND-backed hard drives, for example, but by and large consumers have stuck with discrete SSDs and HDDs. Consumers either don’t want to pay the premium for SSDs, or have enough money to just buy large SSDs outright, leaving little of a middle ground.

That said I’ve seen some interesting pitches for 3D XPoint in the gaming space that have some merit, as games are something of a special case for consumer workloads. By and large we want fast access to game resources since those resources are accessed on-demand and are needed to progress in a game’s execution, but the assets themselves aren’t volatile. Only a small part of the working set for a game is volatile data – player positions, AI decision trees, game state, etc – while the rest of it is static data such as models, world geometry, and textures. 3D XPoint in turn would be fast enough that it could be used as a replacement for RAM in holding these assets, but as the data is non-volatile it wouldn’t thrash 3D XPoint P/E cycles very much, and any write speed disadvantage compared to DRAM would be immaterial.

But again, this is going to depend on the cost of the technology; if it were to become cheap enough that 50-100GB could be thrown in a game console or gaming PC, then you could store the entirety of most games in 3D XPoint memory, which would reduce load times to the time required to process the data and set up the game state. This matters more for consoles, which currently store their games on a mechanical drive and could then recall data far more quickly on first boot, or accommodate large amounts of memory swapping for more detailed titles. High-end PCs with large amounts of DRAM can already use RAM disks, which perhaps nullifies the point there.

Last but not least of course are the implications for 3D XPoint as a wholesale replacement for DRAM. The more limited lifetime of 3D XPoint relative to DRAM certainly poses some challenges in this respect, but I suspect the bigger issue will be overall bandwidth. By the time 3D XPoint becomes available in bulk, DRAM technology should be to the point where faster generations of DDR4 are available and HBM is widely deployed. Given that future generations of HBM are targeting 1TB/sec or more of memory bandwidth, it’s unlikely that 3D XPoint is going to be able to match the bandwidth of contemporary high-bandwidth DRAM solutions. So any rumors of the impending death of DRAM are likely premature.

IoT & Embedded, A Good Fit For 3D XPoint?

But with that said, while 3D XPoint isn’t likely to replace DRAM in a wholesale manner for all applications, there is clearly room for it to replace DRAM in some situations where DRAM is used primarily for its bandwidth and latency versus solid state storage. Replacing DRAM with 3D XPoint in embedded applications for example would be very practical – many embedded uses don’t need high bandwidth or low latency as much as they just need something better than traditional NAND – and I wouldn’t rule out smartphones here either, at least to an extent. If individual 3D XPoint chips can be produced small and cheap enough, then the most lucrative use for the tech as a DRAM replacement may be in the vast legions of low-performance devices, rather than in high-performance hardware that actually needs the full speed and latency of DRAM.

80 Comments

Kristian Vättö - Monday, August 3, 2015 - link

That's a good point and admittedly something I didn't think about. I would assume 3D XPoint is more robust than NAND given the higher performance and endurance, but Intel/Micron declined to talk about any failure mechanisms, so at this point it's hard to say how robust the technology is.

Nilth - Sunday, August 2, 2015 - link

Well, I really hope it won't take 10 years to see this technology at the consumer level.

dotpex - Monday, August 3, 2015 - link

From Micron site https://www.micron.com/about/innovations/3d-xpoint...

"Memory cells are accessed and written or read by varying the amount of voltage sent to each selector. This eliminates the need for transistors, increasing capacity and reducing cost."

...but 3d xpoint will be expensive, more like $10 per gigabyte.

Adam Bise - Friday, August 7, 2015 - link

"First and foremost, Intel and Micron are making it clear that they are not positioning 3D XPoint as a replacement technology for either NAND or DRAM"

I wonder if this is because they would rather create a new market than replace an existing one.

hans_ober - Saturday, August 8, 2015 - link

@Ian. PhD Chem was useful! :)

Ian Cutress - Monday, September 28, 2015 - link

Yiss :)

duartix - Monday, August 10, 2015 - link

I see two immediate consumer usages:

a) Instant Go To / Wake From deep hibernation

b) Scratch disks

MRFS - Monday, August 24, 2015 - link

With proper UEFI/BIOS support, one feature we proposed in a Provisional Patent Application was a "Format RAM" option prior to running Windows Setup. This would format RAM as an NTFS C: partition into which Windows software would be freshly installed. For comparison purposes, imagine a ramdisk in the upper 32-to-64GB of a large 1-to-2 TB DRAM subsystem, in a manner similar to how SuperSpeed's RamDisk Plus allocates RAM addresses. Then, imagine that all 2 TB consist of Non-Volatile DIMMs. I can see this one feature enabling very rapid RESTARTS, even cold RESTARTS after a full power-down (for maintenance). If the UEFI/BIOS is told that the OS is already memory-resident, this one change radically improves the speed with which a routine STARTUP occurs i.e. currently a STARTUP must load all OS software from a storage subsystem into RAM. If that OS software is already loaded into RAM, that "loading" is mostly eliminated under these new assumptions. Moreover, mounting Optane on the 2.5" form factor should free designers to consider more aggressive overclocking of the data cables connecting motherboards to those 2.5" drives: just work backwards from PCIe 4.0's 16GHz clock and 128b/130b jumbo frame. It's possible that Optane will be fast enough to justify data cables that also oscillate at 16GHz, increasing to 32GHz with predictable success. Assuming x4 NVMe lanes at PCIe 4.0, then 4 lanes @ (16G / 8.125) ~= 4 lanes @ 2GB/s ~= 8 GB/s raw bandwidth per 2.5" device. Modern G.Skill DDR4 easily exceeds 25GB/s raw bandwidth. Thus, Optane should allow "overclocked" data cables to achieve blistering NVMe storage performance with JBOD devices, and even higher performance with RAID-0 arrays.

FutureCTO - Tuesday, November 15, 2016 - link

I don't know, is it possible to have an educated guess on this? Back in the PS2 days, before the PS3, i was @0zyx on forum or few, talking about NASA RAM, magnet donuts on a metal grid of wires, insisting why don't we do this with memory today? The electricity crosses and creates a charge or reads the charge. This is the RAM of the first space computer. ~ I was made confident by believing this is what AMD "Mirror Bit" Memory was working towards before it flat out evaporated from the internet? Same happened to 48Bit Intel "Iranium" processors with 16cores. Still look in books from time to time, hoping an old edition of hardware lists with intel spy cpu, will confirm the internet is a BlackHole. Not to go Ellery Hale, with being one of those to store curious science bits no one is using, and everyone should be clamoring to own some day. ~ I check the metal recycling at the city dump for computer servers and extra high grade tower cases for my own builds, at least a parts from the towers anyways. ~ Twitter @0zyx ~ either way this is the memory design from the first NASA space capsule to carry people into space, except larger than 1 kilobyte. It may have been 512Bytes back then, not sure what sort of grid that is?

FutureCTO - Tuesday, November 15, 2016 - link

educated guess on price? ~ To me it is simpler to make, and faster to verify trace integrity.