Crucial BX100 (120GB, 250GB, 500GB & 1TB) SSD Review

by Kristian Vättö on April 10, 2015 1:20 PM EST

Posted in: Storage, SSDs, Crucial, Micron, Silicon Motion, BX100, SM2246EN, Micron 16nm

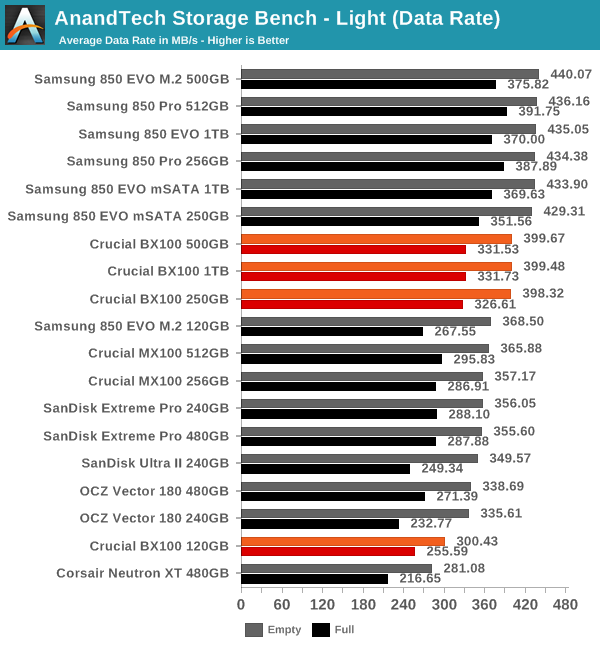

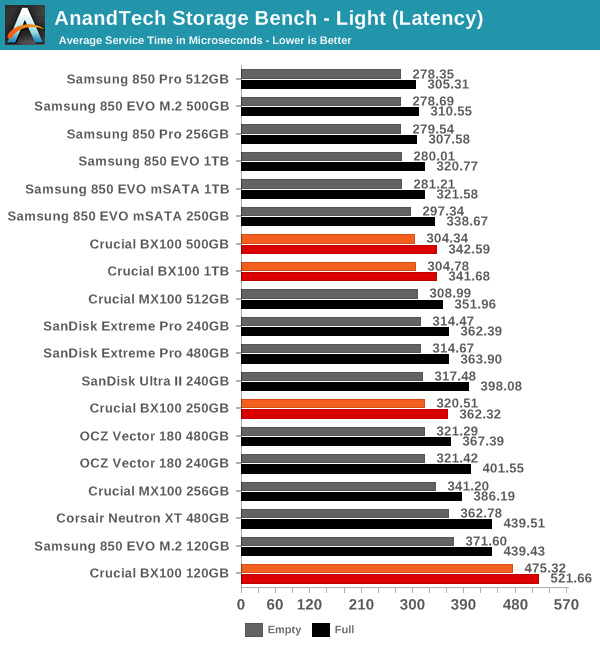

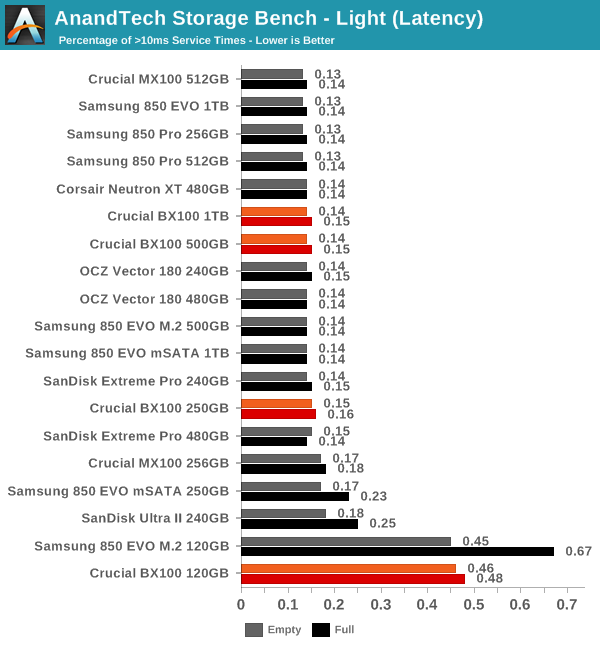

AnandTech Storage Bench - Light

The Light trace is designed to be an accurate illustration of basic usage. It's basically a subset of the Heavy trace, but we've left out some workloads to reduce the writes and make it more read intensive in general. Please refer to this article for full details of the test.

While the differences in our Light trace are typically marginal, the BX100 manages to have a slight advantage over the MX100 and several competing drives, although Samsung still maintains its crown.

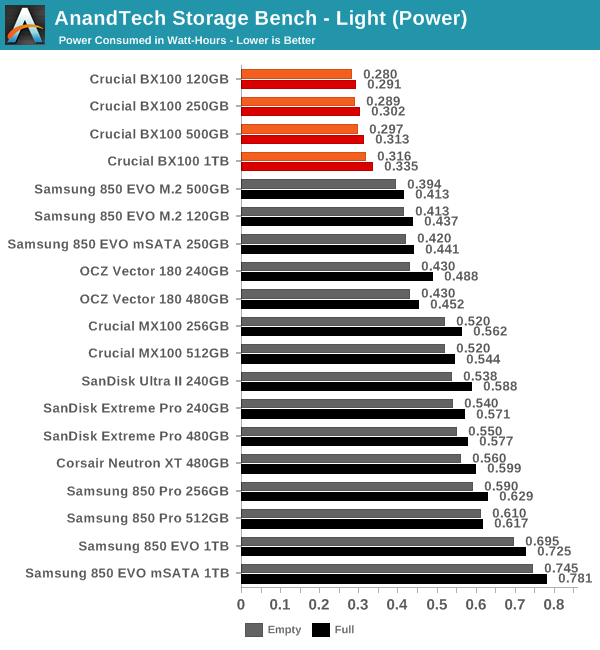

Power consumption remains brilliant, and I'm honestly surprised by how big the difference actually is.

67 Comments


Kristian Vättö - Saturday, April 11, 2015 - link

I plan on testing the SSD370 as soon as I have time, but the past two months have been full of travel and NDAs, so I've only been able to test a limited number of drives with our new 2015 SSD suite.

leexgx - Sunday, April 12, 2015 - link

I just got an SSD370 coming my way now; very annoying that it lacks any power management.

I am happy you did the review on this, as I was mostly ignoring the BX100, since the MX100 is generally cheaper than the BX100 in the UK. But for laptops, well, wow: its worst-case power usage is overall better than any other SSD's (add a DevSleep-supported laptop and the registry tweak to expose the Lowest option under the Balanced power profile for AHCI power management and you get mad power savings).

http://www.sevenforums.com/tutorials/177819-ahci-l...

http://www.sisoftware.co.uk/?d=qa&f=apu_hsw_di...

leexgx - Sunday, April 12, 2015 - link

It would be nice if they brought out an update for the SSD370 to turn DIPM back on, as it must be the only current SSD from the last 2 years that lacks a ~0.150W slumber state (most SSDs are stuck in the ~0.330W idle zone without DIPM or HIPM). Even though I didn't pay much for this used SSD370, it would be nice if it had the option.

jaegerschnitzel - Sunday, April 12, 2015 - link

Great review. But could you please explain how to determine the over-provisioning?

For example, take the 500GB drive. It has 8 flash chips with 512Gbit each, a total of 512GiB. User capacity is 465.76GiB. 1 - 465.76/512. Am I right?

Kristian Vättö - Sunday, April 12, 2015 - link

That is correct. The other way to put it would be 1 - (500*1000^3)/(512*1024^3).

jaegerschnitzel - Sunday, April 12, 2015 - link

Thanks for your fast reply! Just another question to clarify: why not the other way round?

1 - (512*1024^3) / (500*1000^3) = 9.95%?

Kristian Vättö - Sunday, April 12, 2015 - link

That returns a negative number (-9.95%) because (512*1024^3) > (500*1000^3).

(512*1024^3) = raw NAND capacity in bytes, i.e. 512GiB (GiB = 1024^3)

(500*1000^3) = user capacity in bytes, i.e. 500GB = 465.76GiB (GB = 1000^3)

jaegerschnitzel - Sunday, April 12, 2015 - link

That was my fault. But this should be right: (512*1024^3) / (500*1000^3) - 1 = 9.95%.

I think you misunderstood my second question. Sorry for that, obviously my English is too bad ;-)

Another try. Your percentage is relative to the real physical capacity (9.1%). Why do you not express the percentage relative to the end-user capacity (9.9%)?
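
For readers following the arithmetic in this thread, here is a minimal Python sketch of the two over-provisioning percentages being compared. It assumes the nominal figures quoted above (512GiB of raw NAND and 500GB of user capacity); the function name `over_provisioning` and the variable names are illustrative only and do not come from the review.

```python
# Byte multipliers as used in the comments above.
GB = 1000**3   # decimal gigabyte
GiB = 1024**3  # binary gibibyte

def over_provisioning(raw_bytes, user_bytes):
    """Return spare area as a fraction of (raw capacity, user capacity)."""
    spare = raw_bytes - user_bytes
    return spare / raw_bytes, spare / user_bytes

# Assumed nominal figures for the 500GB BX100 from the thread.
raw = 512 * GiB   # 8 packages x 512Gbit
user = 500 * GB   # advertised user capacity

of_raw, of_user = over_provisioning(raw, user)
print(f"{of_raw:.2%} of raw NAND, {of_user:.2%} of user capacity")
# prints "9.05% of raw NAND, 9.95% of user capacity"
```

Both numbers describe the same spare area; only the denominator differs, which is why the thread quotes roughly 9.1% in one case and 9.9% in the other.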

Squuiid - Sunday, April 12, 2015 - link

Given the problems I and many, many others have had with Crucial's MX100, I would not recommend anyone buy a Crucial SSD. Their firmware dev team are incompetent, no two ways about it. There have been serious power management problems with all of Crucial's SSDs since their C300 was released years ago.

http://forum.crucial.com/t5/Crucial-SSDs/Feedback-...

leexgx - Sunday, April 12, 2015 - link

The current SSDs are not even related to the C300 (which I agree was not a great SSD, as its latency was not very good and under some loads it was slow).