Testing SATA Express And Why We Need Faster SSDs

by Kristian Vättö on March 13, 2014 7:00 AM EST

Posted in: Storage, SSDs, Asus, SATA, SATA Express

NVMe vs AHCI: Another Win for PCIe

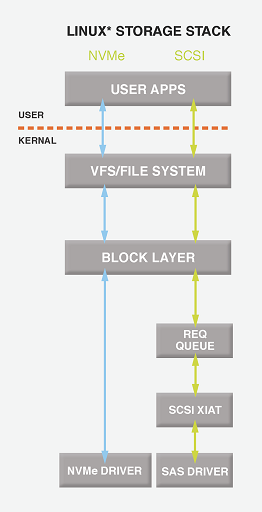

Improving performance is never just about hardware. Faster hardware can only help to reach the limits of software and ultimately more efficient software is needed to take full advantage of the faster hardware. This applies to SSDs as well. With PCIe the potential bandwidth increases dramatically and to take full advantage of the faster physical interface, we need a software interface that is optimized specifically for SSDs and PCIe.

AHCI (Advanced Host Controller Interface) dates back to 2004 and was designed with hard drives in mind. While that doesn't rule out SSDs, AHCI is more optimized for high latency rotating media than low latency non-volatile storage. As a result AHCI can't take full advantage of SSDs and since the future is in non-volatile storage (like NAND and MRAM), the industry had to develop a software interface that abolishes the limits of AHCI.

The result is NVMe, short for Non-Volatile Memory Express. It was developed by an industry consortium with over 80 members, and the development was directed by giants like Intel, Samsung, and LSI. NVMe is built specifically for SSDs and PCIe, and because software interfaces usually live for at least a decade before being replaced, NVMe was designed to meet the industry's needs as we move to future memory technologies (we'll likely see RRAM and MRAM enter the storage market before 2020).

| | NVMe | AHCI |
|---|---|---|
| Latency | 2.8 µs | 6.0 µs |
| Maximum Queue Depth | Up to 64K queues with 64K commands each | Up to 1 queue with 32 commands each |
| Multicore Support | Yes | Limited |
| 4KB Efficiency | One 64B fetch | Two serialized host DRAM fetches required |

Source: Intel

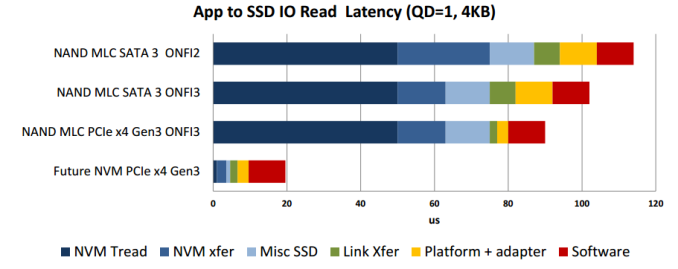

The biggest advantage of NVMe is its lower latency. This is mostly due to a streamlined storage stack and the fact that NVMe requires no register reads to issue a command. AHCI requires four uncacheable register reads per command, which results in ~2.5µs of additional latency.
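To put the quoted figures side by side, here is a rough back-of-the-envelope sketch. It uses only the numbers from Intel's table and the paragraph above; the function and variable names are illustrative, and splitting the ~2.5µs evenly across the four reads is an assumption, not something the source states.

```python
# Per-command latency figures quoted in the article (source: Intel), in microseconds.
NVME_LATENCY_US = 2.8
AHCI_LATENCY_US = 6.0

# AHCI needs four uncacheable register reads per command, ~2.5 µs in total.
AHCI_REGISTER_READS = 4
AHCI_REGISTER_READ_COST_US = 2.5


def latency_budget():
    """Return the AHCI-vs-NVMe latency gap and how much of it the register reads explain."""
    gap_us = AHCI_LATENCY_US - NVME_LATENCY_US
    # Assumption: the four reads cost roughly the same amount of time each.
    per_read_us = AHCI_REGISTER_READ_COST_US / AHCI_REGISTER_READS
    share_of_gap = AHCI_REGISTER_READ_COST_US / gap_us
    return {
        "gap_us": gap_us,
        "per_read_us": per_read_us,
        "register_share_of_gap": share_of_gap,
    }


budget = latency_budget()
print(f"AHCI is {budget['gap_us']:.1f} µs slower per command")
print(f"Each register read costs about {budget['per_read_us']:.3f} µs")
print(f"Register reads explain about {budget['register_share_of_gap']:.0%} of the gap")
```

Under these assumptions, the register reads alone account for most of the difference between the two interfaces, with the rest attributable to the streamlined storage stack.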

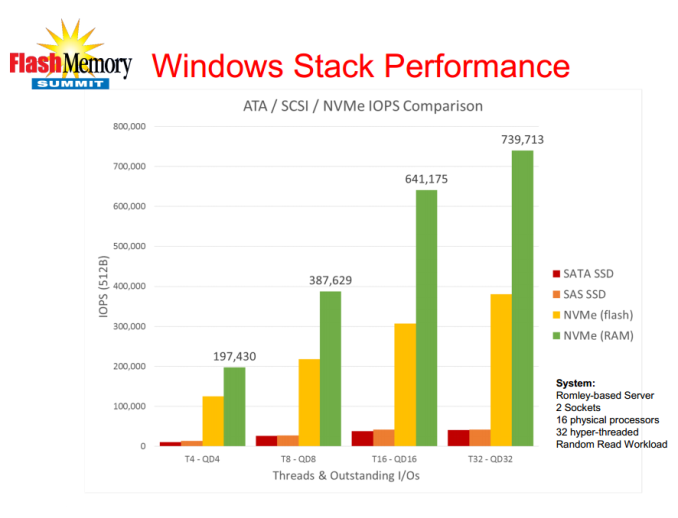

Another important improvement is support for multiple queues and higher queue depths. Multiple queues ensure that the CPU can be used to its full potential and that IOPS are not bottlenecked by the limits of a single core.
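The scale of the difference is easier to see as arithmetic. The sketch below is a simplified illustration: it reads "64K" in the table as 64 × 1024, and `queue_for_core` is a hypothetical round-robin mapping used only to show why a single queue forces every core through the same path. It is not how any particular driver assigns queues.

```python
K = 1024  # Assumption: "64K" in the table means 64 * 1024.


def max_outstanding(queues: int, depth: int) -> int:
    """Upper bound on commands that can be in flight at once."""
    return queues * depth


def queue_for_core(core_id: int, num_queues: int) -> int:
    """Hypothetical mapping of a CPU core to a submission queue (round-robin)."""
    return core_id % num_queues


nvme_outstanding = max_outstanding(64 * K, 64 * K)
ahci_outstanding = max_outstanding(1, 32)

print(f"NVMe: {nvme_outstanding:,} commands in flight at most")
print(f"AHCI: {ahci_outstanding} commands in flight at most")

# With one queue, all four cores contend for queue 0.
print("AHCI core -> queue:", [queue_for_core(c, 1) for c in range(4)])
# With at least one queue per core, each core gets its own queue.
print("NVMe core -> queue:", [queue_for_core(c, 4) for c in range(4)])
```

In practice no client workload comes close to either NVMe limit; the point of the per-core mapping is that cores no longer have to serialize on a single shared queue.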

Source: Microsoft

Obviously enterprise is the biggest beneficiary of NVMe because the workloads are so much heavier and SATA/AHCI can't provide the necessary performance. The client market benefits from NVMe too, just not as much. As I explained on the previous page, even moderate improvements in performance result in increased battery life, and that's what NVMe will offer. Thanks to lower latency, the disk usage time will decrease, which results in more time spent at idle and thus increased battery life. There can also be corner cases where the better queue support helps with performance.

Source: Intel

With future non-volatile memory technologies and NVMe the overall latency can be cut to one fifth of the current ~100µs latency and that's an improvement that will be noticeable in everyday client usage too. Currently I don't think any of the client PCIe SSDs support NVMe (enterprise has been faster at adopting NVMe) but the SF-3700 will once it's released later this year. Driver support for both Windows and Linux exists already, so it's now up to SSD OEMs to release compatible SSDs.
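As a rough illustration of what a fivefold latency cut means, the sketch below converts latency into the number of back-to-back operations a single outstanding request stream could complete per second. It treats the ~100µs figure as exact and assumes queue depth 1 with no overlap between requests, which is a simplification; `qd1_iops` is an illustrative name.

```python
def qd1_iops(latency_us: float) -> float:
    """Operations per second when requests are issued one at a time (queue depth 1)."""
    return 1_000_000 / latency_us


current_latency_us = 100.0                   # the article's "current ~100µs" figure
future_latency_us = current_latency_us / 5   # "cut to one fifth"

print(f"Current: {current_latency_us:.0f} µs -> {qd1_iops(current_latency_us):,.0f} IOPS at QD1")
print(f"Future:  {future_latency_us:.0f} µs -> {qd1_iops(future_latency_us):,.0f} IOPS at QD1")
```

Client workloads mostly run at low queue depths, which is why a latency reduction of this size would be visible in everyday use rather than only in benchmarks.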

131 Comments


Guspaz - Thursday, March 13, 2014

The only justification for why anybody might need something faster than SATA6 seems to be "Uncompressed 4K video is big"... Except nobody uses uncompressed 4K video. Nobody uses it precisely BECAUSE it's so big. 4K cameras all record to compressed formats. REDCODE, ProRes, XAVC, etc. It's true that these still produce a lot of data (they're all intra-frame codecs, which means they compress each frame independently, taking no advantage of similarities between frames), but they're still way smaller than uncompressed video.

JarredWalton - Thursday, March 13, 2014

But when you edit videos, you end up working with uncompressed data before recompressing, in order to avoid losing quality.

willis936 - Thursday, March 13, 2014

The case you described (4K, 12bpc, 24fps) would also take an absolutely monumental amount of RAM. I can't think of using a machine with less than 32GB for that and even then I feel like you'd run out regularly.

Guspaz - Thursday, March 13, 2014

Are you rendering from Premiere to uncompressed video as an intermediate format before recompressing in some other tool? If you're working end-to-end with Premiere (or Final Cut) you wouldn't have uncompressed video anywhere in that pipeline. But even if you're rendering to uncompressed 4K video for re-encoding elsewhere, you'd never be doing that to your local SSD, you'd be doing it to big spinning HDDs or file servers. One hour of uncompressed 4K 60FPS video would be ~5TB. Besides, disk transfer rates aren't going to be the bottleneck on rendering and re-encoding uncompressed 4K video.

Kevin G - Thursday, March 13, 2014

That highly depends on the media you're working with. 4K consumes far too much storage to be usable in an uncompressed manner. Up to 1.6 GByte/s is needed for uncompressed recording. A 1 TB drive would fill up in less than 11 minutes.

As mentioned by others, lossless compression is an option without any reduction in picture quality, though at the expense of the high-performance hardware needed for recording and rendering.

JlHADJOE - Thursday, March 13, 2014

You pretty much have to do it during recording.

Encoding 4k RAW needs a ton of CPU that you might not have inside your camera, not to mention you probably don't want any lossy compression at that point because there's still a lot of processing work to be done.

JlHADJOE - Friday, March 14, 2014

Here's the Red Epic Dragon, a 6k 100fps camera. It uses a proprietary SSD array (likely RAID 0) for storage: http://www.red.com/products/epic-dragon#features

popej - Thursday, March 13, 2014

"idling (with minimal <0.05W power consumption)"

Where did you get this value from? I'm looking at your SSD reviews and clearly see that idle power consumption is between 0.3 and 1.3W, far away from the quoted 0.05W. What is wrong, your assumption here or the measurements in the reviews? Or maybe you measure some other value?

Kristian Vättö - Thursday, March 13, 2014

<0.05W is normal idle power consumption in a mobile platform with HIPM+DIPM enabled: http://www.anandtech.com/bench/SSD/732

We can't measure that in every review because only Anand has the equipment for that (it requires a modified laptop).

dstarr3 - Thursday, March 13, 2014

How does the bandwidth of a single SATAe SSD compare to two SSDs on SATA 6Gb/s in RAID 0? Risk of failure aside.