NVIDIA's G-Sync: Attempting to Revolutionize Gaming via Smoothness

by Anand Lal Shimpi on October 18, 2013 1:25 PM EST

Earlier today NVIDIA announced G-Sync, its variable refresh rate technology for displays. The basic premise is simple. Displays refresh themselves at a fixed interval, but GPUs render frames at a completely independent frame rate. The disconnect between the two is one source of stuttering. You can disable v-sync to try to work around it, but the end result is at best tearing and at worst both stuttering and tearing.

NVIDIA's G-Sync is a combination of software and hardware technologies that allows a modern GeForce GPU to control a variable display refresh rate on a monitor equipped with a G-Sync module. In traditional setups a display will refresh the screen at a fixed interval, but in a G-Sync enabled setup the display won't refresh the screen until it's given a new frame from the GPU.

NVIDIA demonstrated the technology on 144Hz ASUS panels, which obviously caps the max GPU present rate at 144 fps, although that's not a limit of G-Sync itself. There's a lower bound of 30Hz as well, since below that you'll begin to run into issues with flickering. If the frame rate drops below 30 fps, the display will present duplicates of each frame.
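To make those bounds concrete, here is a minimal Python sketch of how a variable refresh display might choose its refresh intervals for a single frame. The 144Hz and 30Hz limits come from the demo described above; the function name `refreshes_for_frame` and the choice to split a slow frame evenly across repeated refreshes are assumptions for illustration, since NVIDIA hasn't detailed how the module actually schedules duplicate frames.

```python
import math

MAX_REFRESH_HZ = 144  # panel limit on the demo displays
MIN_REFRESH_HZ = 30   # lower bound cited to avoid flicker


def refreshes_for_frame(frame_time_ms):
    """Return the refresh intervals (in ms) used to show one frame.

    Hypothetical model: the display refreshes when a new frame arrives,
    but never faster than MAX_REFRESH_HZ or slower than MIN_REFRESH_HZ.
    """
    min_interval = 1000.0 / MAX_REFRESH_HZ  # about 6.94 ms
    max_interval = 1000.0 / MIN_REFRESH_HZ  # about 33.33 ms

    if frame_time_ms <= min_interval:
        # GPU is faster than the panel: the panel's max rate is the cap.
        return [min_interval]
    if frame_time_ms <= max_interval:
        # Normal case: one refresh, timed to match the GPU's frame.
        return [frame_time_ms]

    # Frame took longer than the 30Hz bound: show it more than once.
    # Assumption: the repeats are spaced evenly across the frame time.
    repeats = math.ceil(frame_time_ms / max_interval)
    return [frame_time_ms / repeats] * repeats


print(refreshes_for_frame(20.0))  # 50 fps: one 20 ms refresh
print(refreshes_for_frame(50.0))  # 20 fps: the frame is shown twice
```

In this model a 20 fps frame (50 ms) is shown as two 25 ms refreshes, which keeps the panel above its 30Hz floor while still tracking the GPU.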

There's a bunch of other work done on the G-Sync module side to deal with some funny effects of LCDs when driven asynchronously. NVIDIA wouldn't go into great detail other than to say that there are considerations that need to be taken into account.

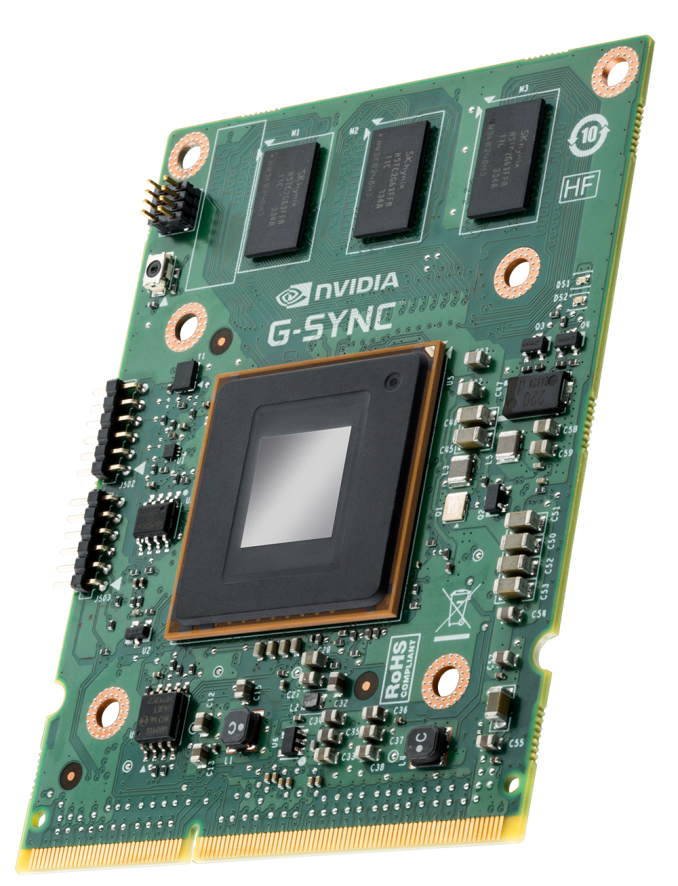

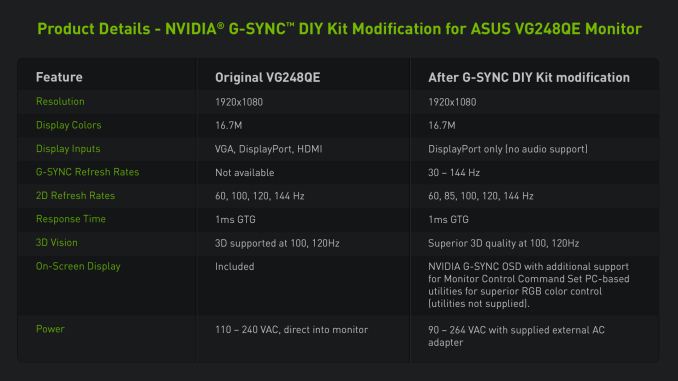

The first native G-Sync enabled monitors won't show up until Q1 next year; however, NVIDIA will be releasing the G-Sync board for modding before the end of this year. Initially the board will support ASUS's VG248QE monitor: end users will be able to mod their monitor to install the board, or alternatively professional modders will be selling pre-modified monitors. In Q1 of next year ASUS will be selling the VG248QE with the G-Sync board built in for $399, while BenQ, Philips, and ViewSonic are also committing to rolling out their own G-Sync equipped monitors next year. I'm hearing that NVIDIA wants to try to get the module down to below $100 eventually. The G-Sync module itself looks like this:

There's a controller and at least 3 x 256MB memory devices on the board, although I'm guessing there's more on the back of the board. NVIDIA isn't giving us a lot of detail here so we'll have to deal with just a shot of the board for now.

Meanwhile we do have limited information on the interface itself; G-Sync is designed to work over DisplayPort (since it’s packet based), with NVIDIA manipulating the timing of the v-blank signal to indicate a refresh. Importantly, this indicates that NVIDIA may not be significantly modifying the DisplayPort protocol, which at least cracks open the door to other implementations on the source/video card side.

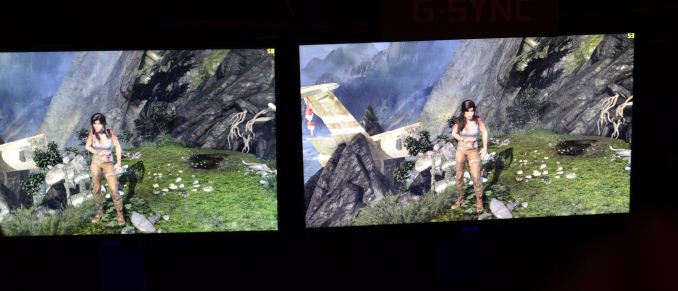

Although we only have limited information on the technology at this time, the good news is we got a bunch of cool demos of G-Sync at the event today. I'm going to have to describe most of what I saw since it's difficult to present this otherwise. NVIDIA had two identical systems configured with GeForce GTX 760s; both featured the same ASUS 144Hz displays but only one of them had NVIDIA's G-Sync module installed. NVIDIA ran through a couple of demos to show the benefits of G-Sync, and they were awesome.

The first demo was a swinging pendulum. NVIDIA's demo harness allows you to set min/max frame times, and for the initial test case we saw both systems running at a fixed 60 fps. The performance on both systems was identical as was the visual experience. I noticed no stuttering, and since v-sync was on there was no visible tearing either. Then things got interesting.

NVIDIA then dropped the frame rate on both systems down to 50 fps, once again static. The traditional system started to exhibit stuttering as we saw the effects of having a mismatched GPU frame rate and monitor refresh rate. Since the case itself was pathological in nature (you don't always have a constant mismatch between the two), the stuttering was extremely pronounced. The same demo on the G-Sync system? Flawless, smooth.
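The 50 fps case can be worked through with a small model. The Python sketch below assumes the traditional display was refreshing at 60Hz (the article doesn't state the refresh rate used for this test), that frames finish at perfectly regular intervals, and that a v-synced display shows each frame on the first refresh tick at or after it is ready. `vsync_hold_ticks` is a hypothetical helper, not anything from NVIDIA's demo harness.

```python
import math
from fractions import Fraction


def vsync_hold_ticks(fps, refresh_hz, n_frames):
    """Return how many refresh cycles each frame stays on screen.

    Simplified model (an assumption): frame i is ready at i / fps seconds
    and appears on the first refresh tick at or after that moment.
    Intended for fps <= refresh_hz.
    """
    # Exact rational arithmetic avoids floating point rounding at tick edges.
    ticks_per_frame = Fraction(refresh_hz, fps)
    shown_at = [math.ceil(i * ticks_per_frame) for i in range(n_frames + 1)]
    # A frame is held until the next frame takes over the screen.
    return [later - earlier for earlier, later in zip(shown_at, shown_at[1:])]


print(vsync_hold_ticks(60, 60, 5))  # [1, 1, 1, 1, 1]
print(vsync_hold_ticks(50, 60, 5))  # [2, 1, 1, 1, 1]
```

At a matched 60 fps every frame is held for exactly one refresh. At 50 fps one frame in five is held for two refresh cycles (about 33 ms) while the rest get one (about 16.7 ms), and that uneven cadence is what shows up as stutter. A display that refreshes only when a new frame arrives would instead hold every frame for the same 20 ms.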

NVIDIA then dropped the frame rate even more, down to an average of around 45 fps but also introduced variability in frame times, making the demo even more realistic. Once again, the traditional setup with v-sync enabled was a stuttering mess while the G-Sync system didn't skip a beat.

Next up was disabling v-sync in hopes of reducing stuttering, which resulted in both stuttering (there's still a refresh rate/fps mismatch) and now tearing. The G-Sync system, once again, handled the test case perfectly. It delivered the same smoothness and visual experience as if we were looking at a game rendering perfectly at a constant 60 fps. It's sort of ridiculous and completely changes the overall user experience. Drops in frame rate no longer have to be drops in smoothness. Game devs relying on the presence of G-Sync can throw higher quality effects at a scene since they don't need to be as afraid of frame rate excursions below 60 fps.

Switching gears, NVIDIA also ran a real world demonstration by spinning the camera around Lara Croft in Tomb Raider. The stutter/tearing effects weren't as pronounced as in NVIDIA's test case, but they were both definitely present on the traditional system and completely absent on the G-Sync machine. I can't stress enough just how smooth the G-Sync experience was. It's a game changer.

The combination of technologies like GeForce Experience, having a ton of GPU performance and G-Sync can really work together to deliver a new level of smoothness, image quality and experience in games. We've seen a resurgence of PC gaming over the past few years, but G-Sync has the potential to take the PC gaming experience to a completely new level.

Update: NVIDIA has posted a bit more information about G-Sync, including the specs of the modified Asus VG248QE monitor, and the system requirements.

| NVIDIA G-Sync System Requirements | |
| --- | --- |
| Video Card | GeForce GTX 650 Ti Boost or Higher |
| Display | G-Sync Equipped Display |
| Driver | R331.58 or Higher |
| Operating System | Windows 7/8/8.1 |

217 Comments

MaineG - Saturday, October 19, 2013 - link

take my money. im ready for this technology, this is very game changing and what ive been waiting for. dont need to use lightboost anymore. all i want is smooth buttery gameplay with no input lag and this is it.

SleepyFE - Saturday, October 19, 2013 - link

The problem is output "lag". The input (mouse & keys) works fast enough (unless you are slow) and the rest is processing so...

SlyNine - Saturday, October 19, 2013 - link

So you're telling me that you could tell the difference between 48 (144/3 what a Vsynced 50 would be) and 50 fps. But couldn't tell the difference between 45 and 50.

Now I would agree that 36 is a bit of a dip from 45. But the great thing about 120+ hz screens is with Vsync off its hard to see the tearing at 120hz (8ms before the tear vanishes).

Mondozai - Saturday, October 19, 2013 - link

YazX_, fanboys like you crack me up.

soryuuha - Saturday, October 19, 2013 - link

not going to change my 40" inch TV just to enjoy G-sync feature.

lilkwarrior - Saturday, October 19, 2013 - link

This might sound stupid, but would tech like this help out Oculus Rift be rid of its motion sickness problem?

bji - Saturday, October 19, 2013 - link

I'm no expert on the topic, but it was my understanding that the Oculus Rift motion sickness problem is caused by a disconnect between the visual display of motion and the body's not sensing that motion. I expect it's like how some people (including myself, unfortunately) start to feel sick when reading in the car. When you read in a car, your eyes see a stable picture (unmoving words on a page) and yet your body feels movement as the car bounces around. This mismatch between inputs for some reason causes a feeling of sickness. With the Oculus Rift, the eyes see motion but the body feels nothing, which leads to the same mismatch and the same feeling of sickness.

Improving the smoothness of the motion displayed to the eyes, as this G-Sync technology promises to do, will not alter the fundamental problem, unfortunately. Your eyes will see smoother motion but your body will still revolt because it doesn't feel that motion.

valinor89 - Saturday, October 19, 2013 - link

I think you have it right with the cause of motion sickness in the Oculus Rift but you make a wrong conclusion. As you said it is a problem of lag, you move and the image on your eyes stays static for a small time while your inner ear detects movement, that causes motion sickness, true. But this is why you want to get rid of this perceived lag, so this tech works in the sense that it eliminates part of this lag without displaying image defects and ergo the time in which this lag is apparent can be reduced.

What I believe is that when your inner ear detects movement and our eyes don't detect that same movement you get sick but the reverse is not true. I can watch a movie which moves the camera constantly and not get sick. Or play a FPS controlling the point of view with my mouse and not moving my head and not getting sick.

I hope I make my point clear.

Gigaplex - Sunday, October 20, 2013 - link

In your examples of static images only part of what you see is moving. Your peripheral vision is still static (screen bezel, walls etc behind screen). I don't think that is enough experience to assume you won't get sick with a visor image moving with a static head.

lilkwarrior - Saturday, October 19, 2013 - link

Thanks for the thorough analysis; I never had motion sickness before in the contexts you've described, so it's interesting to hear about it from you.