Battle of the 4 TB NAS Drives: WD Red and Seagate NAS HDD Face-Off

by Ganesh T S on September 4, 2013 6:00 AM EST - Posted in NAS, Seagate, HDDs, Western Digital, Enterprise

Feature Set Comparison

Enterprise hard drives such as the WD Re and WD Se come with features such as real-time linear and rotational vibration correction, dual actuators to improve head positional accuracy, multi-axis shock sensors to detect and compensate for shock events, and dynamic fly-height technology for increasing data access reliability. For the consumer NAS versions, Western Digital incorporates some features in firmware under the NASWare moniker, while Seagate has NASWorks. We have already covered some of these features in our WD Red review last year. These hard drives also expose some of their interesting firmware aspects through their SATA controller, but before looking into those, let us compare the specifications of the four drives being considered today.

| 4 TB NAS Hard Drive Face-Off Contenders | WD Red | Seagate NAS HDD | WD Se | WD Re |
| --- | --- | --- | --- | --- |
| Model Number | WD40EFRX | ST4000VN000 | WD4000F9YZ | WD4000FYYZ |
| Interface | SATA 6 Gbps | SATA 6 Gbps | SATA 6 Gbps | SATA 6 Gbps |
| Advanced Format (AF) | Yes | Yes | Yes | No (512N Sector Size) |
| Rotational Speed | IntelliPower (5400 rpm) | 5900 rpm | 7200 rpm | 7200 rpm |
| Cache | 64 MB | 64 MB | 64 MB | 64 MB |
| Rated Load / Unload Cycles | 300K | 600K | 300K | 600K |
| Non-Recoverable Read Errors / Bits Read | 1 per 10^14 | 1 per 10^14 | 1 per 10^14 | 1 per 10^15 |
| MTBF (hours) | 1M | 1M | 800K | 1.2M |
| Rated Workload | ~120 - 150 TB/yr | < 180 TB/yr? | 180 TB/yr | 550 TB/yr |
| Operating Temperature Range | 0 - 70 C | 0 - 70 C | 5 - 55 C | 5 - 55 C |
| Acoustics (Seek Average - dBA) | 28 | 25 | 34 | 34 |
| Physical Dimensions | 4 in. x 5.787 in. x 1.028 in. / 680 grams | 4 in. x 5.787 in. x 1.028 in. / 610 grams | 4 in. x 5.787 in. x 1.028 in. / 750 grams | 4 in. x 5.787 in. x 1.028 in. / 750 grams |
| Warranty | 3 years | 3 years | 5 years | 5 years |
| Pricing | $213 | $220 | $280 | $383 |
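To put the error-rate and workload rows in perspective, here is a rough back-of-the-envelope sketch. It assumes the rating can be read as an independent per-bit error probability (one error per 10^14 bits read, or 10^15 for the WD Re) and that 4 TB means 4 x 10^12 bytes. Both are simplifications, since the rating is a vendor ceiling and not a measured rate, and the function names are ours.

```python
import math

DRIVE_BYTES = 4e12  # 4 TB in decimal terabytes (assumption)


def p_read_error(bytes_read, bits_per_error):
    """Chance of at least one non-recoverable error while reading bytes_read.

    Treats every bit as an independent trial with probability
    1 / bits_per_error, which is a simplifying assumption.
    """
    bits = bytes_read * 8
    # 1 - (1 - p)^bits, written with log1p/expm1 to avoid rounding loss
    return -math.expm1(bits * math.log1p(-1.0 / bits_per_error))


def full_passes_per_year(workload_tb_per_year, capacity_tb=4):
    """How many times the rated yearly workload covers the whole drive."""
    return workload_tb_per_year / capacity_tb


for label, rating in [("1 per 10^14", 1e14), ("1 per 10^15", 1e15)]:
    print(f"{label}: {p_read_error(DRIVE_BYTES, rating):.1%} per full-drive read")

for drive, tb in [("WD Se", 180), ("WD Re", 550)]:
    print(f"{drive}: {full_passes_per_year(tb):.1f} full-drive passes per year")
```

Under these assumptions, a single end-to-end read of a 4 TB drive has roughly a 27% chance of hitting a non-recoverable error at the 10^14 rating versus about 3% at 10^15, which is one reason the WD Re's rating matters for large arrays.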

Some interesting aspects of the table above deserve a closer look. The Seagate NAS HDD enjoys a 500 rpm advantage in rotational speed over the WD Red, so it shouldn't be a surprise if it comes out in front in some of the benchmarks. It may also mean that the Seagate NAS HDD consumes more power than the WD Red. Seagate also rates the number of load / unload cycles at 600K for the NAS HDD (same as the WD Re). The WD Re and WD Se 4 TB versions weigh 750 grams each and use five 800 GB platters. The WD Red weighs in at 680 g, but the Seagate NAS HDD (with four 1 TB platters) weighs only 610 g and comes in as the lightest of the lot. Considering the data at our disposal, it appears unlikely that the WD Red 4 TB has five platters, but we have reached out to Western Digital to confirm the platter density in the unit (Update: WD got back to us with confirmation that the WD Red 4 TB version has four 1 TB platters).
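As a rough illustration of what the spindle speed difference means, average rotational latency is the time taken for half a revolution. The sketch below applies that textbook formula to the speeds in the table. Treating the WD Red's IntelliPower speed as a fixed 5400 rpm is an assumption taken from the table, and the function name is ours.

```python
def avg_rotational_latency_ms(rpm):
    """Average rotational latency: the time for half a revolution, in ms."""
    seconds_per_revolution = 60.0 / rpm
    return 0.5 * seconds_per_revolution * 1000.0


for drive, rpm in [
    ("WD Red", 5400),
    ("Seagate NAS HDD", 5900),
    ("WD Se / WD Re", 7200),
]:
    print(f"{drive}: {avg_rotational_latency_ms(rpm):.2f} ms")
```

Rotational latency is only one component of access time (seek time and firmware behavior also matter), so this is not a prediction of benchmark results.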

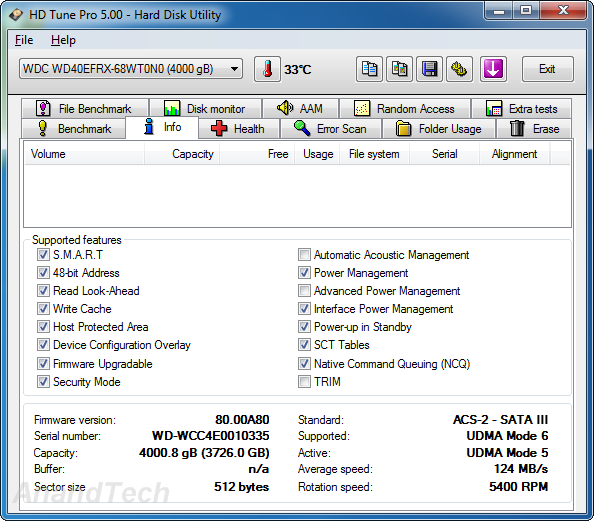

A high level overview of the various supported SATA features is provided by HD Tune Pro v5.00.

The WD Red supports interface power management (termed HIPM or DIPM depending on whether the request to change the power state of the SATA link is initiated by the host or the device), but not advanced power management (APM), while the Seagate NAS HDD supports APM, but not HIPM / DIPM. APM allows setting of the head parking interval. As we saw in the WD Red 3 TB review, on the WD Red this setting is available only through proprietary commands using the WDIDLE tool. By default, it is disabled, which is fine considering the target market for the drives. The Seagate drive, on the other hand, makes it possible for the NAS OS to set the head parking interval. HIPM / DIPM allows further fine-tuning of power consumption, and it is a pity that the Seagate NAS HDD doesn't support it. In terms of the supported features above, the WD Re and Seagate NAS HDD are the same. The WD Se differs from the WD Re / Seagate NAS HDD in that device configuration overlay (DCO) is not supported. DCO allows the hard drive to report modified drive parameters to the host. It is not a big concern for most applications.
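On Linux, one way to check these flags without HD Tune Pro is the `hdparm -I` identify dump, which lists supported features and marks the enabled ones with an asterisk. The sketch below parses that style of listing. The excerpt in `SAMPLE` is hand-written for illustration (it was not captured from any of the four drives), the exact feature strings are an assumption about hdparm's wording, and `parse_features` / `status` are hypothetical helper names.

```python
# Hand-written excerpt in the style of `hdparm -I` output (illustrative only).
SAMPLE = """\
Commands/features:
\tEnabled\tSupported:
\t   *\tSMART feature set
\t    \tAdvanced Power Management feature set
\t   *\tHost-initiated interface power management
\t    \tDevice-initiated interface power management
Security:
"""


def parse_features(text):
    """Map each listed feature name to 'enabled' or 'supported'."""
    features = {}
    in_block = False
    for line in text.splitlines():
        if "Enabled" in line and "Supported" in line:
            in_block = True  # header row of the feature listing
            continue
        if in_block and line and not line[0].isspace():
            in_block = False  # an unindented line starts the next section
        if not in_block or not line.strip():
            continue
        entry = line.strip()
        enabled = entry.startswith("*")  # asterisk marks an enabled feature
        name = entry.lstrip("*").strip()
        features[name] = "enabled" if enabled else "supported"
    return features


def status(features, name):
    """Features absent from the listing are reported as 'not listed'."""
    return features.get(name, "not listed")


features = parse_features(SAMPLE)
for name in (
    "Advanced Power Management feature set",
    "Host-initiated interface power management",
    "Device Configuration Overlay feature set",
):
    print(f"{name}: {status(features, name)}")
```

In practice the text would come from running the tool against a real device; feeding in a captured dump instead of `SAMPLE` is the only change needed.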

We get a better idea of the supported features using FinalWire's AIDA64 system report. The table below summarizes the extra information generated by AIDA64 (that is not already provided by HD Tune Pro).

| Supported Features | WD Red | Seagate NAS HDD | WD Se | WD Re |
| --- | --- | --- | --- | --- |
| DMA Setup Auto-Activate | Supported, Disabled | Supported, Disabled | Supported, Disabled | Supported, Disabled |
| Extended Power Conditions | Not Supported | Not Supported | Supported, Disabled | Supported, Disabled |
| Free-Fall Control | Not Supported | Not Supported | Not Supported | Not Supported |
| General Purpose Logging | Supported | Supported | Supported | Supported |
| In-Order Data Delivery | Not Supported | Not Supported | Not Supported | Not Supported |
| NCQ Priority Information | Supported | Not Supported | Supported | Supported |
| Phy Event Counters | Supported | Supported | Supported | Supported |
| Release Interrupt | Not Supported | Not Supported | Not Supported | Not Supported |
| Sense Data Reporting | Not Supported | Not Supported | Not Supported | Not Supported |
| Software Settings Preservation | Supported, Enabled | Supported, Enabled | Supported, Enabled | Supported, Enabled |
| Streaming | Supported | Supported | Not Supported | Not Supported |
| Tagged Command Queuing | Not Supported | Not Supported | Not Supported | Not Supported |

Interesting aspects are highlighted in the above table. The extended power conditions (EPC) supported in the enterprise drives (WD Se / WD Re) allow for more power states than the usual parked head / spun down disks. These may include states where the electronics are switched off, the heads are unloaded, the disks are spinning at a reduced rpm, and where the motor is completely stopped (or any valid combination thereof). This provides for more fine-tuned tradeoffs between performance (in terms of latency) and power consumption. NCQ priority information adds priority to data in complex workload environments. While WD seems to have it enabled on all its NAS drives, Seagate seems to believe it is unnecessary in the Seagate NAS HDD's target market. A surprising finding in the above run was that the two enterprise drives from WD don't support the NCQ streaming feature, which enables isochronous data transfers for multimedia streams while also improving performance of lower priority transfers. This feature could be very useful for media server and video editing use-cases. Fortunately, both the WD Red and Seagate NAS HDD support this feature.
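Tools such as AIDA64 derive rows like these from the drive's ATA IDENTIFY DEVICE data, a block of 256 16-bit words. The sketch below decodes a handful of the rows from three of those words. The bit positions are given as we understand them from the ATA command set and the Linux `ata.h` header and should be checked against the specification before being relied upon. The sample words are constructed by hand to reproduce the WD Red column and were not dumped from a drive, and `decode` is a hypothetical helper name.

```python
# Word indices in the IDENTIFY DEVICE data (assumed from the ATA spec).
WORD_SATA_CAPS = 76       # Serial ATA capabilities
WORD_SATA_SUPPORTED = 78  # Serial ATA features supported
WORD_SATA_ENABLED = 79    # Serial ATA features enabled

# Capabilities that are either present or absent.
CAPS_BITS = {
    "Phy Event Counters": 10,
    "NCQ Priority Information": 12,
}

# Features with separate "supported" and "enabled" bits.
FEATURE_BITS = {
    "DMA Setup Auto-Activate": 2,
    "In-Order Data Delivery": 4,
    "Software Settings Preservation": 6,
}


def bit(word, n):
    """True if bit n of a 16-bit word is set."""
    return bool((word >> n) & 1)


def decode(identify):
    """Build table-style status strings from a list of IDENTIFY words."""
    report = {}
    for name, n in CAPS_BITS.items():
        supported = bit(identify[WORD_SATA_CAPS], n)
        report[name] = "Supported" if supported else "Not Supported"
    for name, n in FEATURE_BITS.items():
        if not bit(identify[WORD_SATA_SUPPORTED], n):
            report[name] = "Not Supported"
            continue
        enabled = bit(identify[WORD_SATA_ENABLED], n)
        report[name] = "Supported, " + ("Enabled" if enabled else "Disabled")
    return report


# Hand-built words that mimic the WD Red column (illustrative only).
words = [0] * 256
words[WORD_SATA_CAPS] = (1 << 10) | (1 << 12)
words[WORD_SATA_SUPPORTED] = (1 << 2) | (1 << 6)
words[WORD_SATA_ENABLED] = 1 << 6

for feature, state in decode(words).items():
    print(f"{feature}: {state}")
```

Rows such as Extended Power Conditions and Streaming live in other IDENTIFY words and are left out here to keep the sketch to bit positions we are reasonably sure of.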

54 Comments

Gigaplex - Wednesday, September 25, 2013 - link

Avoid Storage Spaces from Windows. It's an unproven and slow "re-imagination" of RAID as Microsoft likes to call it. The main selling point is flexibility of adding more drives, but that feature doesn't work as advertised because it doesn't rebalance. If you avoid adding more drives over time it has no benefits over conventional RAID, is far slower, and has had far less real world testing on it.

Bob Todd - Monday, September 9, 2013 - link

For home use I've gone from RAID 5 to pooling + snapshot parity (DriveBender and SnapRAID respectively). It's still one big ass pool so it's easy to manage, I can survive two disks failing simultaneously with no data loss, and even in the event of a disaster where 3+ fail simultaneously I'll only lose whatever data was on the individual disks that croaked. Storage Spaces was nice in theory, but the write speed for the parity spaces is _horrendous_, and it's still striped so I'd risk losing everything (not to mention expansion in multiples of your column size is a bitch for home use).

coolviper777 - Tuesday, October 1, 2013 - link

If you have a good hardware raid card, with BBU and memory, and decent drives, then I think Raid 5 works just fine for home use.

I currently have a Raid 5 array using a 3Ware 9560SE Raid card, consisting of 4 x 1.5TB WD Black drives. This card has battery backup and onboard memory. My RAID 5 array works beautifully for my home use. I ran into an issue with a drive going bad. I was able to get a replacement, and the rebuild worked well. There's an automatic volume scan once a week, and I've seen it fix a few errors quite a while ago. But nothing very recent.

I get tremendous speed out of my Raid5, and even boot my Windows7 OS from a partition on the Raid 5. Probably, eventually move that to a SSD, but they're still expensive for the size I need for the C: drive.

My biggest problem with Raid1 is that it's hugely wasteful in terms of disk space, and it can be slower than just a single drive. I can understand for mission critical stuff, Raid5 might give issues. However, for home use, if you combine true hardware Raid5 with backup of important files, I think it's a great solution in terms of reliability and performance.

tjoynt - Wednesday, September 4, 2013 - link

++ this. At work we *always* use raid-6: nowadays single drive redundancy is a disaster just waiting to happen.

brshoemak - Wednesday, September 4, 2013 - link

"First off, error checking should in general be done by the RAID system, not by the drive electronic."

The "should in general" part is where the crux of the issue lies. A RAID controller SHOULD take over the error-correcting functions if the drive itself is having a problem - but it doesn't do it exclusively, it lets the drives have a first go at it. A non-ERC/TLER/CCTL drive will keep working on the problem for too long and not pass the reins to the RAID controller as it should.

Also, RAID1 is the most basic RAID level in terms of complexity and I wouldn't have any qualms about running consumer drives in a consumer setting - as long as I had backups. But deal with any RAID level beyond RAID1 (RAID10/6), especially those that require parity data, and you could be in for a world of hurt if you use consumer drives.

Egg - Wednesday, September 4, 2013 - link

No. Hard drives have, for a very very long time, included their own error checking and correcting codes, to deal with small errors. Ever heard of bad blocks?

RAID 1 exists to deal more with catastrophic failures of entire drives.

tjoynt - Wednesday, September 4, 2013 - link

RAID systems can't do error checking at that level because they don't have access to it: only the drive electronics do.

The problems with recovering RAID arrays don't usually show up with RAID-1 arrays, but with RAID-5 arrays, because you have a LOT more drives to read.

I swore off consumer level raid-5 when my personal raid-5 (on an Intel Matrix RAID-5 :P) dropped two drives and refused to rebuild with them even though they were still perfectly functional.

Rick83 - Thursday, September 5, 2013 - link

Just fix it by hand - it's not that difficult. Of course, with pseudo hardware RAID, you're buggered, as getting the required access to the disk, and forcing partial rebuilds isn't easily possible.

I've had a second disk drop out on me once, and I don't recall how exactly I ended up fixing it, but it was definitely possible. I probably just let the drive "repair" the unreadable sectors by writing 512 rubbish bytes to the relevant locations, and tanked the loss of those few bytes, then rebuilt to the redundancy disk.

So yeah, there probably was some data loss, but bad sectors aren't the end of the world.

And by using surface scans you can make the RAID drop drives with bad sectors at the first sign of an issue, then resync and be done with it. 3-6 drive RAID 5 is perfectly okay, if you only have intermediate availability requirements. For high availability RAID 6/RAID 10 arrays with 6-12 disks are a better choice.

mooninite - Thursday, September 5, 2013 - link

Intel chipsets do not offer hardware RAID. The RAID you see is purely software. The Intel BIOS just formats your hard drive with Intel's IMSM (Intel Matrix Storage Manager) format. The operating system has to interpret the format and do all the RAID parity/stripe calculations. Consider it like a file system.

Calling Intel's RAID "hardware" or "pseudo-hardware" is a misconception I'd like to see die. :)

mcfaul - Tuesday, September 10, 2013 - link

"First off, error checking should in general be done by the RAID system, not by the drive electronic."

You need to keep in mind how drives work. They are split into 512-byte / 4 KB sectors... and each sector has a significant chunk of ECC at the end of the sector, so all drives are continually doing both error checking and error recovery on every single read they do.

plus, if it is possible to quickly recover an error, then obviously it is advantageous for the drive to do this, as there may not be a second copy of the data available (i.e. when rebuilding a RAID 1 or RAID 5 array)