NVIDIA GeForce GTX 770 Review: The $400 Fight

by Ryan Smith on May 30, 2013 9:00 AM EST

Power, Temperature, & Noise

As always, last but not least is our look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason – or sufficiently good performance – to ignore the noise.

GTX 770 ends up being an interesting case study in all three factors because NVIDIA is pushing the GK104 GPU so hard. Though the old version of GPU Boost muddles things somewhat, there’s no denying that higher clockspeeds coupled with the higher voltages needed to reach those clockspeeds have a notable impact on power consumption. This makes it very hard for NVIDIA to stick to their efficiency curve, since adding voltage and clockspeed offers diminishing returns for the increase in power consumption.

GeForce GTX 770 Voltages

| GTX 770 Max Boost | GTX 680 Max Boost | GTX 770 Idle |
|---|---|---|
| 1.2v | 1.175v | 0.862v |

As we can see, NVIDIA has pushed up their voltage from 1.175v on GTX 680 to 1.2v on GTX 770. This buys them the increased clockspeeds they need, but it will drive up power consumption. At the same time, GPU Boost 2.0 helps to counter this somewhat, as it keeps leakage from becoming overwhelming by holding GPU temperatures at or below 80C.
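As a rough illustration of why even a small voltage bump matters, the sketch below applies the textbook CMOS approximation that dynamic power scales with clockspeed times voltage squared. That model is an assumption, not something measured in this review; it ignores leakage entirely, and the function name is hypothetical. Only the two voltages come from the table above.

```python
def dynamic_power_ratio(v_new, v_old, f_new=1.0, f_old=1.0):
    """Relative dynamic power under the P ~ C * V^2 * f approximation.

    Assumption: switched capacitance is unchanged and leakage is ignored.
    """
    return (v_new / v_old) ** 2 * (f_new / f_old)


# Voltages from the table above: GTX 770 vs. GTX 680 at max boost.
voltage_only = dynamic_power_ratio(1.2, 1.175)
print(f"Voltage alone: {100 * (voltage_only - 1):.1f}% more dynamic power")
```

Under this simplified model the voltage increase alone is worth roughly 4% more dynamic power at a fixed clockspeed, and any clockspeed increase multiplies on top of that.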

GeForce GTX 770 Average Clockspeeds

| Max Boost Clock | 1136MHz |
|---|---|
| DiRT:S | 1136MHz |
| Shogun 2 | 1136MHz |
| Hitman | 1136MHz |
| Sleeping Dogs | 1102MHz |
| Crysis | 1136MHz |
| Far Cry 3 | 1136MHz |
| Battlefield 3 | 1136MHz |
| Civilization V | 1136MHz |
| Bioshock Infinite | 1128MHz |
| Crysis 3 | 1136MHz |

Speaking of clockspeeds, we also took the average clockspeeds for GTX 770 in our games. In short, GTX 770 is almost always at its maximum boost bin of 1136MHz; the oversized Titan cooler keeps temperatures just below the thermal throttle point, and there’s enough TDP headroom left that the card doesn’t need to pull back on that account either. This is one of the reasons why GTX 770’s performance advantage over GTX 680 is greater than the clockspeed increases alone would suggest.
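To put a number on "almost always", the per-game averages from the table can be summarized in a few lines. This is only a sketch over the ten values listed above; the variable names are ours and nothing here is measured beyond what the table reports.

```python
from statistics import mean

MAX_BOOST_MHZ = 1136

# Per-game average clockspeeds (MHz), copied from the table above.
avg_clocks = {
    "DiRT:S": 1136,
    "Shogun 2": 1136,
    "Hitman": 1136,
    "Sleeping Dogs": 1102,
    "Crysis": 1136,
    "Far Cry 3": 1136,
    "Battlefield 3": 1136,
    "Civilization V": 1136,
    "Bioshock Infinite": 1128,
    "Crysis 3": 1136,
}

overall = mean(avg_clocks.values())
at_max = [game for game, mhz in avg_clocks.items() if mhz == MAX_BOOST_MHZ]
slowest = min(avg_clocks, key=avg_clocks.get)

print(f"Mean across games: {overall:.1f}MHz")
print(f"Games at max boost: {len(at_max)} of {len(avg_clocks)}")
print(f"Lowest: {slowest} at {avg_clocks[slowest]}MHz")
```

Eight of the ten games sit at the top boost bin, and the lowest result (Sleeping Dogs) is only 34MHz short of it.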

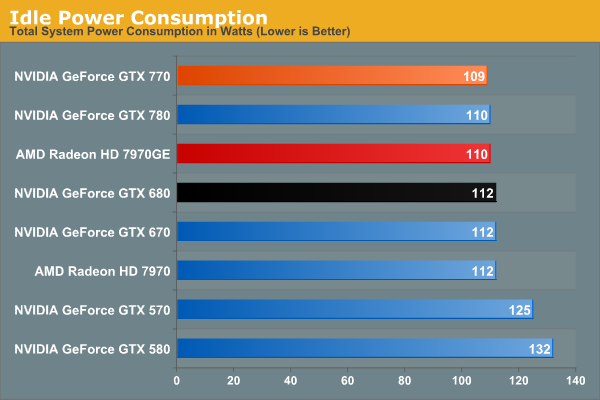

We don’t normally publish this data, but GTX 770 has one especially interesting attribute: its idle clockspeed is lower than other Kepler parts. GTX 680 and GTX 780 both idle at 324MHz, but GTX 770 idles at 135MHz. Even 324MHz has proven low enough to keep Kepler’s idle power in check in the past, so it’s not entirely clear just what NVIDIA is expecting here. We’re seeing 1W less at the wall, but by this point the rest of our testbed is drowning out the video card.

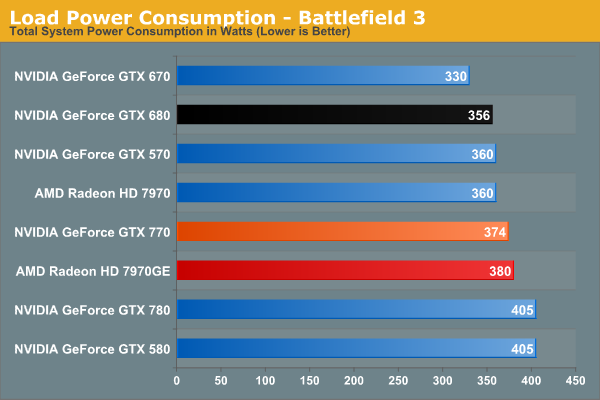

Moving on to BF3 power consumption, we can see the power cost of GTX 770’s performance. 374W at the wall is only 18W more than GTX 680, thanks in part to the fact that GTX 770 isn’t hitting its TDP limit here. At the same time, compared to the outgoing GTX 670, this is a 44W difference, which makes it very clear that GTX 770 is not a drop-in replacement for GTX 670 as far as power and cooling go. On the other hand, GTX 770 and GTX 570 are very close, even if GTX 770’s TDP is technically a bit higher than GTX 570’s.

Despite this runup, GTX 770 still stays a hair under 7970GE, even with the slightly higher CPU power consumption that comes from GTX 770’s higher performance in this benchmark. It’s only 6W at the wall, but it showcases that NVIDIA didn’t have to completely blow their efficiency curve to get a GK104 card back up to 7970GE performance levels.

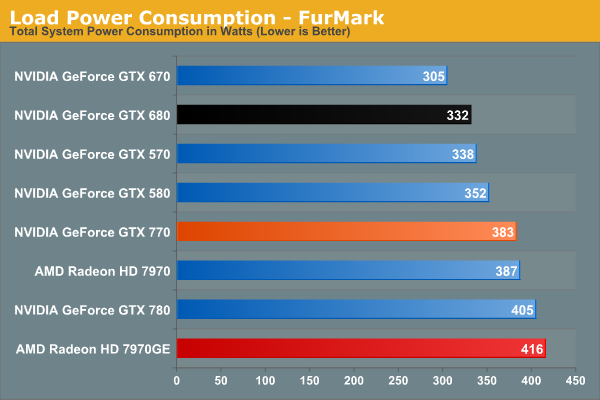

In our TDP constrained scenario we can see the gaps between our cards grow. 78W separates the GTX 770 from GTX 670, and even GTX 680 draws 41W less, almost exactly what we’d expect from their published TDPs. On the flip side of the coin 383W is still less than both 7970 cards, reflecting the fact that GTX 770 is geared for 230W while AMD’s best is geared for 250W.

This is also a reminder, however, that with a mid-generation product extra performance does not come for free. With the same process and the same architecture, performance increases require power increases. This won’t significantly change until we see 20nm cards next year.
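The wall-power gaps quoted above for Battlefield 3 and FurMark can be turned back into implied absolute figures with simple subtraction. The sketch below does only that; the results are derived from the deltas in the text rather than read off the charts, and the names are hypothetical. Keep in mind these are whole-system numbers, so the percentages understate the difference between the cards themselves.

```python
# Total system power at the wall (W) for GTX 770, as quoted in the text.
GTX770_WALL = {"BF3": 374, "FurMark": 383}

# How many watts less each comparison card draws than GTX 770, per the text.
DELTA_BELOW_770 = {
    "BF3": {"GTX 680": 18, "GTX 670": 44},
    "FurMark": {"GTX 680": 41, "GTX 670": 78},
}


def implied_wall_power(load):
    """Return the implied wall power (W) of each comparison card for a load."""
    base = GTX770_WALL[load]
    return {card: base - delta for card, delta in DELTA_BELOW_770[load].items()}


for load, base in GTX770_WALL.items():
    for card, watts in implied_wall_power(load).items():
        pct = 100 * (base - watts) / watts
        print(f"{load}: {card} ~{watts}W, GTX 770 draws {pct:.1f}% more at the wall")
```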

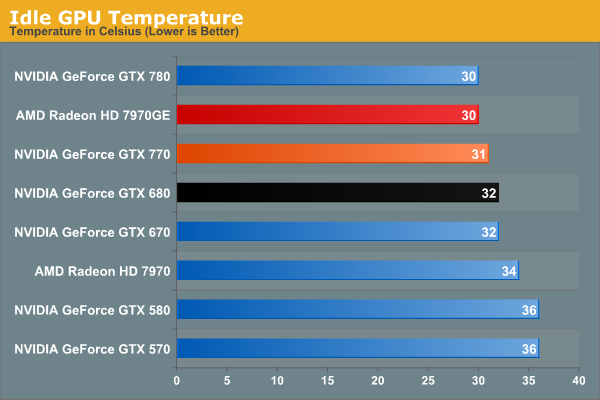

Moving on to temperatures, these are going to be a walk in the park for the reference GTX 770 due to the Titan cooler. At idle we see it hit 31C, which is actually 1C warmer than GTX 780, but this really just comes down to uncontrollable variations in our tests.

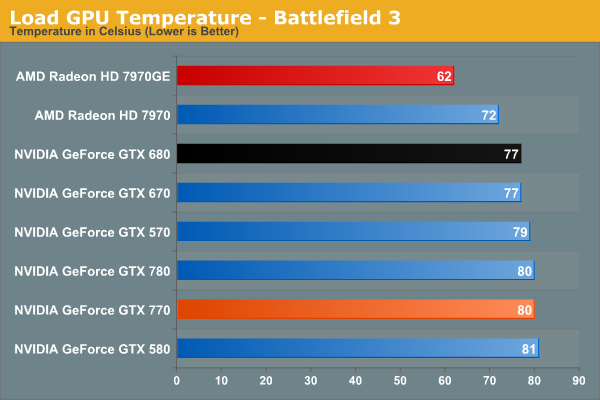

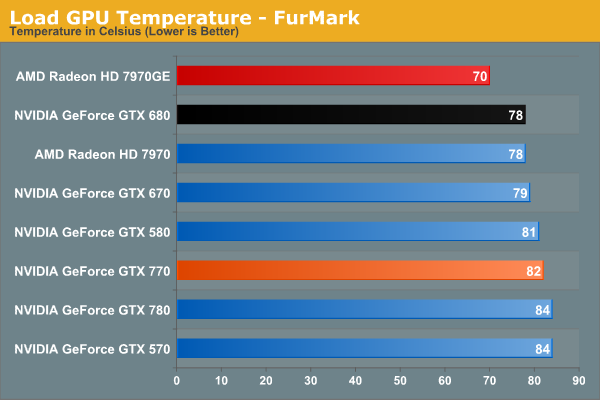

As a GPU Boost 2.0 card, GTX 770 will top out at 80C in games, and that’s exactly what happens here. Interestingly, GTX 770 is only just hitting 80C, as evidenced by our clockspeeds earlier. If it were running hotter, it would have needed to drop to lower clockspeeds.

Of course it doesn’t hold a candle here to 7970GE, but that’s the difference between a blower and an open air cooler in action. The blower based 7970 is much closer, as we’d expect.

Under FurMark the temperature situation is largely the same. The GTX 770 comes up to 82C here (favoring TDP throttling over temperature throttling), but the relative rankings are consistent.

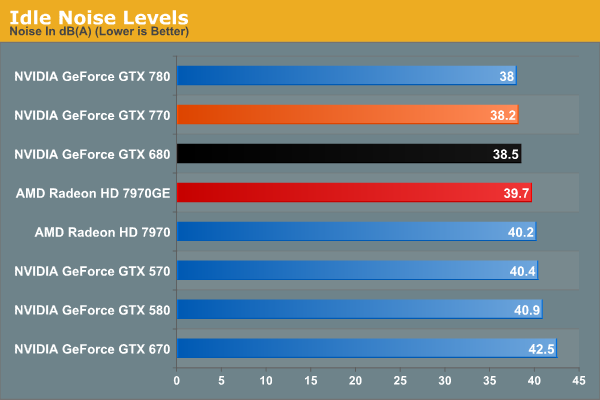

With Titan’s cooler in tow, idle noise looks very good on GTX 770.

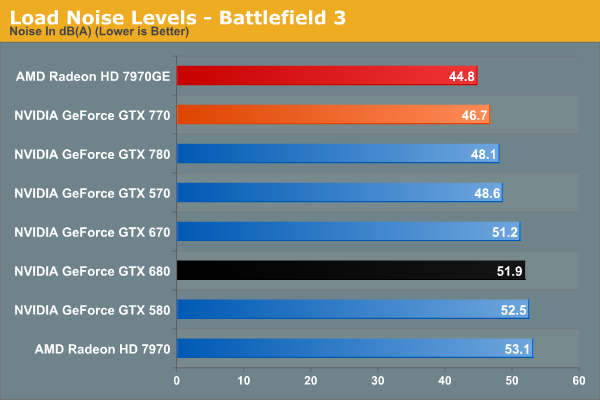

Our noise results under Battlefield 3 are a big part of the reason we’ve been calling the Titan cooler oversized for GTX 770. When was the last time we saw a blower on a 230W card that only hit 46.7dB? The short answer is never. GTX 770’s fan simply doesn’t have to rev up very much to handle the lesser heat output. In fact it’s damn near competitive with the open air cooled 7970GE; there’s still a difference, but it’s under 2dB. More importantly however, despite being a more powerful and more power-hungry card than the GTX 680, the GTX 770 is over 5dB quieter, and this is despite the fact that the GTX 680 is already a solid card on its own. Titan’s cooler is certainly expensive, but it gets results.
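Because decibels are logarithmic, gaps of 2dB and 5dB are larger than they look. The sketch below converts a dB difference into linear ratios using the standard definitions; it assumes the charts report ordinary sound pressure levels, and it says nothing about perceived loudness, which does not track either ratio directly. The function names are ours.

```python
def sound_power_ratio(delta_db):
    """Linear sound power (intensity) ratio for a level difference in dB."""
    return 10 ** (delta_db / 10)


def sound_pressure_ratio(delta_db):
    """Linear sound pressure ratio for a level difference in dB."""
    return 10 ** (delta_db / 20)


# Differences quoted in the text: under 2dB vs. 7970GE, over 5dB vs. GTX 680.
for label, delta in [("7970GE gap (upper bound)", 2.0),
                     ("GTX 680 gap (lower bound)", 5.0)]:
    print(f"{label}: {delta}dB -> "
          f"{sound_power_ratio(delta):.2f}x power, "
          f"{sound_pressure_ratio(delta):.2f}x pressure")
```

By this measure a 5dB advantage corresponds to roughly a threefold difference in sound power, while a 2dB gap is closer to 1.6x.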

Of course this is why it’s all the more a shame that none of NVIDIA’s partners are releasing retail cards with this cooler. There are some blowers in the pipeline, so it will be interesting to see if they can maintain Titan’s performance while giving up the metal.

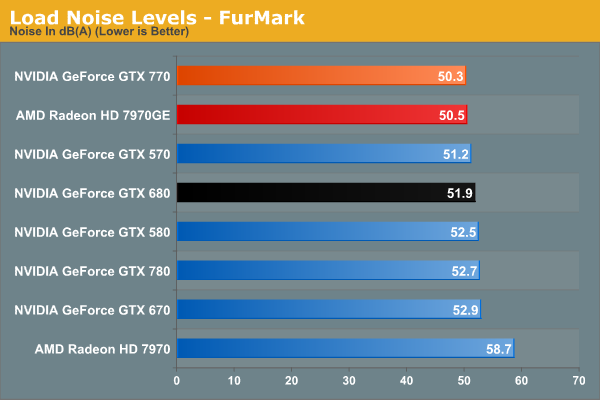

With FurMark pushing our GTX 770 at full TDP, our noise results are still good, but not as impressive as they were under BF3. 50.3dB is still over a dB quieter than GTX 680, though obviously much closer than before. On the other hand the GTX 770 ever so slightly edges out the 7970GE and its open air cooler. Part of this comes down to the TDP difference of course, but beating an open air cooler like that is still quite the feat.

Wrapping things up here, it will be interesting to see where NVIDIA’s partners go with their custom designs. GTX 770, despite being a higher TDP part than both GTX 670 and GTX 680, ends up looking very impressive when it comes to noise, and it would be great to see NVIDIA’s partners match that. At the same time the increased power consumption and heat generation relative to the GeForce 600 series is unfortunate, but not unexpected. For buyers coming from the GeForce 400 and GeForce 500 series, however, GTX 770 is in line with what those previous generation cards were already pulling.

117 Comments


JDG1980 - Thursday, May 30, 2013 - link

TechPowerUp ran tests of three GTX 770s with third-party coolers (Asus DirectCU, Gigabyte WindForce, and Palit JetStream). All three beat the GTX 770 reference on thermals for both idle and load. Noise levels varied, but the DirectCU seemed to be the winner since it was quieter than the reference cooler on both idle and load. That card also was a bit faster in benchmarks than the reference.

That said, I agree the build quality of the reference cooler is better than the aftermarket substitutes - but Asus is probably a close second. Their DirectCU series has always been very good.

ArmedandDangerous - Thursday, May 30, 2013 - link

This article is in desperate need of some editing work. Spelling and comprehension errors throughout.

Nighyal - Thursday, May 30, 2013 - link

I asked this on the 780 review, and it seems like it might be even more interesting for the 770 considering Nvidia basically threw more power at a 680, but a performance per watt comparison would be great. If there was something that clearly showed the efficiency of each card in a way (maybe using a fixed work load) it would be interesting to see. Especially when compared to similar architectures or when comparing AMD's efforts with the GHz editions.

ThIrD-EyE - Thursday, May 30, 2013 - link

Since when did 70-80C temperatures become acceptable? I had been looking to upgrade my MSI Cyclone GTX 460 which would never hit higher than 62C and I got a great deal on 2 560TIs for less than half the cost of them new. I have run them in single card and SLI; I see 80C+ when I run an overclock program like MSI Afterburner. I use a custom fan profile to bring the temps down to 75C or less at higher fan speed, but still in reasonable noise levels. It's still not quite enough.

All these video cards may be fine at these temperatures, but when you are sitting next to the case and there is 80C being pumped out, you really feel it. Especially now with Summer heat finally hitting where I live. My $25 Hyper212+ keeps my OC'ed i7 2600k at a good 45-50C when playing games. I would buy aftermarket coolers if they were not going to take up 3 slots each (I have a card that I need, but would have to be removed.) and didn't cost nearly as much as I paid for the cards.

AMD, NVIDIA and card partners need to work on bringing temperatures down.

quorm - Thursday, May 30, 2013 - link

lower temperature readings do not mean less heat produced. better cooling just moves the heat from the GPU to your room more efficiently.

ThIrD-EyE - Thursday, May 30, 2013 - link

The architecture of these video cards was obviously made for performance first. That does not mean they can't also work on lowering power consumption to lower the heat produced. One thing that I've found to help my situation is to set all games to run at 60fps without vsync if possible, which thankfully is most of the games I play. Some games become unplayable or wonky with vsync and other ways of limiting fps without vsync, so I just deal with the heat from no fps limits.

I hope that the developers of console ports from PS4 and Xbox One put in an fps limit option like Borderlands 2 if they don't allow dev console access.

MattM_Super - Friday, May 31, 2013 - link

Although it's not currently accessible from the driver control panel, Nvidia drivers have a built in fps limiter that I use in every game I play (never had any issues with it). You can access it with NvidiaInspector.

DanNeely - Thursday, May 30, 2013 - link

Since 70-80C has always been the best a blower style cooler can do on a high power GPU without getting obscenely loud, and blowers have proven to be the best option to avoid frying the GPU in a case with horrible ventilation. IOW about when both nVidia and ATI adopted blowers for their reference designs.

JPForums - Thursday, May 30, 2013 - link

70C-80C temperatures became acceptable after nVidia decided to release Fermi based cards that regularly hit the mid 90Cs. Since then, the temperatures have in fact come down. Of course, they are still high for my liking and I pay extra for cards with better coolers (I.E. MSI TwinFrozer, Asus DirectCU). That said, there is only so much you can do when pushing 3 times the TDP of an Intel Core i7-3770K while cooling it with a cooler that is both lighter and less ideally formed for the task (Comparing some of the best GPU coolers to any number of heatsinks from Noctua, Thermalright, etc.). Water cooling loops work wonders, but not everyone wants the expense or hassle.

Rick83 - Friday, May 31, 2013 - link

The higher the temperatures, the less fan speed you need, because you have higher delta-theta between the air entering the cooler and the cooling fins, which results in more energy transfer at less volume throughput.

Obviously the temperature is a pure function of the fan curve under load, and has very little to do with the actual chip (unless you go so far down in energy output, that you can rely on passive convection).