Intel Iris Pro 5200 Graphics Review: Core i7-4950HQ Tested

by Anand Lal Shimpi on June 1, 2013 10:01 AM EST

Battlefield 3

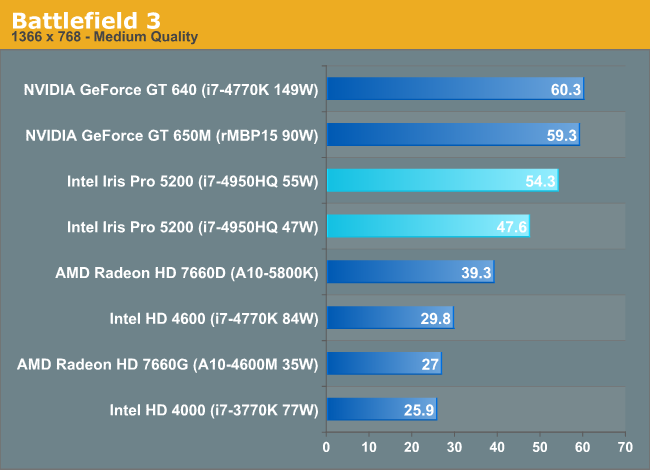

Our multiplayer action game benchmark of choice is Battlefield 3, DICE’s 2011 multiplayer military shooter. Its ability to pose a significant challenge to GPUs has been dulled some by time and drivers at the high-end, but it’s still a challenge for more entry-level GPUs such as the iGPUs found on Intel and AMD's latest parts. Our goal here is to crack 60fps in our benchmark, as our rule of thumb based on experience is that multiplayer framerates in intense firefights will bottom out at roughly half our benchmark average, so hitting medium-high framerates here is not necessarily high enough.
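As a rough illustration of that rule of thumb, the sketch below halves a benchmark average to estimate the floor during heavy firefights. The function name and the sample averages are hypothetical and are not measured results from this review.

```python
TARGET_AVG_FPS = 60  # benchmark goal stated in the text


def estimated_firefight_fps(benchmark_avg_fps: float) -> float:
    """Rule of thumb: intense multiplayer firefights bottom out
    at roughly half of the benchmark average."""
    return benchmark_avg_fps / 2


# Hypothetical sample averages, for illustration only.
for avg in (40, 60):
    floor = estimated_firefight_fps(avg)
    meets_goal = avg >= TARGET_AVG_FPS
    print(f"avg {avg} fps -> about {floor:.0f} fps in firefights (goal met: {meets_goal})")
```

Under this estimate, a 60fps benchmark average corresponds to roughly 30fps at the worst moments, which is why the target is set that high.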

The move to 55W brings Iris Pro much closer to the GT 650M, with NVIDIA's advantage falling to less than 10%. At 47W, Iris Pro isn't able to remain at max turbo for as long. The soft configurable TDP is responsible for nearly a 15% increase in performance here.

Iris Pro continues to put all other integrated graphics solutions to shame. The 55W 5200 is over 2x the speed of both the desktop HD 4000 and mobile Trinity. There's even a healthy gap between it and desktop Trinity/Haswell.

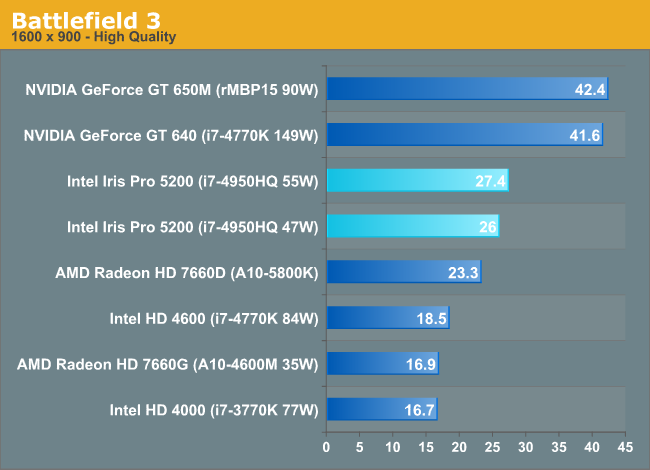

Ramp up resolution and quality settings and Iris Pro once again looks far less like a discrete GPU. NVIDIA holds over a 50% advantage here. Once again I don't believe this is memory bandwidth related; Crystalwell appears to be doing its job. Instead it looks like a fundamental GPU architecture issue.

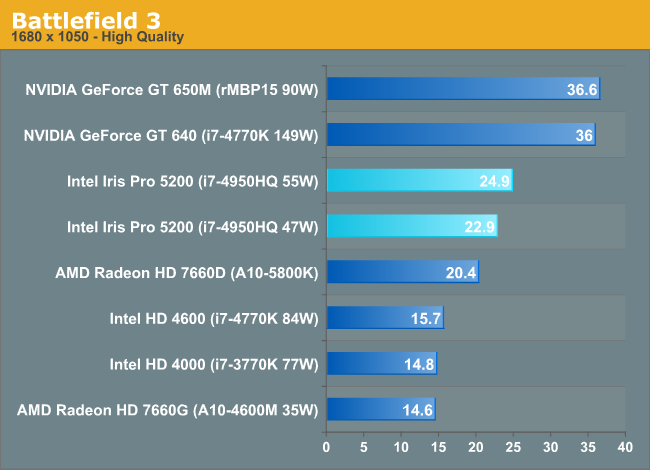

The gap narrows slightly with an increase in resolution, perhaps indicating that as the limits shift to memory bandwidth Crystalwell is able to win some ground. Overall, there's just an appreciable advantage to NVIDIA's architecture here.

The iGPU comparison continues to be an across the board win for Intel. It's amazing what can happen when you actually dedicate transistors to graphics.

177 Comments

Death666Angel - Tuesday, June 4, 2013 - link

"What Intel hopes however is that the power savings by going to a single 47W part will win over OEMs in the long run, after all, we are talking about notebooks here."

This plus simpler board designs and fewer voltage regulators and less space used.

And I agree, I want this in a K-SKU.

Death666Angel - Tuesday, June 4, 2013 - link

And doesn't MacOS support Optimus?

RE: "In our 15-inch MacBook Pro with Retina Display review we found that simply having the discrete GPU enabled could reduce web browsing battery life by ~25%."

GullLars - Tuesday, June 4, 2013 - link

Those are strong words in the end, but i agree Intel should make a K-series CPU with Crystalwell. What comes to mind is they may be doing that for Broadwell.

The Iris Pro solution with eDRAM looks like a nice fit for what i want in my notebook upgrade coming this fall. I've been getting by on a Core2Duo laptop, and didn't go for Ivy Bridge because there were no good models with a 1920x1200 or 1920x1080 display without dedicated graphics. For a system that will not be used for gaming at all, but needs resolution for productivity, it wasn't worth it. I hope this will change with Haswell, and that i will be able to get a 15" laptop with >= 1200p without dedicated graphics. 4950HQ or 4850HQ seems like an ideal fit. I don't mind spending $1500-2000 for a high quality laptop :)

IntelUser2000 - Tuesday, June 4, 2013 - link

ANAND!!

You got the FLOPs rating wrong on the Sandy Bridge parts. They are at 1/2 of Ivy Bridge.

1350MHz with 12 EUs and 8 FLOPs/EU will result in 129.6GFlops. While it's true in very limited scenarios Sandy Bridge's iGPU can co-issue, it's small enough to be non-existent. That is why a 6EU HD 2500 comes close to 12EU HD 3000.
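The arithmetic in the comment above can be checked with a short sketch. It assumes theoretical peak throughput is simply clock × EU count × FLOPs per EU per clock. The function name is hypothetical, and the 16 FLOPs/EU figure is only inferred from the commenter's "1/2 of Ivy Bridge" remark, not taken from the review.

```python
def peak_gflops(clock_mhz: int, eus: int, flops_per_eu_per_clock: int) -> float:
    """Theoretical peak throughput in GFLOPS.

    MHz x EUs x FLOPs/EU/clock gives MFLOPS; dividing by 1000 gives GFLOPS.
    """
    return clock_mhz * eus * flops_per_eu_per_clock / 1000


# Figures quoted in the comment: 1350MHz, 12 EUs, 8 FLOPs/EU.
sandy_bridge = peak_gflops(1350, 12, 8)

# Assumption: the same part counted at double the per-EU rate (16 FLOPs/EU),
# which is what "1/2 of Ivy Bridge" implies the disputed rating used.
doubled_rate = peak_gflops(1350, 12, 16)

print(f"8 FLOPs/EU:  {sandy_bridge:.1f} GFLOPS")
print(f"16 FLOPs/EU: {doubled_rate:.1f} GFLOPS")
```

With the commenter's inputs this reproduces the 129.6 GFLOPS figure, and doubling the per-EU rate doubles the result.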

Hrel - Tuesday, June 4, 2013 - link

If they use only the HD4600 and Iris Pro that'd probably be better. As long as it's clearly labeled on laptops. HD 4600 Pro (don't expect to do any video work on this) Iris Pro (it's passable in a pinch).

But I don't think that's what's going to happen. Iris Pro could be great for Ultrabooks; I don't really see any use outside of that though. A low end GT740M is still a better option in any laptop that has the thermal room for it. Considering you can put those in 14" or larger ultrabooks I still think Intel's graphics aren't serious. Then you consider the lack of Compute, PhysX, Driver optimization, game specific tuning...

Good to see a hefty performance improvement. Still not good enough though. Also pretty upsetting to see how many graphics SKU's they've released. OEM'S are gonna screw people who don't know just to get the price down.

Hrel - Tuesday, June 4, 2013 - link

The SKU price is 500 DOLLARS!!!! They're charging you 200 bucks for a pretty shitty GPU. Intel's greed is so disgusting it overrides the engineering prowess of their employees. Truly disgusting Intel; to charge that much for that level of performance. AMD we need you!!!!

xdesire - Tuesday, June 4, 2013 - link

May i ask a noob question? Question: Do we have no i5s, i7s WITHOUT on board graphics any more? As a gamer i'd prefer to have a CPU + discrete GPU in my gaming machine and i don't like to have extra stuff stuck on the CPU, lying there consuming power and having no use (for my part) whatsoever. No ivy bridge or haswell i5s, i7s without iGPU or whatever you call it?

flyingpants1 - Friday, June 7, 2013 - link

They don't consume power while they're not in use.

Hrel - Tuesday, June 4, 2013 - link

WHY THE HELL ARE THOSE SO EXPENSIVE!!!!! Holy SHIT! 500 dollars for a 4850HQ? They're charging you 200 dollars for a shitty GPU with no dedicated RAM at all! Just a cache! WTFF!!!

Intel's greed is truly disgusting... even in the face of their engineering prowess.

MartenKL - Wednesday, June 5, 2013 - link

What I don't understand is why Intel didn't do a "next-gen console like processor". Like taking the 4770R and doubling the GPU or even quadrupling, wasn't there space? The thermal headroom must have been there as we are used to CPUs with as high as 130W TDP. Anyhow, combining that with awesome drivers for Linux would have been a real competition to AMD/PS4/XONE for Valve/Steam. A complete system under 150W capable of awesome 1080p60 gaming.

So now I am looking for the best performing GPU under 75W, i.e. no external power. Which is it, still the Radeon HD7750?