NVIDIA GeForce GTX 780 Review: The New High End

by Ryan Smith on May 23, 2013 9:00 AM EST

Software, Cont: ShadowPlay and "Reason Flags"

Along with providing the game optimization service and SHIELD’s PC client, GeForce Experience has another service that’s scheduled to be added this summer. That service is called ShadowPlay, and not unlike SHIELD it’s intended to serve as a novel software implementation of some of the hardware functionality present in NVIDIA’s latest hardware.

ShadowPlay will be NVIDIA’s take on video recording, the novel aspect being that NVIDIA is basing the utility around Kepler’s hardware H.264 encoder. To be clear, video recording software is nothing new: FRAPS, Afterburner, Precision X, and other utilities all do basically the same thing. However, all of those utilities work entirely in software, fetching frames from the GPU and then encoding them on the CPU. The overhead from this is not insignificant, especially due to the CPU time required for video encoding.

With ShadowPlay NVIDIA is looking to spur on software developers by getting into video recording themselves, and to provide superior performance by using hardware encoding. Notably this isn’t something that was impossible prior to ShadowPlay, but for some reason recording utilities that use NVIDIA’s hardware H.264 encoder have been few and far between. Regardless, the end result should be that most of the overhead is removed by relying on the hardware encoder, minimally affecting the GPU while freeing up the CPU, reducing the amount of time spent on data transit back to the CPU, and producing much smaller recordings all at the same time.

ShadowPlay will feature multiple modes. Its manual mode will be analogous to FRAPS, recording whenever the user desires it. The second mode, shadow mode, is perhaps the more peculiar mode. Because the overhead of recording with the hardware H.264 encoder is so low, NVIDIA wants to simply record everything in a very DVR-like fashion. In shadow mode the utility keeps a rolling window of the last 20 minutes of footage, with the goal being that should something happen that the user decides they want to record after the fact, they can simply pull it out of the ShadowPlay buffer and save it. It’s perhaps a bit odd from the perspective of someone who doesn’t regularly record their gaming sessions, but it’s definitely a novel use of NVIDIA’s hardware H.264 encoder.
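To make the shadow mode idea concrete, here is a minimal sketch of a rolling window that only ever holds the most recent 20 minutes of encoded frames. This is our own illustration, not NVIDIA's implementation: the `ShadowBuffer` class, its method names, and the use of per-frame timestamps are all assumptions, and a real H.264 buffer would also have to start any saved clip on a keyframe, which this sketch ignores.

```python
from collections import deque


class ShadowBuffer:
    """Hypothetical rolling buffer holding the last `window_seconds` of frames."""

    def __init__(self, window_seconds=20 * 60):
        self.window_seconds = window_seconds
        self._frames = deque()  # entries are (timestamp_seconds, encoded_bytes)

    def __len__(self):
        return len(self._frames)

    def push(self, timestamp, encoded_frame):
        """Append a newly encoded frame and discard anything outside the window."""
        self._frames.append((timestamp, encoded_frame))
        cutoff = timestamp - self.window_seconds
        while self._frames and self._frames[0][0] <= cutoff:
            self._frames.popleft()

    def save_last(self, seconds):
        """Return the frames from the most recent `seconds` of footage."""
        if not self._frames:
            return []
        newest = self._frames[-1][0]
        return [frame for t, frame in self._frames if t > newest - seconds]
```

The point of the design is that recording cost is paid continuously while storage stays bounded: old frames fall off the front of the queue as new ones arrive, and the user only decides after the fact how much of the window to keep.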

NVIDIA hasn’t begun external beta testing of ShadowPlay yet, so for the moment all we have to work from is screenshots and descriptions. The big question right now is what the resulting quality will be like. NVIDIA’s hardware encoder does have some limitations that are necessary for real-time encoding, so as we’ve seen in the past with qualitative looks at NVIDIA’s encoder and offline H.264 encoders like x264, there is a quality tradeoff if everything has to be done in hardware in real time. As such ShadowPlay may not be the best tool for reference quality productions, but for the YouTube/Twitch.tv generation it should be more than enough.

Anyhow, ShadowPlay is expected to be released sometime this summer. But since 95% of the software ShadowPlay requires is also required for the SHIELD client, we wouldn’t be surprised if ShadowPlay was released shortly after a release quality version of the SHIELD client is pushed out, which may come as early as June alongside the SHIELD release.

Reasons: Why NVIDIA Cards Throttle

The final software announcement from NVIDIA to coincide with the launch of the GTX 780 isn’t a software product in and of itself, but rather an expansion of NVIDIA’s 3rd party hardware monitoring API.

One of the common questions/complaints about GPU Boost that NVIDIA has received over the last year is about why a card isn’t boosting as high as it should be, or why it suddenly drops down a boost bin or two for no apparent reason. For technically minded users who know the various cards’ throttle points and specifications this isn’t too complex – just look at the power consumption, GPU load, and temperature – but that’s a bit much to ask of most users. So starting with the recently released 320.14 drivers, NVIDIA is exposing a selection of flags through their API that indicate what throttle point is causing throttling or otherwise holding back the card’s clockspeed. There isn’t an official name for these flags, but “reasons” is as good as anything else, so that’s what we’re going with.

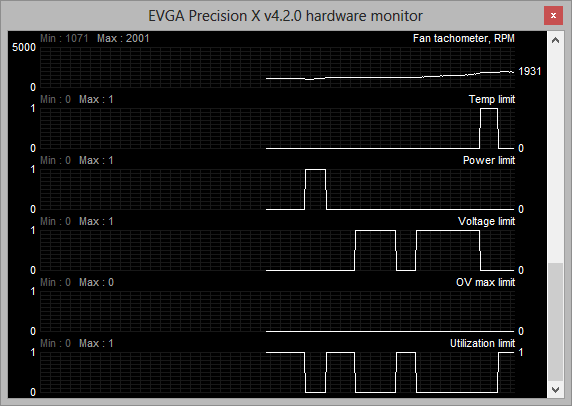

The reason flags are a simple set of 5 binary flags that NVIDIA’s driver uses to indicate why it isn’t increasing the clockspeed of the card further. These flags are:

- Temperature Limit – The card is at its temperature throttle point

- Power Limit – The card is at its global power/TDP limit

- Voltage Limit – The card is at its highest boost bin

- Overvoltage Max Limit – The card is at its absolute maximum voltage limit (“if this were to occur, you’d be at risk of frying your GPU”)

- Utilization Limit – The current workload is not high enough that boosting is necessary

As these are simple flags, it’s up to 3rd party utilities to decide how they want to present these flags. EVGA’s Precision X, which is NVIDIA’s utility of choice for sampling new features to the press, simply records the flags like it does the rest of the hardware monitoring data, and this is likely what most programs will do.
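For a sense of what a monitoring utility might do with these flags, here is a minimal sketch that decodes a bitmask into the five reason names listed above. The bit positions, the `Reason` enum, and the `describe` helper are our own assumptions for illustration; NVIDIA has not published the layout in anything we've seen, and this is not the actual API.

```python
from enum import IntFlag


class Reason(IntFlag):
    """Hypothetical bit assignments for the five reason flags."""
    TEMPERATURE_LIMIT = 1 << 0
    POWER_LIMIT = 1 << 1
    VOLTAGE_LIMIT = 1 << 2
    OVERVOLTAGE_MAX_LIMIT = 1 << 3
    UTILIZATION_LIMIT = 1 << 4


def describe(mask):
    """Return the names of every reason flag set in an integer mask."""
    return [flag.name for flag in Reason if mask & flag]


# Example: a card that is both temperature and power limited.
print(describe(0b00011))
```

Because the flags are independent bits, more than one can be set at once, which is why a utility would log the whole mask alongside clockspeed and temperature rather than a single "reason" value.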

NVIDIA is hoping that the reason flags will help users better understand why their card isn’t boosting as high as they’d like. At the same time, the prevalence of GPU Boost 2.0 and its much higher reliance on temperature makes exposing this data all the more helpful, especially for overclockers who would like to know what attribute they need to turn up to unlock more performance.

155 Comments

mac2j - Thursday, May 23, 2013

The problem with $650 vs $500 for this price point is this: I can get 2 x 7950s for <$600 - that's a setup that destroys a 780 for less money.

Even if you're single-GPU limited $250 is a lot of extra cash for a relative small amount of performance gain.

Ytterbium - Thursday, May 23, 2013

I'm disappointed they decided to cut the compute to 1/24 vs 1/3 in Titan, AMD is much better value for compute tasks.

BiffaZ - Friday, May 24, 2013

Except much consumer (@home type) compute is SP not DP so it won't make much difference. SP performance is around equal or higher than AMD's in 780.

Nighyal - Thursday, May 23, 2013

I don't know if this is possible but it would be great to see a benchmark that showed power, noise and temperature at a standard work load. We can get an inferred idea of clock per watt performance but when you're measuring a whole system other factors come into play (you mentioned CPU loads scaling with increased GPU performance).

My interest in this comes from living in a hot climate (Australia) where a computer can throw out a very noticeable amount of heat. The large majority of my usage is light gaming (LoL) but I occasionally play quite demanding single player titles which stretches the legs of my GPU. The amount of heat thrown out is directly proportional to power draw so to be able to clearly see how many less watts a system requires for a controlled work load would be a handy comparison for me.

TL:DR - Please also measure temperature, noise and power at a controlled workload to isolate clock per watt performance.

BiggieShady - Friday, May 24, 2013

Kudos on the FCAT and the delta percentages metrics. So 32,2% for 7990 means that on average one frame is present 32,2% more time than the next. Still, it is only an average. Great extra info would be to show same metrics that averages only the deltas higher then the threshold delta, and display it on the graph with varying thresholds.

flexy - Friday, May 24, 2013

NV releases a card with a ridiculous price point of $1000. Then they castrate the exact same card and give it a new name, making it look like it's a "new card" and sell it cheaper than their way overpriced high end card. Which, of course, is a "big deal" (sarcasm) given the crazy price of Titan. So or so, I don't like what NV does, in the slightest.

Many ages ago, people could buy *real* top of the line cards which always cost about $400-$500, today you pay $600 for "trash cards" which didn't make it into production for Titan due to sub-par chips. Nvidia: "Hey, let's just make-up a new card and sell those chips too, lols"

Please AMD, help us!!

bds71 - Friday, May 24, 2013

for what it's worth, I would have like to have seen the 780 *truly* fill the gap between the 680 and titan by offering not only the gaming performance, but ALSO the compute performance - if they would have done a 1/6 or even 1/12!! to better fill the gap and round out the performance all around I would HAPPILY pay 650 for this card. as it is, I already have a 690, so I will simply get another for 4k gaming - but a comparison between 3x 780's and 2 690's (both very close to $2k) at 8Mpixels+ resolution would be extremely interesting. note: 3x 30" monitors could easily be configured for 4800x2560 resolution via NVidia surround or eyefinity - and I, for one, would love to see THAT review!!

flexy - Friday, May 24, 2013

Well compute performance is the other thing, along with their questionable GPU throttle aka "boost" (yeah right) technology. Paying premium for such a card and then weak compute performance in exchange compared to older gen cards or the AMD offerings... Seriously, there is a lot to not like about Kepler, at least from an enthusiast point of view. I hope that NV doesn't continue that route in the future with their cards becoming less attractive while prices go up.

EJS1980 - Wednesday, May 29, 2013

Cynical much?

ChefJeff789 - Friday, May 24, 2013

Glad to see the significant upgrade. I just hope that AMD forces the prices back down again soon. I hope the AMD release "at the end of the year" is closer to September than December. It'll be interesting to see how they stack up. BTW, I have shied away from AMD cards ever since I owned an X800 and had SERIOUS issues with the catalyst drivers (constant blue-screens, had to do a Windows clean-install to even get the card working for longer than a few minutes). I know this was a long time ago, and I've heard from numerous people that they're better now. Is this true?