NVIDIA GeForce GTX 780 Review: The New High End

by Ryan Smith on May 23, 2013 9:00 AM EST

Software: GeForce Experience, Out of Beta

Along with the launch of the GTX 780 hardware, NVIDIA is also using this opportunity to announce and roll out new software. Though they are (and always will be) fundamentally a hardware company, NVIDIA has been finding that software is increasingly important to the sales of their products. As a result, the company has taken on several software initiatives over the years, both on the consumer side and the business side. To that end, the products launching today are essentially the spearhead of a larger NVIDIA software ecosystem.

The first item on the list is GeForce Experience, NVIDIA’s game settings advisor. You may remember GeForce Experience from the launch of the GTX 690, which is when it was first announced. The actual rollout was slower than NVIDIA projected, taking just over a year to go from announcement to final release. Nevertheless, there is a light at the end of the tunnel: with version 1.5, GeForce Experience is finally out of beta and is being qualified as release quality.

So what is GeForce Experience? GFE is, in a nutshell, NVIDIA’s game settings advisor. The concept itself is not new: games have auto-detected hardware and tried to choose appropriate settings, and even NVIDIA has toyed with the concept before with their Optimal Playable Settings (OPS) service. The difference between those implementations and GFE comes down to who’s doing the work of figuring this out, and how much work is being done.

With OPS, NVIDIA was essentially writing out recommended settings by hand based on human play testing. That process is of course slow, making it hard to cover a wide range of hardware and to get settings out for new games in a timely manner. Meanwhile, with auto-detection built into games, the quality of the recommendations is not a particular issue, but most games based their automatic settings around a list of profiles, which means most built-in auto-detection routines were fouled up by newer hardware they did not recognize. Simply put, it doesn’t do NVIDIA any good if a graphical showcase game like Crysis 3 selects the lowest quality settings because it doesn’t know what a GTX 780 is.
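To make the failure mode concrete, here is a minimal sketch of profile-based auto-detection. The profile table, preset names, and the `pick_preset` function are hypothetical and are not taken from any real game; the point is only that a lookup keyed on known hardware has nothing sensible to return for a card released after the game shipped.

```python
# Hypothetical profile table a game might ship with (illustrative entries only).
KNOWN_GPU_PROFILES = {
    "GeForce GTX 580": "high",
    "GeForce GTX 680": "very_high",
}


def pick_preset(gpu_name, fallback="lowest"):
    """Return the preset for a known GPU, or the fallback for an unknown one."""
    return KNOWN_GPU_PROFILES.get(gpu_name, fallback)


if __name__ == "__main__":
    # A card that postdates the table falls through to the fallback preset.
    print(pick_preset("GeForce GTX 680"))
    print(pick_preset("GeForce GTX 780"))
```

A conservative fallback is a reasonable choice when the hardware is unknown, which is exactly why a newer and faster card can end up with worse settings than its predecessor.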

NVIDIA’s solution of choice is to take on most of this work themselves, and then move virtually all of it to automation. From a business perspective this makes great sense for NVIDIA as they already have the critical component for such a service, the hardware. NVIDIA already operates large GPU farms in order to test drivers, a process that isn’t all that different from what they would need to do to automate the search for optimal settings. Rather than regression testing and looking for errors, NVIDIA’s GPU farms can iterate through various settings on various GPUs in order to find the best combination of settings that can reach a playable level of performance.

By iterating through the massive matrix of settings most games offer, NVIDIA’s GPU farms can do most of the work required. What’s left for humans is writing test cases for new games, something that is already necessary for driver/regression testing, and then identifying which settings are more desirable from a quality perspective so that those can be weighted and scored in the benchmarking process. This means that it’s not entirely a human-free experience, but having a handful of engineers writing test cases and assigning weights is a much more productive use of time than having humans test everything by hand, as was the case with OPS.
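The search described above can be sketched as a brute-force sweep over a settings matrix. This is an illustration under stated assumptions and not NVIDIA’s actual implementation: the setting names, quality weights, frame-time costs, the 40 fps target, and the `simulated_benchmark` stand-in are all hypothetical. In a real pipeline the benchmark step would be an actual run on farm hardware.

```python
from itertools import product

# Hypothetical quality weights per option (higher = more desirable).
SETTINGS = {
    "texture_quality": {"low": 0, "medium": 2, "high": 4},
    "shadows": {"off": 0, "on": 3},
    "anti_aliasing": {"none": 0, "fxaa": 1, "msaa4x": 3},
}

# Hypothetical frame-time cost in milliseconds per option.
COST_MS = {
    "texture_quality": {"low": 1.0, "medium": 3.0, "high": 6.0},
    "shadows": {"off": 0.0, "on": 5.0},
    "anti_aliasing": {"none": 0.0, "fxaa": 1.0, "msaa4x": 8.0},
}

BASE_FRAME_MS = 5.0  # assumed fixed per-frame cost


def simulated_benchmark(combo, gpu_speed):
    """Stand-in for a real benchmark run; returns frames per second."""
    frame_ms = BASE_FRAME_MS + sum(COST_MS[n][c] for n, c in combo.items())
    return 1000.0 / (frame_ms / gpu_speed)


def best_playable_settings(gpu_speed, target_fps=40.0):
    """Try every combination; keep the highest-weighted one that is playable."""
    names = list(SETTINGS)
    best, best_score = None, -1
    for choices in product(*(SETTINGS[n] for n in names)):
        combo = dict(zip(names, choices))
        if simulated_benchmark(combo, gpu_speed) < target_fps:
            continue  # not playable on this GPU, discard
        score = sum(SETTINGS[n][c] for n, c in combo.items())
        if score > best_score:
            best, best_score = combo, score
    return best  # None if nothing reaches the target


if __name__ == "__main__":
    for speed in (1.0, 0.5, 0.1):
        print(speed, best_playable_settings(speed))
```

The human-assigned weights are what decide between two playable combinations, which mirrors the division of labor in the text: engineers supply the weights and test cases, and the machines do the sweep.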

Moving on, all of this feeds into NVIDIA’s GFE backend service, which in turn feeds the frontend in the form of the GFE client. The GFE client has a number of features (which we’ll get into in a moment), but for the purposes of GFE its primary role is to find games on a user’s computer, pull optimal settings from NVIDIA, and then apply those settings as necessary. All of this is done through a relatively straightforward UI, which lists the detected games, the games’ current settings, and NVIDIA’s suggested settings.

The big question of course is whether GFE’s settings are any good, and in short the answer is yes. NVIDIA’s settings are overall reasonable, and more often than not have closely matched the settings we use for benchmarking. I’ve noticed that they do have a preference for FXAA and other pseudo-AA modes over real AA modes like MSAA, but at this point that’s probably a losing battle on my part given the performance hit of MSAA.

For casual users, NVIDIA is expecting this to be a one-stop solution. Casual users will let GFE go with whatever it thinks are the best settings, and as long as NVIDIA has done their profiling right, users will get the best mix of quality at an appropriate framerate. For power users, on the other hand, the expectation isn’t necessarily that they will stick with GFE’s recommended settings, but rather that GFE will provide a solid baseline to work from. Rather than diving into a new game blindly, power users can start with GFE’s recommended settings and then turn things down if the performance isn’t quite high enough, or exchange some settings for others if they favor a different tradeoff in quality. On a personal note, this exactly matches what I’ve been using GFE for since the earlier betas landed in our hands, so it seems like NVIDIA is on the mark when it comes to power users.

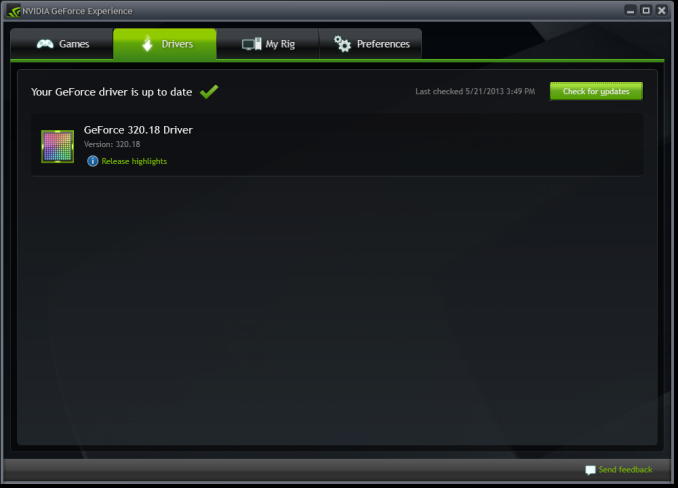

With all of that said, GeForce Experience isn’t going to be a stand-alone game optimization product but rather the start of a larger software suite for consumers. GeForce Experience has already absorbed the NVIDIA Update functionality that previously existed as a small optional install in NVIDIA’s drivers. It’s from here that NVIDIA is going to be building further software products for GeForce users.

The first of these expansions will be for SHIELD, NVIDIA’s handheld game console launching next month. One of SHIELD’s major features is the ability to stream PC games to the console, which in turn requires a utility running on the host PC to provide the SHIELD interface, control mapping, and of course video encoding and streaming. Rather than roll that out as a separate utility, that functionality will be built into future versions of GeForce Experience.

To that end, with the next release of drivers for the GTX 780, GeForce Experience will be bundled with NVIDIA’s drivers, similar to how NVIDIA Update is today. Like NVIDIA Update it will be an optional-but-default item, so users can opt out of it, but if the adoption is anything like NVIDIA Update then the expectation is that most users will end up installing GFE.

It would be remiss of us to not point out the potential for bloat here, but we’ll have to see how this plays out. In terms of file size GeForce Experience is rather tiny at 11MB (versus 169MB for the 320.14 driver package), so after installer overhead is accounted for it should add very little to the size of the GeForce driver package. Similarly it doesn’t seem to have any real appetite for system resources, but this is the wildcard since it’s subject to change as NVIDIA adds more functionality to the client.

155 Comments

SymphonyX7 - Thursday, May 23, 2013

*mildly/narrowly trailing the GTX 680

chizow - Thursday, May 23, 2013

AMD released some significant driver updates in ~Oct 2012, branded "Never Settle" drivers that did boost GCN performance significantly, ~10-20% in some cases where they were clearly deficient relative to Nvidia parts. It was enough to make up the difference in a lot of cases or extend the lead to where the GE is generally faster than the 680.

On the flipside, some of AMD's performance claims, particularly with CF, have come under fire due to concerns about microstutter and frame latency, i.e. the ongoing runt frame saga.

Vayra - Thursday, May 23, 2013

Drivers possibly?

kallogan - Thursday, May 23, 2013

High end overpriced gpu again ! Next !

wumpus - Thursday, May 23, 2013

Except that the 780 is nothing more than a Titan with even more cuda performance disabled. Presumably, they are expecting to get Titan sales to people interested in GPU computing, if only for geeklust/boasting.

wumpus - Thursday, May 23, 2013

My above comment was supposed to be a reply. Ignore/delete if possible.

ifrit39 - Thursday, May 23, 2013

Shadow Play is the most interesting news here. It costs a not-insignificant amount of money to buy a decent capture card that will record HD video. This is a great alternative as it requires no extra hardware and has little CPU/GPU overhead. Anything that ends up on the net will be compressed by youtube or other service anyway. I can't wait to remove fraps and install shadow play.

ahamling27 - Saturday, May 25, 2013

Fraps isn't the best, but they somehow have the market cornered. Look up Bandicam, I use it exclusively and I get great captures at a fraction of the size. Plus they aren't cut up into 4 gig files. It has at least 15x more customization, like putting watermarks in your capture, or if you do like to segment your files you can have it do that at any size or time length you want. Plus you can record two sound sources at once, like your game and mic, or your game and whatever voice chat software you use.

Anyway, I probably sound like I work for them now, but I can assure you I don't. This Shadow Play feature is definitely piquing my interest. If it's implemented wisely, it might just shut all the other software solutions down.

garadante - Thursday, May 23, 2013

There were two things that instantly made me dislike this card, much as I've liked Nvidia in the past: completely disabling the compute performance down to 600 series levels which was the reason I was more forgiving towards AMD in the 600/7000 series generation, and that they've priced this card at $650. If I remember correctly, the 680 was priced at $500-550 at launch, and that itself was hard to stomach as it was and still is widely believed GK104 was meant to be their mid-range chip. This 780 is more like what I imagined the 680 having been and if it launched at that price point, I'd be more forgiving.

As it is... I'm very much rooting for AMD. I hope with these new hires, of which Anandtech even has an article of their new dream team or some such, that AMD can become competitive. Hopefully the experience developers get with their kind-of-funky architecture with the new consoles, however underwhelming they are, brings software on the PC both better multithreaded programming and performance, and better programming and performance to take advantage of AMD's module scheme. Intel and Nvidia both need some competition so we can get this computer hardware industry a bit less stagnated and better for the consumer.

EJS1980 - Tuesday, May 28, 2013

The 680 was $500 at launch, and was the main reason why AMD received so much flak for their 7970 pricing. At the time it launched, the 680 blew the 7970 away in terms of gaming performance, which was the reason AMD had to respond with across the board price drops on the 7950/70, even though it took them a few months.