Choosing a Gaming CPU: Single + Multi-GPU at 1440p, April 2013

by Ian Cutress on May 8, 2013 10:00 AM EST

Sleeping Dogs

While not necessarily a game on everybody’s lips, Sleeping Dogs is a strenuous game with a pretty hardcore benchmark that scales well with additional GPU power. The team over at Adrenaline.com.br deserve credit for making an easy-to-use benchmark GUI, allowing a numpty like me to charge ahead with a set of four 1440p runs at maximum graphical settings.

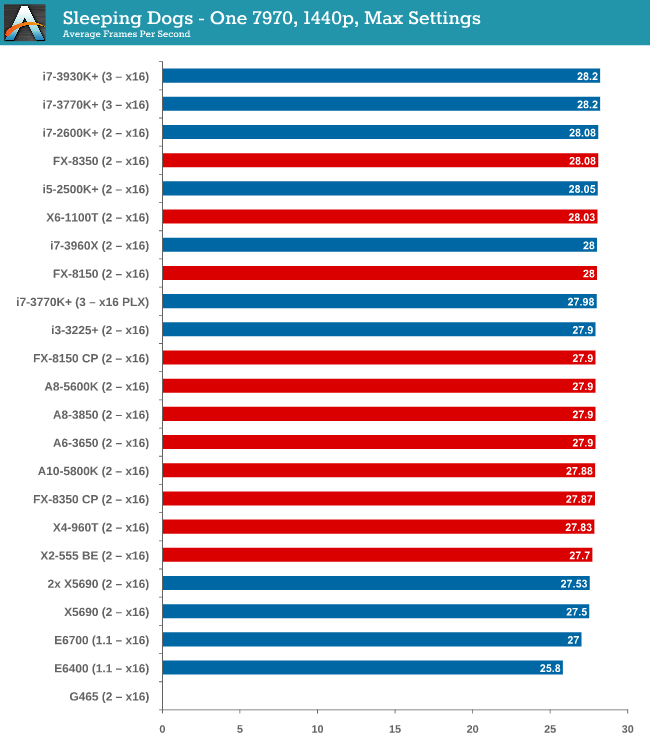

One 7970

Sleeping Dogs seems to tax the CPU so little that the only CPU to fall behind, and then only by the smallest of margins, is the E6400 (plus the G465, which would not run the benchmark at all). Intel visually takes all the top spots, but AMD is right in the mix, with less than 0.5 FPS separating an X2-555 BE and an i7-3770K.

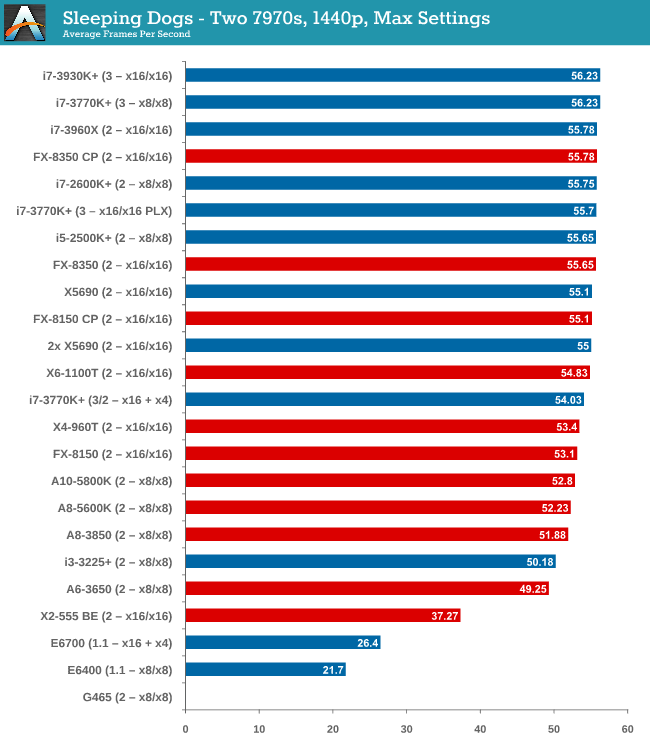

Two 7970s

A split starts to develop between Intel and AMD again, although you would be hard-pressed to choose between the CPUs, as everything above an i3-3225 scores 50-56 FPS. The X2-555 BE unfortunately drops off, suggesting that Sleeping Dogs is a fan of extra cores and this little dual-core CPU is lacking.

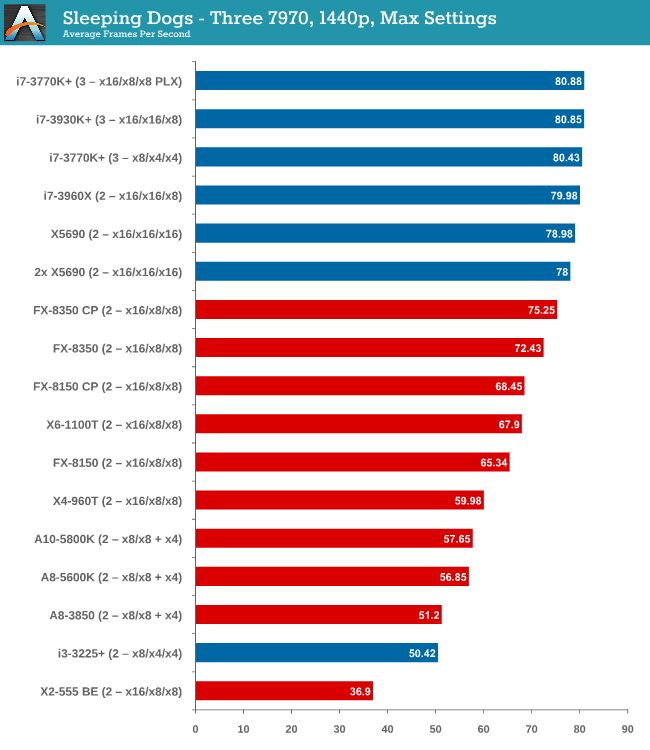

Three 7970s

At three GPUs the gap is clear, with the best Intel processors over 10% ahead of the best AMD. Neither PCIe lane allocation nor memory seems to play a part; it is simply a case of thread count first, then single-threaded performance.

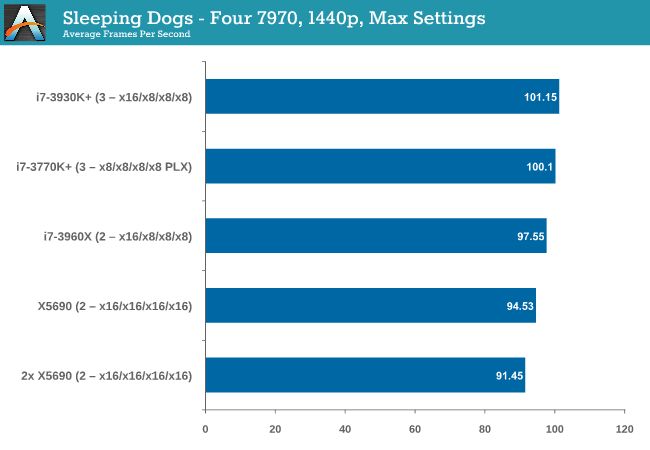

Four 7970s

Despite our Beast machine having double the threads, an i7-3960X in PCIe 3.0 mode takes top spot.

It is worth noting the scaling in Sleeping Dogs. The i7-3960X moved from 28.2 -> 56.23 -> 80.85 -> 101.15 FPS, with the step from three to four cards adding roughly 71% of a single card's performance. This speaks of a well-written game more than anything.
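As a quick sanity check on those numbers, the short sketch below works out how much each additional card adds, expressed as a percentage of the single-card result (100% would be perfect linear scaling). It only uses the rounded i7-3960X figures quoted above; the helper name `marginal_scaling` is hypothetical and made up for illustration. From these rounded values the fourth card works out to just under 72% of one card, consistent with the roughly 71% quoted once rounding is allowed for.

```python
# i7-3960X FPS in Sleeping Dogs at 1440p, as quoted in the text
# (index 0 = one HD 7970, index 3 = four HD 7970s).
fps = [28.2, 56.23, 80.85, 101.15]


def marginal_scaling(results):
    """Hypothetical helper: for each extra GPU, return the FPS it adds
    as a percentage of the single-GPU result."""
    single = results[0]
    return [
        (results[i] - results[i - 1]) / single * 100
        for i in range(1, len(results))
    ]


# Per-card contribution: 2nd, 3rd and 4th GPU.
for n, pct in enumerate(marginal_scaling(fps), start=2):
    print(f"GPU #{n} adds {pct:.1f}% of one card")

# Overall speed-up of four cards over one.
print(f"4 GPUs vs 1 GPU: {fps[-1] / fps[0]:.2f}x")
```

The second and third cards come out at roughly 99% and 87% of a single card respectively, so the drop-off only becomes significant with the fourth GPU.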

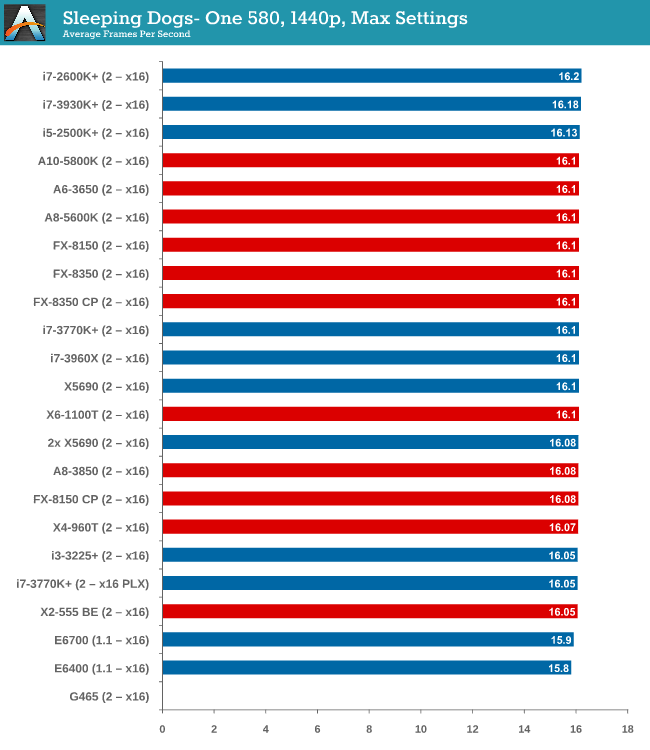

One 580

There is almost nothing to separate the CPUs when using a single GTX 580.

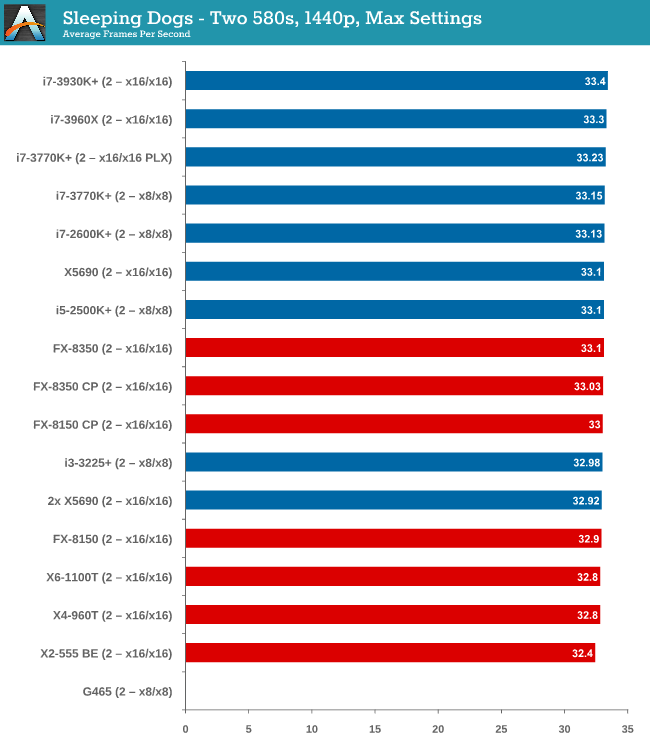

Two 580s

Same thing with two GTX 580s – even an X2-555 BE is within 1 FPS (3%) of an i7-3960X.

Sleeping Dogs Conclusion

Due to its successful multi-GPU scaling and GPU-limited nature, Sleeping Dogs gives almost the same result with any CPU you throw at it. Once you move into three-or-more-GPU territory, it seems that the single-threaded speed of an Intel processor gets you a few more FPS at the end of the day.

242 Comments

Pjotr - Wednesday, May 29, 2013

He wasn't comparing the graphics cards. He was showing how much the CPU matters on an older (580) and a newer (7970) card.

SetiroN - Thursday, May 9, 2013

Is MCT supposed to refer to SMT/Hyperthreading? I believe you're the only person in the world using that acronym. Where did you pull it from?

IanCutress - Wednesday, May 15, 2013

MultiCore Turbo, as per my article on the subject: http://www.anandtech.com/show/6214/multicore-enhan... It is sometimes called MultiCore Enhancement or MultiCore Acceleration. MCT is a compromise name.

DoTT - Thursday, May 9, 2013

Ian, when I saw this article I thought I was hallucinating. It is exactly what I’ve been scouring the web for. I recently purchased a Korean 1440p IPS display (very happy with it), and it is giving my graphics card (4850X2) a workout for the first time. I’m running an older Phenom II platform and have been trying to determine the advantages of just buying a new card versus upgrading the whole platform. Most reviews looking at bottlenecking are quite old and use ridiculously low resolutions. CPU benchmarks by themselves don’t tell me what I want to know; after all, I don’t push the system much except when gaming. This article hit the spot. I wanted to know how a 7950 or 7970 paired with my system would fare. An additional follow-up would be to see whether any slightly lower-end cards (7870, 650Ti, etc.) show CPU-related effects. I have been debating the merits of going with a pair of these cards vs. a single 7970.

Thanks for the great review.

phrank1963 - Thursday, May 9, 2013

I use a G850 and a 7700 and would like to see lower-end testing. I chose the G850 after looking at benchmarks across the web for a long time.

GamerGirl - Thursday, May 9, 2013

very nice and helpful article thx!

smuff3758 - Thursday, May 9, 2013

Thought we were banning you!

spigzone - Thursday, May 9, 2013

Also of note: Digital Foundry polled several developers on whether they would recommend Intel or AMD as the better choice to future-proof one's computer for next-gen games. 100% recommended AMD over Intel.

Obviously there is a lot going on behind the scenes here, with AMD leveraging their console wins and deep involvement at every level of next-gen game development. With that in mind, one might expect Kaveri to be an absolute gaming beast.

http://www.eurogamer.net/articles/digitalfoundry-f...

evonitzer - Thursday, May 9, 2013

Great read. I read through the article and the comments yesterday but had an extra thought today. Sorry if somebody else has already mentioned it, but there seems to be a lot of discussion about MMORPGs and their CPU demands. Perhaps you could do a scaled-down test with 3-4 CPUs to see how they handle an online scenario. It won't be perfectly repeatable, but it could give some general advice about what is being stressed. I would assume it is the CPU that is getting hit hard, but perhaps it is simply rendering that causes the FPS to decrease.

Other than that, I would like to see my CPU tested, of course :) (Athlon II X4 630). But I think I can infer where my hardware puts me: off the bottom of the charts!

Treckin - Thursday, May 9, 2013

Great work; however, I would certainly question two things:

Why did you use 580GTXs? Anyone springing for four GPUs isn't going to go for two-plus-year-old ones (a point you made yourself in the methodology section explaining why power consumption wasn't at issue here).

Why would you test all of this hardware at single-monitor resolutions? Surely you must be aware that people using these setups (unless they are used for extreme overclocking or something similar) are almost certainly gaming at 3x1080p, 3x1440p, or even 3x1600p.

Also of concern to me would be 3D performance, although that may be a bit more niche than even 4-GPU configurations.