The x86 Power Myth Busted: In-Depth Clover Trail Power Analysis

by Anand Lal Shimpi on December 24, 2012 5:00 PM EST

The untold story of Intel's desktop (and notebook) CPU dominance after 2006 has nothing to do with novel approaches to chip design or spending billions on keeping its army of fabs up to date. While both of those are critical components of the formula, it's Intel's internal performance modeling team that plays a major role in providing targets for both the architects and fab engineers to hit. After losing face (and sales) to AMD's Athlon 64 in the early 2000s, Intel adopted a "no more surprises" policy. Intel would never again be caught off guard by a performance upset.

Over the past few years, however, the focus of meaningful performance has shifted. Power consumption is now just as important as absolute performance. Intel has been slowly waking up to this as it adapts to the new ultra mobile world. One of the first things to change was the scope and focus of its internal performance modeling. User experience (quantified through high speed cameras mapping frame rates to user survey data) and power efficiency are now both incorporated into all architecture targets going forward. Building its next-generation CPU cores no longer means picking a SPECCPU performance target and working towards it, but delivering a certain user experience as well.

Intel's role in the industry has started to change. It worked very closely with Acer on bringing the W510, W700 and S7 to market. With Haswell, Intel will work even closer with its partners - going as far as to specify other, non-Intel components on the motherboard in pursuit of ultimate battery life. The pieces are beginning to fall into place, and if all goes according to Intel's plan we should start to see the fruits of its labor next year. The goal is to bring Core down to very low power levels, and to take Atom even lower. Don't underestimate the significance of Intel's 10W Ivy Bridge announcement. Although desktop and mobile Haswell will appear in mid to late Q2-2013, the exciting ultra mobile parts won't arrive until Q3. Intel's 10W Ivy Bridge will be responsible for at least bringing some more exciting form factors to market between now and then. While we're not exactly at Core-in-an-iPad level of integration, we are getting very close.

To kick off what is bound to be an exciting year, Intel made a couple of stops around the country showing off that even its existing architectures are quite power efficient. Intel carried around a pair of Windows tablets, wired up to measure power consumption at both the device and component level, to demonstrate what many of you will find obvious at this point: that Intel's 32nm Clover Trail is more power efficient than NVIDIA's Tegra 3.

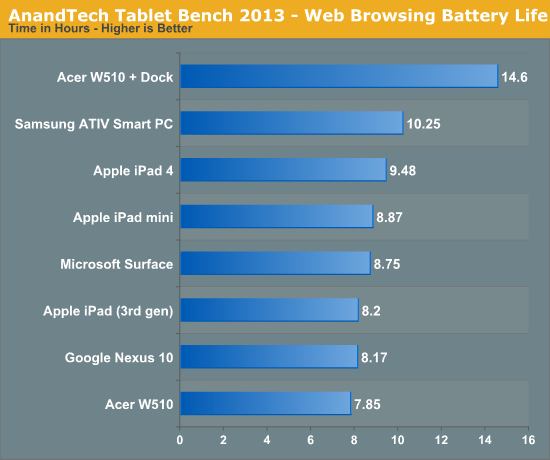

We've demonstrated this in our battery life tests already. Samsung's ATIV Smart PC uses an Atom Z2760 and features a 30Wh battery with an 11.6-inch 1366x768 display. Microsoft's Surface RT uses NVIDIA's Tegra 3 powered by a 31Wh battery with a 10.6-inch, 1366x768 display. In our 2013 wireless web browsing battery life test we showed Samsung with a 17% battery life advantage, despite the 3% smaller battery. Our video playback battery life test showed a smaller advantage of 3%.
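As a rough back-of-the-envelope check, the runtime and capacity figures above can be turned into an implied ratio of average platform power. This is only a sketch: it assumes both batteries deliver their rated capacity and that the 17% figure is a straight runtime ratio, and the function name is made up for illustration.

```python
def implied_power_ratio(capacity_a_wh, capacity_b_wh, runtime_advantage_a):
    """Return average platform power of device A relative to device B.

    Average power = energy / runtime, so
    P_a / P_b = (E_a / E_b) / (t_a / t_b).
    """
    runtime_ratio = 1.0 + runtime_advantage_a  # t_a / t_b
    return (capacity_a_wh / capacity_b_wh) / runtime_ratio


# ATIV Smart PC (30 Wh) vs. Surface RT (31 Wh), 17% longer web browsing runtime
ratio = implied_power_ratio(30.0, 31.0, 0.17)
print(f"Implied average platform power, Samsung vs. Surface RT: {ratio:.2f}x")
```

Under those assumptions the Samsung tablet draws roughly 0.83x the average platform power of the Surface RT in the web browsing test. That number covers the whole device, including two differently sized displays, so it says nothing on its own about the SoCs.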

For us, the power advantage made a lot of sense. We've already proven that Intel's Atom core is faster than ARM's Cortex A9 (even four of them under Windows RT). Combine that with the fact that NVIDIA's Tegra 3 features four Cortex A9s on TSMC's 40nm G process and you get a recipe for worse battery life, all else being equal.

Intel's method of hammering this point home isn't all that unique in the industry. Rather than measuring power consumption at the application level, Intel chose to do so at the component level. This is commonly done by taking the device apart and either replacing the battery with an external power supply that you can measure, or by measuring current delivered by the battery itself. Clip the voltage input leads coming from the battery to the PCB, toss a resistor inline and measure the voltage drop across the resistor to get current (good ol' Ohm's law), then multiply by the supply voltage to calculate power.
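The arithmetic behind the inline resistor trick is short enough to write down. The sketch below is illustrative only: the function name and the example numbers (pack voltage, resistor value, measured drop) are assumptions, not values from Intel's setup.

```python
def shunt_power(v_supply, v_drop, r_shunt_ohms):
    """Return (current_A, load_power_W) for a series sense resistor.

    v_supply is measured on the battery side of the resistor,
    v_drop is the voltage across the resistor itself.
    """
    if r_shunt_ohms <= 0:
        raise ValueError("shunt resistance must be positive")
    current = v_drop / r_shunt_ohms             # Ohm's law: I = V / R
    load_power = (v_supply - v_drop) * current  # power reaching the device
    return current, load_power


# Hypothetical numbers: 7.4 V pack, 10 milliohm resistor, 5 mV measured drop
current, power = shunt_power(7.4, 0.005, 0.010)
print(f"{current:.3f} A, {power:.3f} W")
```

Keeping the resistor small matters: the drop across it is subtracted from what the device sees, so a large resistor would disturb the very thing being measured.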

Where Intel's power modeling gets a little more aggressive is what happens next. Measuring power at the battery gives you an idea of total platform power consumption including display, SoC, memory, network stack and everything else on the motherboard. This approach is useful for understanding how long a device will last on a single charge, but if you're a component vendor you typically care a little more about the specific power consumption of your competitors' components.

What follows is a good mixture of art and science. Intel's power engineers will take apart a competing device and probe whatever looks to be a power delivery or filtering circuit while running various workloads on the device itself. By correlating the type of workload to spikes in voltage in these circuits, you can figure out what components on a smartphone or tablet motherboard are likely responsible for delivering power to individual blocks of an SoC. Despite the high level of integration in modern mobile SoCs, the major players on the chip (e.g. CPU and GPU) tend to operate on their own independent voltage planes.
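One way to picture the correlation step is to score each probed circuit against a known workload pattern and pick the best match. This is a minimal sketch with made-up probe names and readings; it uses a plain Pearson correlation, which is my choice for illustration and not necessarily what Intel's engineers do.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        return 0.0  # a flat signal carries no information about the workload
    return cov / (spread_x * spread_y)


def best_matching_probe(workload, probes):
    """Return the name of the probe whose readings track the workload best."""
    return max(probes, key=lambda name: pearson(workload, probes[name]))


# Hypothetical data: 1 = CPU-heavy phase, 0 = idle phase
cpu_workload = [0, 0, 1, 1, 0, 1]
probes = {
    "probe_a": [0.1, 0.1, 0.9, 1.0, 0.1, 0.8],  # follows the CPU phases
    "probe_b": [0.4, 0.5, 0.4, 0.5, 0.5, 0.4],  # unrelated ripple
}
print(best_matching_probe(cpu_workload, probes))
```

With these invented readings, `probe_a` is the likely CPU rail. Repeating the exercise with a GPU-heavy workload would be the way to pick out the GPU rail.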

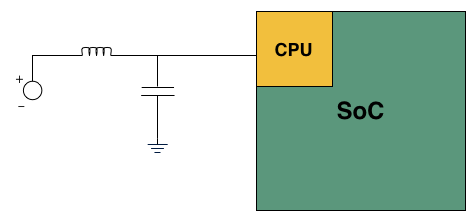

A basic LC filter

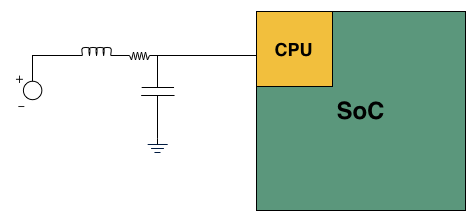

What usually happens is you'll find a standard LC filter (inductor + capacitor) supplying power to a block on the SoC. Once the right LC filter has been identified, all you need to do is lift the inductor, insert a very small resistor (2 - 20 mΩ) and measure the voltage drop across the resistor. With voltage and resistance values known, you can determine current and power. Using good external instruments you can plot power over time and now get a good idea of the power consumption of individual IP blocks within an SoC.
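Turning a recorded trace into numbers looks roughly like this. The sketch assumes two uniformly sampled channels per rail (the rail voltage on the SoC side and the drop across the sense resistor); the function names, sample rate and readings are hypothetical.

```python
def rail_power_trace(v_rail, v_drop, r_sense_ohms):
    """Per-sample power (W) on a rail from paired voltage samples."""
    if r_sense_ohms <= 0:
        raise ValueError("sense resistance must be positive")
    # I = V_drop / R for each sample, then P = V_rail * I
    return [vr * (vd / r_sense_ohms) for vr, vd in zip(v_rail, v_drop)]


def energy_joules(power_w, sample_rate_hz):
    """Trapezoidal integration of a uniformly sampled power trace."""
    dt = 1.0 / sample_rate_hz
    return sum((a + b) / 2.0 * dt for a, b in zip(power_w, power_w[1:]))


# Hypothetical capture: 5 milliohm resistor, 1 V rail, sampled at 1 kHz
v_rail = [1.0, 1.0, 1.0, 1.0, 1.0]
v_drop = [0.001, 0.001, 0.005, 0.005, 0.001]  # brief burst of activity
sample_rate_hz = 1000.0

trace = rail_power_trace(v_rail, v_drop, 0.005)
energy = energy_joules(trace, sample_rate_hz)
duration_s = (len(trace) - 1) / sample_rate_hz
print(f"energy: {energy * 1000:.2f} mJ, average power: {energy / duration_s:.2f} W")
```

For this invented burst the trace integrates to about 2.4 mJ over 4 ms, or about 0.6 W on average. Energy over a fixed task, not just peak power, is what ultimately maps to battery life.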

Basic LC filter modified with an inline resistor

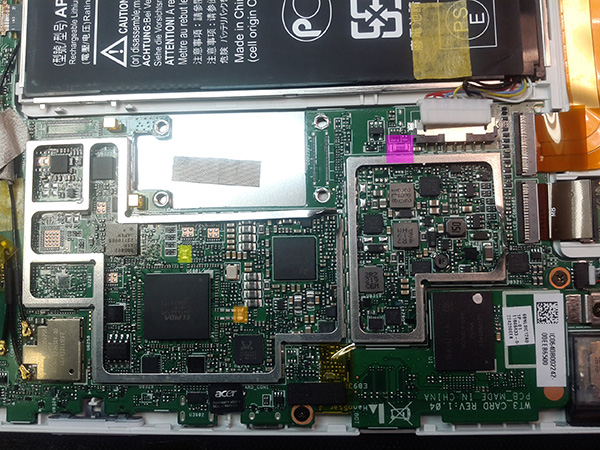

Intel brought one of its best power engineers along with a couple of tablets and a National Instruments USB-6289 data acquisition box to demonstrate its findings. The tablets were Microsoft's Surface RT using NVIDIA's Tegra 3, and Acer's W510 using Intel's own Atom Z2760 (Clover Trail). Both of these were retail samples running the latest software/drivers available as of 12/21/12. The Acer unit in particular featured the latest driver update from Acer (version 1.01, released on 12/18/12) which improves battery life on the tablet. (Remember me pointing out that the W510 seemed to have a problem that caused it to underperform in the battery life department compared to Samsung's ATIV Smart PC? It seems like this driver update fixes that problem.)

I personally calibrated both displays to our usual 200 nits setting and ensured the software and configurations were as close to equal as possible. Both tablets were purchased by Intel, but I verified their performance against my own review samples and noticed no meaningful deviation. I've also attached diagrams of where Intel is measuring CPU and GPU power on the two tablets:

Microsoft Surface RT: The yellow block is where Intel measures GPU power, the orange block is where it measures CPU power

Acer's W510: The purple block is a resistor from Intel's reference design used for measuring power at the battery. Yellow and orange are inductors for GPU and CPU power delivery, respectively.

The complete setup is surprisingly mobile, even relying on a notebook to run SignalExpress for recording output from the NI data acquisition box:

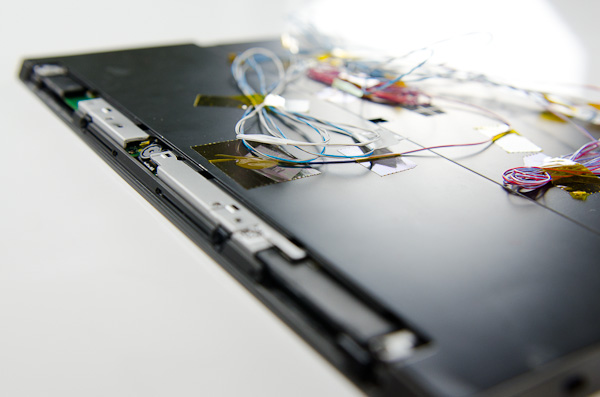

Wiring up the tablets is a bit of a mess. Intel wired up far more than just CPU and GPU; depending on the device and what was easily exposed, you could get power readings on the memory subsystem and things like NAND as well.

Intel only supplied the test setup. For everything you're about to see, I picked and ran whatever I wanted, however I wanted. Comparing Clover Trail to Tegra 3 is nothing new, but the data I gathered is at least interesting to look at. We typically don't get to break out CPU and GPU power consumption in our tests, making this experiment a bit more illuminating.

Keep in mind that we are looking at power delivery on voltage rails that spike with CPU or GPU activity. It's not uncommon to run multiple things off of the same voltage rail. In particular, I'm not super confident in what's going on with Tegra 3's GPU rail although the CPU rails are likely fairly comparable. One last note: unlike under Android, NVIDIA doesn't use its 5th/companion core under Windows RT. Microsoft still doesn't support heterogeneous computing environments, so NVIDIA had to disable its companion core under Windows RT.

163 Comments

snoozemode - Monday, December 24, 2012 - link

The difference this time is huge compared to competitors Intel has faced in its history. It's not just about specs anymore, no matter if it's performance or performance per watt, it's a lot more about economy and margins. Anyone with an ARM license can build a SoC and a lot of new players are coming to the game, especially from China. And players like Samsung who produce their own SoCs for their own devices, soon LG and possibly Apple as well, mean that the days of Intel dictating the terms and dominating the industry are just not going to happen this time.

KitsuneKnight - Monday, December 24, 2012 - link

You mean it's much closer to Intel's early days, when it faced dozens of competing companies, instead of just a couple tiny ones.

snoozemode - Tuesday, December 25, 2012 - link

Still very different because of ARM's business model which is nothing like the past with Intel, AMD, Cyrix, IBM etc. Intel definitely didn't become the giant it is because of products with good value. They are what they are because of continuously raising the bar above everyone else from a performance standpoint at a time when performance was everything and energy efficiency was close to nothing. And when they sometimes have failed to compete from a performance standpoint (ex AMD around 2000) they have resorted to some serious foul play.

ay@m - Thursday, December 27, 2012 - link

well, what if Intel is now measuring the energy efficiency as the new performance benchmark?

that's exactly what this article is pointing out, that Intel's Atom chip is starting to focus on energy efficiency and not focusing on the performance anymore...right?

so i think Intel understands now what it takes to enter the mobile market and what the end user will perceive as the new performance benchmark.

Kidster3001 - Friday, January 4, 2013 - link

You make Intel's point!

Intel will continue to have the performance advantage. They are now going after the power part of the equation. When they are best at both (or at least significantly better at one and same at the other) what do you think the result will be?

Kevin G - Tuesday, December 25, 2012 - link

The problem for Intel is that they'll design the SoC that they want to manufacture and not necessarily what the OEM wants. Intel could over spec an SoC to cover a broader market but at an increased die size and power consumption. Intel's process advantage mitigates those factors but they'll still be at a disadvantage compared to another SoC designer that'll make a product with the bare necessary functional blocks. For Intel to become a major mobile player, they'll have to start listening to OEMs and designing chips specific to them.

yyrkoon - Tuesday, December 25, 2012 - link

Intel already is a major player in the mobile market. They have been far longer than anyone producing ARM based processors.

Granted according to an article I read a few months back. Tablet / smart phone sales are supposedly eclipsing the sales of laptops, and desktops combined. whether true or not, I can see it being possible soon, if not already.

p3ngwin1 - Tuesday, December 25, 2012 - link

yep, it's also about PRICE.

if you've seen the price of the cheap Android tablets and devices, you know Intel will have to either convince us we need to pay a premium for the extra battery-life and performance, or they're going to have to lower their prices to compete aggressively.

you can buy decent SoCs from Allwinner, Rockchip, Nvidia, Qualcomm, etc that deliver "good enough" specs at a decent price, while Intel charges a premium for its processors.

those cheap China SoCs like Rockchip and Allwinner, etc are ridiculously cheap and you can get Android 4.1 Tablets with 1.6GHz dual-core ARM A9 processors with Mali400 GPUs and 7" 1200x720 screens for less than $150.

Intel charges way more for their processors compared to the ARM equivalents.

name99 - Tuesday, December 25, 2012 - link

The price issue is a good point.

A second point is to ask WHY Intel does better in this comparison. I'd love to see a more detailed exploration of this, but maybe the tools don't exist. However one datapoint we do know is this:

(a) SMT on Atom is substantially more effective than on Nehalem/SB/IB. Whereas on the desktop processors it gets you maybe a 25% boost (on IB, worse on older models), on Atom it gets you about 50%. (This isn't surprising because Atom's in-order'ness means there are just that many more execution slots going vacant.)

(b) SMT on Atom (and IB and SB, not on Nehalem) is extremely power efficient.

So one way to run an Atom and get better energy performance than CURRENT ARM devices is to be careful about using only one core, dual threaded, for as long as you can (which is probably almost all the time --- there is very little code that makes use of four cores, or even two cores both at max speed).

I bring this up because this sort of Intel advantage is easily copied. (I'm actually surprised it hasn't already been done --- in my eyes it's a toss up whether Apple's A7 will be a 64-bit Swift or a Swift with SMT. There are valid arguments either way --- it's in Apple's interests to get to 64-bit and a clean switchover as fast as possible, but the power advantages in SMT are substantial, since you can keep your second core asleep so much more often.)

Once you accept the value of companion cores it becomes even more interesting. One could imagine (especially for an Apple with so much control over the OS and CPU) a three-way graduated SOC, with dual-core OoO high performance cores (to give a snappiness to the UI), a single in-order SMT core (for work most of the time), and a low-end in-order core (for those long periods of idle punctuated by waking up to examine the world before going back to sleep). The area costs would be low (because each core could be a quarter or less of the area of its larger sibling); the real pain would be in writing and refining the OS smarts to use such a device well. But the payoff would be immense...

Point is --- I wouldn't write off ARM yet. Intel has process advantages, and some µArch advantages. But the µArch advantages can be copied; and is the process advantage enough to offset the extra cost (in dollars) that it imposes?

Kidster3001 - Friday, January 4, 2013 - link

big.LITTLE is a waste of time. It is a stop-gap ARM manufacturers are using to try and keep the power down. As they increase performance they are not able to keep power in the envelopes they thought they could. It is far more efficient to have cores that are capable of filling all the roles you suggest by dynamically changing how they operate. This is where Intel will succeed, just look at what Haswell can do.