Memory Performance: 16GB DDR3-1333 to DDR3-2400 on Ivy Bridge IGP with G.Skill

by Ian Cutress on October 18, 2012 12:00 PM EST - Posted in Memory, G.Skill, Ivy Bridge, DDR3

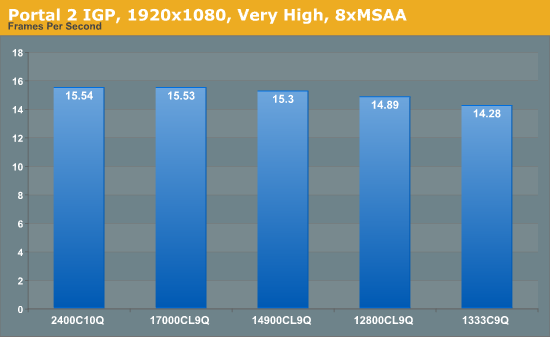

Portal 2

A stalwart of the Source engine, Portal 2 is the big hit of 2011 following on from the original award-winning Portal. In our testing suite, Portal 2 performance should be indicative of CS:GO performance to a certain extent. Here we test Portal 2 at 1920x1080 with High/Very High graphical settings.

Portal 2 mirrors previous testing, although the percentage gains in frame rate are modest: 1333 to 1600 is a 4.3% increase, but 1333 to 2400 is only an 8.8% increase.
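Both Portal 2 figures are quoted against the same DDR3-1333 baseline, so the gain of the 1600 to 2400 step on its own has to be derived rather than read off directly. A minimal sketch of that arithmetic follows; the function names are hypothetical helpers for illustration, and the only inputs are the two percentages quoted above.

```python
def percent_increase(baseline, value):
    """Percentage gain of `value` over `baseline`."""
    return (value / baseline - 1.0) * 100.0


def incremental_gain(gain_a_pct, gain_b_pct):
    """Gain of configuration B over configuration A, when both gains
    were measured against the same baseline (here DDR3-1333)."""
    ratio_a = 1.0 + gain_a_pct / 100.0
    ratio_b = 1.0 + gain_b_pct / 100.0
    return (ratio_b / ratio_a - 1.0) * 100.0


# Figures quoted in the text: 1600 is +4.3% and 2400 is +8.8%, both vs 1333.
step = incremental_gain(4.3, 8.8)
print(f"1600 -> 2400 on its own: {step:.1f}%")
```

Read this way, the step from 1600 to 2400 is worth about as much as the step from 1333 to 1600, despite being a much larger jump in rated speed.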

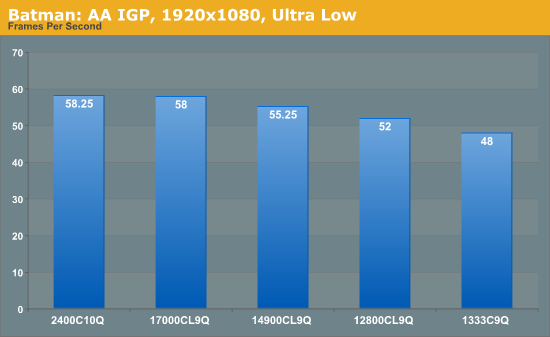

Batman Arkham Asylum

Made in 2009, Batman: AA uses Unreal Engine 3 to create what was called “the Most Critically Acclaimed Superhero Game Ever”, a title awarded in the Guinness World Record books for an average score of 91.67 from reviewers. The game boasts several awards including a BAFTA. Here we use the in-game benchmark at the lowest specification settings without PhysX at 1920x1080. Results are reported to the nearest FPS, so we take four runs and average the final three, as the first result is sometimes 33% higher than normal.
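The run-averaging procedure described above (four runs, discard the first, average the rest) can be sketched as below. This is an illustration only: the function name is hypothetical and the FPS values are made-up placeholders, not measurements from this review.

```python
from statistics import mean


def benchmark_average(fps_runs, discard_first=1):
    """Average FPS after dropping the leading run(s), which can be outliers."""
    if len(fps_runs) <= discard_first:
        raise ValueError("need at least one run left after discarding")
    return mean(fps_runs[discard_first:])


# Hypothetical whole-number FPS results for four runs (not article data);
# the first run is deliberately inflated to mimic the outlier described above.
runs = [40, 30, 31, 29]
print(benchmark_average(runs))
```

Dropping the first run keeps a single inflated result from skewing an average that is built from only a handful of whole-number readings.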

Batman: AA shows some of the largest gains of any application in our testing. Moving from 1333 C9 to 1600 C9 and then to 1866 C9 gives an 8% boost followed by another 7%, ending with a 21% increase in frame rates when moving from 1333 C9 to 2400 C10.

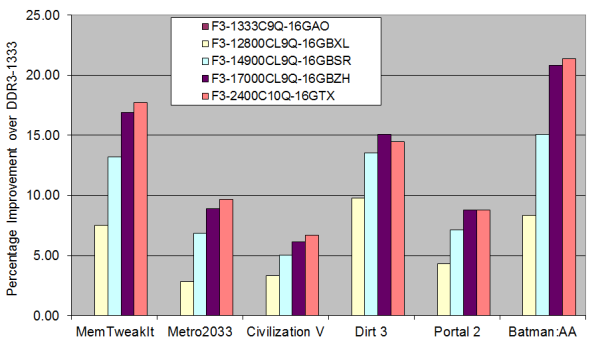

Overall IGP Results

Taking all our IGP results gives us the following graph:

The only game that beats the MemTweakIt predictions is Batman: AA, but most games follow a similar shape of increases, just scaled differently. Bearing in mind the price differences between the kits, if IGP performance is your goal then the 1600 C9 or 1866 C9 kits seem best in terms of bang-for-buck, but 2133 C9 will provide extra performance if the budget stretches that far.

114 Comments

andrewaggb - Friday, October 19, 2012 - link

Fair enough :-)

HisDivineOrder - Thursday, October 18, 2012 - link

You "remember" getting your first memory kit and it was for a E6400. You act like that's just this classic thing.

I remember getting a memory kit for my Celeron 300a. I remember getting a memory kit for my AMD K6 with 3dNow!.

Wow, I'm old.

silverblue - Thursday, October 18, 2012 - link

I remember getting a 64MB PC100 DIMM in 2000... it was pretty much £1 a MB. Made a difference, so it was *gulp* worth it.

StormyParis - Thursday, October 18, 2012 - link

Very interesting read. Thank you.

rscoot - Thursday, October 18, 2012 - link

I remember paying upwards of $400 for a pair of matched 2x512MB Kingston HyperX modules with BH-5 chips. Those were the days! 300MHz at 2-2-2-5 1T in dual channel if you could put enough volts through them. Nowadays I don't think memory matters nearly as much as it did back then.

superflex - Thursday, October 18, 2012 - link

Your first kit was an E6400?

Let me know when you get hair down there.

My first computer was an Apple IIe in 1984, and my first build was an Opteron 170 with 400 MHz 2,2,2,5 DDR.

Magnus101 - Thursday, October 18, 2012 - link

Once again this only confirms that memory speed makes no real world difference.

I mean, who in their right mind uses the integrated GPU on an expensive i7-system to play metro-2033 with single digit framerate?

The only thing standing out is the Winrar compression, but, how many use winrar for compression?

Yes to decompress files it is very common but I only remember using it 2-3 times in my whole life to compress my own files.

So that isn't important to most users, except for the ones that actually use winrar to compress files.

And I don't get why the x264 encoding seemed like a big deal. The differences were very small.

It's been the same story all the way back to the late 90's, where tests between SDR memory at 100 and 133 MHz or at different timings showed no differences in real life applications in contrast to synthetics.

But sure, if you are building a new system and choose between, let's say, 1333 or 1600, then a $5 difference is a no brainer.

Then again, it would make no noticeable difference anyway.

silverblue - Thursday, October 18, 2012 - link

Here's one - will it affect QuickSync in any way?

twoodpecker - Monday, October 22, 2012 - link

I'd be interested in QuickSync results too. In my experience, not proven, it makes a big difference. I adjusted my memory speeds from 1600 to 2000 and noticed at some point that encoding is 25x instead of 15x. This might be due to different factors though, like software optimizations, because I didn't benchmark after adjusting mem speeds.

Geofram - Thursday, October 18, 2012 - link

I don't believe he's implying that single digit frame rates on a game are going to be real-life usable for anyone. I believe the point of the test was simply: "Let's take a system that is generally fast and put it in a situation where the IGP is being stressed. This will be the best-case scenario for faster RAM helping it. Let's see if it does".

To me the idea was not showing everyone everyday situations where faster RAM will help them, instead it was to see where those situations might lie, by setting up a stressful situation and seeing the results. Most of the results were extremely small differences.

I agree it's not a noticeable difference in most cases. It doesn't make me feel like I should get rid of PC1333 RAM. I don't fault the logic for the tests used however. It was nice to see someone actually comparing the slight differences caused by RAM speed.