The iPhone 5's A6 SoC: Not A15 or A9, a Custom Apple Core Instead

by Anand Lal Shimpi on September 15, 2012 2:15 PM EST

Posted in: Smartphones, Apple, Mobile, SoCs, iPhone 5

When Apple announced the iPhone 5, Phil Schiller officially announced what had leaked several days earlier: the phone is powered by Apple's new A6 SoC.

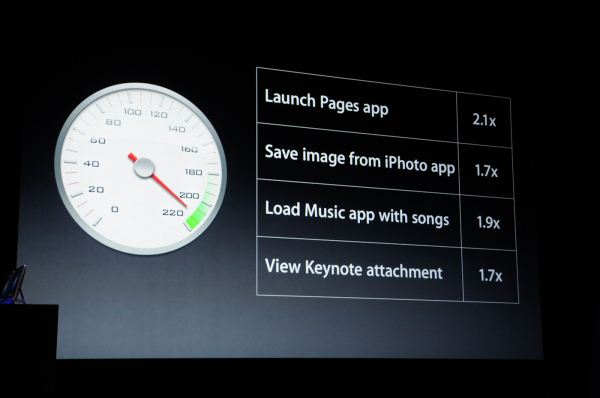

As always, Apple didn't announce clock speeds, CPU microarchitecture, memory bandwidth or GPU details. It did however give us an indication of expected CPU performance:

Prior to the announcement we speculated the iPhone 5's SoC would simply be a higher clocked version of the 32nm A5r2 used in the iPad 2,4. After all, Apple seems to like saving major architecture shifts for the iPad.

However, just prior to the announcement I received some information pointing to a move away from the ARM Cortex A9 used in the A5. Given Apple's reliance on fully licensed ARM cores in the past, the expected performance gains, and the unpublishable information that started all of this, I concluded Apple's A6 SoC likely featured two ARM Cortex A15 cores.

It turns out I was wrong. But pleasantly surprised.

The A6 is the first Apple SoC to use its own ARMv7 based processor design. The CPU core(s) aren't based on a vanilla A9 or A15 design from ARM IP, but instead are something of Apple's own creation.

Hints in Xcode 4.5

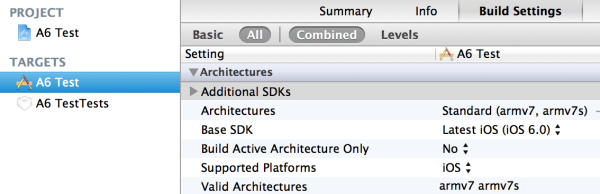

The iPhone 5 will ship with and only run iOS 6.0. To coincide with the launch of iOS 6.0, Apple has seeded developers with a newer version of its development tools. Xcode 4.5 makes two major changes: it drops support for the ARMv6 ISA (used by the ARM11 core in the iPhone 2G and iPhone 3G) and, while keeping support for ARMv7 (used by modern ARM cores), it adds a new architecture target designed to support the new A6 SoC: armv7s.

What's the main difference between the armv7 and armv7s architecture targets for the LLVM C compiler? The presence of VFPv4 support. The armv7s target supports it, the v7 target doesn't. Why does this matter?

Only the Cortex A5, A7 and A15 support the VFPv4 extensions to the ARMv7-A ISA. The Cortex A8 and A9 top out at VFPv3. If you want to get really specific, the Cortex A5 and A7 implement a 16 register VFPv4 FPU, while the A15 features a 32 register implementation. The point is, if your architecture supports VFPv4 then it isn't a Cortex A8 or A9.
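The elimination argument above can be sketched in a few lines of Python. This is only an illustration of the reasoning: the table holds just the five ARM cores and VFP generations named in this article, and the names `VFP_VERSION` and `cores_supporting` are made up for the example.

```python
# VFP generation per stock ARM core, as stated in the article.
# This is not an exhaustive catalog of ARM designs.
VFP_VERSION = {
    "Cortex A5": 4,
    "Cortex A7": 4,
    "Cortex A8": 3,
    "Cortex A9": 3,
    "Cortex A15": 4,
}


def cores_supporting(min_vfp):
    """Return the cores whose FPU is at least the given VFP generation."""
    return sorted(name for name, vfp in VFP_VERSION.items() if vfp >= min_vfp)


# The armv7s target assumes VFPv4, so only VFPv4-capable cores remain.
candidates = cores_supporting(4)
ruled_out = sorted(set(VFP_VERSION) - set(candidates))

print("Still possible:", candidates)
print("Ruled out:", ruled_out)
```

Note that the filter only works over the cores listed in the table. A custom ARMv7 design that implements VFPv4 would pass the same test without appearing in it.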

It's pretty easy to dismiss the A5 and A7 as neither of those architectures is significantly faster than the Cortex A9 used in Apple's A5. The obvious conclusion then is Apple implemented a pair of A15s in its A6 SoC.

For unpublishable reasons, I knew the A6 SoC wasn't based on ARM's Cortex A9, but I immediately assumed that the only other option was the Cortex A15. I foolishly cast aside the other major possibility: an Apple developed ARMv7 processor core.

Balancing Battery Life and Performance

There are two types of ARM licensees: those who license a specific processor core (e.g. Cortex A8, A9, A15), and those who license an ARM instruction set architecture for custom implementation (e.g. ARMv7 ISA). For a long time it's been known that Apple has both types of licenses. Qualcomm is in a similar situation; it licenses individual ARM cores for use in some SoCs (e.g. the MSM8x25/Snapdragon S4 Play uses ARM Cortex A5s) and also licenses the ARM instruction set for use by its own processors (e.g. Scorpion/Krait implement the ARMv7 ISA).

For a while now I'd heard that Apple was working on its own ARM based CPU core, but last I heard Apple was having issues making it work. I assumed that it was too early for Apple's own design to be ready. It turns out that it's not. Based on a lot of digging over the past couple of days, and conversations with the right people, I've confirmed that Apple's A6 SoC is based on Apple's own ARM based CPU core and not the Cortex A15.

Implementing VFPv4 tells us that this isn't simply another Cortex A9 design targeted at higher clocks. If I had to guess, I would assume Apple did something similar to Qualcomm this generation: go wider without going substantially deeper. Remember Qualcomm moved from a dual-issue mostly in-order architecture to a three-wide out-of-order machine with Krait. ARM went from two-wide OoO to three-wide OoO but in the process also heavily pursued clock speed by dramatically increasing the depth of the machine.

The deeper machine plus much wider front end and execution engines drives both power and performance up. Rumor has it that the original design goal for ARM's Cortex A15 was servers, and it's only through big.LITTLE (or other clever techniques) that the A15 would be suitable for smartphones. Given Apple's intense focus on power consumption, skipping the A15 would make sense but performance still had to improve.

Why not just run the Cortex A9 cores from Apple's A5 at higher frequencies? It's tempting (after all, that's what many others have done in the space), but sub-optimal from a design perspective. As we learned during the Pentium 4 days, simply relying on frequency scaling to deliver generational performance improvements results in reduced power efficiency over the long run.

To push frequency you have to push voltage, which has a greater-than-linear impact on power consumption (dynamic power scales roughly with the square of voltage). Running your cores as close as possible to their minimum voltage is ideal for battery life. The right approach to scaling CPU performance is a combination of increasing architectural efficiency (instructions executed per clock goes up), multithreading and conservative frequency scaling. Remember that in 2005 Intel hit 3.73GHz with the Pentium Extreme Edition. Seven years later Intel's fastest client CPU only runs at 3.5GHz (3.9GHz with turbo) but has four times the cores and up to 3x the single threaded performance. Architecture, not just frequency, must improve over time.
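To put rough numbers on the voltage/frequency trade-off, here is a minimal sketch using the textbook CMOS switching-power approximation, P ≈ a·C·V²·f. The operating points are hypothetical and normalized, not measured A5 or A6 values, and the sketch assumes voltage must rise in proportion to frequency, which is a simplification.

```python
def dynamic_power(capacitance, voltage, frequency, activity=1.0):
    """Textbook CMOS switching-power approximation: P = a * C * V^2 * f."""
    return activity * capacitance * voltage ** 2 * frequency


# Hypothetical, normalized operating points (assumption: a 20% clock bump
# needs a 20% voltage bump; real silicon will differ).
baseline = dynamic_power(capacitance=1.0, voltage=1.0, frequency=1.0)
pushed = dynamic_power(capacitance=1.0, voltage=1.2, frequency=1.2)

relative_perf = 1.2 / 1.0            # same architecture: performance tracks clock
relative_power = pushed / baseline   # grows with V^2 * f
perf_per_watt = relative_perf / relative_power

print(f"performance: {relative_perf:.2f}x")
print(f"power:       {relative_power:.2f}x")
print(f"perf/watt:   {perf_per_watt:.2f}x")
```

Under these assumptions the extra clock speed costs disproportionately more power, so efficiency drops. That is the argument for raising instructions per clock instead of relying on frequency alone.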

At its keynote, Apple promised longer battery life and 2x better CPU performance. It's clear that the A6 moved to 32nm but it's impossible to extract 2x better performance from the same CPU architecture while improving battery life over only a single process node shrink.

Despite all of this, had it not been for some external confirmation, I would've probably settled on a pair of higher clocked A9s as the likely option for the A6. In fact, higher clocked A9s was what we originally claimed would be in the iPhone 5 in our NFC post.

I should probably give Apple's CPU team more credit in the future.

The bad news is I have no details on the design of Apple's custom core. Despite Apple's willingness to spend on die area, I believe an A15/Krait class CPU core is a likely target: slightly wider front end, more execution resources, more flexible OoO execution engine, deeper buffers, bigger windows, etc. Support for VFPv4 guarantees a bigger core size than the Cortex A9; it only makes sense that Apple would push the envelope everywhere else as well. I'm particularly interested in frequency targets and whether there's any clever dynamic clock work happening. Someone needs to run Geekbench on an iPhone 5 pronto.

I also have no indication of how many cores there are. I am assuming two, but Apple was careful not to report core count (as it has in the past). We'll get more details as we get our hands on devices in a week. I'm really interested to see what happens once Chipworks and UBM go to town on the A6.

163 Comments

solipsism - Saturday, September 15, 2012 - link

They do own part of Img Tech so that wouldn't be too hard to do, but I wonder if it is necessary as they can likely get Img Tech to build to their specs.

jjj - Saturday, September 15, 2012 - link

Always expected A15. A9 could have been an option; Apple quoted some perf numbers in some tests, and since they are not all that honest it could have been higher clocks combined with faster RAM and much faster NAND.

Custom core is far more interesting since they can build the silicon and the software together and they do have the unit volume to afford it.

Now they got to integrate the baseband soon and more in the next few years.

Lucian Armasu - Saturday, September 15, 2012 - link

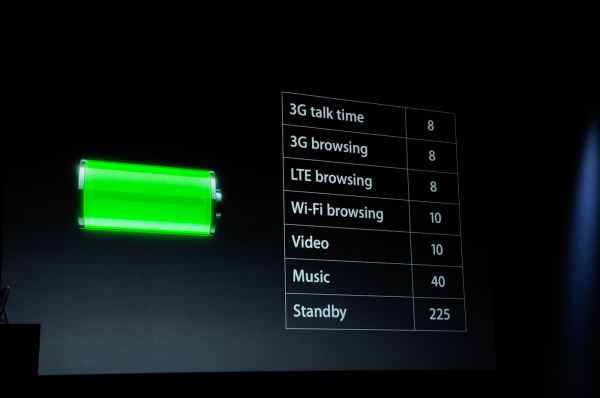

I'm curious what the performance of this will be like. Even though you seem to think that they've focused a lot on power consumption, the power consumption has barely improved in the new iPhone, according to Apple's own numbers. So it remains to be seen if their CPU can compete with Qualcomm's S4 Pro and Cortex A15. My guess is it won't because this is a first for Apple, so it's unlikely they made something better than Qualcomm or ARM in terms of architecture, and knowing Apple they probably kept the frequency low, too (probably around 1.2 GHz per core).

As for the GPU, if they went with PowerVR SGX543 again, that means they won't support OpenGL ES 3.0 in the iPhone for a year, until the next iPhone arrives, even though there will be phones supporting it as soon as this year.

WaltFrench - Saturday, September 15, 2012 - link

I'm not sure that Apple needs to develop something “better than Qualcomm” to out-perform for its devices. It'd seem most high-performance, low-power designs would optimize for the Dalvik JIT instruction stream, which is very likely quite different from what Xcode generates.

After all, the confirmation process seems to have started in Xcode; it's obvious that Apple is attempting to exploit its tight linkage between OS, development tools and silicon.

Given the task-specific nature of CPU optimization, I'm not even sure what instruction mix would even be available for the unbiased analysis you seem to think would be out there.

tipoo - Saturday, September 15, 2012 - link

Krait already outperforms Apple's 2x claim on CPU-only benchmarks; I don't think the A6 will beat Krait there. But once more Apple will have the fastest GPU by a long shot; other phones had just started to get close to the 4S.

I still wonder how after all this time no one else picked up SGX? It's in the PS Vita, but what smartphones besides the iPhone?

Death666Angel - Sunday, September 16, 2012 - link

Qualcomm has Adreno, so they will never use anything else. TI uses SGX graphics in its OMAP3/4 and upcoming OMAP5 SoCs. For example all current 44x0 SoCs have some form of SGX in it (the 4460 can be found in the Galaxy Nexus, the 4470 is in the new Archos tablets etc.). ST-Ericsson will use Rogue in its upcoming Nova SoC. Intel uses the SGX540 and Samsung used SGX in its old Hummingbird SoC.

Death666Angel - Sunday, September 16, 2012 - link

Hit "post" too soon. :D

If you are wondering why no one else uses the multicore variants of the SGX, that is most likely because no one wants to build a SoC that large in the Android world. It costs too much and doesn't have enough benefits. Apple can do that easily as it has enough margins, can make good use of the graphics part because they are coding their own OS and having that graphics part across all their platforms means developers can exploit it easily as well. If you have an Android handset with great graphics developers still need to code for all the others with mediocre graphics and the good graphics might not make the game look better or run better.

tipoo - Sunday, September 16, 2012 - link

It makes sense that no one else wants to create something with that die size as that would be costly for a few reasons, but maybe with 28 and 32nm processes they could at least use the MP2, which Apple has been using at 45nm for a year.

Death666Angel - Sunday, September 16, 2012 - link

Yeah, TI will use MP2 in OMAP5 (SGX544 at 532MHz).

Samsung will likely stay with Mali GPUs, they are doing quite well. Nvidia will stay with their GeForce GPUs as well. Qualcomm has Adreno. Many of the Chinese SoCs are also using Mali this time around. So 3 of the big SoC manufacturers at the high end have no need for SGX multicore solutions in the future. And it seems that Nvidia has the dedicated Android gaming market mostly in their hands. :-)

joelypolly - Saturday, September 15, 2012 - link

I might give Apple a bit more credit since they used to build their own CPUs as well as being the owners of ARM itself in the past. Also the purchase of both PA Semi and Intrinsity should to some degree put Apple on equal footing with Qualcomm.