The GeForce GTX 660 Ti Review, Feat. EVGA, Zotac, and Gigabyte

by Ryan Smith on August 16, 2012 9:00 AM EST

Meet The Gigabyte GeForce GTX 660 Ti OC

Our final GTX 660 Ti of the day is Gigabyte’s entry, the Gigabyte GeForce GTX 660 Ti OC. Unlike the other cards in our review today, this is not a semi-custom card but a fully-custom card, which brings with it some interesting performance ramifications.

GeForce GTX 660 Ti Partner Card Specification Comparison

| | GeForce GTX 660 Ti (Ref) | EVGA GTX 660 Ti Superclocked | Zotac GTX 660 Ti AMP! | Gigabyte GTX 660 Ti OC |
|---|---|---|---|---|
| Base Clock | 915MHz | 980MHz | 1033MHz | 1033MHz |
| Boost Clock | 980MHz | 1059MHz | 1111MHz | 1111MHz |
| Memory Clock | 6008MHz | 6008MHz | 6608MHz | 6008MHz |
| Frame Buffer | 2GB | 2GB | 2GB | 2GB |
| TDP | 150W | 150W | 150W | ~170W |
| Width | Double Slot | Double Slot | Double Slot | Double Slot |
| Length | N/A | 9.5" | 7.5" | 10.5" |
| Warranty | N/A | 3 Year | 3 Year + Life | 3 Year |
| Price Point | $299 | $309 | $329 | $319 |

The big difference between a semi-custom and fully-custom card is of course the PCB; fully-custom cards pair a custom cooler with a custom PCB instead of a reference PCB. Partners can go in a few different directions with custom PCBs, using them to reduce the BoM, reduce the size of the card, or even to increase the capabilities of a product. For their GTX 660 Ti OC, Gigabyte has gone in the last of those directions, using a custom PCB to improve the card.

On the surface the specs of the Gigabyte GeForce GTX 660 Ti OC are relatively close to our other cards, primarily the Zotac. Like Zotac, Gigabyte is pushing the base clock to 1033MHz and the boost clock to 1111MHz, representing a sizable 118MHz (13%) base overclock and a 131MHz (13%) boost overclock respectively. Unlike the Zotac, however, there is no memory overclocking taking place, with Gigabyte shipping the card at the standard 6GHz.
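As a quick check on those percentages, here is a minimal Python sketch that recomputes the overclocks from the clocks listed in the table above. The `overclock` helper is a name made up for this illustration, not part of any tool.

```python
def overclock(reference_mhz: int, card_mhz: int) -> tuple[int, float]:
    """Return the overclock in MHz and as a percentage of the reference clock."""
    delta = card_mhz - reference_mhz
    return delta, 100.0 * delta / reference_mhz


# Reference GTX 660 Ti clocks vs. the Gigabyte (and Zotac) factory clocks.
base_delta, base_pct = overclock(915, 1033)
boost_delta, boost_pct = overclock(980, 1111)

print(f"Base:  +{base_delta}MHz ({base_pct:.1f}%)")
print(f"Boost: +{boost_delta}MHz ({boost_pct:.1f}%)")
```

Both figures round to the 13% quoted above, with the boost overclock being fractionally larger in relative terms.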

What sets Gigabyte apart here in the specs is that they’ve equipped their custom PCB with better VRM circuitry, which means NVIDIA is allowing them to increase their power target from the GTX 660 Ti standard of 134W to an estimated 141W. This may not sound like much (especially since we’re working with an estimate on the Gigabyte board), but as we’ve seen time and time again GK104 is power-limited in most scenarios. A good GPU can boost to higher bins than there is power available to allow it, which means increasing the power target in a roundabout way increases performance. We’ll see how this works in detail in our benchmarks, but for now it’s good enough to say that even with the same GPU overclock as Zotac the Gigabyte card is usually clocking higher.
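To make the power-limit argument concrete, here is a toy Python model of a GPU stepping up through boost bins until it runs out of power headroom. Everything other than the 134W and 141W power targets and the 1033MHz base clock is an assumption chosen for illustration: the 13MHz bin size, the linear power model, the 125W draw at base clock, the 1.5W cost per bin, and the 1215MHz ceiling are all hypothetical and do not describe how NVIDIA's boost algorithm is actually implemented.

```python
BIN_MHZ = 13  # assumed size of one boost step; illustrative only


def highest_sustained_clock(base_mhz, max_boost_mhz, power_target_w,
                            watts_at_base, watts_per_bin):
    """Step up one bin at a time until the next bin would exceed the
    power target or the GPU's highest bin (toy linear power model)."""
    clock, power = base_mhz, watts_at_base
    while (clock + BIN_MHZ <= max_boost_mhz
           and power + watts_per_bin <= power_target_w):
        clock += BIN_MHZ
        power += watts_per_bin
    return clock


# Hypothetical GPU: 125W at the 1033MHz base clock, 1.5W per extra bin,
# and silicon capable of reaching 1215MHz if power allowed it.
for target_w in (134.0, 141.0):
    clock = highest_sustained_clock(1033, 1215, target_w, 125.0, 1.5)
    print(f"{target_w:.0f}W power target -> {clock}MHz")
```

In this sketch the GPU never reaches its highest bin under either target, so the extra 7W (roughly 5%) of headroom translates directly into additional boost bins. That is the roundabout performance gain described above.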

Moving on, Gigabyte’s custom PCB measures 8.4” long, and it doesn’t bear a great resemblance to either the reference GTX 680 PCB or the reference GTX 670 PCB; as near as we can tell it’s completely custom. The layout is nothing fancy, though like the reference GTX 670 the VRMs are located in the front, and as we’ve said before the real significance is the higher power target it allows. Otherwise the memory layout is the same as the reference GTX 660 Ti with 6 chips on the front and 2 on the back. Due to its length we’d normally insist on there being some kind of stiffener for an open air card, but since Gigabyte has put the GPU back far enough, the heatsink mounting alone provides enough rigidity to the card.

Sitting on top of Gigabyte’s PCB is a dual fan version of Gigabyte’s new Windforce cooler. The Windforce 2X cooler on their GTX 660 Ti is a bit of an abnormal dual fan cooler, with a relatively sparse aluminum heatsink attached to unusually large 100mm fans. This makes the card quite large and more fan than heatsink in the process, which is not something we’ve seen before.

The heatsink itself is divided up into three segments over the length of the card, with a pair of copper heatpipes connecting them. The bulk of the heatsink is over the GPU, while a smaller portion is at the rear and an even smaller portion is at the front, which is also attached to the VRMs. The frame holding the 100mm fans is then attached at the top, anchored at either end of the heatsink. Altogether this cooling contraption is both longer and taller than the PCB itself, making the final length of the card nearly 10” long.

Finishing up the card we find the usual collection of ports and connections. This means 2 PCIe power sockets and 2 SLI connectors on the top, and 1 DL-DVI-D port, 1 DL-DVI-I port, 1 full size HDMI 1.4 port, and 1 full size DisplayPort 1.2 on the front. Meanwhile toolless case users will be happy to see that the heatsink is well clear of the bracket, so toolless clips are more or less guaranteed to work here.

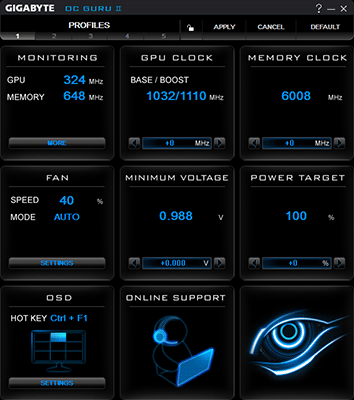

Rounding out the package is the usual collection of power adapters and a quick start guide. While it’s not included in the box or listed on the box, the Gigabyte GeForce GTX 660 Ti OC works with Gigabyte’s OC Guru II overclocking software, which is available on Gigabyte’s website. Gigabyte has had OC Guru for a number of years now, and with this being the first time we’ve seen OC Guru II we can say it’s greatly improved from the functional and aesthetic mess that defined the previous versions.

While it won’t be winning any gold medals, in our testing OC Guru II gets the job done. Gigabyte offers all of the usual tweaking controls (including the necessary power target control), along with card monitoring/graphing and an OSD. Its only real sin is that Gigabyte hasn’t implemented sliders on their controls, meaning that you’ll need to press and hold down buttons in order to dial in a setting. This is less than ideal, especially when you’re trying to crank up the 6000MHz memory clock by an appreciable amount.

Wrapping things up, the Gigabyte GeForce GTX 660 Ti OC comes with Gigabyte’s standard 3 year warranty. Gigabyte will be releasing it at an MSRP of $319, $20 over the price of a reference-clocked GTX 660 Ti and $10 less than the most expensive card in our roundup today.

313 Comments

CeriseCogburn - Sunday, August 19, 2012 - link

If they can't supply it - it cannot lower competitor prices, and can't be bought, so they make little or no money, and everyone else buys the available competitors product.

Why doesn't AMD release a card that drives down the 680's price $170 per card and makes nVidia give away 3 free games with it too ?

That would make too much sense for amd and we consumers and some competition that crushes evil corporate profiteering nVidia, so AMD should do it.

(roll eyes)

To answer your question> nVidia is being nice not draining all the red blood from amd's jugular since amd is bleeding out so badly already that if nVidia took them out a million raging in 3d fanboys would scream for billions in payola in a giant lawsuit they protest for in front of the UN and the IMF and the International Court and the 25k persons traveling EURO unelected power bureaucrats.

So instead of all that terribleness and making amd fans cry, nVidia is nice about it.

Galidou - Tuesday, August 21, 2012 - link

This card at 249$ would be very bad for AMD but not very good for Nvidia either. Considering how close it already is to its bigger brother, it would probably cut a good percentage of gtx 670 sales.

So yeah, 249$ might seem a good price for US but they don't want to harm themselves either.

Belard - Thursday, August 16, 2012 - link

What does TI mean?

Where is the GTX 660? So its really a 670 with a hand chopped off?

ericloewe - Thursday, August 16, 2012 - link

TI means something along the lines of "We'll release a crap version later on that only OEMs will buy, called the GTX 660."

Patflute - Thursday, August 16, 2012 - link

lolwut

Omega215D - Thursday, August 16, 2012 - link

I think it still means "Titanium" version of a chip which was supposedly better than the non-Ti.

MrSpadge - Sunday, August 19, 2012 - link

It means "We can't figure out how to distinguish our products using 3 decimal numbers and up to 3 letters in front of it (or the lack thereof), so we'll add some more letters".

R3MF - Thursday, August 16, 2012 - link

in the anand review of the 450 where Nvidia first showed the lopsided memory bus arrangement it was noted that CUDA apps would not recognise the full memory complement.

has this now been fixed?

Ryan Smith - Thursday, August 16, 2012 - link

Yes. That was fixed almost immediately.

R3MF - Thursday, August 16, 2012 - link

thanks Ryan