The GeForce GTX 660 Ti Review, Feat. EVGA, Zotac, and Gigabyte

by Ryan Smith on August 16, 2012 9:00 AM EST

Battlefield 3

Its popularity aside, Battlefield 3 may be the most interesting game in our benchmark suite for a single reason: it’s the first AAA DX10+ game. It’s been 5 years since the launch of the first DX10 GPUs, and 3 whole process node shrinks later we’re finally at the point where games are using DX10’s functionality as a baseline rather than an addition. Not surprisingly, BF3 is one of the best looking games in our suite, but as with past Battlefield games that beauty comes with a high performance cost.

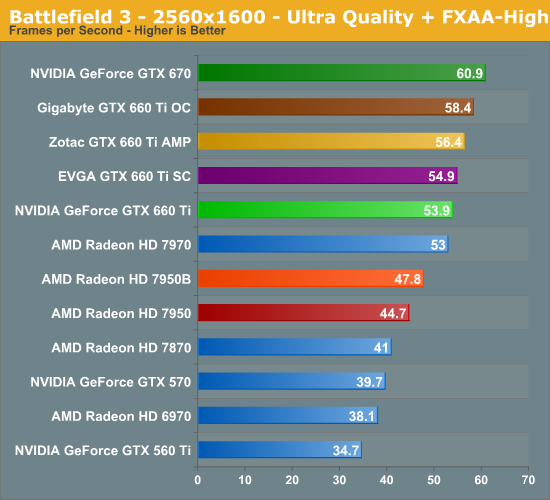

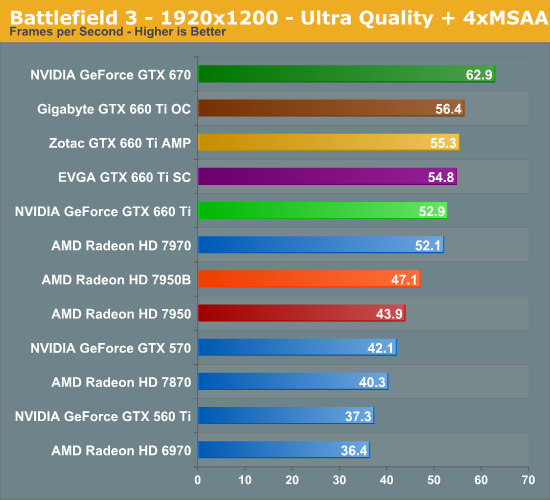

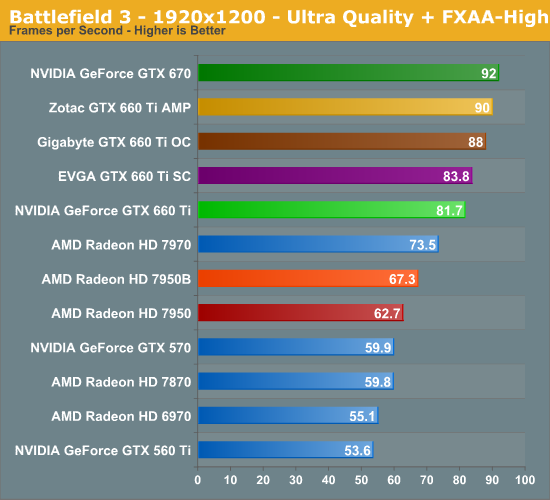

The reduction in memory bandwidth and ROP throughput coming from the GTX 670 comes with roughly an 11% performance cost here, just about splitting the difference between the best and worst case scenarios. This is important for the GTX 660 Ti since it means the card doesn’t surrender NVIDIA’s performance advantage in BF3. At 1920 with FXAA that means the GTX 660 Ti has a huge 30% performance lead over the 7950, and even the 7970 falls behind the GTX 660 Ti. The only real disappointment here is that 1920 with MSAA isn’t quite playable – 53fps means that framerates will bottom out in the mid-20s, which isn’t desirable.

Meanwhile the factory overclocked cards continue to up the ante, and this ends up being another game where factory overclocks offer a decent improvement. Zotac tops the factory cards at 10%, followed by Gigabyte and EVGA. We’re once again seeing the impact of Zotac’s memory overclock, and how in memory bandwidth limited situations it’s more important than Gigabyte’s higher power target, though Gigabyte does come close.

313 Comments

TheJian - Monday, August 20, 2012 - link

Please point me to a 7970 for $360. The cheapest on newegg even after rebate is $410. Nice try though. "I'm pretty disappointed". Why? You got a 30in monitor or something? At 1920x1200 this card beats the 7970 ghz edition in a lot of games. :) Skyrim being one and by 10fps right here in this article...LOL.

Mod to 670 isn't worth it when the shipped cards already beat it (3 of them did here). Remember, you should be looking at 1920x1200 and ignoring Ryan's BS resolution that only 2% or less use (it's a decimal point at steampowered hardware survey). If you're not running at 2560x1600 read the article again ignoring Ryan's comments. It's the best card at 1920x1200, regardless of Ryan's stupid page titles "that darned memory"...ROFL. Why? Still tromps everything at 1920x1200...LOL.

Got anything to say Ryan? Any proof we'll use 2560x1600 in the world? Can you point to anything that says >2% use it? Can you point to a monitor using it that isn't a 27/30in? Raise your hand if you have a 30in...LOL.

JarredWalton - Tuesday, August 21, 2012 - link

http://www.microcenter.com/single_product_results....

That's at least $20 cheaper than what you state.

CeriseCogburn - Thursday, August 23, 2012 - link

That's a whitebox version card only. LOL

TheJian - Friday, August 24, 2012 - link

And the prices just dropped, so yeah, I should be off by ~20 by now :) White box, as stated. No game. Well, dirt showdown don't count it's rated so low ;)

But nothing that states my analysis is incorrect. His recommendations were made based on 2560x1600 even though as proven 98% play 1920x1200 or less and the monitor he pointed me to isn't even sold in the USA. You have to buy it in Korea. With a blank faq page, help is blank, no phone and a gmail acct for help. No returns. Are you going to buy one from out of country from a site like that? Nothing I said wasn't true.

Mr Perfect - Thursday, August 16, 2012 - link

I wonder if any board partners will try making the board symmetrical again by pushing it up to 3GB? It's not like the extra RAM would do any good, but if you could keep an already memory bandwidth starved card humming along at 144GB/s and prevent it from dropping all the way down to 48GB/s, it might help.

CeriseCogburn - Sunday, August 19, 2012 - link

It doesn't drop to 48GB/s, that was just the reviewer's little attack.

You should have noticed the reviewer can't find anything wrong, including sudden loss of bandwidth, in this card, or the prior released nVidia models with a similar weighted setup.

The SPECULATION is what the amd fanboys get into, then for a year, or two, or more, they will keep talking about it, with zero evidence, and talk about the future date when it might matter.... or they might "discover" an issue they have desperately been hunting for.

In the mean time, they'll cover up amd's actual flaws.

It's like the hallowed and holy of holies amd perfect circle algorithm.

After years of the candy love for it, it was admitted it had major flaws in game, with disturbing border lines at shader transitions.

That after the endless praise for the perfect circle algorithm, that, we were told - when push came to shove, and only in obscurity, that no in game advantage for it could be found, never mind the endless hours and tests spent searching for that desperately needed big amd fanboy win...

So that's how it goes here. A huge nVidia advantage is either forgotten about and not mentioned, or actually derided and put down with misinformation and lies, until some amd next release, when it has appeared it is the time that it can finally be admitted that amd has had a huge fault in the exact area that was praised, and nVidia has a huge advantage and no fault even though it was criticized, and now it's okay because amd has fixed the problem in the new release... (then you find out the new release didn't really fix the problem, and a new set of spins and half truths starts after a single mention of what's wrong).

Happened on AA issues here as well. Same thing.

JarredWalton - Tuesday, August 21, 2012 - link

Most games are made to target specific amounts of memory, and often you won't hit the bottlenecks unless you run at higher detail settings. 1920x1200 even with 4xAA isn't likely to hit such limits, which is why the 2560x1600 numbers can tell us a bit more.

Best case for accessing the full 2GB, NVIDIA would interleave the memory over the three 64-bit connections in a 1:1:2 ratio. That means in aggregate you would typically get 3/4 of the maximum bandwidth once you pass 1.5GB of usage. This would explain why the drop isn't as severe at the final 512MB, but however you want to look at it there is technically a portion of RAM that can only be accessed at 1/3 the speed of the rest of the RAM.

The better question to ask is: are we not seeing any major differences because NVIDIA masks this, or because the added bandwidth isn't needed by the current crop of games? Probably both are true to varying degrees.
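
The bandwidth figures traded in this exchange can be sanity-checked with a small model. The sketch below is only an illustration under stated assumptions: three 64-bit controllers at 48GB/s each (144GB/s combined), the first 1.5GB striped across all three, and the final 512MB sitting on a single controller. The function and constant names are hypothetical, and the real interleaving scheme may differ.

```python
# Simplified model of the asymmetric 2GB layout discussed above.
# Assumptions (not taken from NVIDIA documentation): three 64-bit
# controllers at 48 GB/s each, a "fast" 1.5 GB region striped across
# all three, and a "slow" final 512 MB served by one controller only.

PER_CONTROLLER_GBPS = 48.0
NUM_CONTROLLERS = 3

FAST_REGION_GB = 1.5  # striped over all three controllers
SLOW_REGION_GB = 0.5  # lives on a single controller

fast_bw = PER_CONTROLLER_GBPS * NUM_CONTROLLERS  # 144 GB/s
slow_bw = PER_CONTROLLER_GBPS                    # 48 GB/s, one third of peak


def effective_bandwidth(slow_fraction: float) -> float:
    """Average GB/s when `slow_fraction` of the bytes come from the slow region.

    This is a time-weighted (harmonic) average: bytes moved / time taken.
    """
    if not 0.0 <= slow_fraction <= 1.0:
        raise ValueError("slow_fraction must be between 0 and 1")
    time_per_gb = (1.0 - slow_fraction) / fast_bw + slow_fraction / slow_bw
    return 1.0 / time_per_gb


if __name__ == "__main__":
    # Share of the slow region if accesses are spread evenly over all 2 GB.
    uniform = SLOW_REGION_GB / (FAST_REGION_GB + SLOW_REGION_GB)
    for fraction in (0.0, 0.1, uniform, 1.0):
        print(f"{fraction:.2f} of traffic in slow region -> "
              f"{effective_bandwidth(fraction):.1f} GB/s")
```

Under these assumptions, traffic spread evenly over the full 2GB averages 96GB/s, and the figure falls toward 48GB/s as more of the traffic lands in the last 512MB. Other interleaving schemes would give different averages.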

CeriseCogburn - Thursday, August 23, 2012 - link

"GTX 660 Ti and 7950 tied at roughly 67fps. If you want a brief summary of where this is going, there you go. Though the fact that the GTX 660 Ti actually increases its lead at 2560 is unexpected."

Theory vs fact.

TheJian - Monday, August 20, 2012 - link

Memory starved at what? NEVER at 1920x1200 or less. Are you running a 30in monitor? All 24in monitors are 1920x1200 or below on newegg (68 of them!). 80% of the 27inchers are also this way on newegg.com. 3GB has been proven useless (well 4gb was): http://www.guru3d.com/article/palit-geforce-gtx-68...

"The 4GB -- Realistically there was NOT ONE game that we tested that could benefit from the two extra GB's of graphics memory. Even at 2560x1600 (which is a massive 4 Mpixels resolution) there was just no measurable difference."

"But 2GB really covers 98% of the games in the highest resolutions. "

Game over even on 2560x1600 for 4GB or 3GB. Ryan is misleading you...Sorry. Though he's talking bandwidth mostly, the point is 98% of us (all 24in and down, most 27in) are running at 1920x1200 or BELOW.
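
For a sense of scale between the two resolutions argued over in this thread, the pixel counts can be worked out directly. The snippet below is a plain arithmetic sketch; the helper name is made up for illustration.

```python
# Pixel counts for the two resolutions discussed in the comments.
RESOLUTIONS = {
    "1920x1200": (1920, 1200),
    "2560x1600": (2560, 1600),
}


def megapixels(width: int, height: int) -> float:
    """Return the pixel count in millions of pixels."""
    return width * height / 1_000_000


counts = {name: megapixels(w, h) for name, (w, h) in RESOLUTIONS.items()}
ratio = counts["2560x1600"] / counts["1920x1200"]

if __name__ == "__main__":
    for name, mp in counts.items():
        print(f"{name}: {mp:.3f} Mpixels")
    print(f"2560x1600 has {ratio:.2f}x the pixels of 1920x1200")
```

2560x1600 works out to about 4.1 Mpixels against about 2.3 Mpixels for 1920x1200, so each frame at the higher resolution carries roughly 1.78 times as many pixels.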

Galcobar - Thursday, August 16, 2012 - link

Was wondering about how the Zotac was altered to stand in as a reference 660 Ti.

Were the clock speeds and voltages lowered through one of the overclocking programs, or was a reference BIOS flashed onto it? I ask because as I understand AMD's base/boost clock implementation, the base clock is set by the BIOS and is not alterable by outside software.