SandForce TRIM Issue & Corsair Force Series GS (240GB) Review

by Kristian Vättö on November 22, 2012 1:00 PM EST

Introduction to the TRIM Issue

TRIM on SandForce-based SSDs has always been trickier than on other SSDs because SandForce handles data in a far more complicated way. Instead of just writing the data to the NAND as other SSDs do, SandForce employs a real-time compression and de-duplication engine. When even the basic design is this much more complex, there is a higher chance that something will go wrong. When other SSDs receive a TRIM command, they can simply clean the blocks holding invalid data and that's it. SandForce, on the other hand, has to check whether the data is still used by something else (with de-duplication, several logical addresses can point to the same stored data). You don't want your SSD to erase data that can be crucial to your system's stability, do you?
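To make the de-duplication check concrete, here is a minimal sketch in Python. It is a toy model and not SandForce's actual design, which is not public: the `DedupStore` class, its fields and the use of SHA-256 fingerprints are all assumptions made purely for illustration. The point is only that a TRIM on one logical address may not free anything if another address still shares the same stored data.

```python
import hashlib


class DedupStore:
    """Toy content-addressed store: identical payloads share one physical block.

    Hypothetical model for illustration only, not SandForce's real design.
    """

    def __init__(self):
        self.lba_to_hash = {}   # logical address -> fingerprint of its data
        self.refcount = {}      # fingerprint -> number of LBAs pointing at it
        self.blocks = {}        # fingerprint -> stored payload

    def write(self, lba, data):
        # Overwriting an LBA first releases whatever it pointed at before.
        if lba in self.lba_to_hash:
            self.trim(lba)
        digest = hashlib.sha256(data).hexdigest()
        if digest not in self.blocks:
            self.blocks[digest] = data
            self.refcount[digest] = 0
        self.refcount[digest] += 1
        self.lba_to_hash[lba] = digest

    def trim(self, lba):
        """Drop the mapping; free the block only if no other LBA uses it."""
        digest = self.lba_to_hash.pop(lba, None)
        if digest is None:
            return False          # nothing mapped, nothing to do
        self.refcount[digest] -= 1
        if self.refcount[digest] == 0:
            del self.refcount[digest]
            del self.blocks[digest]
            return True           # physical block actually reclaimed
        return False              # still referenced by another LBA


store = DedupStore()
store.write(0, b"same payload")
store.write(1, b"same payload")   # deduplicated against LBA 0
first = store.trim(0)             # block is still needed by LBA 1
second = store.trim(1)            # last reference gone, block reclaimed
print(first, second)
```

A drive without de-duplication could skip the reference count entirely, which is why its TRIM path is so much simpler.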

As we have shown in dozens of reviews, TRIM doesn't work properly when dealing with incompressible data. It never has. That means when the drive is filled and tortured with incompressible data, it's put into a state where even TRIM does not fully restore performance. Since even Intel's own proprietary firmware didn't fix this, I believe the problem is so deep in the design that there is just no way to completely fix it. However, the TRIM issue we are dealing with today has nothing to do with incompressible data: now TRIM doesn't work properly with compressible data either.
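If the distinction between compressible and incompressible data feels abstract, the short Python sketch below may help. It uses zlib as a stand-in compressor, which is an assumption for illustration only; SandForce's compression engine is proprietary and certainly differs. The 1 MiB sample size is likewise arbitrary.

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower = more compressible)."""
    return len(zlib.compress(data)) / len(data)


size = 1024 * 1024                 # 1 MiB sample, arbitrary for illustration
compressible = b"\x00" * size      # highly repetitive data
incompressible = os.urandom(size)  # random bytes, no patterns to exploit

ratio_zero = compression_ratio(compressible)
ratio_random = compression_ratio(incompressible)
print(f"all zeros: {ratio_zero:.4f}")
print(f"random:    {ratio_random:.4f}")
```

The repetitive buffer shrinks to a tiny fraction of its size, while the random buffer does not shrink at all. A controller that compresses in real time writes far less NAND in the first case than in the second.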

Testing TRIM: It's Broken

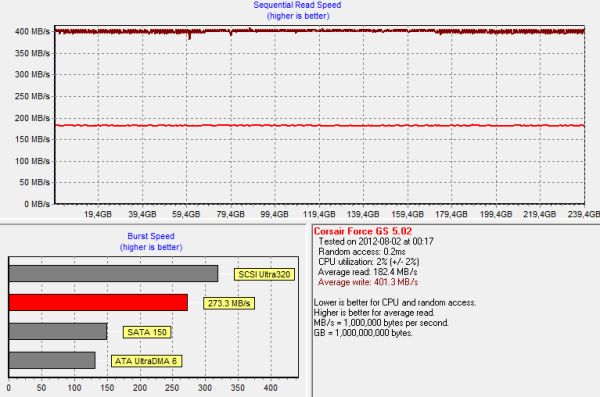

SandForce doesn't behave normally when we put it through our torture test with compressible data. While other SSDs experience a slowdown in write speed, SandForce's write speed remains the same but read speed degrades instead. Below is an HD Tach graph of 240GB Corsair Force GS, which was first filled with compressible data and then peppered with compressible 4KB random writes (100% LBA space, QD=32):

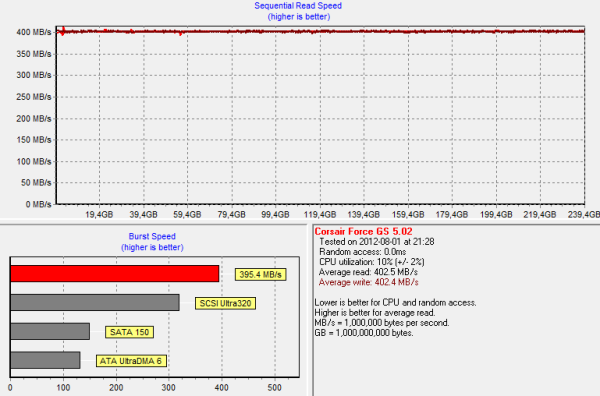

And for comparison, here is the same HD Tach graph run on a secure-erased Force GS:

As you can see, write speed wasn't affected at all by the torture. However, read performance degraded by more than 50% from 402MB/s to 182MB/s. That is actually quite odd because reading from NAND is a far simpler process: You simply keep applying voltages until you get the desired outcome. There is no read-modify-write scheme, which is the reason why write speed degrades in the first place. We don't know the exact reason why read speed degrades in SandForce based SSDs but once again, it seems to be the way it was designed. My guess is that the degradation has something to do with how the data is decompressed but most likely there is something much more complicated in here.
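For reference, the size of that read-speed drop can be worked out from the two HD Tach figures quoted above. The helper name `degradation_percent` is just an illustrative choice.

```python
def degradation_percent(clean_mb_s: float, dirty_mb_s: float) -> float:
    """Relative drop from the clean-state speed, as a percentage."""
    return (clean_mb_s - dirty_mb_s) / clean_mb_s * 100.0


# Read speeds from the HD Tach runs: 402MB/s clean, 182MB/s after torture.
read_drop = degradation_percent(402, 182)
print(f"{read_drop:.1f}%")  # prints 54.7%
```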

Read speed degradation is not the real problem, however. So far we haven't faced a consumer SSD that wouldn't experience any degradation after enough torture. Given that consumer SSDs typically have only 7-15% of over-provisioned NAND, sooner rather than later you will run into a situation where read-modify-write is triggered, which will result in a substantial decline in write performance. With SandForce your write speed won't change (at least not by much) but the read speed goes downhill instead. It's a trade-off but neither is worse than the other as all workloads consist of both reads and writes.
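As a rough illustration of where an over-provisioning figure in that range comes from, the sketch below computes spare area as a share of user capacity. The raw NAND size is an assumption made for the example (256GiB behind a 240GB drive) and is not a figure from our testing. Vendors also define over-provisioning in slightly different ways, so treat the result as a ballpark.

```python
def over_provisioning_percent(raw_bytes: int, user_bytes: int) -> float:
    """Spare NAND as a percentage of user-visible capacity."""
    return (raw_bytes - user_bytes) / user_bytes * 100.0


# Assumed capacities for illustration only (not taken from the review):
raw_nand = 256 * 2**30        # 256 GiB of physical NAND
user_capacity = 240 * 10**9   # 240 GB advertised to the host

op = over_provisioning_percent(raw_nand, user_capacity)
print(f"{op:.1f}% over-provisioning")
```

Under these assumptions the result lands near the top of the 7-15% range mentioned above.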

To test TRIM, I TRIM'ed the drive after our 20 minute torture:

And here is the real issue. Normally TRIM would restore performance to clean-level state, but this is not the case. Read speed is definitely up from dirty state but it's not fully restored. Running TRIM again didn't yield any improvement either, so something is clearly broken here. Also, it didn't matter if I filled and tortured the drive with compressible, pseudo-random, or incompressible data; the end result was always the same when I ran HD Tach.

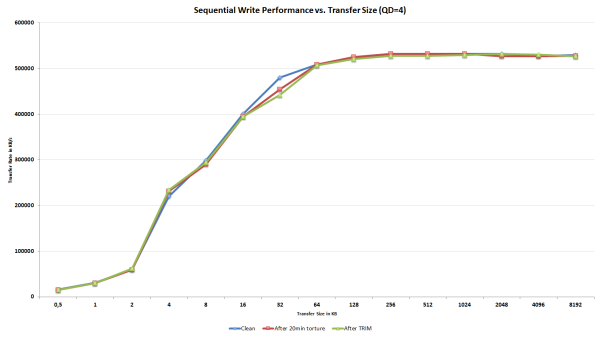

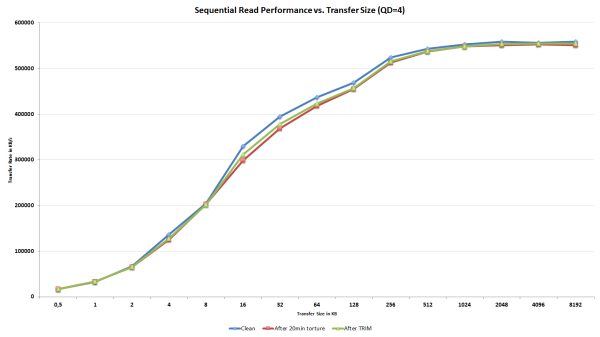

I didn't want to rely completely on HD Tach as it's always possible that one benchmark behaves differently from others, especially when it comes to SandForce. I turned to ATTO since it uses highly compressible data as well to see if it would report data similar to our HD Tach results. Once again, I first secure erased the drive, filled it with sequential data and proceeded to torture the drive with 4KB random writes (LBA space 100%, QD=32) for 20 minutes:

As expected, write speed is not affected except for an odd bump at transfer size of 32KB. Since we are only talking about one IO size and performance actually degraded after TRIM, it's completely possible that this is simply an error.

The read speed ATTO graph is telling the same story as our HD Tach graphs; read speed does indeed degrade and is not fully restored after TRIM. The decrease in read speed is a lot smaller compared to our HD Tach results, but it should be kept in mind that ATTO reads/writes a lot less data to the drive compared to HD Tach, which reads/writes across the whole drive.

What we can conclude from the results is that TRIM is definitely broken in SandForce SSDs with firmware 5.0.0, 5.0.1, or 5.0.2. If your SandForce SSD is running the older 3.x.x firmware, you have nothing to worry about because this TRIM issue is limited strictly to 5.x.x firmware. Luckily, this is not the end of the world because SandForce has been aware of this issue for a long time and a fix is already available for some drives. Let's have a look at how the fix works.


56 Comments


Kristian Vättö - Friday, November 23, 2012 - link

That is correct, Storage bench tests are run on a drive without a partition.

Running tests on a drive with a partition vs without a partition is something I've discussed with other storage editors quite a bit and there isn't really an optimal way to test things. We prefer to test without a partition because that is the only way we can ensure that the OS doesn't cause any additional anomalies but that means the drive may behave slightly differently with a file system than what you see in our tests.

JellyRoll - Friday, November 23, 2012 - link

Well personally I think that testing devices that are designed to operate in a certain environment is important. You are testing SSDs that are designed for a filesystem and TRIM, without a filesystem and TRIM. This means that the traces that you are running aren't indicative of real performance at all, the drives are functioning without the benefit of their most important aspect, TRIM. This explains why Anandtech is just now reporting the lack of TRIM support when other sites have been reporting this for months.

Testing in an unrealistic environment with different datasets than those that are actually used when recording (your tools do not use the actual data, it uses substituted data that is highly compressible), in a TRIM free environment is like testing a Formula One car in a school zone.

This is the problem with proprietary traces. Readers have absolutely no idea if these results are valid, and surprise, they are not!

extide - Saturday, November 24, 2012 - link

The drive has NO IDEA if there is a partition on it or not. All the drive has to do is store data at a bunch of different addresses. That's it. Whether there is a partition or not makes no difference, it's all just 0's and 1's to the drive.

JellyRoll - Saturday, November 24, 2012 - link

it IS all ones and zeros my friend, but TRIM is a command issued by the Operating System. NOT the drive. This is why XP does not support TRIM for instance, and several older operating systems also do not support it. That is merely because they do not issue the TRIM command. The OS issues the TRIM commands, but only as a function of the file system that is managing it. :)

No file system=no TRIM.

JellyRoll - Saturday, November 24, 2012 - link

Excerpts from the definition of TRIM from WIKI:

Because of the way that file systems typically handle delete operations, storage media (SSDs, but also traditional hard drives) generally do not know which sectors/pages are truly in use and which can be considered free space.

Since a common SSD has no access to the file system structures, including the list of unused clusters, the storage medium remains unaware that the blocks have become available.

popej - Friday, November 23, 2012 - link

Different drives, different algorithms and different results. But since you are testing the drive well outside normal use, you should draw conclusions with care; not all of them may be relevant to standard applications.

R3dox - Friday, November 23, 2012 - link

I've read everything (afaik) on AT on SSDs the past few years and the powersaving features used to be disabled in reviews. They, at least at some point, significantly affect performance. Back then I bought an Intel 80GB postville SSD and all tests I ran confirmed that these settings have quite a big impact.

I currently have an Intel 520 (though sadly limited by a 3Gbps SATA controller on my old core i7 920 platform) and I never thought of turning everything on again, so I wonder whether the problem is solved with newer drives. Did I miss something or why aren't these settings disabled anymore? Hopefully it's not a feature of newer platforms.

It would be nice if the next big SSD piece would cover this (or feel free to point me to an older one that does :)). I'd really like this to be clarified, if possible.

Bullwinkle J Moose - Friday, November 23, 2012 - link

I was kinda wondering something similar

AHCI might give you a slight performance boost, but does it affect the "Consistency" of the test results having NCQ enabled or the power saving features of AHCI or the O.S. itself

I always test my SSD's in the worst case scenario's to find bottom

XP

No Trim (Not even O&O Defrag Pro's manual Trim)

Heavy Defragging to test reliability while still under return policy

yank the drives power while running

Stuff like that

I predicted that my Vertex 2 would die if I ever updated the firmware as I have been telling people for the past few years and YES, it finally Died right after the firmware update

It was still under warranty but I seriously do not want another one

Time for me to thrash a Samsung 256GB 840 pro

I feel sorry for it already

Sniff

Bullwinkle J Moose - Friday, November 23, 2012 - link

I forgot

Misaligned partitions and firmware updates during the return policy will also be used for testing any of my new drives during the return policy

I don't trust my data to a drive I haven't tested in a worst case scenario

Kristian Vättö - Friday, November 23, 2012 - link

EIST and Turbo were disabled when we ran our tests on a Nehalem based platform (that was before AnandTech Storage Bench 2011) but have been enabled since then. Some of our older SSD reviews have a typo in the table which shows that those would be disabled in our current setup as well, but that's because Anand forgot to remove those lines when updating the spec table for our Sandy Bridge build.