What We've Been Waiting For: Testing OpenCL Accelerated Handbrake with AMD's Trinity

by Anand Lal Shimpi on May 15, 2012 1:23 PM EST

AMD, and NVIDIA before it, has been trying to convince us of the usefulness of its GPUs for general purpose applications for years now. For a while it seemed as if video transcoding would be the killer application for GPUs, until Intel's Quick Sync showed up last year.

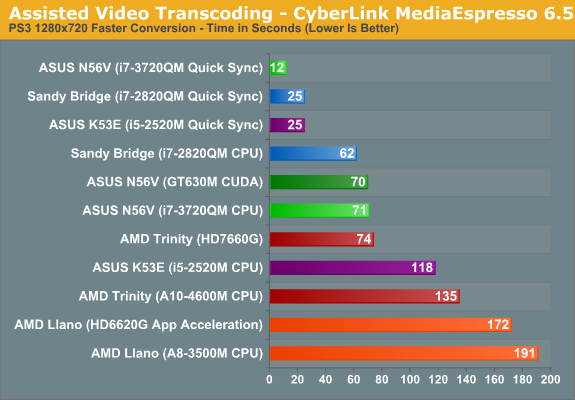

With Trinity, AMD has an answer to Quick Sync in its integrated VCE, but the two are far more alike in concept than in performance. In applications that take advantage of both Quick Sync and VCE, the Intel solution is considerably faster. While this first working implementation of VCE is better than x86-based transcoding on AMD's APUs, it still needs work.

Quick Sync's performance alone didn't move all users to Sandy/Ivy Bridge-based video transcoding. One of its biggest limitations is the lack of good software support. We use applications like ArcSoft's Media Converter 7.5 and CyberLink's MediaEspresso 6.5 not because we want to, but because they are among the few transcoding applications that support Quick Sync. What we'd really like to see is Quick Sync support in x264 or in an application like Handbrake.

The open source community thus far hasn't been very interested in supporting Intel's proprietary technologies. As a result, Quick Sync remains unused by the applications we want to use for video transcoding.

In our conclusion to this morning's Trinity review, we wrote that AMD's portfolio of GPU accelerated consumer applications is stronger now than it has ever been. Photoshop CS6, GIMP, Media Converter/MediaEspresso and WinZip 16.5 are, for the most part, not obscure applications. These are big names that everyone is familiar with, and that many people have actually spent time using. There's always the debate over whether the things you do with these applications are actually GPU accelerated, but AMD is at least targeting the right apps with its GPU compute efforts.

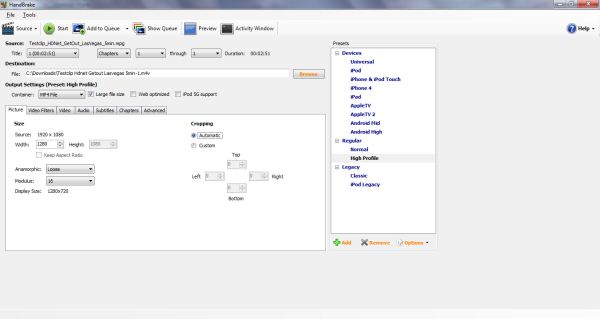

The list is actually a bit more impressive than what we've published thus far. Several weeks ago AMD dropped a bombshell: x264 and Handbrake would both feature GPU acceleration, largely via OpenCL, in the near future. I begged for an early build of both of them and eventually got just that. What you see below may look like a standard Handbrake screenshot, but it's actually a look at an early build of the OpenCL accelerated version of Handbrake:

As I mentioned before, the application isn't ready for prime time yet. The version I have is currently 32-bit only and it doesn't allow you to manually enable/disable GPU acceleration. Instead, to compare the x86 and OpenCL paths we have to run the beta Handbrake release against the latest publicly available version of the software.

GPU acceleration in Handbrake comes via three avenues: DXVA support for GPU accelerated video decode, OpenCL/GPU acceleration for video scaling and color space conversion, and OpenCL/GPU acceleration of the lookahead function of the x264 encoding process.

Video decode is the lowest-hanging fruit for improving video transcode performance, and by using the DXVA API Handbrake can leverage the hardware video decode engine (UVD) on Trinity as well as its counterpart in Intel's Sandy/Ivy Bridge.

The scaling, color conversion and lookahead functions of the encode process are similarly obvious candidates for offloading to the GPU. The lookahead in particular is already data parallel and runs in its own thread, making it a logical fit for the GPU. The lookahead function analyzes frames ahead of the current position in the input stream so that the encoder can make better decisions about how to encode them, which improves image quality. Remember that video encoding is fundamentally a task of figuring out which parts of frames remain unchanged over time and compressing that redundant data.
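To show why this kind of analysis maps well onto a GPU, here is a deliberately simplified sketch of lookahead-style work: it scores how much each 8x8 block changed between two frames using the sum of absolute differences (SAD). This is not x264's lookahead (which does motion search on downscaled frames to drive frame-type and bit-allocation decisions); the function names, the zero-motion comparison and the 8x8 block size are illustrative assumptions. The point is that every block's cost depends only on its own pixels, so each one could be handed to a separate GPU work-item.

```python
# Simplified, hypothetical illustration of lookahead-style frame analysis.
# NOT x264's algorithm: it compares co-located blocks only (zero motion),
# with no motion search and no downscaling. Frames are 2D lists of luma values.

def block_sad(prev, cur, bx, by, size):
    """Sum of absolute differences between co-located size x size blocks."""
    total = 0
    for y in range(by, by + size):
        for x in range(bx, bx + size):
            total += abs(cur[y][x] - prev[y][x])
    return total


def frame_costs(prev, cur, size=8):
    """Per-block SAD grid. Each entry depends only on its own block,
    so every block could be computed independently (data parallel)."""
    h, w = len(cur), len(cur[0])
    return [
        [block_sad(prev, cur, bx, by, size) for bx in range(0, w - size + 1, size)]
        for by in range(0, h - size + 1, size)
    ]


def static_fraction(costs, threshold=0):
    """Fraction of blocks whose cost is at or below threshold (i.e. redundant)."""
    flat = [c for row in costs for c in row]
    return sum(1 for c in flat if c <= threshold) / len(flat)


if __name__ == "__main__":
    # Two 16x16 "frames" of flat gray; only the top-left 8x8 block changes.
    prev = [[128] * 16 for _ in range(16)]
    cur = [[138 if (y < 8 and x < 8) else 128 for x in range(16)] for y in range(16)]
    costs = frame_costs(prev, cur)
    print(costs)                   # [[640, 0], [0, 0]]
    print(static_fraction(costs))  # 0.75
```

Three of the four blocks are unchanged, which is exactly the redundancy an encoder wants to find before spending bits.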

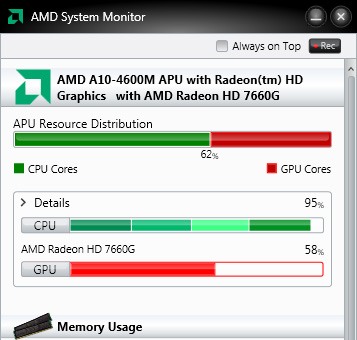

GPU usage during transcode in the OpenCL enhanced version of Handbrake

We're still working on a lot of performance/quality characterization of Handbrake, but to quickly illustrate what it can do we performed a simple transcode of a 1080p MPEG-2 source using Handbrake's High Profile defaults and a 720p output resolution.
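The 1080p-to-720p resize in this test is the kind of per-pixel work the OpenCL build moves off the CPU. The sketch below is a plain bilinear scaler for one 8-bit plane, written so that each output pixel is computed on its own; it is an illustrative assumption, not Handbrake's scaler or its OpenCL kernels, and the function names are hypothetical. Going from 1920x1080 to 1280x720 is a 1.5x reduction in each dimension, or 921,600 independent output luma pixels per frame.

```python
# Hypothetical sketch: bilinear resize of one 8-bit image plane, written so
# that each output pixel is computed independently (as a GPU kernel would be).
# Handbrake's actual scaler and its OpenCL kernels are not shown here.

def bilinear_pixel(src, ox, oy, out_w, out_h):
    """Compute a single output pixel from the source plane."""
    in_h, in_w = len(src), len(src[0])
    # Map the output pixel centre back to source coordinates, clamped to the edges.
    sx = min(max((ox + 0.5) * in_w / out_w - 0.5, 0.0), in_w - 1)
    sy = min(max((oy + 0.5) * in_h / out_h - 0.5, 0.0), in_h - 1)
    x0, y0 = int(sx), int(sy)
    x1, y1 = min(x0 + 1, in_w - 1), min(y0 + 1, in_h - 1)
    fx, fy = sx - x0, sy - y0
    # Blend horizontally on two rows, then blend those results vertically.
    top = src[y0][x0] * (1 - fx) + src[y0][x1] * fx
    bottom = src[y1][x0] * (1 - fx) + src[y1][x1] * fx
    return round(top * (1 - fy) + bottom * fy)


def resize(src, out_w, out_h):
    """Resize a plane; every (ox, oy) pair is an independent work item."""
    return [[bilinear_pixel(src, ox, oy, out_w, out_h) for ox in range(out_w)]
            for oy in range(out_h)]
```

Because `bilinear_pixel` reads only the source plane and writes nothing shared, the two loops in `resize` could be replaced by one GPU thread per output pixel without changing the result.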

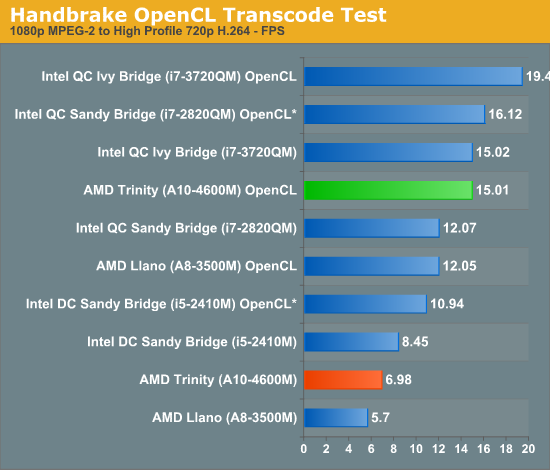

The OpenCL accelerated Handbrake build worked on Sandy Bridge, Ivy Bridge and the AMD APUs, although Sandy Bridge obviously saw no benefit from the OpenCL optimizations. All platforms saw speedups, however, implying that the Intel chips benefitted handsomely from the DXVA decode work. We ran both the 32-bit x86 and the 32-bit GPU accelerated builds on all platforms. The results are below:

*SNB's GPU doesn't support OpenCL; video decode should still be GPU accelerated, but all OpenCL work is handled by the CPU.

While video transcoding is significantly slower on Trinity compared to Intel's Sandy Bridge on the traditional x86 path, the OpenCL version of Handbrake narrows the gap considerably. A quad-core Sandy Bridge goes from being 73% faster down to 7% faster than Trinity. Ivy Bridge, on the other hand, goes from being 2.15x the speed of Trinity to a smaller but still pronounced 29.6% lead. Image quality appeared to be comparable between all OpenCL outputs, although we did get higher bitrate files from the x86 transcode path. The bottom line is that AMD goes from not really being competitive to easily holding its own against similarly priced Intel parts.
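The shrinking leads above also tell us how much more Trinity gained from the OpenCL build than each Intel chip did. If the lead is the ratio of Intel's speed to Trinity's, then the lead before divided by the lead after equals Trinity's speedup divided by the Intel chip's speedup. The short calculation below uses only the rounded percentages quoted in the text (with "73% faster" read as a 1.73x ratio and "2.15x the speed" as 115% faster); the function names are illustrative.

```python
# Worked arithmetic on the percentages quoted above; no new measurements.
# "X% faster" is read as a speed ratio of 1 + X/100 (e.g. 73% faster -> 1.73x).

def pct_to_ratio(pct_faster):
    """Convert 'X% faster' into a speed ratio."""
    return 1 + pct_faster / 100


def trinity_relative_gain(lead_before, lead_after):
    """With lead = intel_speed / trinity_speed:
    lead_before / lead_after = trinity_speedup / intel_speedup."""
    return lead_before / lead_after


snb = trinity_relative_gain(pct_to_ratio(73), pct_to_ratio(7))   # about 1.62
ivb = trinity_relative_gain(2.15, pct_to_ratio(29.6))            # about 1.66
print(f"Sandy Bridge: {snb:.2f}x, Ivy Bridge: {ivb:.2f}x")
```

In other words, Trinity's speedup from the OpenCL build was roughly 1.6x whatever speedup each Intel chip got from the same build, which is why the gaps close so sharply.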

This truly is the holy grail for what AMD is hoping to deliver with heterogeneous compute in the short term. The Sandy Bridge comparison is particularly telling. What was once a significant performance advantage for Intel shrinks to something unnoticeable. If AMD could achieve similar gains in other key applications, I think more users would be fine ignoring the CPU deficit and would treat Trinity as a balanced alternative to Intel. The Ivy Bridge gap is more significant, but Ivy Bridge is also a much more expensive chip and likely won't appear at the same price points as AMD's A10 for a while.

We're working on even more examples of where AMD's work in enabling OpenCL accelerated applications is changing the balance of power on the desktop. Handbrake is simply the one we were most excited about. It will still be a little while before there are public betas of x264 and Handbrake, but it's at least something we can now look forward to.

60 Comments


CeriseCogburn - Wednesday, May 23, 2012

AMD has their evil proprietary iron fist on the code. Good luck.

Roman2K - Wednesday, May 16, 2012

This is one of the most interesting AnandTech articles I've read in years.

First, because I'm extremely excited by delegation of tasks to GPUs in general, and second, because x264 transcoding performance is one less reason to buy Intel instead of AMD processors.

With this criterion out of the way, I don't really care about x86 performance anymore. Let's hope the power efficiency of Vishera will be on par with Intel's.

Thanks to AMD for pushing OpenCL forward (in their interest as well as consumers', even Intel's, who have Linux OpenCL drivers for their iGPUs) and to AnandTech for both unveiling terrific news and publishing some comparison tests.

aegisofrime - Wednesday, May 16, 2012

It's not that simple.

Remember that lookahead is the only function offloaded to OpenCL at this point (probably forever). A lot of people have asked about GPGPU support for x264, and the developers have always said that the only function feasible for GPGPU is lookahead, which is what you are getting now. Other functions will still run on the CPU.

So, don't be so quick to throw away that Intel CPU.

BenchPress - Wednesday, May 16, 2012

The AVX2 instruction set extension will double the CPU's throughput, and also adds 'gather' support (accessing eight memory locations with a single instruction).

This is in fact the same sort of technology which makes a GPU fast at throughput computing, but next year it will be merged into the CPU. So there's no need to get excited about delegating tasks to the GPU when the CPU has the same capabilities. Its cache and out-of-order execution even give it unique advantages in avoiding memory bottlenecks and stalls.

Note that in the above benchmarks the Trinity GPU still loses against the Intel CPU. That gap will widen with AVX2, and I don't see how AMD could counter that unless they implement AVX2 as well and sell us more cores (modules) for less.

Roman2K - Thursday, May 17, 2012

@aegisofrime & BenchPress

Thanks for your informative replies.

CeriseCogburn - Thursday, May 24, 2012

"Note that in the above benchmarks the Trinity GPU still loses against the Intel CPU. That gap will widen with AVX2, and I don't see how AMD could counter that unless they implement AVX2 as well and sell us more cores (modules) for less."

AMD, too little, too late. It's not a problem though; fanboy fever will take care of the sad realities, at least for the vast majority here.

I love the new "CPU doesn't really matter for a laptop" lines we're getting now, too, from the very same people. The fever is reaching near 106F and stroking out comes soon (at least they will die happily in ignorant bliss).

LOL

I'm going to have to wait at least one more AMD generation, perhaps two, until their APU/GPU part really is worth something. I see HD4000 winning or tying about half the tests, not that either contestant has playable frame rates (Portal 2 excepted).

Riek - Thursday, May 24, 2012

AMD will also support AVX2 eventually, so moot point.

Intel doesn't have AVX2 for at least a year, and even then it's not clear-cut when applications will support it either.

AMD delivers the same performance in above benchmarks as a higher TDP intel part that has a far bigger cpu and costs double or more.

gcor - Wednesday, May 16, 2012

I ask because I used to work on a telecoms platform that used PPC chips, with vector processors that *I think* are quite analogous to GPGPU programming. We offloaded as much as possible to the vector processors (e.g. huge quantities of realtime audio processing). Unfortunately it was extremely difficult to write reliable code for the vector processors. The software engineering costs wound up being so high that, after 4-5 years of struggling, the company decided to ditch the vector processing entirely and put in more general compute hardware power instead. This was on a project with slightly less than 5,000 software engineers, so there were a lot of bodies available. The problem wasn't so much the number of people as the number of very high calibre people required. In fact, having migrated back to generalised code, the build system took out the compiler support for the vector processing to ensure that it could never be used again. Those vector processors now sit idle in telecoms nodes all over the world.

Anyway, I hope the problem isn't intrinsically too hard for mainstream adoption. It'll be interesting to see how x264 development gets through its present quality issues with OpenCL.

gcor - Wednesday, May 16, 2012

Oops, I forgot to also ask...

Wasn't the lack of developer take-up of vector processing one of the reasons why Apple gave up on PPC and moved to Intel? Apple initially touted that they had massively more compute available than Windows Intel-based machines. However, in the long run no, or almost no, applications used the vector processing compute power available, making the PPC platform no better.

gcor - Wednesday, May 16, 2012

Gah! Should have asked these questions on the other thread. Doh.