The AMD Trinity Review (A10-4600M): A New Hope

by Jarred Walton on May 15, 2012 12:00 AM EST

AMD Trinity General Performance

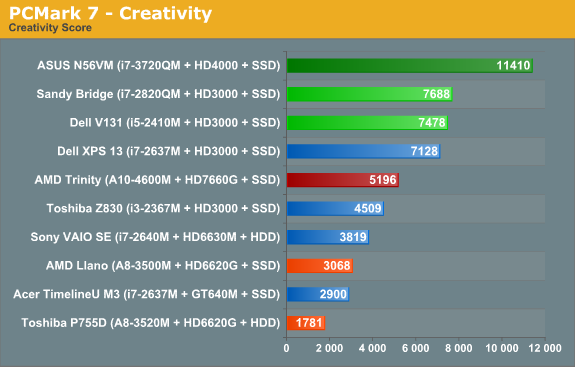

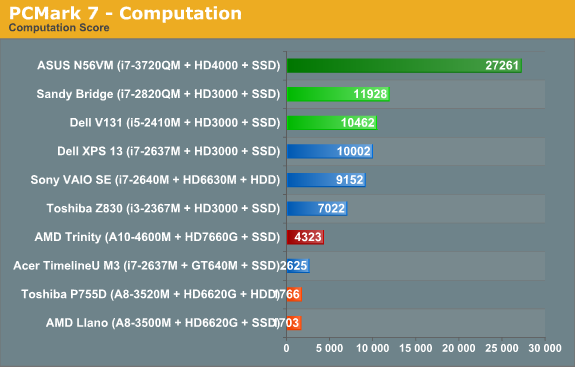

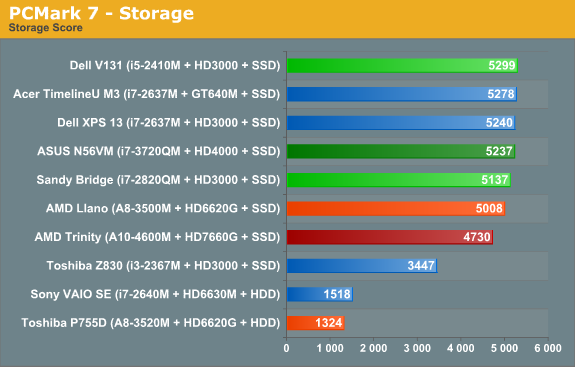

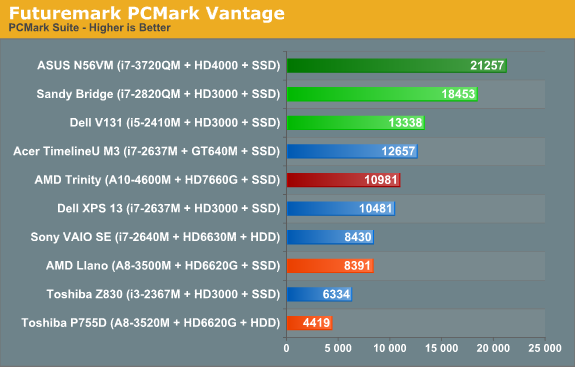

Starting as usual with our general performance assessment, we’ve got several Futuremark benchmarks along with Cinebench and x264 HD encoding. The latter two focus specifically on stressing the CPU while PCMarks will cover most areas of system performance (including a large emphasis on storage) and 3DMarks will give us a hint at graphics performance. First up, PCMark 7 and Vantage:

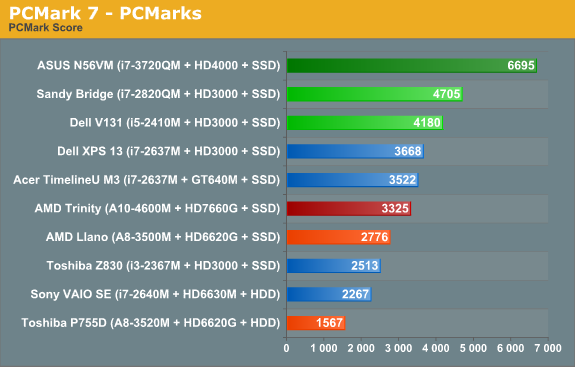

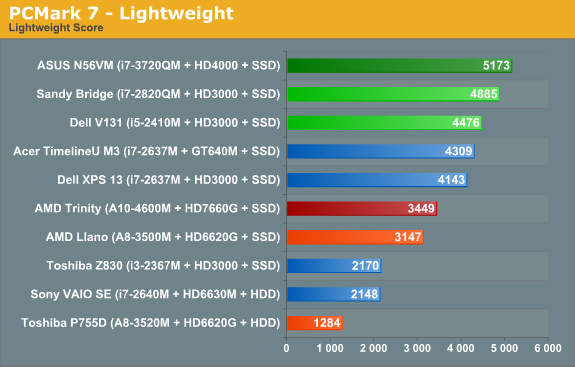

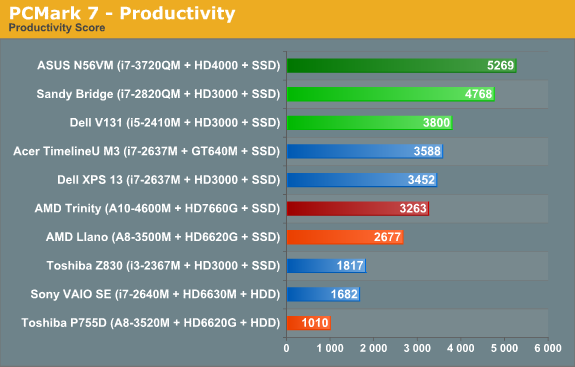

As noted earlier, we ran several other laptops through PCMark 7 and PCMark Vantage testing using the same Intel 520 240GB SSD, plus all the ultrabooks come with SSDs. That removes the SSD as a factor from most of the PCMark comparisons, leaving the rest of the platform to sink or swim on its own. And just how does AMD Trinity do here? Honestly, it’s not too bad, despite where it lands in the charts.

Obviously, Intel’s quad-core Ivy Bridge is a beast when it comes to performance, but it’s a 45W beast that costs over $300 just for the CPU. We’ll have to wait for dual-core Ivy Bridge to see exactly how Intel’s latest stacks up against AMD, but if you remember the Llano vs. Sandy Bridge comparisons it looks like we’re in for more of the same. Intel continues to offer superior CPU performance, and even their Sandy Bridge ULV processors can often surpass Llano and Trinity. In the overall PCMark 7 metric, Trinity ends up being 20% faster than a Llano A8-3500M laptop, while Intel’s midrange i5-2410M posts a similar 25% lead on Trinity. Outside of the SSD, we’d expect Trinity and the Vostro V131 to both sell for around $600 as equipped.
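To put those percentages in perspective, here is a minimal sketch of how the leads compound. The absolute scores are hypothetical placeholders (Llano normalized to 100); only the 20% and 25% ratios come from the PCMark 7 results discussed above, and `percent_lead` is a helper name made up for this illustration.

```python
def percent_lead(score_a: float, score_b: float) -> float:
    """Return how much faster A is than B, in percent (higher score = better)."""
    return (score_a / score_b - 1.0) * 100.0


# Hypothetical normalized scores: Llano A8-3500M is pinned at 100.
# Only the ratios (20% and 25%) are taken from the text.
llano = 100.0
trinity = llano * 1.20      # Trinity is 20% faster than Llano
i5_2410m = trinity * 1.25   # i5-2410M is 25% faster than Trinity

lead_over_llano = percent_lead(i5_2410m, llano)        # leads compound
trinity_deficit = -percent_lead(trinity, i5_2410m)     # same gap, seen from Trinity

print(f"i5-2410M lead over Llano: {lead_over_llano:.1f}%")
print(f"Trinity deficit vs. i5-2410M: {trinity_deficit:.1f}%")
```

Two things fall out of the arithmetic: the leads multiply rather than add, so under these assumptions the i5-2410M would sit about 50% ahead of Llano, and a 25% lead for Intel is the same gap as Trinity trailing by 20%.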

A 25% lead for Intel is pretty big, but what you don’t necessarily get from the charts is that for many users, it just doesn’t matter. I know plenty of people using older Core 2 Duo (and even a few Core Duo!) laptops, and for general office tasks and Internet surfing they’re fine. Llano was already faster in general use than Core 2 Duo and Athlon X2 class hardware, and it delivered great battery life. Trinity boosts performance and [spoiler alert!] battery life, so it’s a net win. If you’re looking for a mobile workstation or something to do some hardcore gaming, Trinity won’t cut it—you’d want a quad-core Intel CPU for the former, and something with a discrete GPU for the latter—but for everything else, we’re in the very broad category known as “good enough”.

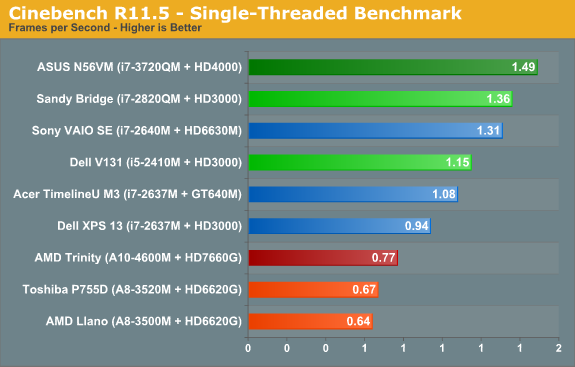

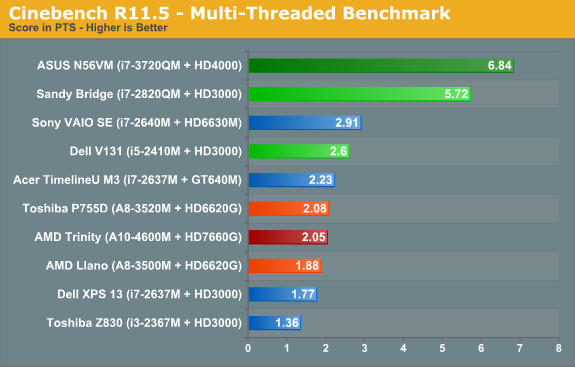

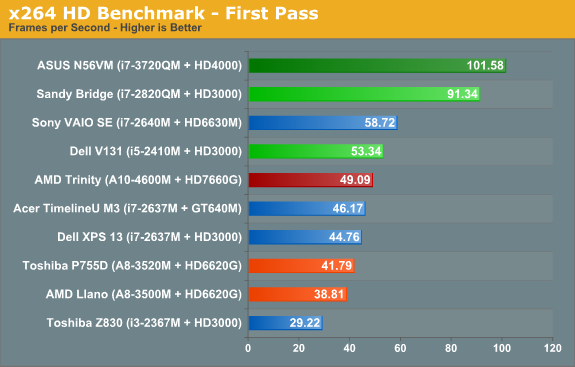

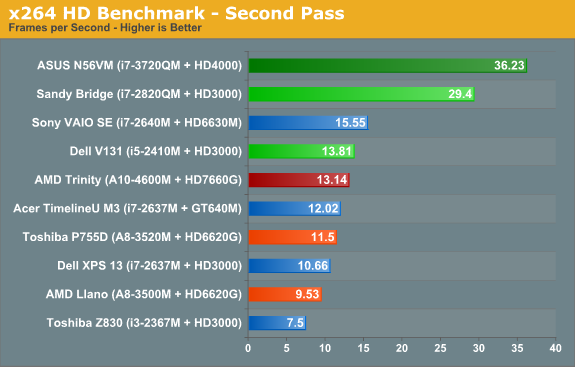

When we start drilling down into other performance metrics, AMD’s CPU performance deficiency becomes pretty obvious. The Cinebench single-threaded score is up 15% from 35W Llano, but in a bit of a surprise the multi-threaded score is basically a wash. Turn to the x264 HD encoding test, however, and Trinity once again shows a decent 15% improvement over Llano. Against Sandy Bridge and Ivy Bridge, though? AMD’s Trinity doesn’t stand a chance: i5-2410M is 50% faster in single-threaded Cinebench, 27% faster in multi-threaded, and 5-10% faster in x264. It’s a good thing 99.99% of laptop users never actually run applications like Cinebench for “real work”, but if you want to do video encoding, a 10% increase can be very noticeable.
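As a rough illustration of why a 10% encoding advantage is noticeable, the sketch below converts an encode rate into wall-clock time. The clip length and the baseline rate of 10 frames per second are assumptions picked for round numbers, not measured results from this review; `encode_hours` is a hypothetical helper.

```python
def encode_hours(total_frames: int, frames_per_second: float) -> float:
    """Wall-clock hours needed to encode a clip at a steady encode rate."""
    return total_frames / frames_per_second / 3600.0


# Assumption: a two-hour video at 24 frames per second.
total_frames = 2 * 60 * 60 * 24

# Assumption: hypothetical baseline encode rate, with a rival 10% faster.
baseline_fps = 10.0
faster_fps = baseline_fps * 1.10

baseline_hours = encode_hours(total_frames, baseline_fps)
faster_hours = encode_hours(total_frames, faster_fps)
saved_minutes = (baseline_hours - faster_hours) * 60.0

print(f"Baseline encode: {baseline_hours:.2f} h")
print(f"10% faster encode: {faster_hours:.2f} h")
print(f"Time saved: {saved_minutes:.0f} min")
```

With these made-up inputs the faster chip finishes roughly 26 minutes sooner on a job that takes just under five hours, which is the kind of difference that adds up for anyone encoding regularly.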

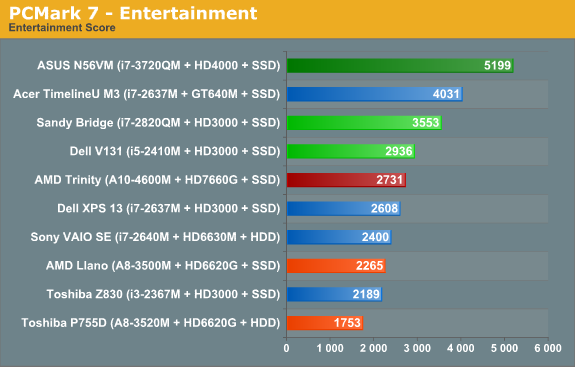

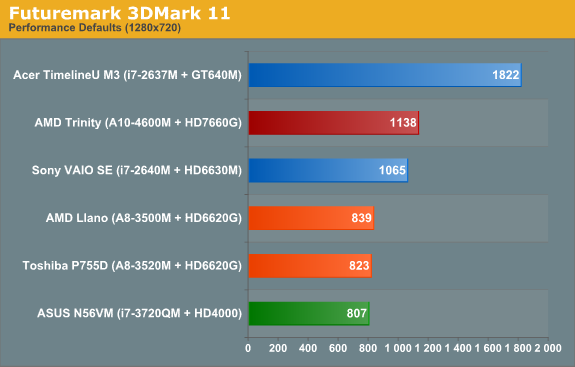

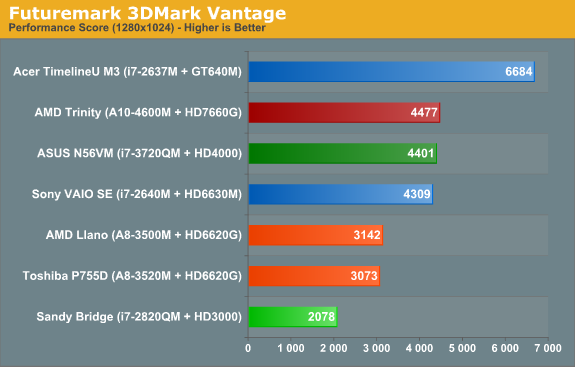

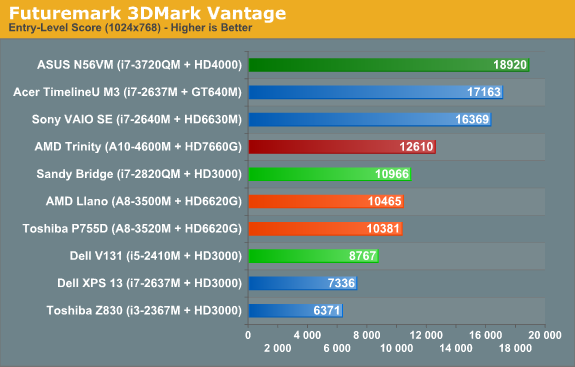

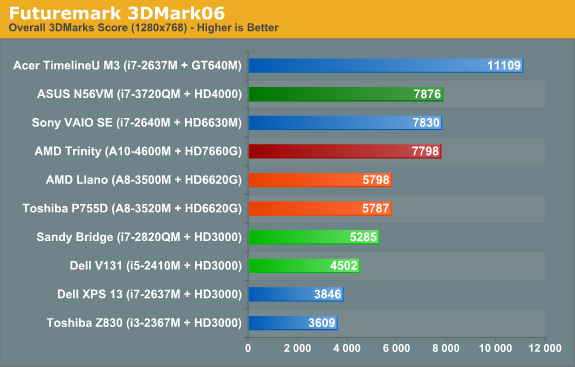

Shift over to graphics-oriented benchmarks and the tables turn once again...sort of. Sandy Bridge can’t run 3DMark11, since it only has a DX10 class GPU, but in Vantage Performance and 3DMark06 Trinity is more than twice as fast as HD 3000. Of course, Ivy Bridge’s HD 4000 is the new Intel IGP Sheriff around these parts, and interestingly we see Trinity and i7-3720QM basically tied in these two synthetics. (We’ll just ignore 3DMark Vantage’s Entry benchmark, as it’s so light on graphics quality that we’ve found it doesn’t really stress most GPUs much; even low-end GPUs like HD 3000 score quite well.) We’ll dig into graphics performance more with our gaming benchmarks next.

271 Comments


raghu78 - Tuesday, May 15, 2012 - link

AMD needs to do much better with their CPU performance, otherwise it's looking pretty bad from here. Intel Haswell is going to improve the graphics performance much more significantly. With some rumours of stacked DRAM making it to Haswell it looks pretty grim from here. And we don't know the magnitude of CPU performance improvements in Haswell. AMD runs the risk of becoming completely outclassed in both departments. AMD needs to have a much better processor with Steamroller or it's pretty much game over. AMD's efforts with HSA and OpenCL are going to be very crucial in differentiating their products. Also when adding more GPU performance AMD needs to address the bandwidth issue with some kind of stacked DRAM solution. AMD Kaveri with 512 GCN cores is going to be more bottlenecked than Trinity if their CPU part isn't much more powerful and their bandwidth issues are not addressed. I am still hoping AMD does not become irrelevant, because competition is crucial for maximum benefit to the industry and the market.

Kjella - Tuesday, May 15, 2012 - link

Well it's hard to tell facts from fiction but some have said Haswell will get 40 EUs as opposed to Ivy Bridge's 16. Hard to say but we know:

1. Intel has the TDP headroom if they raise it back up to 95W for the new EUs.

2. Intel has the die room, the Ivy Bridge chips are Intel's smallest in a long time.

3. Graphics performance is heavily tied to number of shaders.

In other words, if Intel wants to make a much more graphics-heavy chip - it'll be more GPU than CPU at that point - they can, and I don't really see a good reason why not. Giving AMD and nVidia's low end a good punch must be good for Intel.

mschira - Tuesday, May 15, 2012 - link

Hellooouuu?

Do I see this right? The new AMD part offers better battery life with a 32 nm part than Intel with a spanking new 22nm part?

And CPU performance is good (though not great...)?

AND they will offer a 25W part that will probably offer very decent performance but even better battery life?

And you call this NOT earth shattering?

I don't understand you guys.

I just don't.

M.

JarredWalton - Tuesday, May 15, 2012 - link

Intel's own 32nm part beats their 22nm part, so no, I'm not surprised that a mature 32nm CPU from AMD is doing the same.

Spunjji - Tuesday, May 15, 2012 - link

...that makes sense if you're ignoring GPU performance. If you're not, this does indeed look pretty fantastic and is a frankly amazing turnaround from the folks that only very recently brought us Faildozer.

I'm not going to chime in with the "INTEL BIAS" blowhards about, but I do agree with mschira that this is a hell of a feat of engineering.

texasti89 - Tuesday, May 15, 2012 - link

"Intel's own 32nm part beats their 22nm part", how so?

CPU improvement (clk-per-clk) = 5-10%

GPU improvement around 200%

Power efficiency (for similar models) = 20-30% power reduction.

JarredWalton - Tuesday, May 15, 2012 - link

Just in case you're wondering, I might have access to some other hardware that confirms my feeling that IVB is using more power under light loads than SNB. Note that we're talking notebooks here, not desktops, and we're looking at battery life, not system power draw. So I was specifically referring to the fact that several SNB laptops were able to surpass the initial IVB laptop on normalized battery life -- nothing more.

vegemeister - Tuesday, May 15, 2012 - link

Speaking of which, why aren't you directly measuring system power draw? Much less room for error than relying on manufacturer battery specifications, and you don't have to wait for the battery to run down.

JarredWalton - Wednesday, May 16, 2012 - link

Because measuring system power draw introduces other variables, like AC adapter efficiency for one. Whether we're on battery power or plugged in, the reality is that BIOS/firmware can have an impact on these areas. While it may only be a couple watts, for a laptop that's significant -- most laptops now idle at less than 9W for example (unless they have an always on discrete GPU).

vegemeister - Wednesday, May 16, 2012 - link

You could measure on the DC side. And if you want to minimize non-CPU-related variation, it would be best to do these tests with the display turned off. At 100 nits you'll still get variation from the size of the display and the efficiency of the inverter and backlight arrangement.