Samsung Galaxy S III Performance Preview: It's Fast

by Brian Klug & Anand Lal Shimpi on May 3, 2012 6:13 PM EST

Earlier today Samsung unveiled its Galaxy S III, at the heart of which is Samsung's new Exynos 4 Quad SoC. Fortunately we got a ton of hands-on time with the device at Samsung's Unpacked event in London and are able to bring you a full performance preview of the new flagship, due to ship in Europe on May 29th.

The Exynos 4 Quad is an obvious evolution of the dual-core Exynos in many of the Galaxy S II devices. Built on Samsung's 32nm high-k + metal gate LP process, the new Exynos integrates four ARM Cortex A9s running at up to 1.4GHz (200MHz minimum clock). Each core can be power gated individually to prevent the extra cores from being a power burden in normal usage. Each core also operates on its own voltage and frequency plane, taking a page from Qualcomm's philosophies on clocking. There is no fifth companion core, but the assumption is Samsung's 32nm HK+MG LP process should have good enough leakage characteristics to reduce the need for such a design.

The GPU is still ARM's Mali-400/MP4, although we're not sure of its clocks. Similar to the dual-core Exynos, there's a dual-channel LPDDR2 memory controller that feeds the entire SoC. The combination should result in performance competitive with NVIDIA's Tegra 3 (and a bit higher in memory bandwidth limited scenarios), but potentially at lower power levels thanks to Samsung's 32nm process.

While we won't know much about the power side of things until we get a review device in hand, we can look at its performance today.

Browser & CPU Performance: Very Good

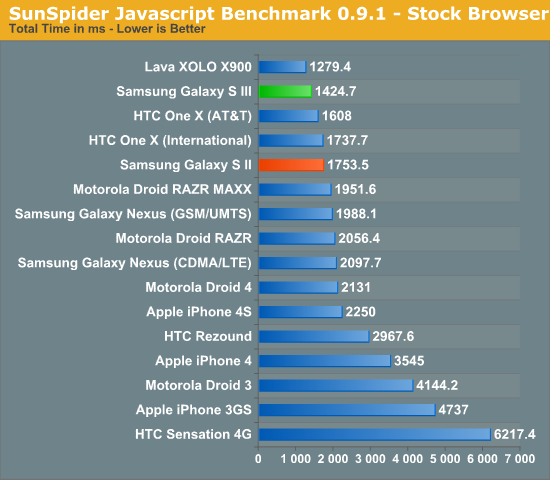

As always, we start with our JavaScript performance tests, which measure the combined performance of the hardware and the software on the device itself. SunSpider performance is extremely good:

While we thought we hit a performance wall around 1800ms, the One X from HTC, the Lava XOLO and now the Samsung Galaxy S III have reset the barrier for us. In this case the performance boost is likely more due to software than hardware, but the combination of the two results in performance that's better than almost anything we've seen thus far. The obvious exception is Intel's Medfield in the X900.

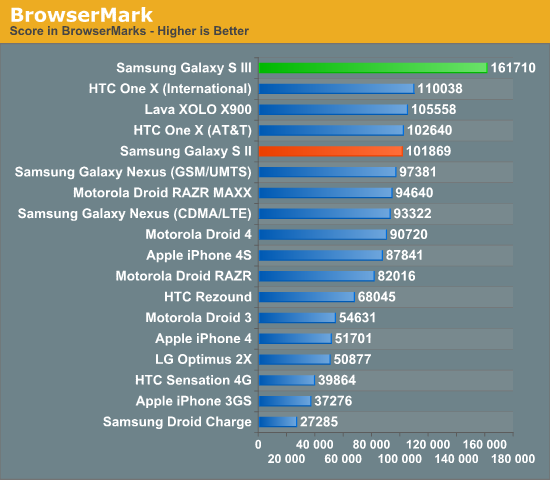

BrowserMark is another solid JavaScript benchmark, but here we're really able to see just how much tuning Samsung has done in its browser:

The Galaxy S III is significantly faster than anything else we've tested thus far. The browsing experience in general is very good on the SGS3, and the advantage here likely has more to do with Samsung's browser code and the fact that it's running Android 4.0.4 than with any inherent SoC advantage. We know how 1.4GHz Cortex A9s should perform, and this is clearly much better than that.

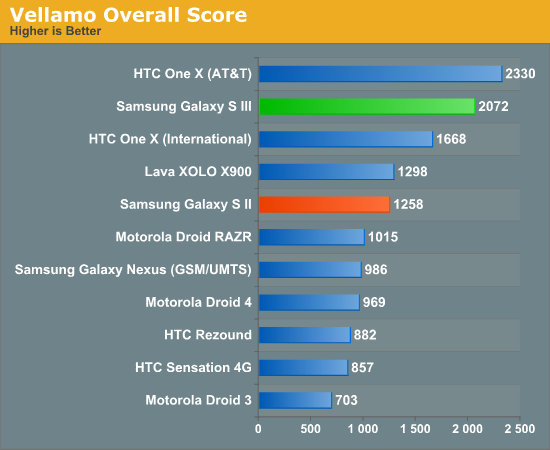

Once again we turn to Qualcomm's Vellamo to get an idea for browser and UI scrolling performance:

Although (understandably) not as quick as the Snapdragon S4 based One X, the SGS3 does extremely well here - likely due in no small part to whatever browser optimizations ship in Samsung's 4.0.4 build. As Brian put it when he first got time with the device: it's butter.

GPU Performance: Insanely Fast

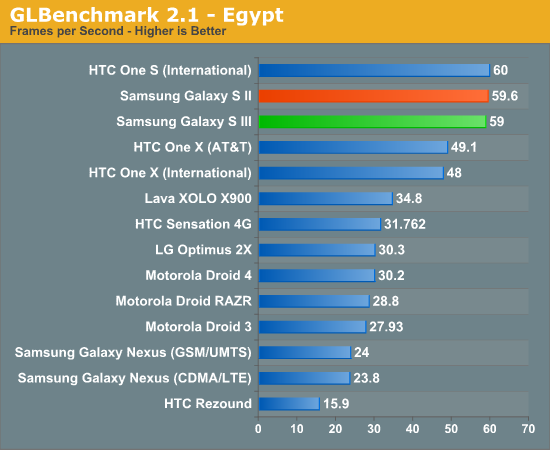

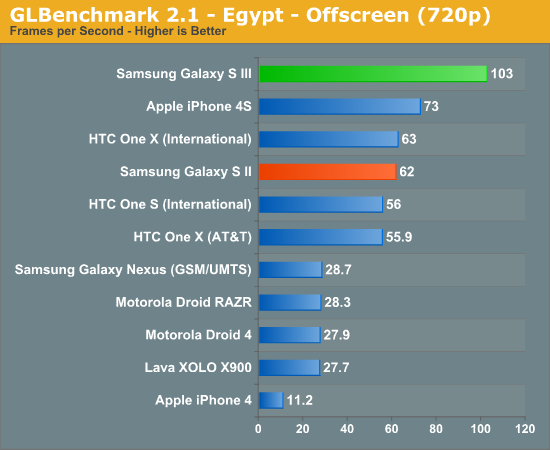

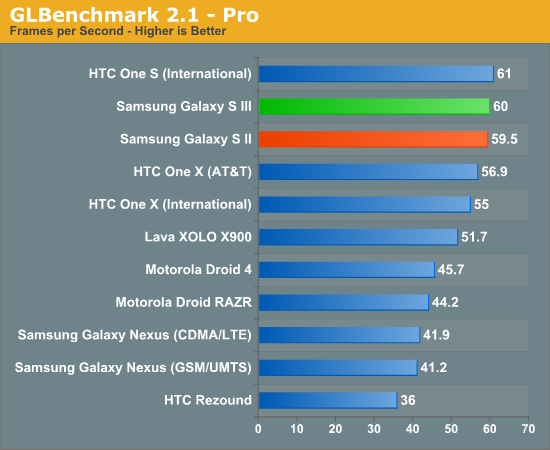

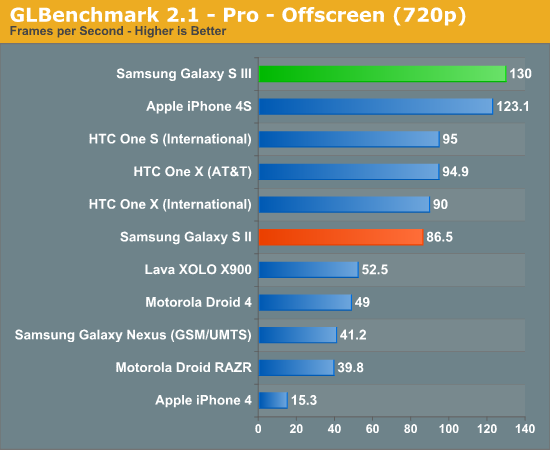

While we don't know the clocks of the Mali-400/MP4 GPU in the SGS3, it's obviously significantly quicker than its predecessor. Similar to what we saw when the Galaxy S II launched, Samsung once again takes the crown for fastest smartphone GPU in our performance tests.

The onscreen GLBenchmark Egypt and Pro results are understandably v-sync limited, but if you look at how much headroom is available thanks to the faster GPU, it's clear that the Galaxy S III should be able to handle newer, more complex games better than its predecessor.
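To make the v-sync point concrete, here is a minimal sketch of why a capped onscreen score can hide a large gap between two GPUs. It assumes a 60Hz display and a simplified model where the displayed frame rate is just the uncapped rate clamped to the refresh rate; the function names and the frame rates are hypothetical and are not our measured results.

```python
# Simplified model of a v-sync limited onscreen result.
# Assumptions: a 60 Hz panel, and a plain clamp to the refresh rate.
# The frame rates used below are hypothetical, not measured data.

REFRESH_HZ = 60.0  # assumed display refresh rate


def vsync_capped_fps(raw_fps: float, refresh_hz: float = REFRESH_HZ) -> float:
    """Frame rate the screen can show: never more than the refresh rate."""
    return min(raw_fps, refresh_hz)


def headroom(raw_fps: float, refresh_hz: float = REFRESH_HZ) -> float:
    """How many times over the refresh rate the GPU could render uncapped."""
    return raw_fps / refresh_hz


if __name__ == "__main__":
    examples = [("hypothetical older GPU", 45.0), ("hypothetical faster GPU", 90.0)]
    for name, raw in examples:
        capped = vsync_capped_fps(raw)
        print(f"{name}: onscreen {capped:.0f} fps, headroom {headroom(raw):.2f}x")
```

In this toy model, any GPU that can render above the refresh rate reports the same onscreen number, so the spare capacity only shows up as headroom for heavier workloads.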

What's particularly insane is that Samsung is able to deliver better performance than the iPhone 4S, the previous king-of-the-GPU-hill in these tests.

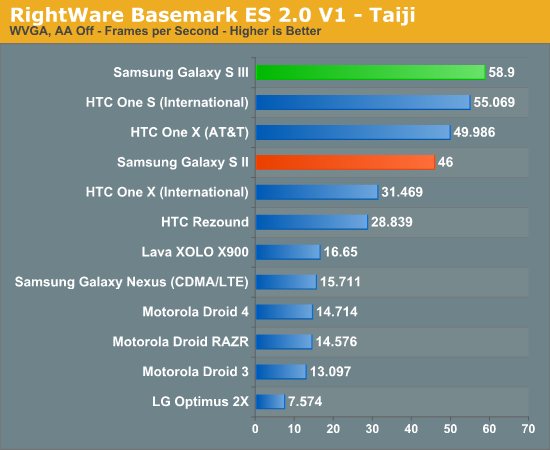

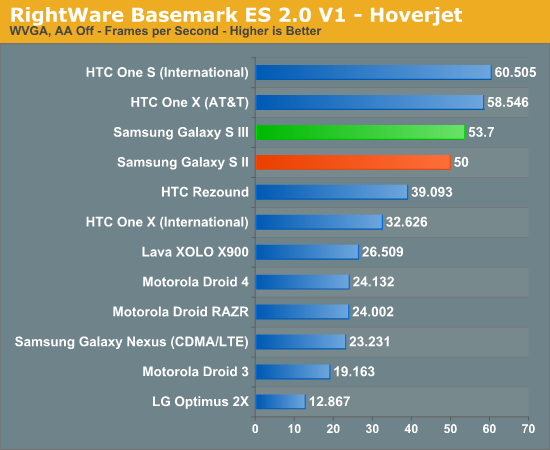

The performance advantage isn't anywhere near as staggering if we look at BaseMark ES 2.0; however, as we've mentioned before, this benchmark is definitely showing its age at this point. Despite the aggressive tuning Qualcomm has done for these benchmarks, Samsung is actually able to remain competitive and even pull out a slight win in the Taiji test. Both benchmarks are v-sync limited on the fastest platforms, however.

Final Words

Our first interactions with Samsung's Exynos 4 Quad are promising, but there's still much more to understand. Samsung clearly used 32nm as a means to higher GPU clock speeds, which in turn gives us much better GPU performance. The big unknown, as always, is power consumption. Based on what we've seen thus far from Samsung's 32nm LP process in Apple's iPad 2,4 (review forthcoming), Exynos 4 Quad should be a pretty good step forward in the power department as well.

As soon as we can get our hands on final hardware you can expect a full review of the Galaxy S III, including power and battery life analysis.

Initial reactions to the Galaxy S III announcement seemed almost disappointing. However, stay tuned for our hands-on impressions of the device as well as even more depth/detail on the hardware platform - you may be surprised.

92 Comments


Shri - Wednesday, May 9, 2012 - link

Yes.. Earlier release had a stuttering due to Sense 4.0..But a software update has made it all clear and is now butter smooth...

Seriously, I have nothing to do with such a high performance S3... Having a top notch score doesn't tell the whole story of a must buy phone.... Surely the look also counts.. First look will be the first impression.... And HTC One X has it, with decent scores and all round positives...

jayhawk11 - Thursday, May 3, 2012 - link

Looks like they aren't messing around with this new Mali-400. It will be interesting to see how Apple responds.

Aenean144 - Thursday, May 3, 2012 - link

A 32 nm A5X will be more than competitive. It's a good question whether Apple will choose to use it rather than a die shrunk A5 though. A higher clock A5 will match these benchmarks. An A5X will be 50% faster. Maybe even close to 100%.

B3an - Friday, May 4, 2012 - link

A5X with the same GPU is still too large and power hungry even at 32nm to go in to a phone. Look at the insanely large batteries already needed for iFad 3, a one node shrink isn't going to massively change the power usage enough. The only way they could possibly get that in a phone at 32nm is by using extremely low clocks. Then the GPU might still compare but the CPU would be much slower, even slower than the iPhone 4S for CPU performance. 32nm A5 is more likely, which I highly doubt would match the Exynos 4 Quad, at least on CPU performance.

Anaxarxes - Friday, May 4, 2012 - link

The reason why the new iPad needs such an enormous battery is its 2048*1536 screen. A5X is more power hungry than A5 of course, but only under high workload. At idle and even in small tasks, A5X equals A5 in terms of power consumption, if not beats it. So a 32nm A5X with little tweaks would take it dangerously close to the S3.

S3 is a monstrous phone both in size and in performance. I think Samsung used a bigger screen because:

-720p on a 4.2" display is harder and much more expensive to build due to high ppi.

-4,8 inch gives more room for the battery which the quad core CPU needs more than anything.

But I think that people might not want a bigger screen than the already oversized S2. But again, geeks tend to drool over specs more than usability, so it'll win their hearts.

B3an - Friday, May 4, 2012 - link

A5X uses way more power with that GPU. It also needs extra cooling added on top of the chip and still gets hotter.

And as proven many times with Tegra 3 already, quad core does not use more power. So once again you're wrong. And being as the S3 has the first 32nm quad core it will likely use considerably less power than the A5 and other dual-core SoCs.

The S2 wasn't at all oversized, it's also thinner than the iPhone, which helps compensate. It's funny you mention usability because the tiny midget screen the iPhone has is totally unacceptable and makes using it harder even if you don't have large hands. There's a reason nearly all other phones are using larger screens - because they're better!

Anaxarxes - Friday, May 4, 2012 - link

You just can't compare Tegra 3 with a normal quad core. Tegra is a hybrid product with a companion core that switches the quad cores on and off and simply becomes single core under idle load. The reason why A5X has a heat spreader is because of the quad core GPU. Under heavy load it becomes quite hot. But under normal load it is THE SAME CPU as the A5, if not further developed to use less power.

Hold an S2 with one hand and try to touch the upper cross corner of the screen with your thumb while firmly holding it in your palm. You just CAN'T. Because it's too big. And don't get me started with the Galaxy Note joke.

Do you think Apple sticks to 3,5" screen because they cannot develop a larger screen product? Do you seriously believe that?

Apple strategy is simple, really. Do something that makes sense for the average user. Design in a such way that the product just feels right ergonomically and aesthetically.

Samsung is more like an OEM. Pick the best shit out there and combine. There you go! The fastest phone with the brightest screen. No, thanks. I'd have a design that is thoroughly thought and developed.

SamsungAppleFan - Friday, May 4, 2012 - link

Do you really think Samsung didn't develop a 3.5 inch screen for their G series cause they couldn't? Do you seriously believe that? ;)

Apple stuck to 3.5 because that's what dictator Steve said is the perfect magical size. Now that people know better they're upping to 4 inches with the next iPhone. They're just following the trend like any other company. Wake up. Meeehhhh Meeehhh.

And unfortunately for you, every review of the Note was full of praise. Are you saying all those 5 million people who bought the Galaxy Note are fools? Hardly. Need a napkin? You are oozing your hate sauce everywhere.

Anaxarxes - Friday, May 4, 2012 - link

I'm not hating anything. I love competition, but Samsung's products are not for me.

Samsung couldn't have done a 3.5" galaxy simply because they could not have differentiated themselves enough to make a sale. If all specs and sizes are similar, who do you think will sell more, Apple or Samsung?

And yes 3.2-3.7" is still the best size for a smartphone for useability.

But keep in mind that without dictator Steve, you'd never have had Galaxy. You'd be typing with your 8mp camera/qwerty/ BlackJack.

Apple's screen size increase is purely battery limitation related. If there could be a 8Whr battery in the size of the current iPhone's battery, Apple would have never upped the screen size in the next gen.

I tend to be a fanboy of good products that have been designed well. Unfortunately Samsung products do not feel that way.

teldar - Saturday, May 5, 2012 - link

I'm 6'2", don't have huge hands for a guy my size. I have a droid X in an otter box case.

I use it ALL THE TIME.

I can hold it comfortably in one hand and get to anything I want to reach with that hand's thumb. Again, this is a 4.3" screen phone in an otter box.

Just because your hands are too small for a large screen phone and you don't want a large screen phone does not mean that nobody can or wants to use one.

Please try to keep in mind there is a difference between your preferences and everyone else's.

As far as the 5" screen goes, I have been saying for two years that I would like a 5" screen. I don't care that much about size as I was carrying a phone and a PDA until I got my X.

Bring on the 5" HTC flagship with the quad core S4 and Adreno 320 which is supposed to be released later this year.