Intel Z77 Panther Point Chipset and Motherboard Preview – ASRock, ASUS, Gigabyte, MSI, ECS and Biostar

by Ian Cutress on April 8, 2012 12:00 AM EST

Posted in: Motherboards, Intel, Biostar, MSI, Gigabyte, ASRock, Asus, Ivy Bridge, ECS, Z77

LucidLogix Virtu MVP Technology and HyperFormance

While not specifically a feature of the chipset, Z77 will be one of the first chipsets to use this remarkable new technology. LucidLogix was the brains behind the Hydra chip—a hardware/software combination solution to allow GPUs from different manufacturers to work together (as we reviewed the last iteration on the ECS P62H2-A). Lucid was also behind the original Virtu software, designed to allow a discrete GPU to remain idle until needed, and let the integrated GPU deal with the video output (as we reviewed with the ASUS P8Z68-V Pro). This time, we get to see Virtu MVP, a new technology designed to increase gaming performance.

To explain how Virtu MVP works, I am going to liberally use and condense what is said in the Lucid whitepaper on Virtu MVP. Everyone is free to read the original, which is a rather interesting ten pages.

The basic concept behind Virtu MVP is the relationship between how many frames per second the discrete GPU can calculate, against what is shown on the screen to the user, in an effort to increase the 'immersive experience'.

Each monitor has a refresh rate, typically 60 Hz or 75 Hz, or 120 Hz for 3D monitors (Hz = Hertz, or times ‘per second’). At 60 Hz, this means that 60 times per second the system will pull out what is in the frame buffer (the bit of the output that holds what the GPU has computed) and display it on the screen.

With standard V-Sync, the system will only pull out what is in the buffer at certain intervals, namely at factors of the base frequency (e.g. 60, 30, 20, 15, 12, 10, 6, 5, 4, 3, 2, or 1 for 60 Hz), depending on the monitor being used. The issue is what happens when the GPU is much faster (or slower) than the refresh rate.
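To make that list of intervals concrete, here is a minimal Python sketch that enumerates the whole-number factors of a refresh rate, taking the description above at face value. The function name `vsync_rates` is our own invention for illustration, and real drivers may behave differently.

```python
def vsync_rates(refresh_hz: int) -> list[int]:
    """Return every whole-number rate that divides refresh_hz evenly, highest first."""
    return [rate for rate in range(refresh_hz, 0, -1) if refresh_hz % rate == 0]


# Rates a 60 Hz display could settle on under this simplified model.
print(vsync_rates(60))
```

The same call with 75 or 120 gives the equivalent list for the other common refresh rates mentioned above.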

The key tenet of Lucid’s new technology is the term responsiveness. Responsiveness is a wide-ranging term which could mean many things. Lucid distils it into two key areas:

a) How many frames per second can the human eye see?

b) How many frames per second can the human hand respond to?

To clarify, these are NOT the same questions as:

i) How many frames per second do I need to make the motion look fluid?

ii) How many frames per second makes a movie stop flickering?

iii) What is the fastest frame (shortest time) a human eye would notice?

If the display refreshes at 60 Hz, and the game runs at 50 fps, would this need to be synchronized? Would a divisor of 60 Hz be better? Alternatively, perhaps if you were at 100 fps, would 60 fps be better? The other part of responsiveness is how a person deals with hand-to-eye coordination, and whether the human mind can correctly interpolate between a screen's refresh rate and the output of the GPU. While a ~25 Hz rate may be applicable for a human eye, the human hand can be as sensitive as 1000 Hz, and so having the correlation between hand movement and the eye is all-important for 'immersive' gaming.

Take the following scenarios:

Scenario 1: GPU is faster than Refresh Rate, VSync Off

Refresh rate: 60 Hz

GPU: 87 fps

Mouse/Keyboard responsiveness is 1-2 frames, or ~11.5 to 23 milliseconds

Effective responsiveness makes the game feel like it is between 42 and 85 FPS

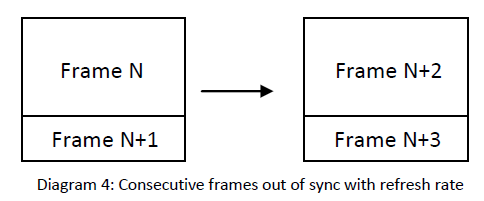

In this case, the GPU is 45% faster than the screen. This means that as the GPU fills the frame buffer, it will continuously be between frames when the display dumps the buffer contents on screen, such that parts of both the old frame and the new frame are in the buffer:

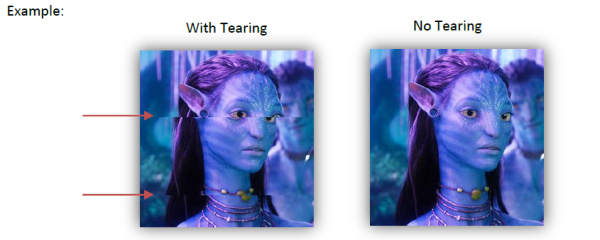

This is a phenomenon known as Tearing (which many of you are likely familiar with). Depending on the scenario you are in, tearing may be something you ignore, notice occasionally, or find rather annoying. For example:

So the question becomes, was it worth computing that small amount of frame N+1 or N+3?
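As a rough illustration of why the GPU is always caught between frames in Scenario 1, the sketch below steps through a few display refreshes and reports how far the GPU has got. It assumes a perfectly steady 87 fps and no V-Sync, which no real game delivers, and the function name `buffer_state_at_refresh` is hypothetical.

```python
import math


def buffer_state_at_refresh(gpu_fps: float, refresh_hz: float, refreshes: int):
    """For each display refresh, return (refresh number, frames the GPU has
    finished so far, fraction of the next frame already drawn).

    Assumes a perfectly steady GPU frame rate and V-Sync off.
    """
    states = []
    for k in range(1, refreshes + 1):
        frames_drawn = k * gpu_fps / refresh_hz  # frames produced by refresh k
        complete = math.floor(frames_drawn)
        partial = frames_drawn - complete
        states.append((k, complete, round(partial, 2)))
    return states


for refresh, complete, partial in buffer_state_at_refresh(87, 60, 4):
    print(f"refresh {refresh}: {complete} complete frame(s), "
          f"next frame {partial:.0%} drawn")
```

In this simplified model, the fraction of the next frame already drawn is roughly where the tear line would fall on screen, and a jump of two in the complete-frame count between refreshes marks a frame that was fully rendered but never shown on its own.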

Scenario 2: GPU is slower than Refresh Rate, VSync Off

Refresh rate: 60 Hz

GPU: 47 fps

Mouse/Keyboard responsiveness is 1-2 frames, or ~21.3 to 43 milliseconds

Effective responsiveness makes the game feel like it is between 25 and 47 FPS

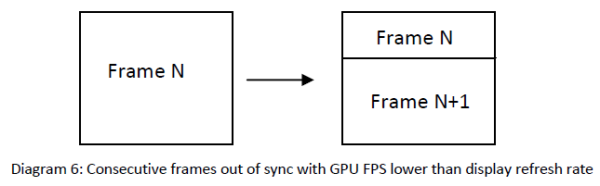

In this case, the GPU is ~22% slower than the screen (47 fps against 60 Hz). This means the GPU fills the frame buffer more slowly than the screen requests it, so it will continuously be between frames when the display dumps the buffer contents on screen, such that parts of both the old frame and the new frame are in the buffer.

So does this mean that for a better experience, computing frame N+1 was not needed, and N+2 should have been the focus of computation?
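The response windows quoted for Scenarios 1 and 2 follow directly from the frame rate, and can be checked with a few lines of Python. This is only a sketch of the arithmetic; the helper names `response_window_ms` and `speed_vs_display` are ours, not Lucid's.

```python
def response_window_ms(gpu_fps: float, frames: int) -> float:
    """Time in milliseconds the GPU needs to produce `frames` frames at gpu_fps."""
    return frames * 1000.0 / gpu_fps


def speed_vs_display(gpu_fps: float, refresh_hz: float) -> float:
    """GPU frame rate relative to the refresh rate, as a signed fraction."""
    return gpu_fps / refresh_hz - 1.0


for fps in (87, 47):
    low, high = response_window_ms(fps, 1), response_window_ms(fps, 2)
    print(f"{fps} fps: {low:.1f} to {high:.1f} ms, "
          f"{speed_vs_display(fps, 60):+.0%} relative to 60 Hz")
```

A one-to-two frame delay is therefore about 11.5 to 23 ms at 87 fps and about 21.3 to 42.6 ms at 47 fps.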

Scenario 3: GPU can handle the refresh rate, V-Sync On

This setting allows the GPU to synchronize to every frame. Now all elements of the system are synchronized to 60 Hz—CPU, application, GPU and display will aim for 60 Hz, but also at lower intervals (30, 20, etc.) as required.

While this produces the best visual experience with clean images, the input devices for haptic feedback are limited to the V-Sync rate. So while the GPU could enable more performance, this artificial setting is capping all input and output.

Result:

If the GPU is slower or faster than the display, there is no guarantee that the frame buffer drawn on the display holds a complete frame. A GPU has multiple frames in its pipeline, but only a few are ever displayed at high speeds, and the display catches the GPU in between frames when the GPU is slow. When the system is given a software limit, responsiveness decreases. Is there a way to take advantage of the increased power of systems while working with a limited refresh rate? Is there a way to ignore these redundant tasks to provide a more 'immersive' experience?

LucidLogix apparently has the answer…

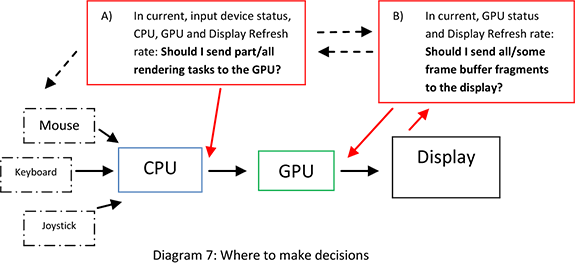

The answer from Lucid is Virtu MVP. Back in September 2011, Ryan gave his analysis on the principles of the solution. Due to patents, we are still restricted to a high-level explanation, as Ryan was back then. Nevertheless, it all boils down to the following image:

Situation (A) determines whether a rendering task/frame should be processed by the GPU, and situation (B) decides which frames should go to the display. (B) helps with tearing, while (A) better utilizes the GPU. Nevertheless, the GPU is doing multiple tasks—snooping to determine which frames are required, rendering the desired frame, and outputting to a display. Lucid is using hybrid systems (those with an integrated GPU and a discrete GPU) to overcome this.

Situation (B) is what Lucid calls its Virtual V-Sync, an adaptive V-Sync technology currently in Virtu. Situation (A) is an extension of this, called HyperFormance, designed to reduce input lag by only sending required work to the GPU rather than redundant tasks.

Within the hybrid system, the integrated GPU takes over two of the tasks for the GPU—snooping for required frames, and display output. This requires a system to run in i-Mode, where the display is connected to the integrated GPU. Users of Virtu on Z68 may remember this: back then it caused a 10% decrease in output FPS. This generation of drivers and tools should alleviate some of this decrease.

What this means for Joe Public

Lucid’s goal is to improve the 'immersive experience' by removing redundant rendering tasks, synchronizing the GPU with the refresh rate of the connected display, and reducing input lag.

By introducing a level of middleware that intercepts rendering calls, Virtual V-Sync and HyperFormance are both tools that decide whether a frame should be rendered and then delivered to the display. However, the FPS counter within a title counts frame calls, not completed frames. So as the software intercepts a call, the frame rate counter is increased whether the frame is rendered or not. This could lead to many unrendered frames and an artificially high FPS number, when in reality the software is merely optimizing the sequence of rendering tasks rather than increasing FPS.
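The gap between counted frame calls and rendered frames can be shown with a toy model. Lucid has not published how it picks frames, so the policy below (render only the newest frame call issued before each refresh) is purely our assumption for illustration, as is the function name `simulate_second`.

```python
def simulate_second(frame_calls: int, refresh_hz: int):
    """Count frame calls versus frames actually rendered over one second.

    Hypothetical policy (not Lucid's, which is unpublished): render only the
    most recent frame call issued before each display refresh; skip the rest.
    Frame calls are assumed to be evenly spaced across the second.
    """
    rendered = set()
    for k in range(1, refresh_hz + 1):
        # Index of the newest frame call issued by the time of refresh k.
        newest = (k * frame_calls) // refresh_hz
        if newest > 0:
            rendered.add(newest)
    reported_fps = frame_calls  # an in-game counter ticks on every call
    return reported_fps, len(rendered)


reported, drawn = simulate_second(frame_calls=200, refresh_hz=60)
print(f"counter reports {reported} fps, {drawn} frames actually rendered")
```

Under this toy policy, a title issuing 200 frame calls per second on a 60 Hz display would report 200 fps while only 60 frames are drawn, which is the kind of mismatch described above.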

If it helps the 'immersion factor' of a game (less tearing, more responsiveness), then it could be highly beneficial to gamers. Currently, Lucid has validated around 100 titles to work as intended. We spoke to Lucid (see next page), and they say that the technology should work with most, if not all titles. Users will have to add programs manually to take advantage of the technology if the software is not in the list. The reason for only 100 titles being validated is that each game has to be validated with a lot of settings, on lots of different kit, making the validation matrix huge (for example, 100 games x 12 different settings x 48 different system hardware configurations = time and lots of it).
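To put a number on that example validation matrix, the short sketch below multiplies it out. The ten minutes per run is a made-up figure for illustration only, not something Lucid has told us.

```python
games = 100
settings_per_game = 12
hardware_configs = 48

# Every game is tested at every setting on every hardware configuration.
test_runs = games * settings_per_game * hardware_configs
print(f"{test_runs:,} validation runs")

# Hypothetical figure, not from Lucid: assume each run takes 10 minutes.
minutes_per_run = 10
print(f"about {test_runs * minutes_per_run / 60:,.0f} hours of testing")
```

Even with a short test per combination, the example matrix comes to 57,600 runs.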

Virtu MVP causes many issues when it comes to benchmarking and comparison of systems as well. The method of telling the performance of systems apart has typically been the FPS values. With this new technology, the FPS value is almost meaningless as it counts the frames that are not rendered. This has consequences for benchmarking companies like Futuremark and overclockers who like to compare systems (Futuremark have released a statement about this). Technically all you would need to do (if we understand the software correctly) to increase your score/FPS would be to reduce the refresh rate of your monitor.

Since this article was started, we have had an opportunity to speak to Lucid regarding these technologies, and they have pointed out several usage scenarios that have perhaps been neglected in earlier reviews of this technology. On the next page, we will discuss what Lucid considers ‘normal’ usage.

145 Comments

extide - Tuesday, April 10, 2012 - link

Do you even know what it means to preempt a frame? Cavalcade is describing the technology correctly. He is explaining pretty much the same thing as you are but you just don't get it..

Also separate input and rendering modules means a lot. Typically a game engine will have a big loop that will check input, draw the frame, and restart (amongst other things of course) but to split that into two independent loops is what he is talking about.

Iketh - Wednesday, April 11, 2012 - link

You really should look up "preemption." This is not what is happening... CLOSE, but not quite. Preemption is not the right word at all. This makes him incorrect and I kindly tried explaining. You are incorrect in backing him up and then accusing me of being inept. Guess what that makes you?

On top of that, he's also not talking about splitting input and rendering into two loops. Not even close. How did you come up with this idea? He's asking how the input polling is affected with this technology. It is not, and can not, unless polling is strictly tied to framerate.

I want to be clear that I'm not for this technology. I think it won't offer any tangible benefits, especially if you're already over 100 fps, and they want to power up a second GPU in the process... I'm just trying to help explain how it's supposed to work.

Iketh - Sunday, April 8, 2012 - link

"handling input in a game engine" means nothing here. What matters is when your input is reflected in a rendered image and displayed on your monitor. That involves the entire package. Lucid basically prevents GPUs from rendering an image that won't get displayed in its entirety, allowing the GPU to begin work on the next image, effectively narrowing the gap from your input to the screen.

Iketh - Sunday, April 8, 2012 - link

mistake post, sorry

Ryan Smith - Sunday, April 8, 2012 - link

The bug comment is in regards to HyperFormance. Virtual V-Sync is rather simple (it's just more buffers) and should not introduce rendering errors.

Ryan Smith - Sunday, April 8, 2012 - link

Virtual V-Sync is totally a glorified triple buffering, however this is a good thing.

http://images.anandtech.com/reviews/video/triplebu...

Triple buffering as we know it - with 2 back buffers and the ability to disregard a buffer if it's too old - doesn't exist in most DirectX games and can't be forced by the video card. Triple buffering as implemented for most DirectX games is a 3 buffer queue, which means every frame drawn is shown, and the 3rd buffer adds another frame of input lag.

On paper (note: I have yet to test this), Virtual V-Sync should behave exactly like triple buffering. The iGPU back buffer allows Lucid to accept a newer frame regardless of whether the existing frame has been used or not, as opposed to operating as a queue. This has the same outcome as triple buffering, primarily that the GPU never goes idle due to full buffers and there isn't an additional frame of input lag.

The overhead of course remains to be seen. Lucid seems confident, but this is what benchmarking is for. But should it work, I'd be more than happy to see the return of traditional triple buffering.

HyperFormance is another matter of course. Frame rendering time prediction is very hard. The potential for reduced input lag is clear, but this is something that we need to test.

DanNeely - Monday, April 9, 2012 - link

Lucid was very confident in their Hydra solution; but it never performed even close to SLI/xFire; and after much initial hype being echoed by the tech press it just disappeared. I'll believe they have something working well when I see it; but not before.

JNo - Monday, April 9, 2012 - link

This

vailr - Sunday, April 8, 2012 - link

Page 8 quote: "The VRM power delivery weighs in at 6 + 4 phase, which is by no means substantial (remember the ASRock Z77 Extreme4 was 8 + 4 and less expensive)."

Yet: the "Conclusions" chart (page 14) shows the same board having 10 + 4 power.

Which is correct?

flensr - Sunday, April 8, 2012 - link

I'm bummed that ASUS didn't include mSATA connectors. Small mSATA SSDs would make for great cache or boot drives with no installation hassles and they're pretty cheap and available at the low capacities you'd want for a cache drive. That's a feature I will be looking for with my next mobo purchase.

Ditching USB 2.0 is also one of the next steps I'll be looking for. Not having to spend a second thinking about which port to plug something in to will be nice once USB 2.0 is finally laid to rest. Having only 4 USB 3.0 ports is stupidly low this long after the release of the standard, and it's hampering the development of USB 3.0 devices.

Finally, I've been repeatedly impressed by my Intel NICs over the last decade. They simply perform faster and more reliably than the other chips. I look for an Intel NIC when I shop for mobos.