The Xeon E5-2600: Dual Sandy Bridge for Servers

by Johan De Gelas on March 6, 2012 9:27 AM EST- Posted in

- IT Computing

- Virtualization

- Xeon

- Opteron

- Cloud Computing

LS-DYNA

LS-DYNA is a "general purpose structural and fluid analysis simulation software package capable of simulating complex real world problems", developed by the Livermore Software Technology Corporation (LSTC). It is used by the automobile, aerospace, construction, military, manufacturing and bioengineering industries. Even simple simulations take hours to complete, so even a small performance increase results in tangible savings. In addition, many of our readers have been asking us to benchmark HPC workloads. Reasons enough to include our own LS-DYNA benchmarking.

These numbers are not directly comparable with AMD's and Intel's benchmarks, as we did not perform any special tuning besides using the message passing interface (MPI) version of LS-DYNA (ls971_mpp_hpmpi) to run the solver for maximum scalability. This is the HP-MPI version of LS-DYNA 9.71.

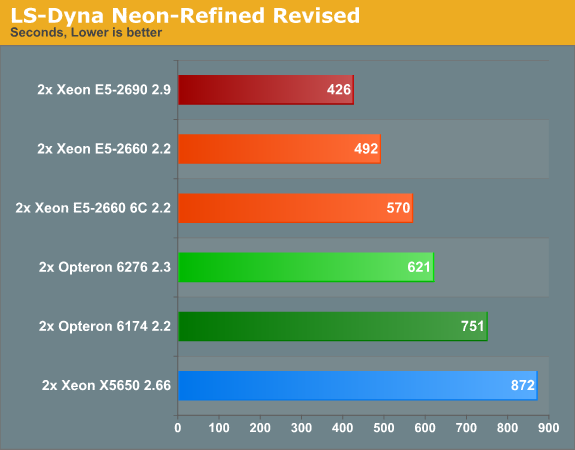

Our first test is a refined revised Neon crash test simulation.

This is one of the few benchmarks (besides SAP) where the Opteron 6276 outperforms the older Opteron 6174 by a tangible margin (about 20% faster) and is significantly faster than the Xeon 5600, by 40% to be more precise. However, the direct competitor of the 6276, the Xeon E5-2630, will do a bit better (see the E5-2660 6C score). When you are aiming for the best performance, it is impossible to beat the best Xeons: the Xeon E5-2660 offers 26% better performance, and the E5-2690 is 46% faster. It is interesting to note that LS-DYNA does not scale well with clockspeed: the 32% higher clockspeed of the Xeon E5-2690 results in only a 15% speed increase.

A few other interesting things to note: we saw only a very small performance increase (+5%) due to Hyper-Threading. Memory bandwidth does not seem to be critical either, as performance increased by only 6% when we replaced DDR3-1333 with DDR3-1600. If LS-DYNA were severely bottlenecked by memory speed, we should have seen a performance increase close to 20% (1600 vs 1333).
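To make the scaling argument concrete, the short Python sketch below divides each observed speedup by the resource increase that produced it. The helper name `scaling_efficiency` is ours and purely illustrative; the inputs are the percentages quoted in the text, not new measurements.

```python
def scaling_efficiency(resource_gain_pct: float, perf_gain_pct: float) -> float:
    """Return the fraction of a resource increase that shows up as speedup."""
    if resource_gain_pct <= 0:
        raise ValueError("resource gain must be positive")
    return perf_gain_pct / resource_gain_pct


# Clockspeed: 32% higher clock on the E5-2690 gave a 15% speed increase.
clock_eff = scaling_efficiency(32.0, 15.0)

# Memory: DDR3-1600 vs DDR3-1333 is roughly a 20% higher transfer rate,
# yet performance rose by only 6%.
ddr3_gain_pct = (1600 / 1333 - 1) * 100
memory_eff = scaling_efficiency(ddr3_gain_pct, 6.0)

print(f"clock scaling efficiency:  {clock_eff:.2f}")
print(f"memory scaling efficiency: {memory_eff:.2f}")
```

A value of 1.0 would mean performance tracks the resource perfectly. Both ratios come out well below that, which is the basis for saying that neither clockspeed nor memory speed alone limits this workload.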

CMT boosted the Opteron 6276's performance by up to 33%, which seems weird at first since LS-DYNA is a typical floating point intensive application. As the shared floating point unit "outsources" loads and stores to the integer cores, the most logical explanation is that LS-DYNA is limited by the load/store bandwidth. This is in sharp contrast with, for example, 3DS Max, where the additional overhead of 16 extra threads slowed the shared FP down instead of speeding it up.

Also, both CPU families seem to have made good use of their turbo capabilities. The AMD Opteron was running at 2.6 GHz most of the time, the Xeon E5-2690 at 3.3 GHz and the Xeon E5-2660 at 2.6 GHz.

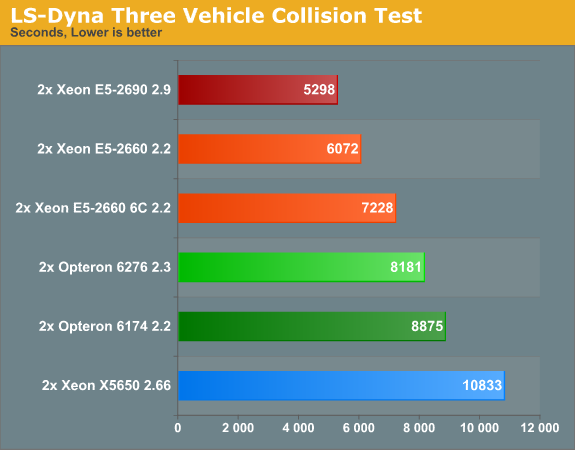

The second test is the "Three Vehicle Collision Test" simulation, which runs a lot longer.

The three vehicle collision test does not change the benchmarking picture; it confirms our earlier findings. The Opteron Interlagos does well, but the Xeon E5 is the new HPC champion.

81 Comments

JohanAnandtech - Wednesday, March 7, 2012 - link

Argh. You are absolutely right. I reversed all divisions. I am fixing this as we type. Luckily this does not alter the conclusion: LS-DYNA does not scale with clockspeed very well.

alpha754293 - Wednesday, March 7, 2012 - link

I think that I might have an answer for you as to why it might not scale well with clock speed.

When you start a multiprocessor LS-DYNA run, it goes through a stage where it decomposes the problem (through a process called recursive coordinate bisection (RCB)).
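As a rough illustration of what recursive coordinate bisection does, here is a minimal Python sketch. It is a generic textbook-style version, not LS-DYNA's actual implementation: it repeatedly splits a set of points along the axis with the largest extent until there is one group per process. The function name `rcb` and the sample grid are hypothetical.

```python
def rcb(points, n_parts):
    """Split points (a list of coordinate tuples) into n_parts groups
    of near-equal size by recursive coordinate bisection."""
    if n_parts < 1 or len(points) < n_parts:
        raise ValueError("need at least one point per part")
    if n_parts == 1:
        return [points]

    # Pick the coordinate axis along which the points are most spread out.
    dims = len(points[0])
    axis = max(
        range(dims),
        key=lambda d: max(p[d] for p in points) - min(p[d] for p in points),
    )

    # Sort along that axis and cut so each side gets its share of the parts.
    ordered = sorted(points, key=lambda p: p[axis])
    left_parts = n_parts // 2
    cut = len(ordered) * left_parts // n_parts

    return (rcb(ordered[:cut], left_parts)
            + rcb(ordered[cut:], n_parts - left_parts))


# Hypothetical example: an 8 x 8 grid of nodes split across 4 processes.
grid = [(x, y) for x in range(8) for y in range(8)]
domains = rcb(grid, 4)
sizes = [len(d) for d in domains]
print(sizes)
```

Note that this sketch, like the decomposition stage described in the comment, runs on a single core: the splitting is serial work that has to finish before the parallel solve can begin.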

This decomposition phase is done every time you start the run, and it only runs on a single processor/core. So, suppose that you have a dual-socket server where the processors say...are hitting 4 GHz. That can potentially be faster than say if you had a four-socket server, but each of the processors are only 2.4 GHz.

In the first case, you have a small number of really fast cores (and so it will decompose the domain very quickly), whereas in the latter, you have a large number of much slower cores, so the decomposition will happen slowly, but it MIGHT be able to solve the rest of it slightly faster (to make up for the difference) just because you're throwing more hardware at it.
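The trade-off described above can be sketched with a simple Amdahl-style model in Python. Everything numeric here is an assumption chosen for illustration: the work units, the 8 cores per socket, and the idealized perfect scaling of the solve phase. None of it was measured on the systems in the article.

```python
def run_time(decomp_work, solve_work, clock_ghz, cores):
    """Return (decomposition time, solve time) in arbitrary units.

    Decomposition is serial, so it depends on clock speed only.
    The solve phase is assumed to scale perfectly across all cores.
    """
    decomp = decomp_work / clock_ghz
    solve = solve_work / (clock_ghz * cores)
    return decomp, solve


# Hypothetical workload: 100 units of serial decomposition,
# 10,000 units of parallel solver work.
DECOMP_WORK, SOLVE_WORK = 100.0, 10_000.0

# Dual-socket at 4 GHz vs four-socket at 2.4 GHz, assuming 8 cores per socket.
dual_decomp, dual_solve = run_time(DECOMP_WORK, SOLVE_WORK, 4.0, 16)
quad_decomp, quad_solve = run_time(DECOMP_WORK, SOLVE_WORK, 2.4, 32)

dual_total = dual_decomp + dual_solve
quad_total = quad_decomp + quad_solve

print(f"dual-socket: decomp {dual_decomp:.1f}, solve {dual_solve:.1f}, total {dual_total:.1f}")
print(f"quad-socket: decomp {quad_decomp:.1f}, solve {quad_solve:.1f}, total {quad_total:.1f}")
```

With these made-up numbers the slower four-socket machine loses time in decomposition but recovers it in the solve phase. Shifting the ratio of serial to parallel work changes which system comes out ahead, which is why the serial stage muddies any clock speed comparison.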

Here's where you can do a little more experimenting if you like.

Using the pfile (command line option/flag 'p=file'), not only can you control the decomposition method, but you can also tell it to write the decomposition to a file.

So had you had more time, what I would have probably done is written out the decompositions for all of the various permutations you're going to be running. (n-cores, m-number of files.)

When you start the run, instead of it having to decompose the problem over and over again each time it starts, you just use the decomposition that it's already done (once), and that way you would only be testing PURELY the solving part of the run, rather than from beginning to end. (That isn't to say that the results you've got are bad - it's good data.) But that should help to take more variables out of the equation when it comes to why it doesn't scale well with clock speed. (It should.)

IntelUser2000 - Tuesday, March 6, 2012 - link

Please refrain from creating flamebait in your posts. Your post is almost like spam, almost no useful information is there. If you are going to love one side, don't hate the other.

Alexko - Tuesday, March 6, 2012 - link

It's not "like spam", it's just plain spam at this point. A little ban + mass delete combo seems to be in order, just to clean up this thread, and probably others.

ultimav - Wednesday, March 7, 2012 - link

My troll meter is reading off the charts with this guy. Reading between the lines, he's actually a hardcore AMD fan trying to come across as the Intel version of Sharikou to paint Intel fans in a bad light. Pretty obvious actually.

JohanAnandtech - Wednesday, March 7, 2012 - link

We had to mass delete his posts as they indeed did not contain any useful info and were full of insults. The signal to noise ratio has been good the last years, so we must keep it that way.

Inteluser2000, Alexko, Ultimav, tipoo: thx for helping to keep the tone civil here. Appreciate it.

- Johan.

tipoo - Wednesday, March 7, 2012 - link

And thank you for removing that stuff.

tipoo - Tuesday, March 6, 2012 - link

We get it. Don't spam the whole place with the same post.

tipoo - Tuesday, March 6, 2012 - link

No, he's just a rational person. I don't care which company you like, if you say the same thing 10 times in one article someone's sure to get annoyed and with justification.

MySchizoBuddy - Tuesday, March 6, 2012 - link

I'm again requesting that when you do the benchmarks, please include a performance-per-watt metric along with stress testing by running folding@home for 48 hours straight.