Apple iPhone 4S: Thoroughly Reviewed

by Anand Lal Shimpi & Brian Klug on October 31, 2011 7:45 PM EST

Posted in: Smartphones, Apple, Mobile, iPhone, iPhone 4S

WiFi Performance

Apple has upgraded WLAN connectivity on the 4S, though the improvement isn’t quite as dramatic as I was hoping for. The 4S uses BCM4330, Broadcom’s newest WLAN, Bluetooth, and FM combo chip (though the FM radio still isn’t used). We’ve seen this particular combo chip in the Samsung Galaxy S2, and no doubt BCM4330 will start popping up in a lot more places where its predecessor, BCM4329, was used: everything from the iPhone 3GS to the 4, plus virtually innumerable Android devices. BCM4330 brings Bluetooth 4.0 support, whereas BCM4329 was Bluetooth 2.1, and still includes the same 802.11b/g/n (2.4 GHz, single spatial stream) connectivity as its predecessor, including support for only 20 MHz channels (HT20). I was hoping the 4S would also include 5 GHz support after seeing the SGS2 include it, but the 4S is still 2.4 GHz only.

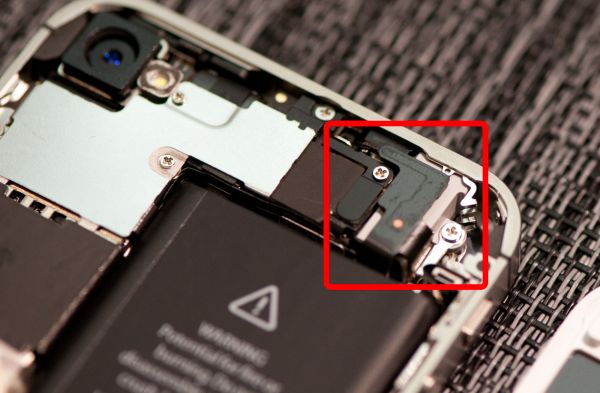

Encircled in red: The iPhone 4S' 2.4 GHz WiFi+BT Antenna

In addition, the 4S locates the WiFi antenna in the same place as the CDMA iPhone 4. If you missed it back then, and have read the previous cellular connectivity section, you’re probably wondering where the WiFi and Bluetooth antennas went, given the absence of a stainless steel band for them. The answer is inside, printed on a flex board, like virtually everyone else does for their cellular antennas. It’s noted on the FCC-submitted schematic, but I also opened up the 4S I purchased and grabbed a picture.

Left: iPhone 4S with WiFi RSSI circled, Right: iPhone 4

Given the small size of this antenna, you might be misled into thinking it has worse sensitivity or isotropy. Interestingly, that is not the case. In informal side-by-side testing I saw slightly better received signal strength on the 4S than on the 4, and upon checking the FCC documents learned that the 4S’ WLAN antenna has a peak gain of –1.5 dBi compared to –1.89 dBi on the 4, making it slightly better than the previous model. That said, the two devices have approximately the same EIRP (Equivalent Isotropically Radiated Power) for transmit when you actually work the math out.
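
For readers who want to see how the EIRP math works, here is a minimal Python sketch. EIRP in the log domain is simply conducted transmit power plus antenna gain. The two peak gain figures come from the FCC documents cited above; the conducted power value is a made-up placeholder for illustration, not a number from either filing, and the function names are our own.

```python
def eirp_dbm(conducted_power_dbm: float, antenna_gain_dbi: float) -> float:
    """EIRP in dBm: conducted transmit power (dBm) plus antenna gain (dBi)."""
    return conducted_power_dbm + antenna_gain_dbi


def db_to_ratio(db: float) -> float:
    """Convert a difference in dB to a linear power ratio."""
    return 10 ** (db / 10)


# Peak WLAN antenna gains quoted in the article.
GAIN_4S_DBI = -1.5
GAIN_4_DBI = -1.89

# ASSUMPTION: placeholder conducted power, NOT taken from the FCC filings.
HYPOTHETICAL_TX_DBM = 16.0

# How much better the 4S antenna is, in dB and as a linear power ratio.
gain_delta_db = GAIN_4S_DBI - GAIN_4_DBI
gain_delta_ratio = db_to_ratio(gain_delta_db)

# EIRP each phone would radiate if both used the same placeholder power.
eirp_4s = eirp_dbm(HYPOTHETICAL_TX_DBM, GAIN_4S_DBI)
eirp_4 = eirp_dbm(HYPOTHETICAL_TX_DBM, GAIN_4_DBI)

print(f"Antenna gain advantage: {gain_delta_db:.2f} dB ({gain_delta_ratio:.3f}x)")
print(f"EIRP at equal conducted power: 4S {eirp_4s:.2f} dBm, 4 {eirp_4:.2f} dBm")
```

The gain difference works out to 0.39 dB, or roughly 9 percent in linear terms. If the two phones really do land at about the same EIRP, the arithmetic implies the 4S would be driving its antenna with correspondingly less conducted power, though the actual figures should be taken from the filings rather than from this sketch.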

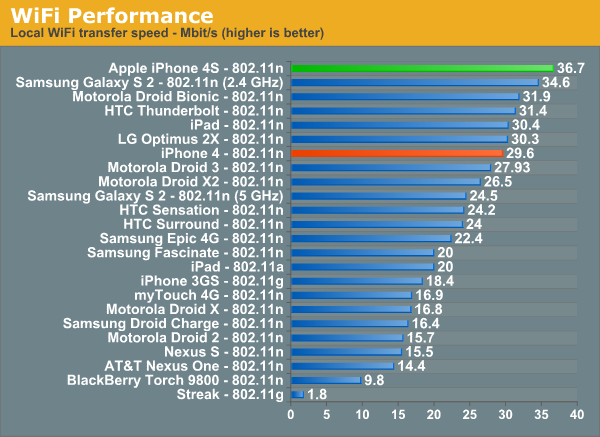

Moving to a newer WLAN combo chip helps speed WiFi throughput up considerably in our test, though I’m starting to think that the bigger boost is actually thanks in part to a faster SoC. As a reminder, this test consists of loading a 100MB PDF hosted locally over 802.11n (Airport Extreme Gen.5), with throughput measured on the server. MobileSafari loads the PDF document in its entirety before rendering it, so we’re really seeing WiFi throughput.
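
The throughput figure in a test like this boils down to bytes transferred divided by transfer time. A rough Python sketch of that arithmetic follows; the 40 second transfer time is a hypothetical value chosen only to make the example concrete, and treating "100MB" as 100 million bytes is also an assumption on our part.

```python
def throughput_mbps(bytes_transferred: int, seconds: float) -> float:
    """Average throughput in megabits per second (1 Mbps = 1,000,000 bits/s)."""
    if seconds <= 0:
        raise ValueError("transfer time must be positive")
    return bytes_transferred * 8 / seconds / 1_000_000


# ASSUMPTION: "100MB" interpreted as 100 * 10^6 bytes.
FILE_BYTES = 100 * 1_000_000

# ASSUMPTION: hypothetical transfer time, not a measured result.
HYPOTHETICAL_SECONDS = 40.0

example_mbps = throughput_mbps(FILE_BYTES, HYPOTHETICAL_SECONDS)
print(f"{example_mbps:.1f} Mbps")
```

Because the timing is taken on the server side, the number reflects how fast the phone pulled the file over the air rather than how long the browser took to draw it.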

GPS

The iPhone 4 previously used a BCM4750 single chip GPS receiver, and shared the 2.4 GHz WiFi antenna as shown many times in diagrams. We reported with the CDMA iPhone 4 that Qualcomm’s GPS inside MDM6600 was being used in place of some discrete solution, and showed a video demonstrating its improved GPS fix. I suspected at the time that the CDMA iPhone 4 might be using GLONASS from MDM6600 (in fact, the MDM6600 amss actually flashed onto the CDMA iPhone 4 includes many GLONASS references), but never was able to concretely confirm it was actually being used.

MDM6610 inside the 4S inherits the same Qualcomm GNSS (Global Navigation Satellite System) Gen8 support, namely GPS and its Russian equivalent, GLONASS. The two can be used in conjunction at the same time and deliver a more reliable 3D fix onboard MDM6610, which is what the 4S does indeed appear to be using. GPS and GLONASS are functionally very similar, and combined support for GPS and GLONASS at the same time is something most modern receivers offer now. There are even receivers which support the EU’s standard, Galileo, though it isn’t completed yet. This time around, Apple is being direct about its inclusion of GLONASS. The GPS inside MDM6610 fully supports standalone mode, and assisted mode from UMTS, GSM, OMA, and gpsOneXTRA.

Just like with the CDMA iPhone 4, I drove around and recorded a video to illustrate GPS performance, since unfortunately iDevices still don’t expose raw GPS NMEA data. The 4S shows a very constant error radius circle in the Maps application and little deviation while traveling, whereas the 4 sometimes wanders and its reported horizontal accuracy and velocity fluctuate. The 4S also reports the present position in the proper lane the whole time, while the 4 is slightly shifted. I don’t think many people complained about GPS performance on the 4, but both time to fix and overall precision are without a doubt improved over the GSM/UMTS 4. Subjectively, indoor performance seems much improved, and I’ve noticed that the iPhone 4S reports slightly better horizontal accuracy than the 4 indoors (using MotionX-GPS on iOS). Unfortunately we can’t perform much more analysis since, again, real NMEA data isn’t exposed on iOS; instead, location is abstracted away behind Apple’s location services APIs.

Noise Cancelation

The iPhone 4 included a discrete Audience noise processor and second microphone for doing some advanced common mode noise rejection. This reduced the amount of background noise audible to other parties when calling from a noisy environment, and is a feature that virtually all of this latest generation of smartphones has included. The 4S still includes that second microphone (up at the top, right next to the headset jack), though the discrete Audience IC is gone. It’s possible that Audience has been integrated into the A5 SoC itself, or elsewhere, or the 4S is using Qualcomm’s Fluence noise cancelation. I spent considerable time digging around and couldn’t find anything conclusive to indicate one possible situation over the other.

We recently started measuring noise rejection by placing a call between a phone under test and another phone connected to line-in on an audio card, then ramping volume up and talking into the handset. The 4S doesn’t get spared this treatment, and I’ve also included the 4 and 3GS (which has no such common mode noise rejection) for comparison.

Subjectively, the 4S has further improved ambient noise rejection over the 4. I ran this test twice to make sure it wasn’t a fluke, and indeed the 4S subjectively has less noticeable ambient noise than the 4 even at absurd volume levels.

We’ve also placed the usual test calls to the local ASOS weather station and recorded the output. I can’t detect any difference in line-out quality of the voice call for better or worse, at least on GSM/UMTS. I’d expect the 4S to offer exactly the same quality on CDMA as the CDMA iPhone 4.

One thing I should note is that there does seem to be a bit more perceptible line noise on the 4S’ earpiece when on phone calls. It isn’t a huge difference, but there is definitely a bit more background noise on the 4S earpiece than the 4 in calls. The original 4S that Anand purchased had a noticeable and distracting amount of background noise, though swapping that unit out seems to have somewhat mitigated the problem (he still complains of audible cracking via the earpiece during calls). I’ve tested enough iPhone 4 handsets (and been through several) to know that there is a huge amount of variance in earpiece quality (one of mine had an earpiece that sounded saturated/overmodulated at every volume setting), so I wager this might have been what was going on.

199 Comments

doobydoo - Friday, December 2, 2011 - link

It's still absolute nonsense to claim that the iPhone 4S can only use '2x' the power when it has available power of 7x.

Not only does the iPhone 4S support wireless streaming to TVs, making performance very important, there are also games ALREADY out which require this kind of GPU in order to run fast on the superior resolution of the iPhone 4S.

Not only that, but you failed to take into account the typical life-cycle of iPhones - this phone has to be capable of performing well for around a year.

The bottom line is that Apple really got one over all Android manufacturers with the GPU in the iPhone 4S - it's the best there is, in any phone, full stop. Trying to turn that into a criticism is outrageous.

PeteH - Tuesday, November 1, 2011 - link

Actually it is about the architecture. How GPU performance scales with size is in large part dictated by the GPU architecture, and Imagination's architecture scales better than the other solutions.

loganin - Tuesday, November 1, 2011 - link

And I showed it above Apple's chip isn't larger than Samsung's.

PeteH - Tuesday, November 1, 2011 - link

But chip size isn't relevant, only GPU size is.

All I'm pointing out is that not all GPU architectures scale equivalently with size.

loganin - Tuesday, November 1, 2011 - link

But you're comparing two different architectures here, not two carrying the same architecture so the scalability doesn't really matter. Also is Samsung's GPU significantly smaller than A5's?

Now we've discussed back and forth about nothing, you can see the problem with Lucian's argument. It was simply an attempt to make Apple look bad and the technical correctness didn't really matter.

PeteH - Tuesday, November 1, 2011 - link

What I'm saying is that Lucian's assertion, that the A5's GPU is faster because it's bigger, ignores the fact that not all GPU architectures scale the same way with size. A GPU of the same size but with a different architecture would have worse performance because of this.

Put simply, architecture matters. You can't just throw silicon at a performance problem to fix it.

metafor - Tuesday, November 1, 2011 - link

Well, you can. But it might be more efficient not to. At least with GPU's, putting two in there will pretty much double your performance on GPU-limited tasks.

This is true of desktops (SLI) as well as mobile.

Certain architectures are more area-efficient. But the point is, if all you care about is performance and can eat the die-area, you can just shove another GPU in there.

The same can't be said of CPU tasks, for example.

PeteH - Tuesday, November 1, 2011 - link

I should have been clearer. You can always throw area at the problem, but the architecture dictates how much area is needed to add the desired performance, even on GPUs.

Compare the GeForce and the SGX architectures. The GeForce provides an equal number of vertex and pixel shader cores, and thus can only achieve theoretical maximum performance if it gets an even mix of vertex and pixel shader operations. The SGX on the other hand provides general purpose cores that can do either vertex or pixel shader operations.

This means that as the SGX adds cores its performance scales linearly under all scenarios, while the GeForce (which adds a vertex and a pixel shader core as a pair) gains only half the benefit under some conditions. Put simply, if a GeForce core is limited by the number of pixel shader cores available, the addition of a vertex shader core adds no benefit.

Throwing enough core pairs onto silicon will give you the performance you need, but not as efficiently as general purpose cores would. Of course a general purpose core architecture will be bigger, but that's a separate discussion.

metafor - Tuesday, November 1, 2011 - link

I think you need to check your math. If you double the number of cores in a Geforce, you'll still gain 2x the relative performance.

Double is a multiplier, not an adder.

If a task was vertex-shader bound before, doubling the number of vertex-shaders (which comes with doubling the number of cores) will improve performance by 100%.

Of course, in the case of 543MP2, we're not just talking about doubling computational cores.

It's literally 2 GPU's (I don't think much is shared, maybe the various caches).

Think SLI but on silicon.

If you put 2 Geforce GPU's on a single die, the effect will be the same: double the performance for double the area.

Architecture dictates the perf/GPU. That doesn't mean you can't simply double it at any time to get double the performance.

PeteH - Tuesday, November 1, 2011 - link

But I'm not talking about relative performance, I'm talking about performance per unit area added. When bound by one operation, adding a core that supports a different operation is wasted space.

So yes, doubling space always doubles relative performance, but adding 20 square millimeters means different things to the performance of different architectures.