The SandForce Roundup: Corsair, Kingston, Patriot, OCZ, OWC & MemoRight SSDs Compared

by Anand Lal Shimpi on August 11, 2011 12:01 AM EST

Random Read/Write Speed

The four corners of SSD performance are as follows: random read, random write, sequential read and sequential write speed. Random accesses are generally small in size, while sequential accesses tend to be larger, which is why we run four Iometer tests in all of our reviews.

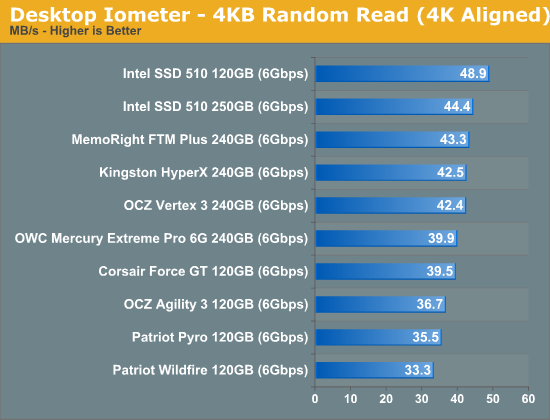

Our first test writes 4KB in a completely random pattern over an 8GB space of the drive to simulate the sort of random access that you'd see on an OS drive (even this is more stressful than a normal desktop user would see). I perform three concurrent IOs and run the test for 3 minutes. The results reported are in average MB/s over the entire time. We use both standard pseudo-randomly generated data and fully random data for each write to show you both the maximum and minimum performance offered by SandForce-based drives in these tests. The average performance of SF drives will likely be somewhere in between the two values for each drive you see in the graphs. For an understanding of why this matters, read our original SandForce article.
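To see why the choice of test data matters, here is a minimal Python sketch that compares how well a repetitive 4KB block and a fully random 4KB block compress. It uses zlib purely as a stand-in: SandForce's actual compression/dedupe engine is proprietary, and the block contents and the `compression_ratio` helper are assumptions made for illustration, not what Iometer or the controller really does.

```python
import os
import zlib

BLOCK = 4096  # 4KB, the transfer size used in the random write test


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower means more compressible).

    zlib is only a stand-in here; the controller's real algorithm is not public.
    """
    return len(zlib.compress(data)) / len(data)


# Hypothetical "easy" data: a short pattern repeated to fill one block.
repetitive_block = (b"AnandTech" * 512)[:BLOCK]

# Hypothetical "hard" data: bytes from the OS entropy source.
random_block = os.urandom(BLOCK)

print(f"repetitive: {compression_ratio(repetitive_block):.3f}")
print(f"random:     {compression_ratio(random_block):.3f}")
```

The repetitive block shrinks to a small fraction of its size, while the random block does not shrink at all. A controller that compresses before writing has far less to commit to NAND in the first case, which is the gap between the best-case and worst-case bars in the charts.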

Random read performance is pretty consistent across all of the SF-2281 drives. The Patriot drives lose a bit of performance thanks to their choice in NAND (asynchronous IMFT in the case of the Pyro and Toggle NAND in the case of the Wildfire).

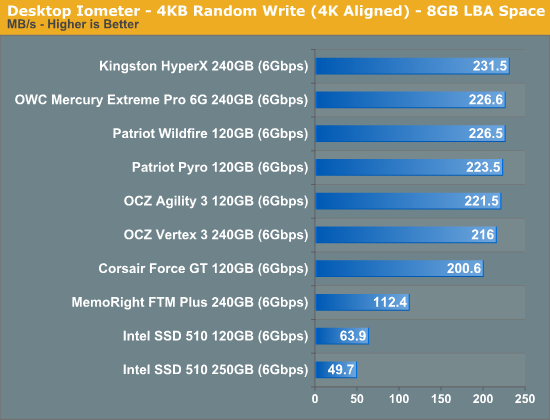

Most random writes are highly compressible and thus all of the SF-2281 drives do very well here. There's no real advantage to synchronous vs. asynchronous NAND since most of the writes never make it to NAND in the first place. The Agility 3 and Vertex 3 both use their original firmware while the newer drives are running the latest firmware updates from SandForce. The result is a slight gain in performance, but all else being equal you won't see a difference in performance between these drives.

The MemoRight FTM Plus is the only exception here. Its firmware caps peak random write performance over an extended period of time. This is a trick you may remember from the SF-1200 days. It's almost entirely gone from the SF-2281 drives we've reviewed. The performance cap here will almost never surface in real world performance. Based on what we've seen, if you can sustain more than 50MB/s in random writes you're golden for desktop workloads. The advantage SandForce drives have is they tend to maintain these performance levels better than other controllers thanks to their real-time compression/dedupe logic.

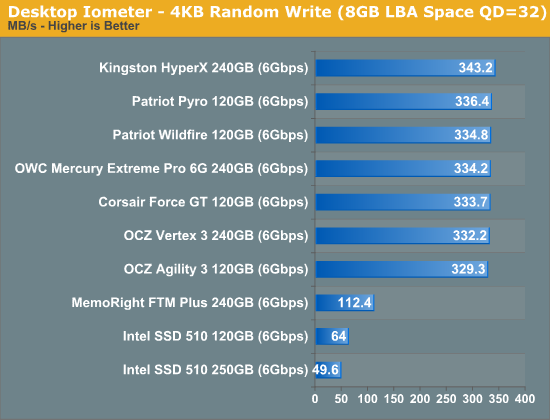

Many of you have asked for random write performance at higher queue depths. What I have below is our 4KB random write test performed at a queue depth of 32 instead of 3. While the vast majority of desktop usage models experience queue depths of 0 - 5, higher depths are possible in heavy I/O (and multi-user) workloads:

All of the SF-2281 drives do better with a heavier load. The MemoRight drive is still capped at around 112MB/s here.
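For a sense of scale, the MB/s figures can be converted into IOPS and, via Little's law, into an approximate mean latency per request. The sketch below is back-of-the-envelope arithmetic only; it assumes 1MB = 1,000,000 bytes (the charts may use a different convention) and that the queue stays full for the whole run. The function names are hypothetical.

```python
def iops_from_throughput(mb_per_s: float,
                         transfer_bytes: int = 4096,
                         bytes_per_mb: int = 1_000_000) -> float:
    """Convert average throughput into I/O operations per second.

    bytes_per_mb is an assumption; use 1_048_576 if the tool reports MiB/s.
    """
    return mb_per_s * bytes_per_mb / transfer_bytes


def mean_latency_ms(iops: float, queue_depth: int) -> float:
    """Little's law: outstanding requests = throughput x latency.

    Assumes the queue is kept full at queue_depth for the entire test.
    """
    return queue_depth / iops * 1000.0


# The ~112MB/s cap at a queue depth of 32 quoted in the text.
cap_iops = iops_from_throughput(112)
print(round(cap_iops))                          # 27344
print(round(mean_latency_ms(cap_iops, 32), 2))  # 1.17
```

Under these assumptions the cap works out to roughly 27,000 4KB writes per second, or a little over a millisecond per request with 32 requests in flight.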

Sequential Read/Write Speed

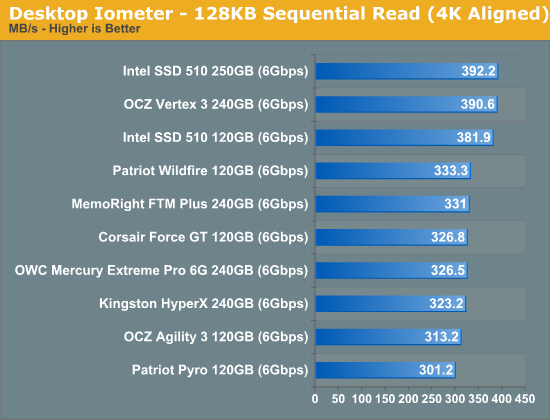

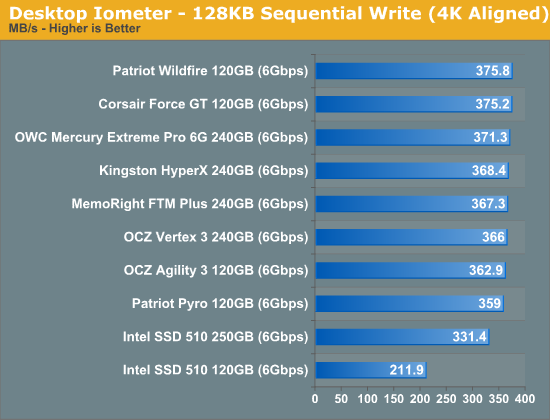

To measure sequential performance I ran a 1 minute long 128KB sequential test over the entire span of the drive at a queue depth of 1. The results reported are in average MB/s over the entire test length.

The older SF-2281 firmware versions did a bit better in some tests than the newer ones, hence the Vertex 3 being at the top here. All of the newer drives perform pretty similarly in our sequential read test.

The same goes for our sequential write test - all of the SF-2281 drives perform very similarly.

90 Comments


Ipatinga - Thursday, August 11, 2011

So, the Corsair Force GT is really going against OCZ Vertex 3? I thought it was against Vertex 3 Max IOPS.

In this case, the Corsair Force 3 is going after Agility 3?

And Corsair Performance 3 is going after Solid 3?

Thanks :)

Would like to hear more about NAND Flash that is Async and Sync and Toggle.

bob102938 - Thursday, August 11, 2011

There are some factors that were not considered on the first page of the article. The number of dies per wafer is important, but you are forgetting the cost of producing a flash memory wafer vs a VLSI wafer. Flash memory is a ~20 layer process that has margins for error which can be worked around. VLSI is a 60+ layer process that has 0 margin for error. Producing flash memory wafers is more than an order of magnitude cheaper than producing the same-size VLSI wafer. Additionally, turnaround time on a flash wafer can be achieved in ~20 days, whereas a VLSI wafer can require 3 months.

Also the internal cost of a 300mm flash memory wafer is more like $1000. A VLSI wafer is around $8000.

philosofool - Thursday, August 11, 2011

I don't want to blame the victims, end users. Obviously, manufacturers have a responsibility to QA.

Still, when you look at the market forces here, it seems obvious that market forces are driving the problem.

Manufacturer makes the COOL drive that gets the best performance marks of any drive out there. One year later, the COOLER drive is released. No one wants a COOL drive anymore. Plus, the margin making COOL drives is so small, you can't drop your price on a COOL drive to make it an attractive "midrange" option. So you have to start developing a new controller to make something downright freezing.

Because there's such an emphasis on performance, controllers and the drives they run become obsolete before a water-tight reliable version of the controller can be made. Of course, they're not really obsolete--there's nothing wrong with the X-25M controller--but they can't compete in a market with drives that show twice the random read performance of an unreliable competitor.

Constant R&D on new controllers and the demand for performance mean that reliability takes a backseat. You can't sell COOL drives as long as someone makes a COOLER drive, even if cooler drives have reliability problems. Think about yourself: would you buy an X-25 M knowing that you could get a Vertex 3 instead?

Bannon - Thursday, August 11, 2011

I built a system on an Asus P8Z68 Deluxe motherboard and used two Intel 510 250GB drives with it. One is the system drive and the other the data drive, with firmwares PWG2 and PWG4 respectively. To date I have not experienced a BSOD BUT my system drive will drop from 6Gbps to 3Gbps for no apparent reason and stay there until I power the system off. My data drive is rock solid at 6Gbps and stays there. I've just started working with Intel so I don't know where that is going to lead. Hopefully it ends up with a new drive with the latest firmware and 6Gbps performance. Given my druthers I'd rather have this problem than the SandForce BSODs but I wanted to point out that everything isn't perfect in Intel-land.

Coup27 - Thursday, August 11, 2011

Anand,

Can we ever expect a 470 review?

nish0323 - Thursday, August 11, 2011

or am I the only one about the fact that the OWC drive is the ONLY one with a 5 year warranty on it!! That's nuts... they actually back up the claim of their SSD drive longevity by giving you such a long warranty. I love SSDs.

OWC Grant - Friday, August 12, 2011

Glad you noticed that warranty term because it's somewhat related to the topic of this article. I've been in direct contact with Anand on this as the tone of the article is all-encompassing and I wanted to shed some light on that from our perspective.

While many SF based SSDs share firmware, not all hardware is the same. Our SSDs have subtle design and/or component differences which is what we feel reduces or eliminates our products' susceptibility to the BSOD issue.

The honest truth is we have not been able to create a BSOD issue here with our SSDs using the same procedures that caused other brands' SSDs to experience BSOD. Nor have we received or read one direct report of such an occurrence using our drives.

And while we cut our teeth so to speak in the Mac industry, PLENTY of PC users have our SSDs in their systems...as well as that we do extensive testing on a variety of motherboards/system configs to ensure long term reliable operation.

More supportive perhaps is the fact that we've had other brand users who experienced BSOD, but after buying our SSD, they reported back that it eliminated any issues they were experiencing.

ckryan - Thursday, August 11, 2011

should be getting more reliable, not less. As profit margins get slimmer and slimmer, shouldn't manufacturers be producing more reliable drives? Also, Intel might be making less money per drive, but surely their enterprise sales require the same levels of validation (required previously).

Conscript - Thursday, August 11, 2011

am I nuts after reading multiple reviews from Anand as well as elsewhere, that I keep thinking I'm best off with a 256GB Crucial M4? I've had my 160GB X-25 for a while now, and think I'm going to hand it down to the wifey.

Bannon - Thursday, August 11, 2011

I had a 256GB M4 which worked fine except it would BSOD if I let my system sleep.