The AMD A8-3850 Review: Llano on the Desktop

by Anand Lal Shimpi on June 30, 2011 3:11 AM EST

Performance in Older Games

In response to our preview, a number of you asked for performance in older titles. We dusted off a couple of our benchmarks from a few years ago to see how Intel's HD 3000 and AMD's Radeon HD 6550D handled these golden oldies.

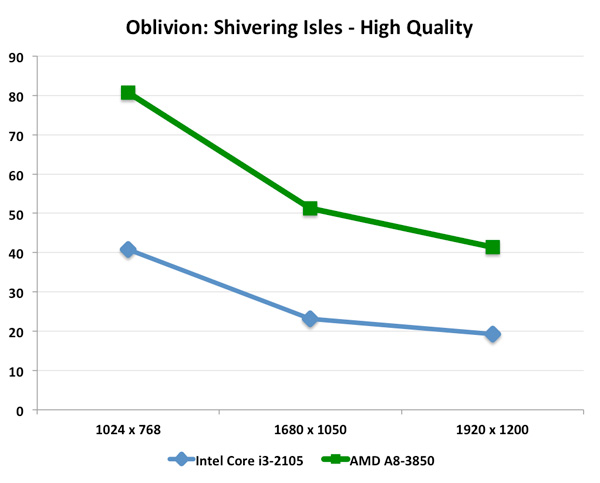

First up is a personal favorite: Oblivion. Our test is unchanged from when we last ran it; the only difference is that we can now actually get playable frame rates from integrated graphics. We set the game to High Quality defaults, although the Intel platform had to disable HDR in order to get the game to render properly:

The Core i3-2105 with its HD Graphics 3000 can actually deliver a playable experience at 1024 x 768 with just over 40 fps. Move to higher resolutions, however, and you either have to drop quality settings or sacrifice playability. The A8-3850 gives you no such tradeoff. Even at 1920 x 1200 the A8 manages to deliver over 40 fps using Oblivion's High Quality defaults.

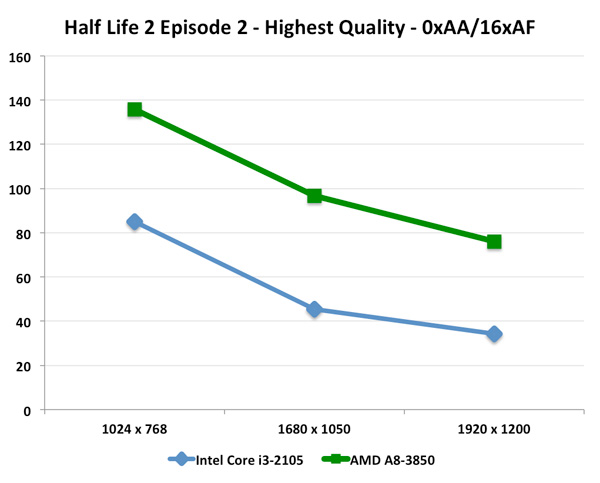

We saw similar results under Half-Life 2: Episode Two:

Here the Core i3 maintains playability all the way up to 1920 x 1200, but you obviously get much higher frame rates from the Llano APU.

99 Comments


silverblue - Thursday, June 30, 2011

So, in other words, you completely skipped over what I posted, especially the comparisons between similarly clocked parts? In that case, you may not recall my saying that comparing to Thuban was unfairly skewed in AMD's favour and that for productivity you'd have to be mad to fork out for a high-end Core 2 Quad that is soundly beaten by Thuban under those very circumstances.

I'm not going to spend any more time on this subject for fear it may cause my brain to dissolve.

silverblue - Thursday, June 30, 2011

We really don't know how Bulldozer will perform. Superior in some areas, inferior in others, perhaps. Its roots are in the server domain so it probably won't be the be-all-and-end-all of desktop performance. Should handily thrash Phenom II though.

That is, if they stop pushing it backwards... if they do it any more we may as well wait for Enhanced Bulldozer. :/ I can only truly see Bulldozer being a 9-12 month stopgap before that appears, and as we know, Trinity is going to use the Enhanced cores instead of first generation Bulldozer cores, so it remains to be seen how long a shelf life the product will have.

duploxxx - Thursday, June 30, 2011

Ivy Bridge before Bulldozer? Oh man, you really have no idea about roadmaps...

Server and desktop will be there a long time before Ivy; if they don't hurry with Ivy, even Trinity will be ready to be launched.

j_iggga - Thursday, June 30, 2011

Single and double threaded performance is most important for games.

Bulldozer has no chance in that regard. The only hope Bulldozer has is that it outperforms a quad core Intel CPU with its 8 cores in a fully multithreaded application.

L. - Thursday, June 30, 2011

GPUs are most important for games, that and a CPU that can feed your GPUs ;)

More and more stuff is using 4+ threads; I'd be surprised if the next benchmark from Crytek isn't vastly multithreaded on the CPU side.

We'll see anyway, but the design choices AMD made for Bulldozer are definitely good, as were their multicore designs that Intel quickly copied - for the best.

frozentundra123456 - Thursday, June 30, 2011

Are you kidding me??? If Bulldozer is so great why do they keep having delay after delay and are showing no performance figures??

HangFire - Tuesday, July 5, 2011

Given the number of Nocona CPUs Intel sold (as evidenced by the huge number showing up on the secondary market right now), Intel server shipments won't die even if they have an inferior product (again).

The faithful will buy on.

L., you seem to be betting on a jump for Bulldozer that will exceed that of C2D over Netburst. I'm not sure that's even possible. C2D only lacked a built-in memory controller, and that has been fixed. I'll be happy if BD even matches Nehalem in core instruction processing efficiency.

But I agree the more progress AMD makes, the better the market is for all of us, no matter which we buy.

frozentundra123456 - Thursday, June 30, 2011

Totally agree with your post. Just a weak GPU tacked on to an ancient CPU architecture that could easily be beaten by a $50.00 discrete GPU in the desktop.

I can see a place for this chip in a laptop where power savings is important and it is not possible to add a GPU, but for the desktop, I really don't see a place for it.

And I agree that Bulldozer is late to the game and may not perform like the AMD fanboys claim. AMD talks a good game, but so far they have not been able to back it up in the CPU area.

jgarcows - Thursday, June 30, 2011

Can you use OpenCL with the Llano GPU? If so, how does it perform for bitcoin mining?

JarredWalton - Thursday, June 30, 2011

Yes, Llano fully supports OpenCL -- it's basically a 5570 with slightly lower core clocks and less memory bandwidth (because it's shared). On a laptop, Llano manages around 40Mhash/s, roughly half the performance of a desktop 5570. The desktop chip should be about 40%-50% faster, depending on how much the memory bandwidth comes into play. But 60Mhash/s is nothing compared to a good GPU. Power would be around 60-70W for the entire system for something like 1Mhash/W. Stick a 5870 GPU into a computer and you're looking at around 400Mhash/s and a power draw of roughly 250W -- or 1.6Mhash/W.

In short: for bitcoin mining you're far better off with a good dGPU. But hey, Llano's IGP is probably twice as fast as CPU mining with a quad-core Sandy Bridge.
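
The efficiency figures in the comment above come down to simple division, and a minimal Python sketch can reproduce them. It uses only the approximate numbers quoted in that comment, which are rough estimates and not measurements. The function and constant names are illustrative assumptions, not part of any mining tool.

```python
def mhash_per_watt(hash_rate_mhash_s: float, power_w: float) -> float:
    """Return mining efficiency in Mhash/s per watt of system power."""
    if power_w <= 0:
        raise ValueError("power_w must be positive")
    return hash_rate_mhash_s / power_w


# Approximate figures quoted in the comment (estimates, not benchmarks).
LAPTOP_LLANO_MHASH = 40.0             # laptop Llano hash rate
DESKTOP_SPEEDUP_RANGE = (1.4, 1.5)    # desktop chip "about 40%-50% faster"
DESKTOP_LLANO_MHASH = 60.0            # rounded desktop figure used in the comment
DESKTOP_SYSTEM_POWER_W = (60.0, 70.0) # whole-system power range
HD5870_MHASH = 400.0
HD5870_SYSTEM_POWER_W = 250.0

# Desktop Llano hash rate implied by the laptop number and the speedup range.
desktop_llano_mhash = tuple(LAPTOP_LLANO_MHASH * s for s in DESKTOP_SPEEDUP_RANGE)

# Efficiency range for desktop Llano: (at highest power, at lowest power).
llano_efficiency = (
    mhash_per_watt(DESKTOP_LLANO_MHASH, DESKTOP_SYSTEM_POWER_W[1]),
    mhash_per_watt(DESKTOP_LLANO_MHASH, DESKTOP_SYSTEM_POWER_W[0]),
)

# Efficiency for a system with a Radeon HD 5870 installed.
hd5870_efficiency = mhash_per_watt(HD5870_MHASH, HD5870_SYSTEM_POWER_W)

if __name__ == "__main__":
    low, high = desktop_llano_mhash
    print(f"Desktop Llano estimate: {low:.0f}-{high:.0f} Mhash/s")
    print(f"Desktop Llano: {llano_efficiency[0]:.2f}-{llano_efficiency[1]:.2f} Mhash/W")
    print(f"HD 5870 system: {hd5870_efficiency:.2f} Mhash/W")
```

With these inputs the desktop Llano estimate lands at roughly 56-60 Mhash/s and 0.86-1.0 Mhash/W, against 1.6 Mhash/W for the 5870 system, which matches the rounded numbers in the comment.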