OCZ RevoDrive 3 X2 (480GB) Preview: 200K IOPS & 1.5GB/s for $1699?

by Anand Lal Shimpi on June 28, 2011 12:00 PM EST

Posted in: Storage, SSDs, SandForce, OCZ, RevoDrive, SF-2000, RevoDrive 3, RevoDrive 3 X2

Random Read/Write Speed

The four corners of SSD performance are as follows: random read, random write, sequential read and sequential write speed. Random accesses are generally small in size, while sequential accesses tend to be larger, hence the four Iometer tests we use in all of our reviews.

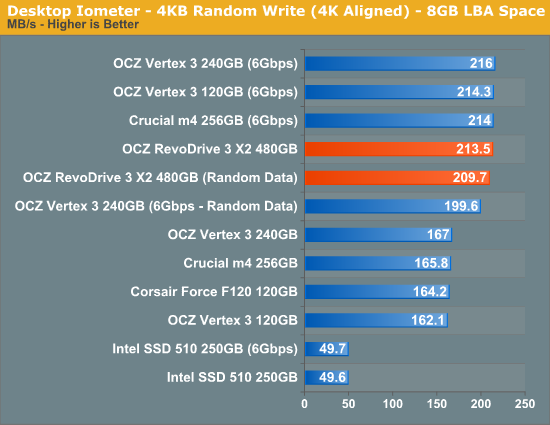

Our first test writes 4KB in a completely random pattern over an 8GB space of the drive to simulate the sort of random access that you'd see on an OS drive (even this is more stressful than a normal desktop user would see). I perform three concurrent IOs and run the test for 3 minutes. The results reported are in average MB/s over the entire time. We use both standard pseudo-randomly generated data for each write as well as fully random data to show you both the maximum and minimum performance offered by SandForce-based drives in these tests. The average performance of SF drives will likely be somewhere in between the two values for each drive you see in the graphs. For an understanding of why this matters, read our original SandForce article.
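To make the test description above concrete, here is a minimal Python sketch of the two ingredients: 4KB-aligned offsets picked at random within an 8GB region, and two payload types with very different compressibility. This is not Iometer's implementation. The names `random_offsets` and `compressed_ratio` are hypothetical, the repeating buffer is only a stand-in for compressible data, 8GB is assumed to mean 8 x 1024^3 bytes, and zlib is used purely as a proxy for how compressible a buffer is, not as a model of what a SandForce controller does.

```python
import os
import random
import zlib

BLOCK = 4 * 1024        # 4KB transfer size, from the test description
SPAN = 8 * 1024**3      # 8GB test region (assumed to be binary gigabytes)

def random_offsets(count, seed=0):
    """Return `count` block-aligned byte offsets chosen uniformly in the span."""
    rng = random.Random(seed)
    return [rng.randrange(SPAN // BLOCK) * BLOCK for _ in range(count)]

def compressed_ratio(payload):
    """Compressed size divided by original size (lower means more compressible)."""
    return len(zlib.compress(payload)) / len(payload)

# Hypothetical stand-ins for the two kinds of write data.
repeating = bytes(range(256)) * (BLOCK // 256)  # repeats every 256 bytes
fully_random = os.urandom(BLOCK)                # effectively incompressible

offsets = random_offsets(5)
print("sample offsets:", offsets)
print("repeating data ratio:", round(compressed_ratio(repeating), 3))
print("random data ratio:", round(compressed_ratio(fully_random), 3))
```

The repeating buffer shrinks to a small fraction of its size while the random buffer does not shrink at all, which is the gap between the best-case and worst-case bars in the graphs.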

Low queue depth operation isn't going to show any advantage on the RevoDrive 3 X2. This is to be expected. I include these results to point out that for the majority of desktop users, you won't see any benefit from a 4-drive RAID-0 or the RevoDrive 3 X2. I already talked about how most modern SSDs deliver similar real world performance in our last SSD article. The RevoDrive 3 is no exception.

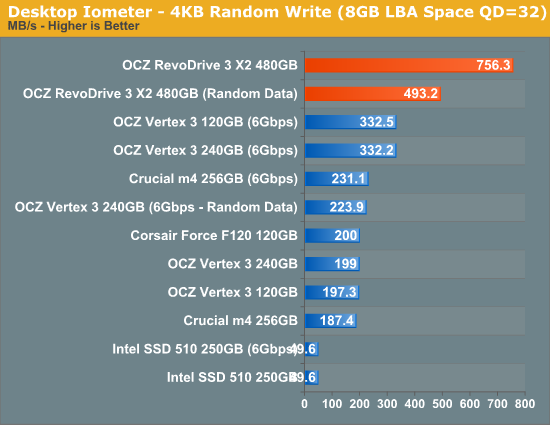

What happens during periods of intense IO activity however? The RevoDrive 3 X2 excels. With incompressible data the RevoDrive 3 X2 is over 2x faster than the 240GB Vertex 3 and with compressible data we're almost at 800MB/s for a single PCIe card.

Note that OCZ's specs for the RevoDrive 3 X2 promise up to 200,000 IOPS, yet we're only seeing around 185K IOPS in our QD32 test. What gives? To hit those sorts of numbers you need to run in a multithreaded, ultra-high queue depth configuration (two threads + QD64 in each case). If you run in this configuration but hit 100% of the LBA space, a reasonable workload for a high-traffic server, you'll get numbers similar to ours above (766MB/s vs. 756MB/s). If you limit the workload to an 8GB LBA space, however, you'll hit the 200K that OCZ advertises:

OCZ RevoDrive 3 X2 (480GB) 4KB Random Write Performance (IOPS/MBps)

| | QD=3 | QD=32 | QD=64 |
|---|---|---|---|
| IOPS | 52131 IOPS | 184649 IOPS | 202661 IOPS |
| MB/s | 213.5 MB/s | 756.3 MB/s | 830.1 MB/s |
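The IOPS and MB/s rows in the table are two views of the same measurement. As a quick sanity check, the sketch below converts one into the other, assuming 4KB means 4096 bytes and MB means 10^6 bytes (an assumption, though it reproduces the table's figures). The helper names are hypothetical. The latency function applies Little's law (outstanding IOs = throughput x mean latency) and assumes the queue stays full for the whole run, so treat its output as a rough estimate rather than a measured value.

```python
BLOCK_BYTES = 4 * 1024  # 4KB transfer size, assumed to be 4096 bytes

def iops_to_mbps(iops, block_bytes=BLOCK_BYTES):
    """Convert IOPS to MB/s, with 1 MB taken as 10^6 bytes."""
    return iops * block_bytes / 1_000_000

def mean_latency_us(queue_depth, iops):
    """Little's law estimate of mean IO latency in microseconds.

    Assumes `queue_depth` IOs are outstanding at all times.
    """
    return queue_depth / iops * 1_000_000

# IOPS figures taken from the table above, keyed by its QD labels.
results = {3: 52131, 32: 184649, 64: 202661}

for qd, iops in results.items():
    print(f"QD={qd}: {iops} IOPS = {iops_to_mbps(iops):.1f} MB/s")

# Latency is only estimated where a single queue depth is unambiguous.
for qd in (3, 32):
    print(f"QD={qd}: ~{mean_latency_us(qd, results[qd]):.0f} us per IO")
```

The latency estimate is skipped for the last column because that result comes from the two-thread configuration, where the total number of outstanding IOs is not the same as the column label.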

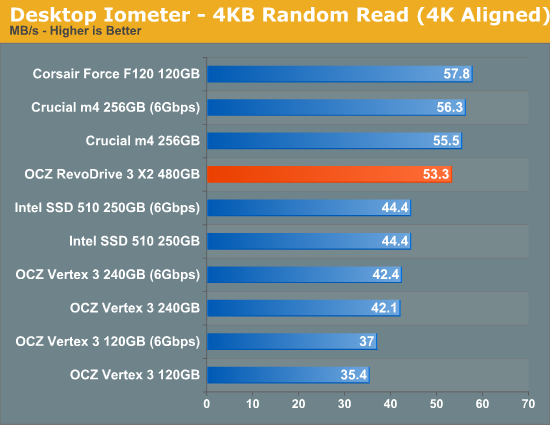

Low queue depth random read performance is nothing to be impressed by.

Sequential Read/Write Speed

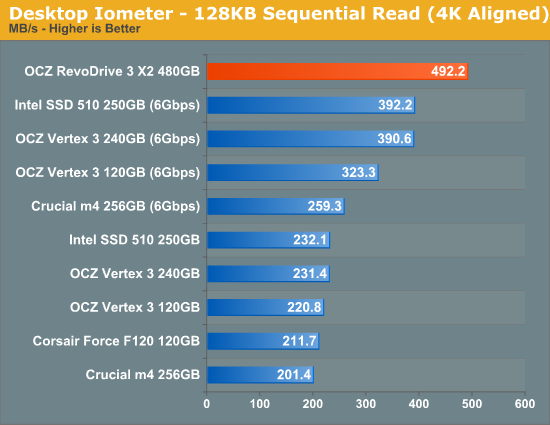

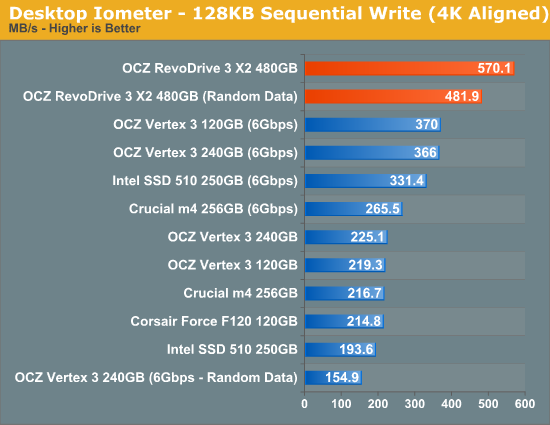

To measure sequential performance I ran a 1 minute long 128KB sequential test over the entire span of the drive at a queue depth of 1. The results reported are in average MB/s over the entire test length. These results are going to be the best indicator of large file copy performance.

We're still going through the unimpressive tests here - we see some benefit for larger transfer sizes, but the real advantages come when you start loading up the RevoDrive 3...

38 Comments


GullLars - Thursday, June 30, 2011

This card will get decimated by even an 80GB ioDrive, not to mention the 320GB ioDrive Duo, in just about any real world scenario. They are designed from completely different standpoints. While both are highly parallel, the ioDrive is highly optimized for latency and can push close to 20,000 4KB random read IOPS (~75-80MB/s) at a QD of 1, while RevoDrive 3 X2 reaches 13K at QD 3, and around 4-5K at QD 1.

The ioDrive also handles mixed IO well, and scales really fast at low block sizes and queue depths, so you would need incredibly heavy parallel workloads to keep it at a notable QD (>10).

What bothers me the most with the Revodrive 3 x2 is that it doesn't implement an onboard cache at all. With 64-512MB you could use it for read-ahead and hit those GB/s+ seq bandwidth numbers at a QD of 1 for fairly small block sizes.

With a (super?)capacitor you could also safely use say 8-16MB of that cache for write buffering and saturate the array.

GullLars - Thursday, June 30, 2011

Correction, RevoDrive 3 x2 benched 7K 4KB random read IOPS @ QD 1 in AS SSD.

Jharne - Tuesday, June 28, 2011

The INF mentions VEN_11AB & VEN_1B4B which are both Marvell. So I'm guessing they built the controller.

DigitalFreak - Tuesday, June 28, 2011

LOL. All the trouble they go through to hide the controller vendor and all you need to do is look at the vendor ID

VStrom - Tuesday, June 28, 2011

You can boot with this on x64 with an unsigned driver...you just need to load Windows with the signed driver validation disabled (F8 before Windows loads).

DanNeely - Tuesday, June 28, 2011

If you need to do this before every boot it's a non-option in the server world. In normal operation no keyboard/mouse/monitor will be attached, all interaction will be via remote desktop type services after it's booted.

VStrom - Tuesday, June 28, 2011

True, but I was referring to Anand's comment specifically about Win 7 saying "Windows 7 x64 won't boot off of the RevoDrive 3". When this ships, the driver will be ready and a non-issue for servers.

bacomatic - Tuesday, September 13, 2011

Apparently this has been fixed, I am booting Win 7 x64 from this drive with the current drivers.

quiksilvr - Tuesday, June 28, 2011

I would have figured with this strong staple in the 2.5" SSD market with TRIM enabled devices this would not be hard to do.

mikeblas - Tuesday, June 28, 2011

Comparing this device against consumer-level SSDs seems like a waste of time. Why not compare against fusionio?

I'd also like to see a benchmark that runs for a sustained period. The problem with SSD is the ability to keep up with write loads. If you run the 4K random IO benchmark for 48 hours, does the IOPS rate spike or trend downwards, or does it stay flat? The write ratio of your benchmark is around 33%, which I don't think represents OLTP applications too well.

The review doesn't comment on the stability of the drivers, their memory usage on the host, or the management and monitoring tools for the drive. Do I get a tool that measures remaps, does SNMP, or email alerting?