The AMD Llano Notebook Review: Competing in the Mobile Market

by Jarred Walton & Anand Lal Shimpi on June 14, 2011 12:01 AM EST

High Detail Gaming and Asymmetrical CrossFire Misfire

Update, 8/10/2011: Just to let you know, AMD managed to get me a new BIOS to address some of the rendering issues I experienced with CrossFire. As you'll read below, I had problems in several titles, and I still take exception to the "DX10/11 only" approach. I can name dozens of good DX9-only games that were released in the past year. Anyway, the updated BIOS has at least addressed the rendering errors I noticed, so retail Asymmetrical CrossFire laptops should do better. With that disclaimer out of the way, here's my initial experience from two months back.

So far, the story for Llano and gaming has been quite good. The notebook we received comes with the 6620G fGPU along with a 6630M dGPU, though, and AMD has enabled Asymmetrical CrossFire...sort of. The results for ACF in 3DMarks were interesting if only academic, so now we're going to look at how Llano performs with ACF enabled and running at our High detail settings (using an external LCD).

Just a warning before we get to the charts: this is preproduction hardware, and AMD informed us (post-review) that they stopped worrying about fixing BIOS issues on this particular laptop because it isn't going to see production. AMD sent us an updated driver late last week that was supposed to address some of the CrossFire issues, but in our experience it didn’t help and actually hurt in a few titles. Given that the heart of the problem is in the current BIOS, that might also explain why Turbo Core doesn't seem to be working as well as we would expect.

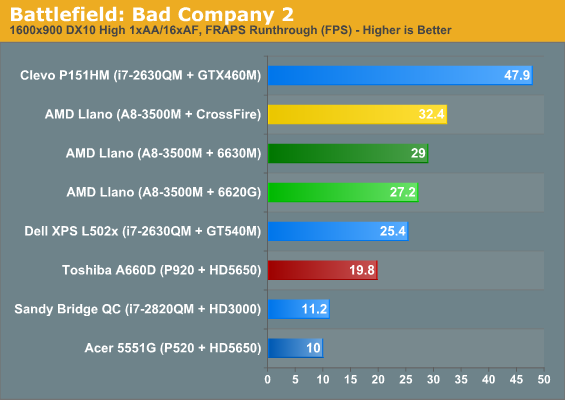

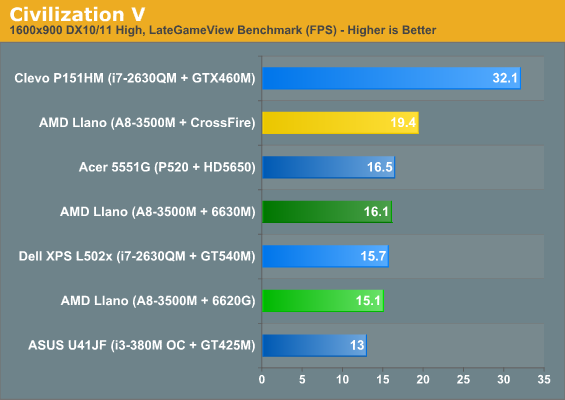

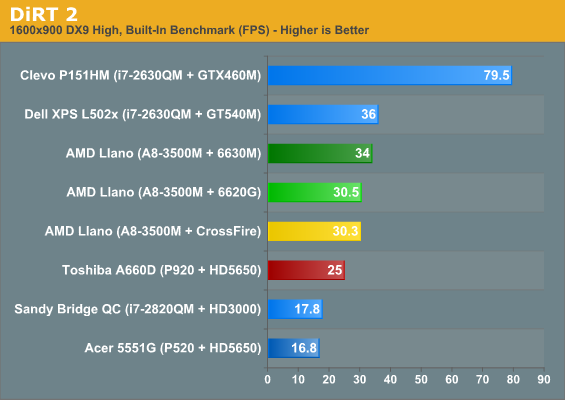

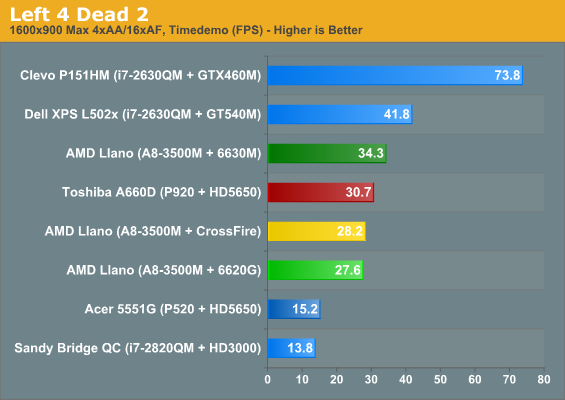

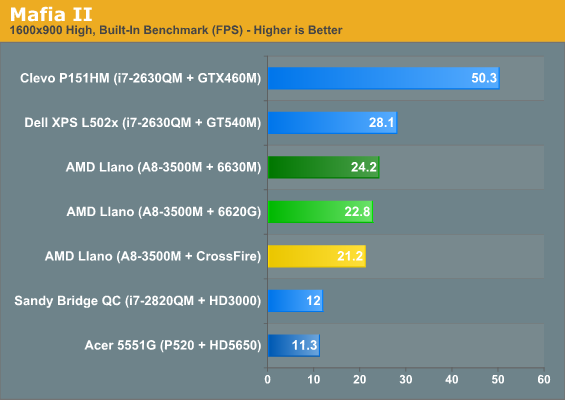

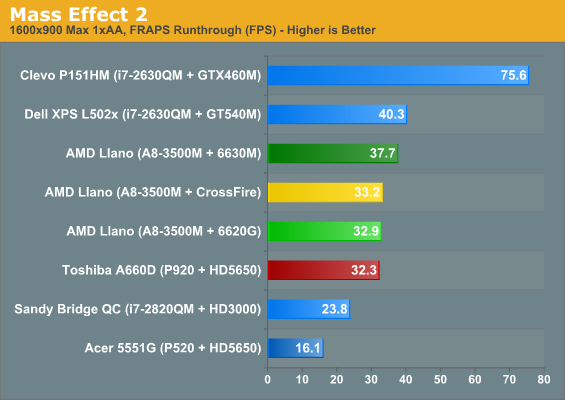

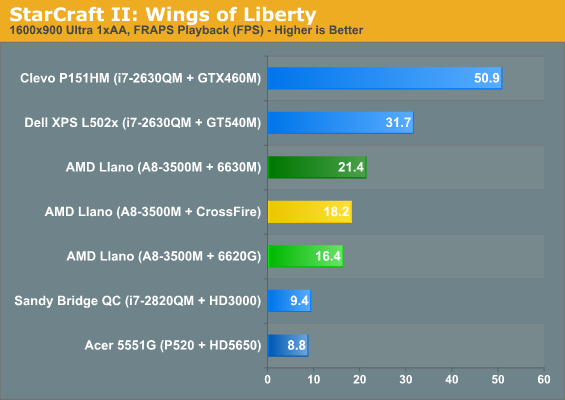

AMD also notes that the current ACF implementation only works on DX10/11 games, and at present that's their plan going forwards as the majority of software vendors state they will be moving to DX10/11. While the future might be a DX10/11 world, the fact is that many recent titles are still DX9 only. Even at our "High" settings, five of our ten titles are tested in DX9 mode (DiRT 2, L4D2, Mafia II, Mass Effect 2, and StarCraft II—lots of twos in there, I know!), so they shouldn't show any improvement...and they don't. Of those five titles, four don't have any support for DX10/11 (DiRT 2 being the exception), and even very recent, high-profile games are still shipping in DX9 form (e.g. Crysis 2, though a DX11 patch is still in the works). Not showing an improvement is one thing, but as we'll see in a moment, enabling CrossFire mode actually reduces performance by 10-15% relative to the dGPU. That's the bad news. The good news is that the other half of the games show moderate performance increases over the dGPU.

If that doesn't make the situation patently clear, CrossFire on our test unit is largely not in what we consider a working state. With that out of the way, here are the results we did manage to cobble together:

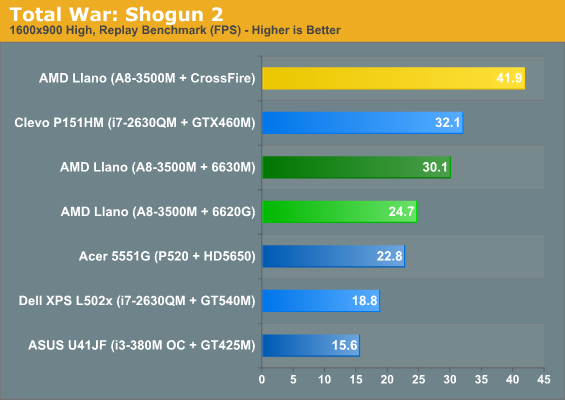

Given this is preproduction hardware that won't see a store shelf, the above results are almost meaningless. If ACF can provide at least a 30% increase on average, like what we see in TWS2, it could be useful. If it can't do at least 30%, it seems like switchable graphics with an HD 6730M would be less problematic and provide better performance. The only takeaway we have right now is that ACF is largely not working on this particular unit. Shipping hardware and drivers should be better (they could hardly be worse), but let's just do a quick discussion of the results.

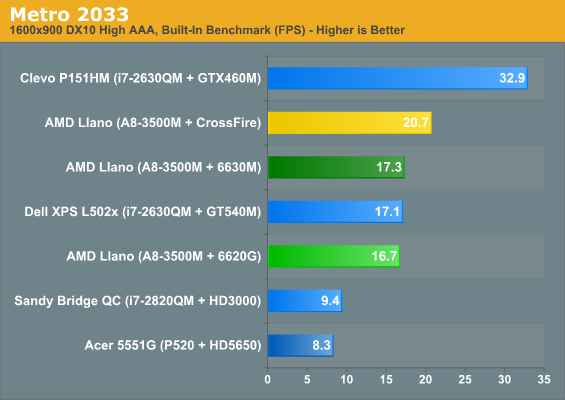

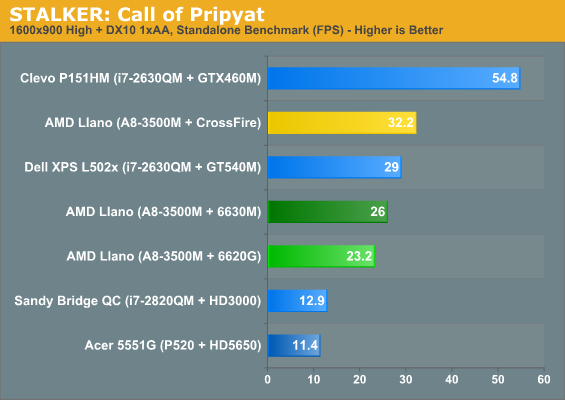

If we just look at games with DX10/11 enabled, the story isn't too bad. Not accounting for the rendering issues noted below, ACF is able to boost performance by an average of 24% over the dGPU at our High settings. We didn’t include the Low and Medium results for ACF on the previous page for what should be obvious reasons, but if the results at our High settings are less than stellar, Low and Medium settings are even less impressive. Trimming our list of titles to three games (we tested TWS2 and STALKER in DX9 mode at our Low and Medium settings), ACF manages to average a 1% performance increase over the dGPU at Low and a 14% increase at Medium, but Civ5 still had to contend with rendering errors and Metro 2033 showed reduced performance.
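For readers who want to run the same sort of comparison on their own numbers, here is a minimal sketch of the arithmetic behind an "average increase over the dGPU" figure and the 30% bar mentioned earlier. It assumes the average is a plain arithmetic mean of per-game percentage gains, which may not match exactly how the chart averages were produced. The function names and the frame rates in `sample` are hypothetical placeholders, not measurements from this review.

```python
def percent_gain(acf_fps, dgpu_fps):
    """Percentage change in frame rate with ACF enabled, relative to the dGPU alone."""
    return (acf_fps / dgpu_fps - 1.0) * 100.0


def average_gain(results):
    """Arithmetic mean of the per-game percentage gains (an assumed averaging method)."""
    gains = [percent_gain(acf, dgpu) for acf, dgpu in results.values()]
    return sum(gains) / len(gains)


def meets_threshold(results, threshold=30.0):
    """True if the average gain reaches the threshold (30% is the bar suggested in the text)."""
    return average_gain(results) >= threshold


# Placeholder frame rates as (ACF fps, dGPU fps); NOT data from this review.
sample = {
    "Game A": (26.0, 20.0),  # ACF faster than the dGPU
    "Game B": (33.0, 30.0),  # small gain
    "Game C": (18.0, 20.0),  # ACF slower than the dGPU
}

if __name__ == "__main__":
    print(f"Average gain: {average_gain(sample):.1f}%")
    print(f"Meets 30% bar: {meets_threshold(sample)}")
```

Note that a single title where ACF reduces performance drags the mean down quickly, which is why the DX9 regressions matter as much as the DX10/11 gains.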

In terms of rendering quality, ACF is very buggy on the test system; the default BIOS settings initially resulted in corrupted output for most games and 3D apps, but even with the correct settings we still encountered plenty of rendering errors. Civilization V only had one GPU rendering everything properly while units were missing on the other GPU, so you’d get a flicker every other frame with units appearing/disappearing. At higher detail settings, the corruption was even more severe. STALKER: Call of Pripyat and Total War: Shogun 2 also had rendering errors/flickering at higher quality settings. Since we didn't enable DX10/11 until our High defaults, right when ACF is supposed to start helping is where we encountered rendering issues.

Just to be clear: none of this means that Asymmetrical CrossFire is a bad idea; it just needs a lot more work on the drivers and BIOS. If/when we get a retail notebook that includes Asymmetrical CrossFire support, we’ll be sure to revisit the topic. Why ACF isn’t supported in DX9 is still a looming question, and AMD’s drivers need a much better interface for managing switchable graphics profiles. A list of all supported games with a central location to change all the settings would be a huge step up from the current UI, and users need the ability to enable/disable CrossFire support on a per-game basis if AMD wants anyone to actually use ACF. We also hope AMD rethinks their “only for DX10/DX11 modes” stance; CrossFire has worked with numerous DX9 games in the past, and what we’d like to see is ACF with the same list of supported games as regular CrossFire. If nothing else, having ACF enabled shouldn't reduce performance in DX9 titles.

In summary: we don't know if ACF will really help that much. We tested Asymmetrical CrossFire on what is, at best, beta hardware and drivers, and it didn't work very well. We want it to work, and the potential is certainly there, but we'll need to wait for a better test platform. To be continued....

177 Comments


JarredWalton - Tuesday, June 14, 2011 - link

Totally agree with the pricing. The highest performance A8 laptops are going to need to be $700 with fGPU only, and maybe $800 with dGPU, because that's where dual-core i5 + Optimus laptops are currently sitting.

Of course, I'd still pay more for good build quality and a nice LCD and keyboard.

Oh, and the people saying CPU is the be-all, end-all... well, even though I have a couple Core i7 Bloomfield systems in my house (and many Core i5/i7 laptops), my primary work machine is running... Core 2 QX6700 (@3.2GHz) with an HD 5670 GPU and 4GB RAM. The area I want to upgrade the most is storage (currently using RAID0 Raptor 150GB), but I have no desire to reformat and start transferring apps to another PC, so I continue to plug along on the Raptors. This CPU is now over four years old, and yet the only thing I really don't like is the HDD thrashing and slow POST times.

ionave - Thursday, June 16, 2011 - link

None of those GPU's match the power of the 6620, which you can find in even the A6 series, so your point is invalid.

Dribble - Wednesday, June 15, 2011 - link

Actually you can normally tell quite easily which laptop has the slower cpu. It's the one with the fan whining away. With laptops, having a more powerful processor that isn't having to work so hard is important just to keep the thing quiet.

As for cpu power - well windows and its software just isn't that efficient. Even a fairly complex word 2010 doc (few pictures/charts/etc) can start to feel slow on a 2.5Ghz C2D (I should know, my laptop has a 2.4Ghz C2D). The flash games my kids seem to be forever finding are also cpu only and will run it flat out, and the game won't seem as smooth as it would on a faster machine.

Sure you can get by with a slower machine, but it doesn't make for such a pleasant experience.

It has been the case since PC's arrived that over time software needs more and more power. e.g. I could run word 6 on a 486, I now really need a dual core 2Ghz machine to even run word 2010. I don't see that changing hence the faster your cpu the longer your pc will remain usable.

lukarak - Wednesday, June 15, 2011 - link

I've been using a 2007 tech MacBook white up until a few months ago with a 2.0 GHz C2D. Over time i upgraded it to include 6 GB of memory, a 64 GB SSD + 500 GB HDD, and then i transitioned to a 2011 MBP 13 with a SNB CPU and 4 GB of memory. Aside from a better screen, once i put in the SSD, i couldn't see the difference in speed. I usually use a lot of VM, use Eclipse and XCode, and most of the time watch 720p and the more than 3 years newer CPU isn't all that revolutionary. Sure, it may not use 30ish % of the CPU to play movies, but only 20ish, but until that's 50ish% when the fan gets louder it doesn't really matter for me.

ionave - Thursday, June 16, 2011 - link

The CPU looks slow relative to the i5/i7, but it's really not that slow. Seriously. Compare it to an atom and see that it's not that bad.

ionave - Thursday, June 16, 2011 - link

The CPU isn't even bad. I don't know what you guys are all on but A8 cores are improved phenom II x4 cores... I would say its about the same performance as the i5 series. All the benchmarks online are measured on the WORST A8 chip, which has the worst CPU performance. All of the reviews are on A8-3500M. Just wait until the A8-3850 gets benchmarked.

All I'm saying is that its not fair to compare the worst A8 to the best i5 or best i7, plain and simple.

sundancerx - Tuesday, June 14, 2011 - link

for most of the charts, yellow bar is assigned to INTEL asus k53e(i5-2520m+hd3000), but on asymmetrical crossfire, this is assigned to AMD llano (A8-3500M+crossfire). kind of confusing if you don't pay attention or am i the one confused?

JarredWalton - Tuesday, June 14, 2011 - link

Dark yellow = K53E, bright yellow = CrossFire. If you have a different color you think would work, I'll be happy to change it. Purple? Brown? Orange?

adrien - Tuesday, June 14, 2011 - link

I agree Brazos looks less interesting now but it still has one huge advantage: price. If Llano notebooks are going to sell for $600 (or $500), Brazos are 40% less expensive.

JarredWalton - Tuesday, June 14, 2011 - link

Brazos E-350 laptops (and the E-350 is already 60% faster than the C-50) start at around $425. They come with 2GB RAM and a 250GB HDD. AMD is saying $500 as the target price for A4, $600 for A6, and $700 for A8, but I suspect we'll see lower than that by at least $50. So if your choice is Brazos E-350 for $425 or Llano A4 for $450, and the Llano packs 4GB RAM and a 500GB HDD, there's no competition--though size will of course be another factor. I figure Llano will bottom out at 13.3-inch screens where Brazos is in 11.6" and 12.1". Personally, I'd never buy a 10" netbook; I just can't use them comfortably. I'm happiest with 13.3" or 14" laptops.