The AMD Llano Notebook Review: Competing in the Mobile Market

by Jarred Walton & Anand Lal Shimpi on June 14, 2011 12:01 AM EST

What Took So Long?

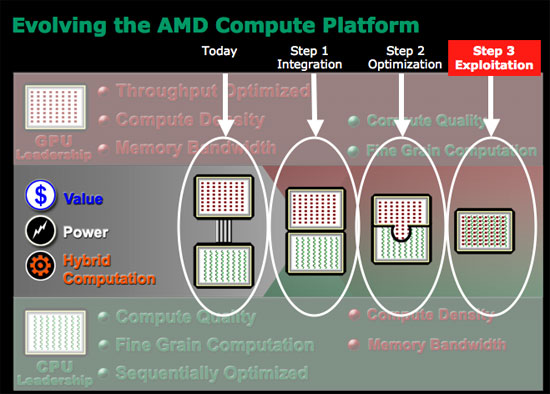

AMD announced the acquisition of ATI in 2006. By 2007 AMD had a plan for CPU/GPU integration, and it looked like this. The red blocks in the diagram below were GPUs; the green blocks were CPUs. Stage 1 was supposed to be "dumb" integration of the two (putting a CPU and GPU on the same die). The original plan called for AMD to release the first Fusion APU sometime in 2008 or 2009. Of course that didn't happen.

Brazos, AMD's very first Fusion platform, came out in Q4 of last year. At best AMD was two years behind schedule, at worst three. So what happened?

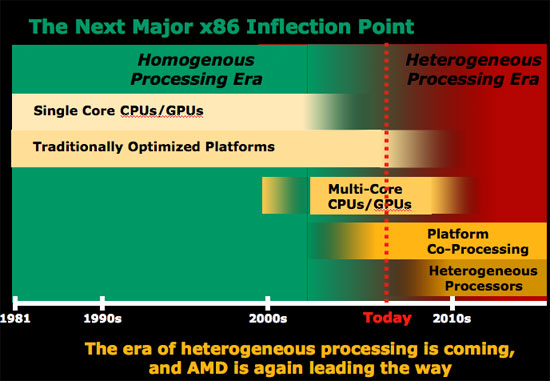

AMD and ATI both knew that designing CPUs and designing GPUs were incredibly different things. CPUs, at least for AMD back then, were built on a five-year architecture cadence. Designers used tons of custom logic and hand layout in order to optimize for clock speed. In a general-purpose microprocessor, instruction latency is everything, so lowering latency wherever possible was the top priority.

GPUs, on the other hand, come from a very different world. Drastically new architectures ship every two years, with major introductions made yearly. By comparison, very little custom logic is employed in GPU design; the architectures are highly synthesizable. Clock speed is important, but it's not the be-all and end-all. GPUs get their performance from being massively parallel, and you can always hide latency with a wide enough machine (and a parallel workload to take advantage of it).

The manufacturing strategy is also very different. Remember that at the time of the ATI acquisition, only ATI was a fabless semiconductor company; AMD still owned its own fabs. ATI was used to building chips at TSMC, while AMD was fabbing everything in Dresden at what would eventually become GlobalFoundries. While the folks at GlobalFoundries have done their best to make their libraries portable for existing TSMC customers, it's not as simple as showing up with a chip design and having it work on the first go.

As much sense as AMD made when it talked about the acquisition, the two companies that came together in 2006 couldn't have been more different. The past five years have really been spent trying to make the two work together, both as organizations and as architectures.

The result really holds a lot of potential and hope for the new, unified AMD. The CPU folks learn from the GPU folks and vice versa. Let's start with APU refresh cycles. AMD CPU architectures were updated once every four or five years (K7 1999, K8 2003, K10 2007) while ATI GPUs received substantial updates yearly. The GPU folks won this battle as all AMD APUs are now built on a yearly cadence.

Chip design is also now more GPU inspired. With a yearly design cadence there's a greater focus on building easily synthesizable chips. Time to design and manufacture goes down, but so do maximum clock speeds. Given how important clock speed can be to the x86 side of the business, AMD is going to be taking more of a hybrid approach where some elements of APU designs are built the old GPU way while others use custom logic and more CPU-like layout flows.

The past few years have been very difficult for AMD but we're at the beginning of what may be a brand new company. Without the burden of expensive fabs and with the combined knowledge of two great chip companies, the new AMD has a chance but it also has a very long road ahead. Brazos was the first hint of success along that road and today we have the second. Her name is Llano.

177 Comments


phantom505 - Tuesday, June 14, 2011

I went with a K325 in a Toshiba with a Radeon IGP. Nobody I have lent it out to has ever complained about it being slow or incapable of doing what they wanted/needed to. I get about 5 hours of battery life consistently. I don't do too much that is CPU-intensive, but I hear people moan and groan about both the E-350 and Atom when they try to open 50MB+ ppt files. I have no such problems.

I for one am quite happy to see that AMD is still leading this segment, since most users will be quite happy with AMD. I'm finding more and more that Intel may own the top end, but nobody I know cares in the slightest.

mino - Tuesday, June 14, 2011

E-350 is generally faster than K325 + IGP. Other than that, I fully agree.

ash9 - Tuesday, June 14, 2011

In this price range, I think not. Besides, Open(X) applications will reveal the potential; it's up to the application developers now.

GaMEChld - Tuesday, June 14, 2011

My netbook is a pain to use precisely because of its graphics. It cannot play YouTube or movie files smoothly. Aside from its multimedia problems, I don't try to do ridiculous things on a netbook, so the other components are not much of a factor for me. But if I can't even watch videos properly, then it's trash.

Luckily, I got that netbook for free, so I'm not that sad about it. I'll probably sell it on eBay and get a Brazos netbook at some point.

hvakrg - Tuesday, June 14, 2011

Yes, they're becoming primary machines, but what exactly do you need the CPU part for in a primary machine today? Let's face it: most people use their computer to browse the web, listen to music and watch videos, all of which either rely on the GPU today or are clearly moving in that direction.

Intel will probably have an advantage in the hardcore CPU market forever, because it is years ahead of the competition in manufacturing processes, but what advantage does that give it when it comes to selling computers to the end user? Things like battery life and GPU performance are what will be weighed in the future.

Broheim - Wednesday, June 15, 2011

Personally, I need it to compile thousands of lines of code, sometimes several times a day. If I were to settle for an E-350, I'd die of old age long before I got my master's in computer science... Some of us actually give our 2600K @ 4.5GHz a run for its money.

The G in GPU doesn't stand for General... The GPU can only do a few highly specialized tasks; it's never going to replace the CPU and will always rely on it. Unless you're a gamer, you benefit much more from a fast CPU than from a fast GPU, and even as a gamer you still need a good CPU.

Don't believe me? Take an E-350 and do all the things you listed, then strap an HD 6990 onto it and see if you can tell the difference...

Trust me, you can't.

ET - Wednesday, June 15, 2011

Compiling code is a minority application, although I did that in a pinch on a 1.2GHz Pentium M, so the E-350 would do as well. I certainly wouldn't use it for my main development machine, I agree.

Still, as hvakrg said, most users do web browsing, listen to music, and watch video. The E-350 would work well enough for that.

sinigami - Wednesday, June 15, 2011

> most users do web browsing, listen to music, watch video. The E-350 would work well enough for that.

The Atom also works well enough for that, for less money.

You might be pleasantly surprised to find that current Atom netbooks can play 720p MKVs. For netbook level video, that's "well enough".

As you said, for anything tougher than that, I wouldn't use it for my "main machine" either.

ionave - Thursday, June 16, 2011

Why would you spend $2000 on an Intel-powered laptop when you can build a desktop to do computations for a quarter of the price at 20x the speed, and get a $400 laptop to run code on the desktop remotely and use it for lighter tasks? I'm surprised that you are a master's student in computer science, because your lack of logic doesn't reflect it. Correct me if I'm wrong, but why would you compute on the go when you can let the code run on a desktop or cluster while the laptop is safely powered down in your backpack?

Also, I can run Super Mario Galaxy on the (CPU-intensive) Dolphin emulator at full frame rate on my AMD Phenom II X2 BE, and the cores in the A8 are improved versions of the Phenom II X4's. You really need to get your facts straight, since the CPU is actually VERY good. Go look at the benchmarks and do your research.

Broheim - Thursday, June 16, 2011

He clearly said primary machine, so before you go around insulting me, I'd suggest you learn how to read.

The 2600K is a desktop CPU, you douchebucket. I never said my main machine was a laptop; quite the contrary.

What you can and can't do is of no interest to me, but first off, I never mentioned the A8; I said E-350. Again with the failure to read.

Nevertheless...

K10 is not even a match for Nehalem, and it's so far behind Sandy Bridge it's ridiculous.

I've seen the benchmarks, I've done my research and concluded that the A8 CPU is far from "VERY" good, have you done yours?