The AMD Llano Notebook Review: Competing in the Mobile Market

by Jarred Walton & Anand Lal Shimpi on June 14, 2011 12:01 AM EST

The Llano A-Series APU

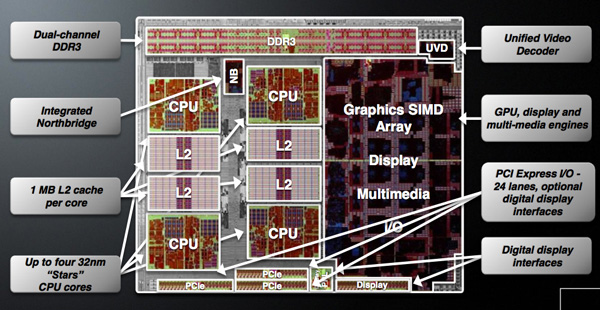

Although Llano is targeted solely at the mainstream, it is home to a number of firsts for AMD. This is AMD's first chip built on a 32nm SOI process at GlobalFoundries, it is AMD's first microprocessor to feature more than a billion transistors, and as you'll soon see it's the first platform with integrated graphics that's actually worth a damn.

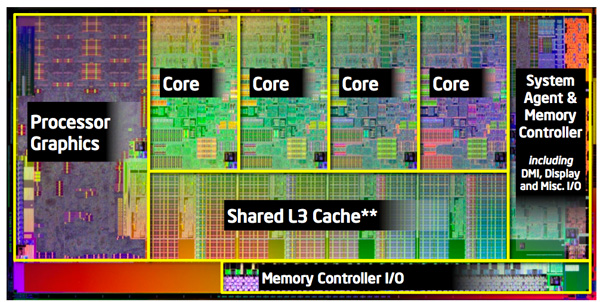

AMD is building two distinct versions of Llano, although only one will be available at launch. There's the quad-core, or big Llano, with four 32nm CPU cores and a 400-core GPU. This chip weighs in at 1.45 billion transistors, nearly 50% more than Sandy Bridge. Around half of the chip is dedicated to the GPU, however, and those transistors are tightly packed, resulting in a die that's only about 5% larger than Sandy Bridge's.

CPU Specification Comparison

| CPU | Manufacturing Process | Cores | Transistor Count | Die Size |
|---|---|---|---|---|
| AMD Llano 4C | 32nm | 4 | 1.45B | 228mm² |
| AMD Llano 2C | 32nm | 2 | 758M | ? |
| AMD Thuban 6C | 45nm | 6 | 904M | 346mm² |
| AMD Deneb 4C | 45nm | 4 | 758M | 258mm² |
| Intel Gulftown 6C | 32nm | 6 | 1.17B | 240mm² |
| Intel Nehalem/Bloomfield 4C | 45nm | 4 | 731M | 263mm² |
| Intel Sandy Bridge 4C | 32nm | 4 | 995M | 216mm² |
| Intel Lynnfield 4C | 45nm | 4 | 774M | 296mm² |
| Intel Clarkdale 2C | 32nm | 2 | 384M | 81mm² |
| Intel Sandy Bridge 2C (GT1) | 32nm | 2 | 504M | 131mm² |
| Intel Sandy Bridge 2C (GT2) | 32nm | 2 | 624M | 149mm² |
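
The "nearly 50% more transistors, only 5% larger die" comparison can be checked directly from the table. The short Python sketch below does that arithmetic and also derives an average transistor density. The helper names (`percent_more`, `density_m_per_mm2`) are made up for illustration, and the density figure is a whole-die average, so it says nothing about how tightly any individual block is packed.

```python
# Transistor counts and die areas (mm^2) copied from the comparison table.
# Only a few rows are included; Llano 2C is omitted because its die size is unknown.
chips = {
    "AMD Llano 4C": (1450e6, 228),
    "AMD Thuban 6C": (904e6, 346),
    "AMD Deneb 4C": (758e6, 258),
    "Intel Gulftown 6C": (1170e6, 240),
    "Intel Sandy Bridge 4C": (995e6, 216),
}


def percent_more(a, b):
    """Return how much larger a is than b, in percent."""
    return (a / b - 1) * 100


def density_m_per_mm2(transistors, area_mm2):
    """Average density in millions of transistors per square millimetre."""
    return transistors / area_mm2 / 1e6


llano_t, llano_a = chips["AMD Llano 4C"]
snb_t, snb_a = chips["Intel Sandy Bridge 4C"]

transistor_gap = percent_more(llano_t, snb_t)  # Llano vs. Sandy Bridge transistor count
area_gap = percent_more(llano_a, snb_a)        # Llano vs. Sandy Bridge die area

if __name__ == "__main__":
    print(f"Transistors: {transistor_gap:.1f}% more than Sandy Bridge 4C")
    print(f"Die area:    {area_gap:.1f}% larger than Sandy Bridge 4C")
    for name, (t, a) in chips.items():
        print(f"{name:24s} {density_m_per_mm2(t, a):.2f} M transistors/mm^2")
```

With the table's figures this works out to roughly 46% more transistors on a die about 5.6% larger, which is where the density advantage of the GPU-heavy Llano die shows up.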

Given the transistor count, big Llano has a deceptively small amount of cache for the CPU cores. There is no large catch-all L3 and definitely no shared SRAM between the CPU and GPU, just a 1MB private L2 cache per core. That's more L2 cache than either the 45nm quad-core Athlon II or Phenom II parts.

Intel's Sandy Bridge die is only ~20% GPU

The little Llano is a 758 million transistor dual-core version with only 240 GPU cores. Cache sizes are unchanged; little Llano is just a smaller version for lower price points. Initially both quad- and dual-core parts will be serviced by the same 1.45B transistor die. Defective chips will have unused cores fused off and will be sold as dual-core parts. This isn't anything unusual: AMD, Intel and NVIDIA all use die harvesting as part of their overall silicon strategy. The key here is that in the coming months AMD will introduce a dedicated little Llano die to avoid wasting fully functional big Llano parts on the dual-core market. This distinction is important, as it indicates that AMD isn't relying on die harvesting in the long run but rather has a targeted strategy for separate market segments.

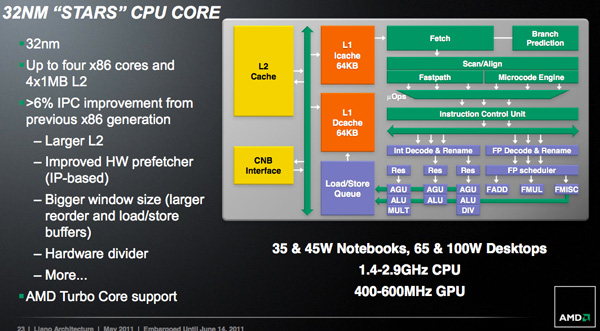

Architecturally AMD has made some minor updates to each Llano core. AMD is promising more than a 6% increase in instructions executed per clock (IPC) for the Llano cores vs. their 45nm Athlon II/Phenom II predecessors. The increase in IPC is due to the larger L2 cache, larger reorder and load/store buffers, new divide hardware, and improved hardware prefetchers.

On average I measured around a 3% performance improvement at the same clock speed as AMD's 45nm parts. Peak performance improved by up to 14%; however, most of the gains were down in the 3-5% range. This is arguably the biggest problem that faces Llano. AMD's Phenom architecture debuted in 2007 and was updated in 2009. Llano's cores have been sitting around for the past 3-4 years with only a mild update, while Intel has been through two tocks in the same timeframe. A ~6% increase in IPC isn't anywhere near enough to bridge the gap left by Nehalem and Sandy Bridge.

Note that this comparison is without AMD's Turbo Core enabled, but more on that later.

177 Comments


ET - Wednesday, June 15, 2011

It may be impossible to know the exact speed the cores run, but it would be interesting to run a test to get some relative numbers.

You can run a single threaded CPU bound program such as SuperPI, then run it again with the other three cores at 100% (for example by having another three instances of SuperPI running). Do this on AC and battery, and it might generate some interesting numbers. At the very least we'll be able to tell whether the 1.5GHz -> 2.4GHz ratio looks right.

ET - Wednesday, June 15, 2011

By the way, I just read Tom's Hardware review, which was unique in that it compared to a Phenom II X4 running at 1.5GHz and 2.4GHz. It looked from these benchmarks like the A8-3500M is always performing around the 1.5GHz level of the Phenom II X4 (sometimes it's a little faster, sometimes a little slower), which suggests that Turbo Core doesn't really kick in.

i_am_the_avenger - Wednesday, June 15, 2011

Maybe this will cheer the AMD Fans a bit

This article did not mention some nifty features the APUs have (or maybe it did; I did not read it line by line)...

Watch the video below from engadget:

http://www.engadget.com/2011/06/13/amds-fusion-a-s...

It shows how these APUs can smooth out shaking videos real time, even while streaming from Youtube! and it does a very good job.

Another feature is how it enhances videos (colour etc.)

This improves general user PC experience.......... something very desirable

The video also shows how AMD wants to target general users and not work enthusiasts

Another video shows comparison between the i7-2630QM and A8-3500M while multitasking video related applications.

http://www.engadget.com/2011/03/01/amd-compares-up...

---Interesting to note that the APU Gradually increased its power consumption while i7 was like bursting to and fro, something the way turbo core acts maybe-----

I think it is work vs general performance,

Intel's great for work, when you need to finish tasks and it needs to be done quickly,

while AMD APUs give you a good overall PC and multimedia performance - you watch videos, play games, so what if the zip file extracts a minute late and the fGPU performance is great....

You may buy an i7 SNB with discrete GPU but that has a battery life hit (for same battery capacity) and also extra heat generation which requires more fans, also the extra weight..

Please don't start judging me or something....

I am getting confused myself, while intel looks great in every way except stock gaming and battery life(not that bad)... I think I don't need that much power, even if I work - my work isn't so CPU oriented that an i7 would matter, a 30 second task finishes in 20 ok but it does not matter to me..... but improved video and battery seems more useful to me

I don't think that all of us have to tax our CPUs to full potential -- a few have to, not considering them -- so even if Intel have faster processors for many it does not affect them as much.

psychobriggsy - Wednesday, June 15, 2011

For all your moaning about not getting Asymmetric CrossFire to work, you didn't read the reviewers guide that says it only works in DX10 and DX11 mode, not DX9. So your Dirt2 benches for example clearly state DX9 for this test. I don't know about the other titles on that page - you say 5 of the others are DX9 titles. Do these titles have DX10 modes of operation - if so, USE THEM.

Otherwise it just looks like you are trying to get the best results for the Intel Integrated Graphics.

Just put "0 - Unsupported" for DX11 tests by HD3000 like other sites have done.

ET - Wednesday, June 15, 2011

The article said:

"AMD told us in an email on Monday (after all of our testing was already complete) that the current ACF implementation on our test notebook and with the test drivers only works on DX10/11 games. It's not clear if this will be the intention for future ACF enabled laptops or if this is specific to our review sample. Even at our "High" settings, five of our ten titles are DX9 games (DiRT 2, L4D2, Mafia II, Mass Effect 2, and StarCraft II--lots of twos in there, I know!), so they shouldn't show any improvement...and they don't. Actually, the five DX9 games even show reduced performance relative to the dGPU, so not only does ACF not help but it hinders. That's the bad news. The only good news is that the other half of the games show moderate performance increases over the dGPU."

I agree that at least in the case of DiRT 2 that's blatantly false, since that game was one of the first to use DX11, and was given with many Radeon 58x0 cards for this reason.

JarredWalton - Friday, June 17, 2011

DiRT 2 supports DX11, but it's only DX9 or DX11. We chose to standardize on DX9 for our Low/Med/High settings -- and actually, DX11 runs slower at the High settings than DX9 does (though perhaps it looks slightly better). Anyway, we do test DiRT 2 with DX11 for our "Ultra" settings, but Llano isn't fast enough to handle 1080p with 4xAA and DX11. So to be clear, I'm not saying DiRT 2 isn't DX11; I'm saying that the settings we standardized on over a year ago are not DX11.

jitttaaa - Wednesday, June 15, 2011

How is the notebook llano performing as good, if not better than the desktop llano?

ET - Wednesday, June 15, 2011

At least as far as CPU power is concerned, the desktop part is obviously faster. The benchmarks are mostly not compatible so it's hard to judge, but in Cinebench R10 the mobile Llano gets 2037 while the desktop gets 3390. I agree that for graphics it looks like the desktop part is performing worse in games, which is strange considering the GPU is working at a faster speed.

Only explanation I can think of is that the faster CPU is taking too much memory bandwidth, but it doesn't make much sense since it's been said that the GPU gets priority. It's definitely something that's worth checking out with AMD.

ionave - Thursday, June 16, 2011

http://www.anandtech.com/show/4448/amd-llano-deskt...

On average the A8-3850 is 58% faster than the Core i5 2500K.

Boom. Delivered. You think it's slow? It really isn't. The A8-3850 has about the performance of a DESKTOP i3. If you think that is bad performance, then you don't know what you are talking about. The battery life is amazing for having that kind of performance in a laptop. I'm sorry, but it totally destroys i7 and i5 platforms because of the sheer performance in that amazing battery life.

JarredWalton - Friday, June 17, 2011

Let me correct that for you:

On average, the A8-3850 fGPU (6550D) is 58% faster than the Core i5-2500K's HD 3000 IGP, in games running at low quality settings. It is also 29% faster than the i5-2500K with a discrete HD 5450, which is a $25 graphics card. On the other hand, the i5-2500K with an HD 5570 (a $50 GPU) is on average 66% faster than the A8-3850.

Boom. Delivered. You think that's fast? It really isn't. The 6550D has about the performance of a $35 desktop GPU. If you think that is good performance, then you don't know what you are talking about.

At least Llano is decent for laptops, but for $650 you can already get i3-2310M with a GT 520M and Optimus. Let me spell it out for you: better performance on the CPU, similar or better performance on the GPU, and a price online that's already $50 below the suggested target of the A8-3500M. Realistically, A8-3500M will need to sell for $600 to be viable, A6 for $500, and A4 for $450 or less.