Discrete HTPC GPU Shootout

by Ganesh T S on June 12, 2011 10:30 PM EST

One of the video post processing aspects heavily emphasized by the HQV 2.0 benchmark is cadence detection. Improper cadence detection / deinterlacing leads to easily observed artifacts during video playback. When and where is cadence detection important? Unfortunately, most of the information about cadence detection online is not very clear. For example, one of the top Google search results makes it appear as if telecine and pulldown are one and the same. It also suggests that the opposite operations, inverse telecine and reverse pulldown, are synonymous. That is not exactly true.

We have already seen a high level view of how our candidates fare at cadence detection in the HQV benchmark section. In this section, we will talk about cadence detection in relation to HTPCs. After that, we will see how our candidates fare at inverse telecining.

Cadence detection literally refers to determining whether a pattern is present in a sequence of frames. Why do we have a pattern in a sequence of frames? This is because most films and TV series are shot at 24 frames per second. For the purpose of this section, we will refer to anything shot at 24 fps as a movie.

In the US, TV broadcasts conform to the NTSC standard, and hence, the programming needs to be at 60 frames/fields per second. Currently, some TV stations broadcast at 720p60 (1280x720 video at 60 progressive frames per second), while other stations broadcast at 1080i60 (1920x1080 video at 60 fields per second). The filmed material must be converted to either 60p or 60i before broadcast.

Pulldown refers to the process of increasing the movie frame rate by duplicating frames / fields in a regular pattern. Telecining refers to the process of converting progressive content to interlaced while also increasing the frame rate (i.e., converting 24p to 60i). It is possible to perform pulldown without telecining, but not vice versa.

For example, Fox Television broadcasts 720p60 content. The TV series 'House', shot at 24 fps, is subject to pulldown to be broadcast at 60 fps. However, there is no telecining involved. In this particular case, the pulldown applied is 2:3. For every two frames in the movie, we get five frames in the broadcast version: the first frame is shown twice and the second frame is shown three times.
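The 2:3 progressive pulldown described above can be sketched in a few lines of Python. This is only an illustration: the function name `pulldown_2_3` and the use of single letters as stand-ins for frames are assumptions made for the example, not anything taken from a broadcaster's actual toolchain.

```python
from itertools import cycle

def pulldown_2_3(frames):
    """Repeat progressive frames in a 2:3 pattern (e.g. 24p -> 60p).

    Hypothetical helper for illustration: the first frame of each pair
    is emitted twice, the second three times.
    """
    out = []
    for frame, repeats in zip(frames, cycle((2, 3))):
        out.extend([frame] * repeats)
    return out

# Letters stand in for movie frames.
movie = ["A", "B", "C", "D"]
print(pulldown_2_3(movie))

# One second of film (24 frames) becomes 60 broadcast frames.
print(len(pulldown_2_3(list(range(24)))))
```

Because frames alternate between two and three repeats, they stay on screen for unequal lengths of time, which is the source of the judder mentioned later in this section.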

Telecining is a bit more complicated. Each frame is divided into odd and even fields (interlaced). The first two fields of the 60i video are the odd and even fields of the first movie frame. The next three fields in the 60i video are the odd, even and odd fields of the second movie frame. This way, two frames of the movie are converted to five fields in the broadcast version. Thus, 24 frames are converted to 60 fields.
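A rough sketch of the field sequence produced by 2:3 telecine follows. The function name `telecine_2_3` and the `(frame, parity)` tuples are hypothetical. The first five fields match the description above; continuing to alternate field parity for later frames is an assumption based on how interlaced video normally alternates odd and even fields.

```python
from itertools import cycle

def telecine_2_3(frames):
    """Map progressive frames to interlaced fields in a 2:3 pattern.

    Each field is modelled as a (frame, parity) tuple. Parity keeps
    alternating across frame boundaries (an assumption for frames
    beyond the first pair described in the text).
    """
    fields = []
    parities = cycle(("odd", "even"))
    for frame, count in zip(frames, cycle((2, 3))):
        for _ in range(count):
            fields.append((frame, next(parities)))
    return fields

# Two movie frames become five fields.
for field in telecine_2_3(["A", "B"]):
    print(field)

# 24 movie frames become 60 fields.
print(len(telecine_2_3(list(range(24)))))
```

Note that the third field of frame B has the same parity as its first, so it carries no new picture information. A deinterlacer that is unaware of the pattern may instead weave fields from two different movie frames together, which is where combing artifacts come from.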

While progressive pulldown may just result in judder (because every other frame stays on the screen a little longer than the one before it), improper deinterlacing of 60i content generated by telecining may result in very bad artifacting, as shown below. This screenshot is from a sample clip in the Spears and Munsil (S&M) High Definition Benchmark Test Disc.

| Inverse Telecine OFF | Inverse Telecine ON |

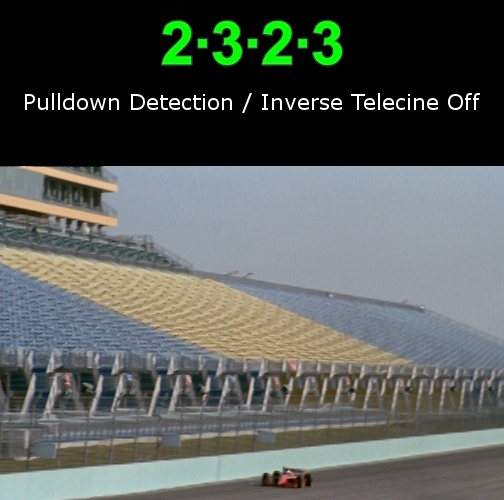

Cadence detection tries to detect what kind of pulldown / telecine pattern was applied. When inverse telecine is applied, cadence detection is used to determine the pattern. Once the pattern is known, the appropriate fields are considered in order to reconstruct the original frames through deinterlacing. Note that plain inverse telecine still retains the original cadence while sending out decoded frames to the display. Pullup removes the superfluous repeated frames (or fields) to get us back to the original movie frame rate. Unfortunately, none of the DXVA decoders are able to do pullup. This can be easily verified by taking a 1080i60 clip (of known cadence) and frame stepping it during playback. You can additionally ensure that the refresh rate of the display is set to the same as the original movie frame rate. It can be observed that a single frame repeats multiple times according to the cadence sequence.
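To make the distinction between cadence detection and pullup concrete, here is a toy sketch that operates on already reconstructed frames. It is not how any DXVA decoder or GPU driver works: real detectors compare field differences rather than testing frames for exact equality, and the helper names (`run_lengths`, `is_2_3_cadence`, `pullup`) are invented for this example.

```python
from itertools import groupby

def run_lengths(frames):
    """Length of each run of identical consecutive frames."""
    return [len(list(group)) for _, group in groupby(frames)]

def is_2_3_cadence(frames):
    """Toy cadence check: do interior run lengths alternate 2 and 3?

    The first and last runs are ignored because a clip may start or
    end in the middle of a repeat.
    """
    interior = run_lengths(frames)[1:-1]
    return len(interior) >= 2 and all(
        {a, b} == {2, 3} for a, b in zip(interior, interior[1:])
    )

def pullup(frames):
    """Drop repeated frames to recover the original frame sequence.

    Assumes genuinely distinct source frames never compare equal.
    """
    return [frame for frame, _ in groupby(frames)]

sixty_p = list("AABBBCCDDD")   # 2:3 pulldown of frames A, B, C, D
print(run_lengths(sixty_p))
print(is_2_3_cadence(sixty_p))
print(pullup(sixty_p))
```

In these terms, frame stepping through decoder output that has only been inverse telecined would show something like the `sixty_p` sequence, with each frame repeating according to the cadence, while output that had also been pulled up would show each movie frame exactly once.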

Now that the terms are clear, let us take a look at how inverse telecining works in our candidates. The gallery below shows a screenshot while playing back the 2:3 pulldown version of the wedge pattern in S&M.

This clip checks the overall deinterlacing performance for film based material. As the wedges move, the narrow end of the horizontal wedge should have clear alternating black and white lines rather than blurry or flickering lines. The moire in the last quarter of the wedges can be ignored. Both wedges should also remain steady and not flicker for the length of the clip.

The surprising fact here is that the NVIDIA GT 430 is the only one to perfectly inverse telecine the clip. Even the 6570 fails in this particular screenshot. In this particular clip, the 6570 momentarily lost the cadence lock, but regained it within the next 5 frames. Even during HQV benchmarking, we found that the NVIDIA cards locked onto the cadence sequence much faster than the AMD cards.

Cadence detection is only part of the story. The deinterlacing quality is also important. In the next section, we will evaluate that aspect.

70 Comments

enki - Monday, June 13, 2011 - link

How about a short conclusion section for those who just use a Windows 7 box with a Ceton tuner card to watch HDTV in Windows Media Center? (i.e. will just be playing back WTV files recorded directly on the box)

What provides the best quality output?

What can stream better than stereo over HDMI? On my old 3400 ATI card it either streams the Dolby Digital directly (the computer doesn't do any processing of the audio) or can output stereo (doesn't think there can be more than 2 speakers connected)

Thanks

BernardP - Monday, June 13, 2011 - link

The inability to create and scale custom resolutions within AMD graphics drivers is, for me, a deal-breaker that keeps me from even considering AMD graphics. It will also keep me from Llano, Trinity and future AMD Fusion APU's. I'll stay with NVidia as long as they keep allowing for custom resolutions.

My older eyes are grateful for the custom 1536 X 960 desktop resolution on my 24 inch 16:10 monitor. I couldn't create this resolution with AMD graphics drivers.

bobbozzo - Tuesday, June 14, 2011 - link

In your case, you should just increase the size of the fonts and widgets instead of lowering the screen res.

Assimilator87 - Tuesday, June 14, 2011 - link

I wish there was a section dedicated to the silent stream bug. I have a GTX 470 hooked up to an Onkyo TX-SR805 and this issue is driving me insane. For instance, does this issue only plague certain cards or do all nVidia cards suffer from it? I was hoping the latest WHQL driver (275.33) would fix this, but sadly, no. Otherwise, the article was amazing and I'll definitely have to check out LAV Splitter.

ganeshts - Tuesday, June 14, 2011 - link

The problem with the silent stream bug is that one driver version has it, the next one doesn't and then the next release brings it back. It is hard to pinpoint where the issue is.

Amongst our candidates, even with the same driver release, the GT 520 had the bug, but the GT 430 didn't. I am quite confident that the GT 520 issue will get resolved in a future update, but then, I can just hope that it doesn't break the GT 430.

JoeHH - Tuesday, June 14, 2011 - link

This is simply one of the best articles I have ever seen about HTPC. Congrats Ganesh and thank you. Very informative and useful.

bobbozzo - Tuesday, June 14, 2011 - link

Hi, can you please compare hardware de-interlacing, etc., vs software?

e.g. many players/codecs can do de-interlacing, de-noise, etc. in software, using the CPU.

How does this compare with a hardware implementation?

thanks

ganeshts - Tuesday, June 14, 2011 - link

This is a good suggestion. Let me try that out in the next HTPC / GPU piece.

CiNcH - Wednesday, June 15, 2011 - link

Hey guys,

here is how I understand the refresh rate issue. It does not matter whether it is 0.005 Hz off. You can't calculate frame drops/repeats from that. In DirectShow, frames are scheduled with the graph reference clock. So the real problem is how much the clock which the VSync is based on and the reference clock in the DirectShow graph drift from each other. And here ReClock comes into play. It derives the DirectShow graph clock from the VSync, i.e. synchronizes the two. So it does not matter whether your VSync is off as long as playback speed is adjusted accordingly. A problem here is synchronizing audio, which is not too easy if you bitstream it...

NikosD - Thursday, June 16, 2011 - link

Nice guide but you missed something.

It's called PotPlayer, it's free and has built-in almost everything.

CPU & DXVA (partial, full) codecs and splitters for almost every container and every video file out there.

The same is true for audio, too.

It has even Pass through (S/PDIF, HDMI) for AC3/TrueHD/DTS, DTS-HD. Only EAC3 is not working.

It has also support for madVR and a unique DXVA-renderless mode which combines DXVA & madVR!

I think it's close to perfect!

BTW, the article says that there is no free audio decoder for DTS, DTS-HD.

That's not correct.

FFDShow is capable of decoding and pass through (S/PDIF, HDMI) both DTS and DTS-HD.

And PotPlayer of course!